Feed aggregator

How LLCs unlock innovation in automotive electronics

A recent McKinsey mobility survey shows that automobile owners are prioritizing battery range, charging speeds, and reliability when considering an electric vehicle (EV). Automakers are responding to these consumer preferences by developing more resilient power systems with higher power density and advanced battery management systems (BMS) that maximize space while improving performance.

Regardless of manufacturer or vehicle type, EV architecture development prompts digital technology innovations. Yet tried-and-true analog technologies such as integrated magnetics offer measurable benefits, with inductor-inductor-capacitors (LLCs) providing stable voltage regulation and a consistent response to the load changes needed for EV charging.

LLC resonant circuits operating within switched-mode DC/DC power converters deliver wide output voltage control, soft switching in the primary, low voltage in the secondary, and slight changes in switching frequency—all requirements for EVs.

Because resonant converters have soft switching capabilities and can handle high voltages with nearly 98% efficiency, these devices can rapidly charge EVs while minimizing energy losses. Compact LLC resonant converter modules enable easy scalability and adaptability for different voltage requirements.

LLC resonant circuit

As Figure 1 shows, LLC resonant converters include MOSFET power switches (S1 and S2), a resonant tank circuit, a high-frequency transformer, and a rectifier. S1 and S2 convert an input DC voltage into a high-frequency square wave.

Figure 1 An LLC resonant half-bridge converter with power switches S1 and S2, a resonant tank circuit, a high-frequency transformer, and a rectifier. Source: Texas Instruments

The resonant tank circuit consists of a resonant capacitor (Cr), a resonant inductor (Lr) in series with the capacitor and transformer (T1), and a magnetizing inductor (LM) in parallel with the capacitor and transformer. Using two inductors allows the tank circuit to respond to a broad range of loads and to establish stable control over the entire load range.

Oscillating at the resonant frequency (fR), the resonant tank circuit eliminates square-wave harmonics and outputs a sine wave of the fundamental switching frequency to the input of T1. Operating the circuit at a switching frequency at or near fR causes the resonant current to discharge or charge the capacitance just before the power switch changes state.
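As a rough illustration of where fR comes from, the sketch below evaluates the standard series-resonance expression fR = 1/(2π√(Lr·Cr)) for the tank described above. The component values are hypothetical and chosen only for illustration. The second frequency, which includes LM, is a common textbook addition that the article does not name.

```python
import math

def series_resonant_frequency(l_r, c_r):
    """Series resonance of Lr and Cr in Hz (Lr in henries, Cr in farads)."""
    return 1.0 / (2.0 * math.pi * math.sqrt(l_r * c_r))

def lower_resonant_frequency(l_r, l_m, c_r):
    """Resonance when LM also takes part in the tank (textbook addition, not from the article)."""
    return 1.0 / (2.0 * math.pi * math.sqrt((l_r + l_m) * c_r))

# Hypothetical component values, for illustration only.
LR = 50e-6    # resonant inductor Lr, henries
LM = 250e-6   # magnetizing inductor LM, henries
CR = 47e-9    # resonant capacitor Cr, farads

f_r = series_resonant_frequency(LR, CR)
f_p = lower_resonant_frequency(LR, LM, CR)

print(f"fR (Lr with Cr):      {f_r / 1e3:.1f} kHz")
print(f"Lower resonance (LM): {f_p / 1e3:.1f} kHz")
```

Under these assumed values, the switching frequency would be set at or near fR to obtain the soft-switching behavior described in the text.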

By shaping the current waveform, the resonant tank circuit causes S1 and S2 to turn on at 0 V (zero voltage switching) and turn off at 0 A (zero current switching). The resultant soft switching increases efficiency, decreases energy losses, reduces stress on power systems, and eliminates voltage and current spikes that cause electromagnetic interference (EMI). Soft switching also enables LLC resonant converters to handle a wide range of input and output voltages.

T1 provides input/output isolation. Electrically isolating the input and output circuits prevents ground loops and minimizes interference. Isolation also keeps voltage fluctuations and transients on one side from propagating to the other. After T1 scales the voltage up or down, the rectifier (D1, D2, and CO) converts the sine wave into a stable DC output.

How LLC solutions support high power density

LLC resonant converters support the growing demand for higher-power-density solutions. Since these converters operate at high switching frequencies while maintaining high efficiency, designers can integrate smaller and lighter transformers and inductors into the LLC package.

Integrating Lr and T1 into a single magnetic unit increases the converter’s power density and circuit efficiency. For EV designers, the size, weight, and cost savings gained make it possible to incorporate more functionality into limited spaces. Optimizing the T1 winding and core structure allows the converter to operate within thermal limits.

Strategically and selectively integrating protection features and intelligent control capabilities into analog controllers reduces system complexity while maintaining performance. Using LLC converters allows manufacturers to move beyond the basics toward adaptive power systems and advanced control methods.

Input power proportional control (IPPC) represents a growing focus on the intelligent power management available through LLC resonant circuits.

As shown in Figure 2, IPPC widens the control range of an LLC converter by modulating the switching frequency and comparing the input power to a control signal. By regulating the output voltage and current, the feedback loop directly controls the converter’s input power.

Figure 2 Simplified application schematic for the Texas Instruments UCC25661-Q1 LLC controller implementing the IPPC scheme. Source: Texas Instruments

With the control signal proportional to the input power, the signal becomes limited in range and limits the converter’s power output. As a result, the control signal works as a load monitor regardless of any variations in the resonant converter’s output voltage, preventing unwanted system shutdowns while also protecting valuable system components.

LLC applications for LEVs

Light electric vehicles (LEVs) include mopeds, scooters, bikes, and golf carts. Adopting the LLC topology for onboard and external DC/DC converters in an LEV improves the charger efficiency within the battery power and voltage ranges, regardless of the charging architecture. Using an LLC resonant converter also supports the high-power density and efficiency requirements of an LEV while reducing EMI and noise.

When compared to traditional flyback converters and parallel-resonant converters, LLC converters offer specific advantages for LEVs.

One advantage exists through the operation of LLC converters at the wide input and output voltages that match LEV charging requirements. Wide-output LLC converters with IPPC work well for LEVs by supporting constant current and constant voltage charging.

Instead of going into burst mode with a low battery voltage, the converter maintains the operating mode and minimizes ripple into the battery. The stable operating mode shortens the time needed to charge the battery and extends battery life.

LLC applications for PHEVs and EVs

Plug-in hybrid electric vehicle (PHEV) and EV architectures can use LLC resonant circuits for the DC/DC converter, BMS, onboard charger (OBC), and traction inverter subsystems. Along with the high efficiency established through zero-voltage switching, resonant converters provide high power density and decreased switching losses.

Figure 3 is a block diagram of a DC/DC converter combined with an active power factor correction (PFC) circuit. The PFC brings the input current and voltage waveforms in phase and increases the system efficiency.

After applying AC power to the input of the PFC stage, the boost voltage from the PFC combines with the filtered voltage at the DC-link capacitor and becomes the input for the DC/DC converter.

Figure 3 DC/DC converter block diagram. Source: Texas Instruments

EV battery management systems monitor and control state of charge, state of health, and residual capacity to maintain the safe operating range of the battery cells.

Within these broad functions, the BMS monitors the voltage, current, and temperature of the batteries and protects against deep discharge, overcharging, overheating, and overcurrent conditions. The cell balancing function of a BMS ensures that each cell in a battery pack has a uniform charging and discharging rate.

Resonant converters provide precise energy management, scalability, and the isolated power needed for a BMS as represented in Figure 4. As EVs incorporate more loads, the power requirements for high- to low-voltage conversion increase and require a higher power density.

Figure 4 An isolated DC/DC converter isolates the high-voltage battery from the low-voltage battery. Source: Texas Instruments

The LLC topology is a good fit for OBC applications because it addresses the need to adapt the output voltage according to the battery’s charging voltage range. LLC resonant converters simply adjust the voltage with the switching frequency.

While traction inverters convert energy stored in the battery into instantaneous multiphase AC power to drive traction motors, LLC resonant circuits operate within the subsystems that support inverters. These subsystems provide input power protection, signal isolation, isolated and non-isolated DC/DC power supplies, and current and voltage sensing.

LLC innovation for LEVs, PHEVs, and EVs

The high efficiency and compact size of LLC resonant circuit modules maximize vehicle range while cutting costs. LLC converters do have limitations, however, when meeting the output capacity requirements of evolving technologies. Limited power capacity and performance degradation under dynamic conditions require a different approach.

As next-generation zone EV architectures become standard, newer PHEVs and EVs will rely on multiple LLC converters distributed throughout the vehicle within the zone control module to optimize distribution, preserve output power stability, and deliver higher power capacity.

New EVs will also use bidirectional DC/DC LLC converters to connect the high-voltage battery with a low-voltage supply, improve charger efficiency, facilitate charging from and discharging to the grid, and reduce space and costs.

Other improvements include producing LLC resonant converters with two transformers and integrating lightweight planar transformers into the tank circuits.

Dual transformer converters may provide wider-range output voltages while maintaining high efficiency while charging. Using planar transformers in converters reduces the weight and size of converter modules.

EV consumer acceptance

Each innovation represents another step toward widespread consumer acceptance of EVs. In turn, EV adoption reduces greenhouse gas emissions, improves local air quality, and reduces the impact on human health.

Andrew Plummer is a product marketing engineer in Texas Instruments’ high-voltage power business. He focuses on growing the automotive, energy infrastructure and aerospace and defense sectors. He graduated with a bachelor’s degree in electrical engineering from the University of Florida.

Related Content

- Power Tips #117: Measure your LLC resonant tank before testing at full operating conditions

- Power Tips #144: Designing an efficient, cost-effective micro DC/DC converter with high output accuracy for automotive applications

- Power Tips #137: Implementing LLC current-mode control on the secondary side with a digital controller

- LLC Power Conversion Explained, Part 1: Introduction

The post How LLCs unlock innovation in automotive electronics appeared first on EDN.

Cornell develops HEMTs on single-crystal AlN substrate for RF power amplifiers

Nuvoton Emphasises Need to Strengthen Taiwan-Israel R&D Collaboration

Nuvoton Technology showcased its leadership in international expansion by participating in the “Israeli-Taiwanese Business Seminar,” hosted by the Economic Division of the Taipei Economic and Cultural Office in Tel Aviv from November 15 to 23. Drawing attention to the practical advantages of its established R&D center in Israel, Nuvoton played a key role in the seminar, sharing on-the-ground insights from its cross-border expansion.

Nuvoton stated that investments in global hubs such as Israel are not only an expansion of its business footprint but also a critical part of its long-term development strategy. By connecting innovation talent and technology networks worldwide, Nuvoton aims to address emerging market challenges and opportunities while helping build a more resilient and competitive Taiwan-Israel technology ecosystem.

During the seminar, Nuvoton highlighted opportunities for collaboration in R&D, technology, and market development among global enterprises. The company’s practical experience offered the delegation concrete guidance for establishing operations in Israel and accessing its innovation resources, reflecting the collaborative spirit within Taiwan’s technology community.

Nuvoton emphasized the complementary strengths of Taiwan and Israel in the high-tech sector: Taiwan excels in IC design, while Israel leads in software innovation. Building on these advantages, Nuvoton has accelerated its global R&D strategy by establishing an R&D center in Israel, enabling the company to strengthen its international competitiveness.

The post Nuvoton Emphasises Need to Strengthen Taiwan-Israel R&D Collaboration appeared first on ELE Times.

SemiQ launches Gen3 1200V S3 modules for high-power industrial and EV applications

element 14’s DevKit HQ: A One Stop Development Kit Solution

Engineering is all about trying and testing. According to a survey conducted by element14, most engineering professionals feel that finding the right development kit is a major challenge. Identifying a holistic development kit is essential for most engineers before starting a project. They value standard interfaces and extensibility, often combining or modifying multiple kits to build prototypes and proof-of-concept designs.

element14 has launched DevKit HQ, a new online resource that brings evaluation boards, development kits, single board computers (SBCs), tools, and technical documents together in one place. DevKit HQ gathers resources from key supplier product families, including Analog Devices, NXP, AMD, STMicroelectronics, Microchip, Infineon, Renesas, Raspberry Pi, BeagleBoard, Arduino, and more, for applications such as AI/ML, IoT, sensors, wireless, motor control, and power management. This makes it easy for developers to discover and compare kits and to accelerate their embedded design and innovation.

The site enables engineers to quickly find the latest development kits and modular solutions by application, along with available demo and application software. Engineers can also easily locate evaluation boards that match a supplier’s product family or series.

Additionally, the site features each kit’s datasheets, application notes, training videos, reference designs and more. Together, these resources help engineers accelerate design decisions and drive innovation across various applications, including AI, IoT, sensors, wireless, motor control and power management.

“Our mission is to make life easier for design engineers,” said Daniel Ford, Vice President of Sales at element14. “With the DevKit HQ, we’ve created the leading destination where they can search development kits by application as well as explore new technologies, experiment with the latest kits, and move from idea to prototype faster, freeing up more time to focus on innovation.”

The post element 14’s DevKit HQ: A One Stop Development Kit Solution appeared first on ELE Times.

USB-IF Hardware Certification to Anritsu for USB4 2.0 Test Solution

Anritsu Corporation has received certification from the USB Implementers Forum (USB-IF) for its test solution for the latest USB4 Version 2.0 (USB4 v2) communication standard.

The solution is based on the Signal Quality Analyzer-R MP1900A and provides advanced USB device evaluation capabilities. It helps improve the quality and reliability of products implementing the USB4 Version 2.0 standard, supporting widespread deployment of next-generation high-speed interfaces.

As of December 2025, USB4 v2 is the most advanced USB standard, delivering data transfer speeds of up to 80 Gbit/s — twice that of USB4 v1 (40 Gbit/s). This supports next-generation applications, such as high-resolution video transmission, external GPUs, high-speed storage, and VR/AR devices.

Furthermore, the specification significantly improves communication performance and reliability by introducing innovations including Pulse Amplitude Modulation 3-level (PAM3) signaling to improve bandwidth efficiency, the Frequency Variation Profile to enhance the stability of link training (a signal quality and initialization procedure), and a new TS2.CLKSW training sequence incorporating clock switching.

Current demand for evaluation and certification testing is driven primarily by semiconductor manufacturers producing USB4 v2 control ICs. Looking ahead, adoption is expected to expand to test houses for test equipment deployment and, in the long term, to consumer product manufacturers of USB4 v2 hubs, docking stations, and cables.

Product Overview: Signal Quality Analyzer-R MP1900A

The MP1900A is a high-performance Bit Error Rate Tester (BERT) supporting receiver tests for multiple high-speed interfaces, including PCIe, USB, Thunderbolt, DisplayPort, and 400 GbE/800 GbE. It combines industry-leading PPG technology for high-quality waveforms with a high-sensitivity error detector, precision jitter sources (SJ, RJ, SSC, BUJ), and noise sources (CM-I, DM-I). The MP1900A also supports link training and LTSSM analysis for comprehensive high-speed device evaluation.

The post USB-IF Hardware Certification to Anritsu for USB4 2.0 Test Solution appeared first on ELE Times.

As Energy-Efficient Chips Are Rising — HCLTech × Dolphin’s New Partnership Gives the Trend a Heavy Push

Amid the industry's ongoing push toward energy-efficient chips, HCLTech and Dolphin Semiconductors have announced a strategic partnership to develop energy-efficient chips for IoT and data centre applications. As that shift continues, it is increasingly important to identify the developments likely to shape the industry's future. Energy efficiency is a natural concern for any chip because it affects the chip's longevity and reliability. According to the statement by HCLTech and Dolphin Semiconductors, the partnership aims to support enterprises seeking to improve energy efficiency and performance as computing workloads increase.

What are Energy-Efficient Chips?

Energy-efficient chips are integrated circuits designed to perform computations while minimizing power consumption, which extends battery life, reduces heat generation, and lowers operational costs. Their architectures include specialized cores, such as Neural Processing Units (NPUs) and Graphics Processing Units (GPUs), or, more broadly, AI accelerators rather than conventional CPUs. This ensures that tasks are performed using the most efficient hardware possible.

Why is it important?

Energy-efficient chips matter because power consumption rises as usage increases. Cutting the power requirement while keeping hardware at optimum performance is therefore indispensable, both for sustainability and for staying within operational limits: the power consumed affects the environment and costs money.

HCLTech X Dolphin Semiconductors Partnership

HCLTech will integrate Dolphin Semiconductor’s low-power IP directly into its SoC design workflow, creating scalable, energy-efficient chips that handle a wide range of compute needs while keeping power use in check.

At its core, energy efficiency requires a holistic, full-stack design effort — from initial architecture to the software that ultimately runs on the chip.

The post As Energy-Efficient Chips Are Rising — HCLTech × Dolphin’s New Partnership Gives the Trend a Heavy Push appeared first on ELE Times.

Advanced GAA Chips: Minimizing Voltage Loss and Improving Yield

Courtesy: Lam Research

- As advanced logic chips decrease in size, voltage loss can increase

- An emerging solution is backside power delivery networks that use SABC architecture

The problem: As metal pitch scaling shrinks to support the next generation of logic devices, the IR (or voltage) drop from conventional frontside connections has become a major challenge.

As electricity travels through a chip’s metal wiring, some voltage gets lost because wires have resistance.

- If the voltage drops too much, the chip’s transistors can’t get enough power and can slow down or fail.

- In addition, the resistance of back-end-of-line (BEOL) metal lines and vias is dramatically increasing.

The solution: Backside power delivery networks (BSPDN) can address these challenges and are currently widely studied as an alternative to front-side power delivery and contact schemes.

Virtual Study Compares DBC and SABC on a GAA Device

The Semiverse Solutions team conducted a virtual study using SEMulator3D to analyze gate-all-around (GAA) devices that use BSPDN.

In the Design of Experiments (DOE), the team focused on a process window for a GAA device that uses a direct backside contact (DBC) architecture and compared it to a GAA device process window using self-aligned backside contact (SABC) architecture.

DBC architecture, used to connect contacts with source/drain structures, requires a deep silicon etch, a small edge placement error (EPE), and precise alignment when used in an advanced GAA transistor.

The Semiverse Solutions team conducted the virtual experiment to see if an SABC scheme could address these precise alignment challenges.

Analyzing the process window of a device helps engineers and researchers understand the range of manufacturing conditions under which a device can be reliably produced while meeting its performance and quality requirements.

By comparing the process windows of different architectures, researchers can identify which design offers greater tolerance to manufacturing variations, fewer defects, and better overall performance.

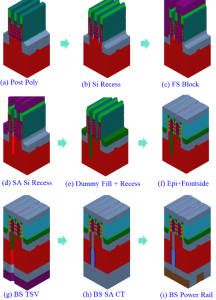

Figure 1 displays the major integration (process) steps for a proposed SABC scheme. The process steps are similar to those used during a typical GAA logic process manufacturing flow.

Figure 1. The manufacturing process flow of a proposed self-aligned backside contact (SABC) scheme

Study Methodology

The team ran multiple virtual fabrication experiments that varied the smallest critical dimensions (CD), overlay, and over-etch amount of the through-silicon via (TSV).

Virtual measurements were taken of the number of opens and shorts generated (number of nets in the structure), high-k damage (high-k material volume change), and the backside contact area of the typical structure.

The manufacturing success criteria were specified as follows:

- Backside contact area (CT to epitaxy): ≥150 nm²

- High-k damage: <20 nm³

- No shorts between the contact and the metal gate

Using these criteria, the results of each virtual experiment in the DOE were classified as a “pass” or “failure” event.
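The pass/fail classification can be sketched as a simple predicate over the three criteria listed above. The `VirtualRun` record and its field names are hypothetical and do not reflect the SEMulator3D data model. The sample values are invented for illustration.

```python
from dataclasses import dataclass

# Thresholds taken from the success criteria stated in the text.
MIN_CONTACT_AREA_NM2 = 150.0   # backside contact area must be >= 150 nm^2
MAX_HIGH_K_DAMAGE_NM3 = 20.0   # high-k damage must be < 20 nm^3

@dataclass
class VirtualRun:
    """Hypothetical record of one virtual experiment in the DOE."""
    contact_area_nm2: float
    high_k_damage_nm3: float
    gate_shorts: int  # number of contact-to-metal-gate shorts found

def classify(run: VirtualRun) -> str:
    """Return "pass" only if all three criteria are met, otherwise "fail"."""
    ok = (
        run.contact_area_nm2 >= MIN_CONTACT_AREA_NM2
        and run.high_k_damage_nm3 < MAX_HIGH_K_DAMAGE_NM3
        and run.gate_shorts == 0
    )
    return "pass" if ok else "fail"

# Invented sample runs, for illustration only.
runs = [
    VirtualRun(contact_area_nm2=180.0, high_k_damage_nm3=5.0, gate_shorts=0),
    VirtualRun(contact_area_nm2=120.0, high_k_damage_nm3=5.0, gate_shorts=0),
    VirtualRun(contact_area_nm2=180.0, high_k_damage_nm3=25.0, gate_shorts=1),
]
results = [classify(r) for r in runs]
print(results)
```

Sweeping such a predicate over a grid of CD, overlay, and over-etch values is one way a process window map could be assembled.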

SABC Indicates Higher Yield for Advanced Logic Nodes

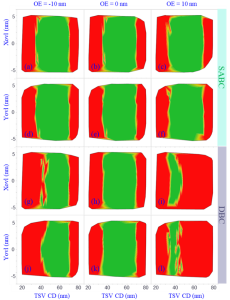

The DOE results are shown in Figure 2 as a set of process window contour diagrams at various CD, overlay, and over-etch amounts for both the SABC and DBC contact schemes. The green areas in Figure 2 represent “pass” results, while the red areas represent “fail” events.

Figure 2. Comparison of SABC and DBC process windows

Due to its self-aligned capabilities, the SABC approach exhibits a much larger process window (larger green area) than the DBC architecture.

The DBC process window is very narrow, especially when the TSV is over- or under-etched by 10 nm. TSV failures appear as high-k damage, source-drain to metal gate shorts caused by excessive over-etching, small contact areas created by TSV under-etch, and increased EPE caused by a larger TSV CD and additional overlay errors.

The virtual study demonstrated that the SABC approach to backside power minimizes EPE and over-etch variations in the TSV process and provides a much larger and more stable process window than a DBC approach. SABC is promising for use at advanced logic nodes and may support further logic device scaling.

The post Advanced GAA Chips: Minimizing Voltage Loss and Improving Yield appeared first on ELE Times.

The Leading Five Essential Context Window Concepts In LLMs

Courtesy: Micron

This story outlines five essential concepts that explain how large language models process input within a context window. Using clear examples and practical insights, it covers foundational ideas like tokenization, sequence length, and attention. The goal is to help readers better understand how context affects model behavior in AI applications. We also present results from an analytical model used to estimate system behavior, to show how scaling input and output sequence lengths impacts response time. The results highlight how decoding longer outputs takes significantly more time, pointing to the importance of fast memory systems like HBM in supporting efficient inference at scale. These concepts are useful for anyone working with or designing prompts for generative AI systems.

Context window versus length

When working with large language models, it’s important to understand the difference between concepts like context window, context length, and sequence length. These terms are often used interchangeably, which can lead to confusion. In this blog, we will define and refer to them as distinct concepts.

The context window is the model’s maximum capacity: the total number of tokens it can process at once, including both your input and the model’s output. As a simple example, let’s define the rectangle size below as equivalent to a 100,000 token context window.

The context length, on the other hand, is how much you’ve put into that space, which is the actual number of tokens—input tokens (blue) and output tokens (green)—currently in use during a conversation. For example, if a model has a 100,000-token context window and your input uses 75,000 tokens, only 25,000 tokens remain for the model’s response before it reaches the upper limit of the window.
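The arithmetic in this example can be written as a small budget check. This is a minimal sketch; the function name is hypothetical and does not come from any model provider's API.

```python
def remaining_tokens(context_window: int, input_tokens: int, output_tokens: int = 0) -> int:
    """Tokens still available in the window after the input and any output so far."""
    used = input_tokens + output_tokens  # this sum is the current context length
    if used > context_window:
        raise ValueError("context length exceeds the context window")
    return context_window - used

# The example from the text: a 100,000-token window with a 75,000-token input.
budget = remaining_tokens(context_window=100_000, input_tokens=75_000)
print(budget)
```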

Sequence length typically refers to the length of a single input or output sequence within that window. It’s a more granular measure used in model training and inference to track the length of each segment of text.

The context window sets the limit for how much information a model can process, but it does not directly reflect intelligence. A larger window allows more input, yet the quality of the output often depends on how well that input is structured and used. Once the window is full, the model may lose coherence, leading to unwanted outcomes (for example, hallucinations).

Tokens aren’t words

If the context window is defined by an upper limit (say 100,000), tokens are the units that measure what fits inside, and it’s important to understand that tokens are not words. The words you type into a prompt are fed to a “tokenizer,” which breaks down text into tokens. A single word may be split into several tokens. For example, “strawberry” becomes three tokens and “trifle” becomes two. In other cases, a word may consist of just one token, like “cake”.

| St | raw | berry |

We can test this with a quote from the novel “Emma” by Jane Austen.

“Seldom, very seldom, does complete truth belong to any human disclosure; seldom can it happen that something is not a little disguised or a little mistaken.”

This text contains 26 words, and when run through the tokenizer of the Mistral language model provided by lunary.ai, it produces 36 tokens. That’s about 0.72 words per token, or roughly three-fourths of a word.

The ratio varies, but for English words, you might average around 0.75 words per token. That’s why a model with a 100,000-token context window (per user) does not necessarily fit 100,000 words. In practice, you might fit closer to 75,000 English words or fewer, depending on the text.

$\text{estimated tokens} \approx \text{words} \times 1.33$

To further check the token-to-word ratio at scale, we ran a quick analysis using eight well-known literary works from Project Gutenberg, a library of more than 75,000 free e-books. First, we counted the words in each book, then ran the texts through a tokenizer to get the token counts. After comparing the numbers, we found that the average ratio was about 0.75 words per token.

Knowing this ratio can help everyday users get more out of their interactions with AI. Most AI platforms, like ChatGPT or Claude, operate with token-based constraints. That is, they process text in tokens, not words, so it’s easy to misjudge how much content you can actually fit into a prompt or response. Because usage is often measured in tokens rather than words, knowing the ratio makes you aware of any limits so you can plan your inputs more strategically. For example, if a model has a 4,000-token input limit, that’s roughly 3,000 words. This is good to know when feeding a model a long document or dataset for tasks like finding key insights or answering questions.
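The rule of thumb above can be sketched as two helper functions. The 0.75 words-per-token figure is the article's English-language average, not a property of any particular tokenizer. The helper names are hypothetical, and the word count uses simple whitespace splitting.

```python
import math

WORDS_PER_TOKEN = 0.75  # article's approximate average for English text

def estimate_tokens(word_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Rough token estimate for a given word count (words / 0.75, about words * 1.33)."""
    return math.ceil(word_count / words_per_token)

def estimate_words(token_count: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Rough number of English words that fit in a given token budget."""
    return int(token_count * words_per_token)

# The "Emma" quote from the text, counted by whitespace splitting.
quote = ("Seldom, very seldom, does complete truth belong to any human "
         "disclosure; seldom can it happen that something is not a little "
         "disguised or a little mistaken.")
quote_words = len(quote.split())
quote_tokens = 36  # token count reported in the text, not computed here
observed_ratio = quote_words / quote_tokens

print(quote_words, round(observed_ratio, 2))
print(estimate_words(4_000), estimate_words(100_000))
```

Actual counts depend on the tokenizer and the text, so these estimates are best treated as planning figures rather than limits.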

Attention is not equally distributed within the context window

AI hallucinations are often misunderstood as quirky behavior or signs that a language model is buggy and unreliable. But hallucinations are not random; they often stem from how a model might process and prioritize information, which is determined by things like how well a model is trained and how it distributes attention. In transformer-based models like GPT or Claude, attention is the mechanism that helps the model decide which parts of the context are most relevant when generating a response. To better understand the concept of attention, imagine being at a noisy cocktail party. If someone calls your name, you instinctively tune in.

“Frodo! Over here!”

But what if four people call your name at once from different corners of the room?

“Frodo! It’s me, Sam!”

“Frodo! Come quick!”

“Frodo! Look this way.”

“Frodo … yesss, precious Frodo …”

You hear them all, but your focus is now split. You might even pay more attention to the voice you recognize or the one closest to you. Each sound gets a fraction of your attention, but not all equally. It’s not a perfect analogy but this is one way you can conceive of how attention works in large language models. The model pays attention to all tokens in the context window, but it gives more weight to some than to others. And that’s why attention in large language models is often described as “weighted”, meaning that not all tokens are treated equally. This uneven distribution is key to understanding how models might prioritize information and why they sometimes appear to lose focus.
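One way to picture "weighted" attention is a softmax over relevance scores, which turns the scores into weights that sum to 1. The sketch below is a toy illustration with invented scores. Real transformer models derive their scores from learned query and key projections, which this example does not attempt to reproduce.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Invented relevance scores for the four voices calling "Frodo".
voice_scores = [2.0, 1.0, 0.5, 0.1]
weights = softmax(voice_scores)
print([round(w, 3) for w in weights])

def top_weight(n_other: int) -> float:
    """Weight given to one strong token among n_other weaker ones."""
    return softmax([2.0] + [0.0] * n_other)[0]

# The same strong token receives a smaller share as the context grows.
print(top_weight(10), top_weight(1000))
```

Every voice receives some weight, but the highest-scoring one receives the most, and its share shrinks as more tokens compete for attention.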

More context may or may not mean better answers

A model can scan all tokens within the context window, but it doesn’t consider each token with equal interest. As the window fills (say, up to 100,000 tokens), the model’s attention becomes more diffuse. In its attempt to keep track of everything, clarity may diminish.

When this happens, the model’s grip on the conversation loosens, and a user might experience slower, less coherent responses or confusion between earlier and later parts of the conversation thread. Hallucinations, from the Latin hallucinat or “gone astray in thought,” often appear at this edge. It’s important to understand that these occurrences are not signs that the model is malfunctioning. They indicate that the model is reaching its threshold, where it is operating at capacity. This is where the model may struggle to maintain coherence or relevance across long spans of input.

From the model’s perspective, earlier tokens are still visible. But as the window fills up and its attention becomes more distributed, the precision of response may degrade. The model might misattribute facts from previous prompts or fuse unrelated ideas into something that sounds coherent but isn’t. In the case of hallucinations, the model isn’t lying. It’s reaching for a reasonable response from fragments it can no longer fully distinguish, making a guess under the strain of limited attention. And to be fair, the model is working with what it has, trying to make sense of a conversation that’s grown too big to reliably focus on. Understanding attention in this way helps explain why more context doesn’t always lead to better answers.

That said, long context windows (greater than 200,000 and now reaching 1 million or more tokens) can be genuinely useful, especially for complex reasoning and emerging applications like video processing. Newer models are being trained to handle longer contexts more effectively. With better architecture and training, models can more effectively manage attention across inputs, reducing hallucinations and improving responses. So, while more context doesn’t always lead to better answers, newer models are getting better at staying focused, even when the conversation gets really long.

Sequence length affects response time

Following the explanation of attention, it’s useful to understand how sequence length affects inference. We can now ask a practical question: What happens when we vary the sequence length?

The input sequence length affects time to first token (TTFT), the time from submitting the request to receiving the first output token. TTFT is mostly a measure of GPU compute performance, as it reflects how quickly the GPU can process the whole input and produce the first token. In contrast, the output sequence length affects inter-token latency (ITL), the time between successive generated tokens. ITL depends more on the memory subsystem than on raw compute.

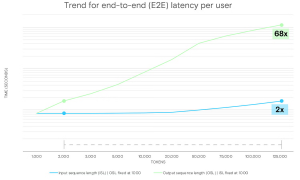

To explore this further, we used a first-order analytical model to estimate end-to-end latency during LLM inference. We ran the model using Llama 3 70B on a single GPU with high-bandwidth memory (HBM3E 12H, 36GB across 8 placements), and a context window of 128,000 tokens.

The chart below shows the impact of increasing input sequence length (ISL) and output sequence length (OSL) on the entire end-to-end latency. Each measurement was taken with a batch size of 1 (i.e., a single request).

Figure. End-to-end latency per user (seconds), for both output and input sequence lengths

Key takeaways

One important takeaway when measuring latency is that the model takes much longer to generate a long response than to process a long prompt. The model can read the input all at once, which is relatively fast even for lengthy prompts. But generating a response happens token by token, with each new token depending on everything generated so far; this is what it means for the process to be autoregressive. For example, increasing the input sequence length (ISL) from 2,000 to 125,000 tokens results in only a roughly twofold increase in latency. In contrast, scaling the output sequence length (OSL) across the same range leads to a roughly 68-fold increase. This difference arises because longer input sequences add prefill computation, which processes many tokens in parallel. Decoding, meanwhile, is inherently sequential, generating one token at a time, and that takes more time and demands much more memory bandwidth.
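The asymmetry between input and output length can be reproduced qualitatively with a very rough first-order estimate. The sketch below is not the analytical model used for the chart. It assumes prefill is compute-bound (about 2 FLOPs per parameter per input token) and decode is memory-bound (every step re-reads the weights and the KV cache). The model shape, the 16-bit precision, the hardware figures, and the 2,000-token baseline for the sequence that is held fixed are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class ModelSpec:
    n_params: float            # parameter count
    bytes_per_param: float     # 2.0 assumes 16-bit weights
    kv_bytes_per_token: float  # KV-cache bytes added per token

@dataclass
class HardwareSpec:
    peak_flops: float     # FLOP/s, hypothetical
    mem_bandwidth: float  # bytes/s, hypothetical

def prefill_seconds(model, hw, isl):
    """Compute-bound estimate: about 2 FLOPs per parameter per input token."""
    return 2.0 * model.n_params * isl / hw.peak_flops

def decode_seconds(model, hw, isl, osl):
    """Memory-bound estimate: each step re-reads the weights and the KV cache."""
    weight_bytes = model.n_params * model.bytes_per_param
    # The cache read at step k covers the prompt plus k earlier output tokens.
    kv_tokens_read = osl * isl + osl * (osl - 1) / 2
    total_bytes = osl * weight_bytes + kv_tokens_read * model.kv_bytes_per_token
    return total_bytes / hw.mem_bandwidth

def end_to_end_seconds(model, hw, isl, osl):
    """Total latency for one request at batch size 1."""
    return prefill_seconds(model, hw, isl) + decode_seconds(model, hw, isl, osl)

# Assumed Llama-3-70B-like shape: 80 layers, 8 KV heads, head size 128,
# keys and values stored as 16-bit numbers.
LLAMA_LIKE = ModelSpec(n_params=70e9, bytes_per_param=2.0,
                       kv_bytes_per_token=2 * 80 * 8 * 128 * 2)
# Hypothetical accelerator, not the system described in the article.
GPU = HardwareSpec(peak_flops=1e15, mem_bandwidth=8e12)

if __name__ == "__main__":
    base = end_to_end_seconds(LLAMA_LIKE, GPU, isl=2_000, osl=2_000)
    long_in = end_to_end_seconds(LLAMA_LIKE, GPU, isl=125_000, osl=2_000)
    long_out = end_to_end_seconds(LLAMA_LIKE, GPU, isl=2_000, osl=125_000)
    print(f"longer input:  {long_in / base:.1f}x baseline latency")
    print(f"longer output: {long_out / base:.1f}x baseline latency")
```

Even this crude estimate shows output length dominating latency, because the weight read is paid once per generated token but the prompt is processed in a single parallel pass.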

The implication is that longer output sequences result in longer decode times, and that means the GPU and memory subsystem remain active longer. In this context, power efficiency at the hardware level becomes especially valuable. A memory device like Micron HBM3E that runs using much less power than comparable high-bandwidth memory devices can complete identical inference tasks while using less energy.

For a user, this insight underscores the importance of optimizing prompts and managing input length (trimming any unnecessary content, for example). And if you’re building real-time apps, you can usually handle longer inputs without much trouble. But keeping the output concise may help your system stay fast and responsive.

The important role of memory for context length

Inference latency depends not only on sequence length but also on how the system manages the demands on compute and memory as it processes inputs and generates outputs. Many recently released language models now advertise context windows that exceed one million tokens. These larger context windows (when fully utilized) place greater stress on the memory subsystem, which may appear to the user as slower execution and increased runtimes. Newer memory technologies will offer higher bandwidth and larger capacity to support these larger context windows, improving response times and overall throughput (tokens per second).

But these performance gains raise questions about energy use. As inference workloads scale to millions of tokens, designing systems that use power efficiently becomes increasingly important. Systems that remain active for longer periods require more power, and memory devices designed to use less power without sacrificing bandwidth can help address this challenge. For example, Micron HBM3E consumes much less power than competing high-bandwidth memory devices. And this lower power can help reduce the amount of energy AI consumes during inference workloads involving millions of tokens.

Looking ahead, next-generation memory technologies, like HBM4 and HBM4E, are being designed to deliver even higher memory bandwidth and capacity while improving power efficiency. These improvements, which stem from advances in process technology (Micron’s use of 1-gamma DRAM), are expected to enable faster data movement with lower energy cost. Moreover, as these technologies mature, they may further reduce latency and improve throughput and energy use in large-scale AI deployments.

The post The Leading Five Essential Context Window Concepts In LLMs appeared first on ELE Times.

Revamping a Solid-State Battery Cell

Courtesy: Comsol

Ever experience these common annoyances? You’re about to leave for the day and realize you forgot to charge your phone. Or, you’re on the road and remember your EV needs a charge. The integration of solid-state batteries into electric vehicles, electronics, and energy storage systems — once realized — will leave problems like these in the past. Solid-state batteries have the potential to charge faster and last longer, all while being a safer option. Simulation can help battery designers investigate solid-state batteries to better predict their performance for future uses.

The Solid-State Battery: A Fervently Anticipated Development

Solid-state batteries (SSBs) use a solid electrolyte to conduct ions between the two electrodes, whereas conventional batteries use a liquid electrolyte or gel polymer. This difference gives SSBs many advantages over conventional lithium-ion (Li-ion) batteries, such as a longer life. Batteries in current EVs typically last 5–8 years, while solid-state batteries could extend this to 15–20 years. In addition, while the average Li-ion battery shows degradation by 1000 charge cycles, an SSB could retain 90% of its original capacity after 5000 cycles.

Incorporating solid-state batteries into electric vehicles means less time waiting for them to charge.

SSBs can complete a charge cycle much faster than other battery types, too. While the typical Li-ion battery takes about 45 minutes to reach 80% charge, an SSB could reach the same charge in 12 minutes, or in as little as 3 minutes. SSBs are also safer for consumer use. Without a liquid electrolyte, they are much less flammable and volatile than other options. Plus, by avoiding liquid electrolytes and carbon anodes, they offer more energy storage density (Ref. 1).

A Design Challenge Spanning Decades

The solid electrolyte was first discovered by physicist Michael Faraday in the early 1830s, and its mechanisms and potential uses have been a subject of research ever since. Fast-forward to the 2020s, when a wide variety of automakers, electronics companies, and research institutions are investing a large portion of their R&D in SSBs. However, battery research and design is an expensive and resource-intensive process. Simulation can help battery developers investigate design challenges under different operating conditions and use cases.

SSBs are subject to a phenomenon called lithiation, in which the electrodes within the solid components of the battery grow and shrink, causing mechanical stress. In addition, the movement of ions in the battery during charge–discharge cycles causes stress and volume changes. These issues can lead to reduced lifespan and energy storage in the battery and even mechanical failure.

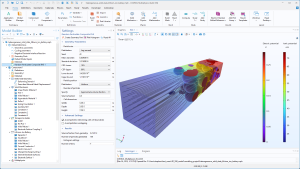

Multiphysics modeling can be used to analyze an SSB design. In the Heterogeneous Model of a Solid-State Battery Unit Cell tutorial model, we take you through the modeling process in the COMSOL Multiphysics software.

Modeling a Solid-State Battery in COMSOL Multiphysics

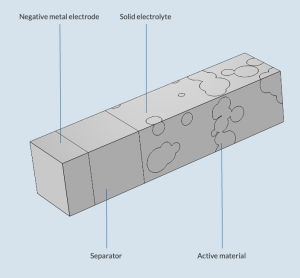

The Heterogeneous Model of a Solid-State Battery Unit Cell tutorial model simulates the charge–discharge cycle in an SSB, particularly how charge and mass transport interact with solid mechanics. The model geometry is made up of a composite positive electrode, a lithium metal negative electrode, and a solid electrolyte separator located between the two electrodes.

The geometry of the solid-state battery model.

Specialized physics interfaces and features make the setup of the model straightforward. The conservation of charge, mass, and momentum can be modeled with the Lithium-Ion Battery, Transport in Solids, and Solid Mechanics interfaces, respectively. There are also specialized features for modeling:

- Plating at the negative electrode

- Growth and shrinkage of the positive electrode

- Redox reaction at the electrode–solid electrolyte interfaces

The SSB model and physics settings in COMSOL Multiphysics.

The simulation of the heterogeneous SSB evaluates certain quantities at the end of charge, including the electric and ionic potentials and von Mises stress in the solid electrolyte.

The results also include the evaluation of global quantities, including the cell voltage, state of charge, and stress in the z direction of the battery.
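For readers unfamiliar with the von Mises stress mentioned above, it collapses the six components of the stress tensor into a single equivalent value that can be compared against a material's strength. The function below is a minimal sketch of that standard definition. It is not part of the COMSOL model or its API, and the function and argument names are illustrative.

```python
import math

def von_mises(sxx, syy, szz, sxy=0.0, syz=0.0, szx=0.0):
    """Equivalent (von Mises) stress from the six Cauchy stress components.

    All inputs must share one unit (for example MPa); the result has the same unit.
    """
    normal_part = (sxx - syy) ** 2 + (syy - szz) ** 2 + (szz - sxx) ** 2
    shear_part = sxy ** 2 + syz ** 2 + szx ** 2
    return math.sqrt(0.5 * normal_part + 3.0 * shear_part)

if __name__ == "__main__":
    # Hypothetical stress state in MPa, not a result from the tutorial model.
    print(von_mises(120.0, 40.0, 10.0, sxy=15.0))
```

A purely hydrostatic state (equal normal stresses, no shear) gives zero, which is why the quantity is used to judge distortion rather than uniform compression.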

Paving the Way for SSBs

Looking into the mechanics of solid-state batteries with simulation can help researchers, automakers, and electronics companies incorporate SSBs into components and devices in the coming years — not decades.

The post Revamping a Solid-State Battery Cell appeared first on ELE Times.

The Rise of Smart Audio: From Sound to Intelligence

Courtesy: Infineon

What if your fridge warned you before it broke? Well, now it’s possible.

Imagine if your refrigerator hears a subtle vibration, predicting failure before it happens. Your oven guides you by the sound of your food sizzling. A health patch silently monitors your breathing patterns, alerting you to irregularities in real-time.

This is not science fiction. This is the sign of a new era, where audio drives intuitive, human-centric interactions between people and devices.

Audio as the interface: Beyond buttons and screens

Voice is the most natural interface we have ever known. Unlike traditional inputs, speech allows us to interact hands-free and eyes-free. This makes the technology ideal for daily tasks like cooking, driving, or assisting those with disabilities.

But to make this seamless and personal, technology needs more than a microphone. The solution demands:

- Persistent and ultra-efficient audio processing

- Robust AI, engineered to work together at the edge

Power and Performance — The Technology Behind Always-On Audio

Always-on audio relies on ultra-low-power architectures designed to listen continuously without draining energy. Modern microcontroller platforms now integrate autonomous analog subsystems capable of monitoring and pre-processing sound while operating in deep-sleep modes, enabling persistent listening with minimal power consumption.

Arm Cortex-M55

At the processing core, the Arm Cortex-M55 with Helium DSP extensions delivers significantly higher performance for embedded audio tasks—providing up to three times the efficiency needed for real-time signal processing, noise suppression, and on-device inference.

Neural Network Acceleration

Dedicated neural network accelerators further enhance these systems by offloading compute-intensive functions such as wake-word detection and voice activity recognition. This makes continuous listening feasible even in compact, battery-powered devices—from wearables to distributed IoT sensors—while maintaining fast response times and efficient power usage.

Intelligence – Software brings audio to life

DEEPCRAFT AI Suite is the engine behind transformative audio experiences. The DEEPCRAFT Voice Assistant solution brings the following features, optimized for low-power Infineon MCUs:

- Accurate voice commands

- Custom wake words

- Keyword recognition

Speech-to-intent AI offers best-in-class performance with high accuracy and minimal false detects, while supporting both native and non-native English speakers.

DEEPCRAFT Audio Enhancement cleans up noisy environments with robust AI techniques:

- Noise suppression

- Acoustic echo cancellation

- Dynamic beamforming

These features are calibrated with easy-to-use tools for rapid integration. Developers can build, test, and deploy voice models via a no-code graphical UI, ensuring faster product development.

Integrated innovation – Affordability meets sophistication

Where once only expensive devices could offer clarity and smart voice recognition, PSOC Edge brings advanced edge audio to everyone.

Its built-in DSP capabilities and audio front-end middleware mean that complex processing, from multi-mic beamforming to acoustic event detection, all happens within a single, efficient platform.

Furthermore, DEEPCRAFT’s AI-driven enhancements extend these advantages to cost-sensitive products like entry-level earbuds or low-cost smart sensors. This ensures everyone gets premium experiences.

Your next device, powered by Infineon audio innovation

With traditional solutions, advanced features like real-time voice and sound recognition have required expensive hardware or deep dependence on cloud-based computing. This limitation made them inaccessible to lower-end devices.

The synergy of PSOC Edge hardware and DEEPCRAFT software enables even resource-constrained devices to truly hear, understand, and act—all in real time, on ultra-low power, and with human-like intuition.

This breakthrough makes previously exclusive, cloud-dependent features available across a broader range of devices, democratizing intelligent functionality. Infineon is committed to building interfaces that empower people through natural, voice-driven interaction.

Takeaway

From predictive maintenance to voice-guided cooking, smart audio is reshaping how we live. Infineon’s integrated hardware and software make this transformation accessible to all, enabling high-quality, always-on audio experiences.

The post The Rise of Smart Audio: From Sound to Intelligence appeared first on ELE Times.

Serrated Edges: For Less Noise and Improved Fan Performance

Courtesy: Cadence

Understanding Noise Reduction in Industrial Fans

Industrial fans are widely utilized across various sectors, including manufacturing, automotive, and energy production, playing a vital role in ventilation and cooling. However, a notable drawback of these powerful machines is the significant noise they produce, which can range from 70 to 120 decibels. A primary contributor to this noise is the aerodynamic turbulence created by the fan blades. Addressing the challenges posed by the noise generated by industrial fans is a continuing focus of research in this area.

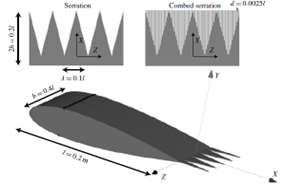

One promising avenue for reducing this noise involves passive noise mitigation methods, such as modifying the trailing edges of the fan blades. By incorporating designs with features such as sawtooth or serrated edges, we can effectively reduce noise levels without compromising performance. Computational fluid dynamics (CFD) studies of industrial fan designs can help pinpoint the optimal configuration that enhances performance and minimizes operational noise.

Sawtooth and combed-sawtooth trailing-edge serrations (Avallone et al., 2018)

In the webinar on CFD for Turbomachinery: Boost Performance & Control Noise, Antonis Karasavvidis, principal customer service engineer, and Domenico Mendicino, senior product engineering manager, examine a case study on the CFD analysis of industrial fan blades with serrated edges to understand how these modifications can effectively reduce the noise and enhance performance. This blog provides an overview of the case study presented in the webinar.

Overview: CFD Simulation of Industrial Fan with Serrated Edges

This case study examines the aerodynamic and acoustic performance of a ventilation fan, focusing on modifications to the blade design and their impact on airflow and noise characteristics under turbulent flow conditions. Starting with a baseline design, a ventilation fan was initially created using mean line design tools, achieving a blade tip Mach number of about 0.2. The design features a bell mouth at the inlet and blades constructed in three sections, utilizing NACA 65 profiles. This foundational design serves as a benchmark for subsequent modifications and performance evaluations.

Blade Variations and Design Enhancements

The study examines two types of serrated trailing edges added to the baseline design to achieve noise reduction and potential performance enhancements. These include:

- Variable Serration: A serration pattern applied with varying geometry along the blade’s trailing edge

- Uniform Serration: A consistent pattern cut along the trailing edge

Further enhancements include mechanical features such as embossing, pivots, and fillets, which are standard in this type of turbomachinery. Assessing these blade variations allows for comprehensive insight into their aerodynamic and acoustic effects.

Mesh Generation Workflow for Accurate Simulation

In this case study, Fidelity AutoGrid generates a high-quality, low-Reynolds-number mesh comprising approximately 2 million cells in approximately 20 seconds for the baseline design. This mesh is a structured multi-block grid with matching nodes on the periodic boundaries.

Given the complex geometries associated with the serrated trailing edges, an advanced mesh generation workflow was implemented, utilizing an unstructured mesh to capture the complex blade geometry while keeping the high-quality structured multi-block grid for most of the flow path. Utilizing Fidelity AutoGrid and ANSA, structured and unstructured grid strategies were combined to capture the intricate details efficiently.

Results of CFD Simulations

Using the GPU-enabled Fidelity Flow Solver, the simulations investigated the aerodynamic performance of the baseline, uniform-serration, and variable-serration blade designs. The solver provided rapid convergence within 200 iterations for the steady-state simulation and within 3,600 time steps, each with 10 inner iterations, for the unsteady run. Leveraging GPU acceleration on the Cadence Millennium platform provided high-fidelity results within minutes, even for the mixed-grid simulations.

The results indicated:

- Trailing Edge Effects: Serrations alter the pressure field near the trailing edges, particularly influencing the mixed-out flow downstream and the wake width

- Geometric Influence: Longer serration teeth facilitated enhanced energy exchange, correlating with improved aerodynamic performance

Additionally, the hub’s pivot and other mechanical features induced secondary flows, disrupting velocity profiles at the outlet and creating vortices, especially in the serrated configurations.

Turbulent viscosity ratio distribution downstream of the blade for the baseline and uniform serration design

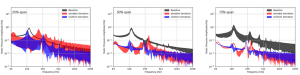

Noise Prediction and Analysis

The study evaluated noise characteristics through pressure fluctuations downstream of the trailing edge using both stationary and moving probes at different span heights. Key findings include:

- Stationary Probes: Minor differences in noise levels at various heights, dominated by blade-passing frequencies

- Moving Probes: Significant noise reduction effects at higher spans with serrated blades, while lower spans were governed by turbulence from the pivot and other design complexities

Pressure fluctuations from the three probes located at span heights of 25%, 50%, and 75% on three different designs.
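Two quantities sit behind this kind of probe analysis: the blade-passing frequency that dominates the stationary-probe spectra, and the sound pressure level derived from the pressure fluctuations. The sketch below shows the standard formulas for both. The blade count and fan speed in the usage example are hypothetical, since the case study does not state them, and the function names are illustrative rather than part of any Cadence tool.

```python
import math

P_REF = 20e-6  # reference pressure in Pa for sound pressure level in air

def blade_passing_frequency(n_blades, rpm):
    """Blade-passing frequency in Hz for a rotor with n_blades turning at rpm."""
    return n_blades * rpm / 60.0

def sound_pressure_level(pressure_samples):
    """SPL in dB re 20 µPa from probe pressure samples in Pa."""
    n = len(pressure_samples)
    mean_p = sum(pressure_samples) / n
    # Only the fluctuation about the mean pressure contributes to noise.
    mean_sq = sum((p - mean_p) ** 2 for p in pressure_samples) / n
    if mean_sq == 0.0:
        raise ValueError("signal has no fluctuation, so SPL is undefined")
    return 10.0 * math.log10(mean_sq / P_REF ** 2)

if __name__ == "__main__":
    # Hypothetical fan: 9 blades at 3000 rpm.
    print(blade_passing_frequency(9, 3000), "Hz")
    # Synthetic probe signal: 1 Pa sine riding on atmospheric pressure.
    signal = [101325.0 + math.sin(2 * math.pi * k / 1000) for k in range(1000)]
    print(round(sound_pressure_level(signal), 1), "dB")
```

Comparing designs then comes down to evaluating the same probe location for each blade variant and looking at the difference in level, overall or near the blade-passing frequency.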

This case study highlights the aerodynamic and acoustic advantages of serrated trailing edges in ventilation fan design. By leveraging advanced mesh generation and GPU-based CFD solvers, the study achieved efficient simulations and precise results. The findings emphasize the importance of optimizing serrated geometries and conducting far-field noise analyses to refine fan performance, reduce noise emissions, and enhance design efficiency.

The post Serrated Edges: For Less Noise and Improved Fan Performance appeared first on ELE Times.

Happy Workbench Wednesdays! A bunch of folks advised that I should clean up my space. Not done yet, but it’s a start

It’s still a mess; I just reappropriated the mess to my desk for sorting later. But yeah, this environment wasn’t fit for doing anything. And it showed in the quality of my work work (permanent work from home employee) as well as the projects that I had lined up on this desk. Now at least my bench is somewhat tidy, and I actually figured out the issue with this HP frequency counter.

Infineon adds new packages to CoolSiC MOSFET 750V G2 family

VisIC closes $26m round B investment

It ain’t much but it’s honest work.

My cute lil workshop/man-cave/study room.

From 2G to 5G: how modern mobile base stations are designed and deployed

The Educational and Research Institute of Telecommunication Systems is one of the university departments that actively cooperates with Kyivstar under the company's cooperation agreements with Igor Sikorsky Kyiv Polytechnic Institute. The company's leading specialists recently gave a lecture to the institute's students, covering a range of topics that are especially useful for future engineers in the field.

Two-wire precision current source with wide current range

An interesting analog design problem is the precision current source. Many good designs are available, but most are the three-wire types that can be used as a positive (see Figure 1) or a negative (Figure 2) polarity source, but not both from the same circuit.

Figure 1 A typical three-wire [power supply (PS), ground, and load] precision positive current source offering an accuracy of 1% or better. The output current is 1.24/R1.

Figure 2 A typical three-wire negative current source, or a current sink.

Two-wire designs exist and have the advantage of being able to serve in either polarity connection. Some of them are simple and cheap but somewhat limited in terms of performance. See Figure 3 for a classic example.

Figure 3 A textbook classic two-wire current source/sink where 40V > (V+ – V-) > 4.5V.

Amazingly, this oldie but goodie comprises just two commodity components, one of which is the single resistor that programs it (R1). Its main limitation is a (conservative) 10 mA minimum output current:

Output current = 1.25/R1 >= 10mA and <= 1.5A

Accuracy (assuming perfect R1) = +/- 2%
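As a worked example of the formula above, the sketch below picks R1 for a target current and reports how much power R1 must dissipate. It uses only the 1.25 V figure and the 10 mA to 1.5 A limits stated in the text. The function and constant names are illustrative.

```python
V_SENSE = 1.25         # volts across R1, from the formula above
CLASSIC_I_MIN = 10e-3  # amps, stated minimum output current
CLASSIC_I_MAX = 1.5    # amps, stated maximum output current

def classic_r1(i_out):
    """Return (R1 in ohms, R1 dissipation in watts) for a target current in amps."""
    if not CLASSIC_I_MIN <= i_out <= CLASSIC_I_MAX:
        raise ValueError(
            f"{i_out} A is outside the {CLASSIC_I_MIN} A to {CLASSIC_I_MAX} A range"
        )
    # R1 drops V_SENSE at the programmed current, so P = V_SENSE * i_out.
    return V_SENSE / i_out, V_SENSE * i_out

if __name__ == "__main__":
    r1, power = classic_r1(0.1)
    print(f"R1 = {r1:.2f} ohm, dissipating {power:.3f} W")
```

The range check makes the 150:1 span of this classic circuit explicit, which is the limitation the design in Figure 4 sets out to widen.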

In other news, a recently published high-performance, ingenious, and elegantly simple Design Idea (DI) for a two-wire source comes from frequent contributor Christopher Paul. Christopher Paul’s circuit significantly extends the precision and voltage compliance of the genre. See it at: “A precision, voltage-compliant current source.”

Meanwhile, my effort is shown in Figure 4. This design takes a different approach to the two-wire topology that allows more than a 1000:1 ratio between maximum and minimum programmed output. It boasts uncompromised precision over the full range.

Here’s how it works.

Figure 4 A two-wire source/sink with 1% or better precision over > 1000:1 output range.

The precision 1.24-V reference Z1 is the heart of the circuit. Start-up resistor R6 provides it with a microamp-level trickle of current on power up. That’s not much, but all it needs is to squeak out more than A1’s 100 µV of input offset.

Then, A1’s positive feedback from R2 will take over to regeneratively provide Z1 with the required 80 µA of bias through R5. At this point, A1 pin 5 will stabilize at 1.240 V, and R3 will pass 10 µA.

That will pull A2 pin 3 positive and coax A2’s output to turn on pass transistor Q1. The Io current, passed by Q1, will develop output-proportional negative feedback across R1.

This will sink 10 µA (1.24V/R4) current through R4, nulling and balancing A2’s non-inverting input and its output. This will set Io to 1.24V/R1 + 10µA.

Damping resistors R8 and R9 together with compensation network R7C1 provide a modicum of oscillation and other naughty behavior suppression. This will generally encourage docile niceness.

The minimum programmable Io budget consists of op-amp current draw (500 µA max) + Z1 bias (82 µA max) + R4 feedback (10.1 µA max) + R6 trickle (4 µA max) = 596 µA. The maximum Io is limited by A2’s output capability and Q1’s safe operating area; 1 A is a conservative ceiling.
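The budget and the programming equation can be checked with a few lines of arithmetic. The sketch below simply restates the figures given above (Io = 1.24 V/R1 + 10 µA, the four worst-case overhead currents, and the 1 A ceiling) and solves for R1. The names are illustrative, and no component values beyond those in the text are assumed.

```python
V_REF = 1.24        # volts, reference Z1
I_FEEDBACK = 10e-6  # amps through R4 (1.24 V / R4)
I_CEILING = 1.0     # amps, the conservative maximum quoted above

# Worst-case overhead currents in amps, as itemized in the text.
OVERHEAD = {
    "op_amps": 500e-6,
    "z1_bias": 82e-6,
    "r4_feedback": 10.1e-6,
    "r6_trickle": 4e-6,
}

def minimum_io():
    """Smallest programmable output current in amps."""
    return sum(OVERHEAD.values())

def r1_for(i_out):
    """R1 in ohms, from Io = 1.24 V / R1 + 10 µA solved for R1."""
    if not minimum_io() <= i_out <= I_CEILING:
        raise ValueError("target current is outside the programmable range")
    return V_REF / (i_out - I_FEEDBACK)

if __name__ == "__main__":
    print(f"minimum Io = {minimum_io() * 1e6:.1f} uA")
    print(f"range ratio = {I_CEILING / minimum_io():.0f}:1")
    print(f"R1 for 10 mA = {r1_for(10e-3):.2f} ohm")
```

The ratio of the ceiling to the minimum is what supports the claim of more than 1000:1 between maximum and minimum programmed output.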

Although both op-amp and Q1 are rated for 36 V, don’t overheat them with more voltage than the load compliance requires. Even then, for output in the ampere range, Q1 will definitely need a robust heatsink, and R1 and R8 need to be big and fat.

Stephen Woodward’s relationship with EDN’s DI column goes back quite a long way. Over 100 submissions have been accepted since his first contribution back in 1974.

Related Content

- A precision, voltage-compliant current source

- Basic design equations for three precision current sources

- A negative current source with PWM input and LM337 output

- A high-performance current source

- Active current mirror

The post Two-wire precision current source with wide current range appeared first on EDN.

Designing a Gilbert Cell Based Automatic Gain Controller

AI-Powered Semiconductor Design for EV Reliability: Why India Can Lead the Next Electronics Revolution?

By Sukhendu Deb Roy, Industry Consultant

Why India is uniquely positioned to lead the next electronics revolution by closing the loop between silicon, software, and the road

Intro: India’s EV Electronics Moment

India is at a powerful inflection point: a fast-growing EV market, a strengthening semiconductor design ecosystem, and a deep pool of AI and software talent. The real value in this transition will not come from assembling more electric vehicles alone, but from mastering the chips, power electronics, and intelligence that make EVs reliable, safe, and always available.

From SiC traction inverters to battery management systems (BMS) and telematics ECUs, EV reliability is fundamentally an electronics problem that plays out under harsh, real-world Indian conditions. For OEMs and their Tier-1 and Tier-2 partners, this shift means semiconductor decisions, electronics architecture, and AI strategy are no longer separate silos; together they determine uptime, warranty risk, and customer trust.

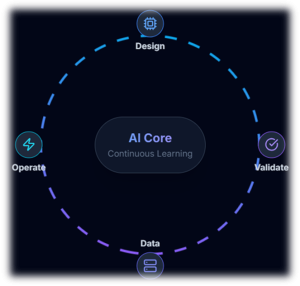

AI can close the loop between semiconductor device behavior and field performance, turning today’s reactive maintenance into tomorrow’s predictive and self-healing EV ecosystems.

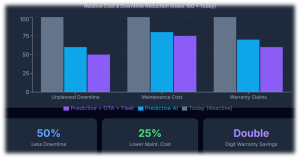

Takeaway: AI-powered semiconductor and EV electronics design can shift reliability from reactive repairs to predictive and self-healing systems, cutting failures, downtime, and warranty costs by roughly 40–60% over time — and India is uniquely placed to lead this shift.

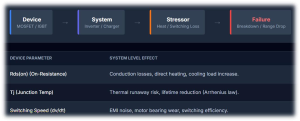

Every EV reliability story starts at the device level. Choices around Si/SiC MOSFET design, IGBT technology, gate-driver strategies, packaging, and thermal paths determine switching losses, heat, and long-term stress in traction inverters and onboard chargers. Under Indian duty cycles (high ambient temperatures, stop-go traffic, overloaded vehicles, and poor roads), those design decisions show up later as derating, efficiency loss, or outright failures.

The same applies to battery cells and BMS hardware: cell chemistry, form factor, and sensing strategies determine how accurately the system can “see” degradation or thermal risk. When this visibility is poor, fleets experience unexpected range drops, thermal events, and a costly pattern of warranty claims and field fixes.

Traditional semiconductor and power-electronics design relies on simulations, design for reliability (DfR), lab validation, and field-return analysis. AI adds a new, continuous feedback loop: it learns from billions of switching events, thermal cycles, and usage profiles observed in real vehicles, and feeds that learning back into both device design and control algorithms.

This loop uses signals such as high-frequency waveforms, temperature gradients across modules, vibration signatures, and battery state-of-health (SoH) curves to identify stress patterns and precursors to failure. Over time, designers can co-optimize silicon, packaging, and firmware for actual Indian operating conditions rather than idealized lab scenarios — improving both first-time-right silicon and long-term field reliability.

A quick India scenario: Imagine a 2-wheeler fleet in Chennai. AI models trained on inverter waveforms and temperature data across thousands of rides can flag an emerging failure pattern in a particular batch of power modules and trigger an OTA-driven derating and firmware fix before riders see breakdowns.
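The fleet scenario above can be illustrated with a deliberately simple statistical stand-in for the AI models it describes. The sketch below compares each batch of power modules against all readings from the other batches and flags any batch that runs much hotter. The batch labels, the temperature data, and the threshold are all hypothetical, and a production system would use far richer features than a single temperature rise.

```python
from statistics import mean, stdev

def flag_hot_batches(temp_rise_by_batch, z_threshold=3.0):
    """Return batch IDs whose mean temperature rise is far above the rest of the fleet."""
    flagged = []
    for batch, readings in temp_rise_by_batch.items():
        # Baseline: every reading from all other batches (leave-one-out).
        others = [
            r
            for other, rs in temp_rise_by_batch.items()
            if other != batch
            for r in rs
        ]
        z = (mean(readings) - mean(others)) / stdev(others)
        if z > z_threshold:
            flagged.append(batch)
    return flagged

# Hypothetical inverter temperature rise per ride, in kelvin.
FLEET = {
    "A": [40, 42, 41, 39],
    "B": [41, 40, 43, 42],
    "C": [39, 41, 40, 42],
    "D": [55, 57, 54, 56],
}

if __name__ == "__main__":
    for batch in flag_hot_batches(FLEET):
        print(f"batch {batch}: schedule derating and a firmware review")
```

The leave-one-out baseline keeps a bad batch from inflating the very statistics used to judge it, which matters when only a handful of batches are in the field.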

Four AI Capabilities That Change EV Reliability

Predictive BMS Intelligence (Cell-level)

Batteries are the single largest cost item in EVs and one of the most reliability-critical. AI models can continuously learn from cell voltages, temperatures, internal resistance evolution, and charge–discharge histories to detect early degradation, thermal runaway risk, and balancing drift at cell level. For Indian conditions (high temperatures, frequent partial charging, and stop-start usage), such predictive BMS intelligence can extend usable battery life by double-digit percentages and materially improve safety. Better SoH and range estimation also reduce range anxiety for drivers and allow fleet operators to plan maintenance proactively.
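To make the idea of SoH estimation concrete, the sketch below fits a straight line to capacity measurements and extrapolates to an end-of-life threshold. It is a much simpler stand-in for the learned models described above. The linear fade, the 80% threshold, and the sample data are assumptions for illustration, since real cells degrade nonlinearly and depend on temperature and usage.

```python
def state_of_health(measured_capacity_ah, rated_capacity_ah):
    """SoH as the fraction of rated capacity the cell still delivers."""
    return measured_capacity_ah / rated_capacity_ah

def cycles_until(soh_limit, cycles, soh_values):
    """Extrapolate a least-squares line through (cycle, SoH) points to soh_limit.

    Returns the estimated cycle count, or None if no fade is measurable yet.
    """
    n = len(cycles)
    mean_x = sum(cycles) / n
    mean_y = sum(soh_values) / n
    sxx = sum((x - mean_x) ** 2 for x in cycles)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(cycles, soh_values))
    slope = sxy / sxx
    if slope >= 0:
        return None
    intercept = mean_y - slope * mean_x
    return (soh_limit - intercept) / slope

if __name__ == "__main__":
    # Hypothetical capacity checks on a cell rated at 100 Ah.
    cycles = [0, 100, 200, 300]
    soh = [state_of_health(c, 100.0) for c in (100.0, 99.0, 98.0, 97.0)]
    print(cycles_until(0.80, cycles, soh))
```

A fleet operator could run an estimate like this per pack to rank vehicles by remaining life, then refine it as more sophisticated models become available.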

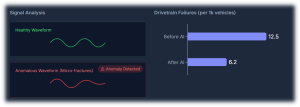

Traction inverters and motors sit at the heart of EV performance, directly built on semiconductor device and magnetic design. Machine-learning models can analyse switching waveforms, current and voltage harmonics, torque–speed behavior, vibration patterns, and thermal maps to catch subtle anomalies long before a fault triggers a visible error. This enables early detection of gate-drive misbehavior, partial shorts, insulation degradation, bearing wear, and cooling issues that would otherwise surface as roadside failures or derated power. At fleet scale, such diagnostics can realistically cut unexpected drivetrain failures by 30–50%, significantly improving asset utilization.

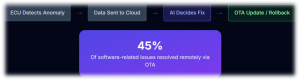

Diagnostics alone are not enough; systems need a way to act on insights in the field. Over-the-air (OTA) firmware platforms, combined with AI, can detect abnormal behavior in ECUs, roll back to known-good images, patch vulnerabilities, and adjust control parameters to protect power devices and batteries. For example, if analytics indicate elevated stress on a particular inverter design in hot regions, OTA updates can modify switching patterns, current limits, or thermal thresholds to reduce risk without requiring a workshop visit. OEMs already see significant warranty and recall savings from OTA-based fixes; AI-guided self-healing will deepen this advantage and improve customer experience.

The full power of AI emerges when every vehicle becomes a data node. Connected EVs stream anonymized health, usage, and environment data to the cloud, where AI aggregates patterns across cities, duty cycles, and seasons. Refined diagnostic and control models are then pushed back to vehicles via OTA, closing the loop from chip to cloud and back.

In this paradigm, EVs do not just age; they improve over time. Updated BMS models provide better SoH estimates, inverter control becomes more efficient, and fault detection grows more sensitive with every kilometer driven. The same hardware platform becomes more valuable with each software and model refresh — a powerful shift in how the industry thinks about lifecycle value.

Evidence from other industries shows that AI-driven predictive maintenance can reduce unplanned downtime by about 30–50% and cut maintenance costs by 18–25% through earlier fault detection and better planning. At the same time, connected diagnostics and OTA capabilities allow OEMs to resolve many issues remotely, significantly lowering warranty and recall costs.

When these approaches are applied systematically across EV batteries, powertrain electronics, and software — and when semiconductor and system design are instrumented for rich telemetry — it is credible to target 40–60% improvements in reliability metrics and warranty economics over a multi-year horizon. This magnitude of impact justifies serious investment from OEMs, Tier-1s, Tier-2s, and policymakers.

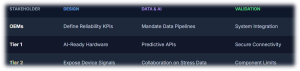

- OEMs
  - Define EV reliability KPIs (uptime, failure rates, cost per vehicle) that explicitly depend on electronics and AI, not just mechanical systems.
  - Mandate data pipelines, OTA capabilities, and diagnostic hooks as core requirements in platform and supplier specifications.

- Tier-1 suppliers (inverters, BMS, ECUs, telematics)
  - Design hardware and firmware with AI-grade observability: rich sensing, timestamped logs, and secure connectivity interfaces.
  - Offer OEMs diagnostics and predictive-maintenance APIs as part of the product, not as optional add-ons.

- Tier-2 and component suppliers (devices, sensors, boards)
  - Expose relevant device-level health signals (e.g., temperature, switching counters, error events) that higher-level ECUs and cloud models can consume.
  - Collaborate with Tier-1s on test data, stress conditions, and failure signatures to make AI models more accurate.
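The device-level health signals and timestamped logs mentioned in the list above could be represented as a small, serializable record. The sketch below is one possible shape. The field names, the device id, and the error-event label are invented for illustration and do not follow any particular supplier's schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DeviceHealthRecord:
    """Hypothetical health sample a component might expose to an ECU."""
    device_id: str
    timestamp_utc: str          # ISO 8601 timestamp, assumed to be UTC
    temperature_c: float
    switching_count: int
    error_events: list = field(default_factory=list)


record = DeviceHealthRecord(
    device_id="gate-driver-01",             # illustrative identifier
    timestamp_utc="2025-01-01T00:00:00Z",   # illustrative timestamp
    temperature_c=87.5,
    switching_count=1_200_345,
    error_events=["DESAT_WARN"],            # illustrative event label
)

# Serialize with sorted keys so logs are stable and easy to compare.
payload = json.dumps(asdict(record), sort_keys=True)
print(payload)
```

A consistent record format like this is what lets higher-level ECUs and cloud models consume signals from many suppliers without per-device parsing logic.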

India is already emerging as a major center for semiconductor design, verification, and R&D services, with global chip majors expanding their engineering presence in the country. In parallel, the domestic EV market, particularly in 2-wheelers, 3-wheelers, and commercial fleets, is scaling rapidly, creating demanding real-world use cases that generate rich data for AI models.

This combination positions India to move beyond low-cost manufacturing and become a global hub for EV electronics intelligence, including:

- AI-optimized power device reference designs for SiC/IGBT

- Predictive and interpretable BMS/inverter control

- Validated OTA/diagnostics platforms for fleets

These are exportable IP and platform plays that can serve global OEMs, not just domestic brands, while also anchoring higher-value electronics manufacturing within India.

To capture this opportunity, India’s ecosystem needs alignment across six pillars:

- Semiconductor & power-device design: Build AI-ready telemetry and reliability sensing into devices and modules from the outset.

- Power-electronics & system integration: Architect inverters, BMS, and ECUs for rich observability and secure connectivity.

- Software-defined vehicle architectures: Treat data collection, model deployment, and OTA as first-class design goals, not afterthoughts.

- Secure-by-design engineering: Ensure that telematics, OTA, and data flows are robust against cyber threats.

- Validation & standards: Extend traditional validation with AI-driven test generation, digital twins, and continuous in-field learning loops.

- Interdisciplinary talent development: Fund research and training programs that explicitly create the hybrid talent (e.g., AI engineers specializing in power electronics and thermal physics) required to bridge the gap between device design and fleet data.

If OEMs, Tier-1s, Tier-2s, chip companies, startups, and policymakers work together across these pillars, India can define the template for AI-powered semiconductor design and EV reliability — and, in doing so, help lead the next global electronics revolution.

Email: mailsukhendu@gmail.com

LinkedIn: Sukhendu Deb Roy

The post AI-Powered Semiconductor Design for EV Reliability: Why India Can Lead the Next Electronics Revolution? appeared first on ELE Times.