ELE Times

Keysight Expands PCIe® 7.0 Test Portfolio with New Receiver Stress Calibration

Keysight Technologies today announces a new PCIe 7.0 Receiver (RX) Test application, expanding its PCIe 7.0 portfolio to enable end-to-end transmitter and receiver validation. The receiver test application targets the emerging challenge of validating receiver performance at 128 GT/s for next-generation computing, AI, and data centre applications.

PCIe base specification release cycles continue to shorten, and as the PCIe 7.0 standard moves towards adoption, engineers face rising challenges in validating receiver performance. These challenges stem from a lack of test equipment for receiver testing and from increasingly complex stress signal calibration requirements.

At 128 GT/s, PCIe 7.0 receiver validation has become a defining hurdle for the industry. Reliable validation testing reduces risk and supports interoperability as the ecosystem scales. Keysight’s receiver test solution enables engineers to validate devices with confidence.

The combination of the M8050A BERT family, the M8042A 120 Gbaud pattern generator and the M8043A error analyser forms the receiver stress testing setup. This enables accurate signal generation and analysis for ASIC validation.

Complementing the hardware, the new N5991PB7A software accelerates receiver validation by simplifying the calibration and control of PCIe 7.0 receiver stress signals. Advanced automation capabilities support accurate ASIC receiver characterisation.

Together, the hardware and software form a comprehensive PCIe 7.0 receiver test solution that streamlines the validation workflow and improves both measurement accuracy and ASIC development reliability in common clock mode.

Key benefits of the new receiver stress calibration for PCIe 7.0:

- Accelerates Receiver Bring-Up and Validation: Automated PCIe 7.0 RX workflows reduce manual setup and enable faster results.

- Reduces Compliance Risk at 128 GT/s: Specification-aligned, stressed-signal generation exposes receiver weaknesses early, minimising late-stage rework.

- Complements End-to-End PCIe 7.0 Test: When combined with Keysight’s PCIe 7.0 TX test solution, engineers gain comprehensive transmitter and receiver coverage.

The post Keysight Expands PCIe® 7.0 Test Portfolio with New Receiver Stress Calibration appeared first on ELE Times.

VETH100A1DD1 ESD Protection Diode Passes IEEE 10BASE-T1S Compliance Tests

The Vishay Semiconductor VETH100A1DD1 ESD protection diode has passed IEEE 10BASE-T1S compliance testing, confirming its suitability for use in a one-pair Ethernet (OPEN) bus architecture.

The VETH100A1DD1 meets all three OPEN Alliance EMC Test Specifications for ESD Protection Devices, which cover 10BASE-T1S, 100BASE-T1, and 1000BASE-T1 applications. 10BASE-T1S is an automotive data bus designed to connect eight nodes over a single twisted-pair cable with lengths of up to 25 meters. The standard's nominal data rate is 10 Mbit/s, using baseband transmission over one twisted pair in short-range operation. Unlike the point-to-point 100BASE-T1 and 1000BASE-T1 links, 10BASE-T1S uses a multidrop bus topology. The VETH100A1DD1 protects automotive Ethernet networks against ESD.

Because a 10BASE-T1S network includes an ESD protection device at each node, very low capacitance is critical to maintaining signal integrity across the bus. The VETH100A1DD1 is designed to meet this requirement, offering a capacitance below 1 pF as described in the 10BASE-T1S test specification. This makes it well-suited for 10BASE-T1S applications while remaining compatible with higher-speed automotive Ethernet standards.
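As a rough illustration of why per-node capacitance matters on a multidrop bus: every protection device sits in parallel across the same twisted pair, and parallel capacitances add. The sketch below assumes one ESD device per node, the eight-node bus mentioned above, and the 1 pF upper bound from the text. The 3 pF comparison value is hypothetical and does not describe any particular part.

```python
def total_shunt_capacitance_pf(node_count: int, cap_per_device_pf: float) -> float:
    """Capacitances connected in parallel across the same pair add directly."""
    if node_count < 0 or cap_per_device_pf < 0:
        raise ValueError("inputs must be non-negative")
    return node_count * cap_per_device_pf


# Eight nodes, each with a device at the 1 pF bound quoted in the article.
low_cap_total = total_shunt_capacitance_pf(8, 1.0)

# Same bus with a hypothetical 3 pF device at each node, for comparison only.
higher_cap_total = total_shunt_capacitance_pf(8, 3.0)

print(f"8 nodes x 1 pF = {low_cap_total} pF of added shunt capacitance")
print(f"8 nodes x 3 pF = {higher_cap_total} pF of added shunt capacitance")
```

The calculation ignores cable, connector, and transceiver capacitance, so it only shows how the protection devices' contribution scales with node count.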

Vishay manufactures one of the world’s largest portfolios of semiconductors and electronic components that are essential to create innovative designs in automotive, industrial, computing, consumer, telecommunications, military, aerospace, and medical markets.

Union Cabinet Authorises Two New Semiconductor Units With an Incremental Investment of Rs. 3,936 Crore

The Union Cabinet has approved two more semiconductor projects under the India Semiconductor Mission (ISM), with a combined investment of more than Rs. 3,936 crore. The projects are India’s first commercial Mini/Micro-LED display facility, based on GaN (Gallium Nitride) technology, and a semiconductor assembly and test facility. Together, the two approvals are expected to generate more than 2,230 employment opportunities for skilled professionals in Gujarat.

Crystal Matrix Limited (CML) will establish a compound semiconductor fabrication facility. Its annual capacity is 72,000 sq. meters of Mini/Micro-LED display panels and 24,000 sets of RGB wafers for Mini/Micro-LED GaN epitaxy. These products will primarily be used in large displays for TVs and signage/commercial displays; medium-sized displays for tablets, smartphones, and cars; and micro displays for Extended Reality (XR) glasses and smart watches.

Suchi Semiconductor Private Limited (SSPL) will set up an Outsourced Semiconductor Assembly and Test (OSAT) facility in Surat, Gujarat, with a production capacity of 1,033.20 million chips per annum. Target applications include power electronics, analog ICs, industrial systems, automotive, industrial automation, and consumer electronics.

These two approvals are backed by infrastructure support involving 315 academic institutions and 104 start-ups across the country. Two projects have already begun commercial shipments, and two more are expected to start soon. The new units will add to India's growing chip manufacturing base.

Arrow Electronics Launches Web-based “Digital Test Drive” to Streamline Hardware Testing

Arrow Electronics (NYSE: ARW) today announced the launch of Digital Test Drive, a cloud‑based remote engineering service that helps technology developers evaluate hardware faster, reduce costs and improve productivity.

Through a secure, private web link, individual users and distributed teams can instantly access a preconfigured virtual machine and connect via the cloud directly to physical development boards hosted in Arrow’s engineering labs. Users can remotely control evaluation kits, access software environments, run tests and view results in real time. Workshops, training, product demonstrations and live support from Arrow’s technical experts are also available.

Digital Test Drive simplifies early‑stage testing and collaboration by helping eliminate common barriers such as kit availability, shipping delays, customs paperwork, platform comparisons, complex setup and software installation. This helps businesses shorten development cycles and accelerate decision‑making.

“Digital Test Drive helps remove the delays and complexity that slow product development,” said Murdoch Fitzgerald, chief growth officer of global services for Arrow’s global components business. “There’s no shipping, no setup and fewer up‑front costs, just instant access to the tools engineering teams need to work more efficiently.”

Digital Test Drive complements Arrow’s existing Test Drive program that allows customers to borrow physical hardware for on‑site evaluation for up to 28 days.

More information:

Digital Test Drive – Remote Hardware Testing

About Arrow Electronics

Arrow Electronics (NYSE: ARW) sources and engineers technology solutions for thousands of leading manufacturers and service providers. With 2025 sales of $31 billion, Arrow helps enable innovation across major industries and markets. Learn more at arrow.com.

From Updates to Intelligence: How OTA, Data, and Ethernet Are Reshaping Vehicles

In an exclusive interview with ELE Times, Shrikant Acharya, CTO and Co-founder of Excelfore, outlines how vehicles are evolving from simple update-driven systems to intelligent, data-centric platforms. He explains the distinction between OTA updates and data aggregation within a unified lifecycle pipeline, while highlighting innovations such as adaptive delta compression and distributed architectures. Acharya also explores the growing role of Ethernet, AI, and scalable system design in shaping software-defined vehicles, positioning India as a key market in this transformation.

ELE Times: Could you elaborate on OTA updates and how they differ from in-vehicle data-related processes? Also, what differentiates your OTA solution in this evolving landscape?

Excelfore:

It is important to distinguish between OTA updates and data aggregation. OTA primarily refers to a one-way process—delivering updates from infrastructure to the device. In contrast, extracting data from the device back to the infrastructure is better described as data aggregation. When viewed as a unified pipeline, both functions contribute to lifecycle management. Updates are deployed to improve or fix device functionality, while data is retrieved to evaluate performance, detect issues, and validate those updates through analytics.

From a technical standpoint, OTA updates are asynchronous and involve large data transfers, often several gigabytes, especially in systems like Android-based infotainment. Conversely, data retrieval is typically synchronous or near-real-time, requiring smaller, segmented packets to ensure continuity and responsiveness and so preserve the real-time nature of the aggregation. In essence, while both operate within the same pipeline, OTA updates and data aggregation serve fundamentally different purposes: one enables corrective action, while the other supports monitoring and analysis.

OTA has evolved significantly, from early implementations in industrial systems to its adoption in automotive environments. Initial solutions, such as those derived from mobile update frameworks, were primarily suited for infotainment systems and can be considered first-generation approaches. By contrast, our solution represents a more advanced, third-generation architecture. A key innovation lies in its plug-and-play capability. Devices entering the network authenticate themselves through certificates and register dynamically. The client system acts as a generic dispatcher without embedded knowledge of the vehicle or environment, enabling deployment across diverse ecosystems.

Another major advancement is the distributed architecture. Complexity is intentionally removed from the communication pipeline and instead distributed between the server and device. This approach ensures scalability, simplifies integration, and allows seamless accommodation of legacy systems. OEMs can retain existing device management frameworks while selectively adopting newer capabilities.

Agents within devices handle updates, ensuring structured execution while maintaining flexibility. This modular and distributed design is central to our differentiation, and it also helps OEMs preserve their legacy systems.

ELE Times: Could you explain the concept and significance of adaptive delta compression? How does this approach optimize bandwidth and system performance?

Excelfore:

Traditionally, software updates required transmitting the entire payload. Delta compression improves efficiency by sending only the differences between software versions, significantly reducing bandwidth usage and update time. However, managing these differential files over time creates a substantial IT burden for OEMs. Our approach shifts this responsibility to the server-client system. The server dynamically determines when and how to generate and transmit delta updates, eliminating the need for OEMs to manage them manually.
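The idea of sending only the differences between two software versions can be sketched in a few lines. This is a minimal illustration built on Python's standard `difflib.SequenceMatcher`, not Excelfore's algorithm. Production OTA systems typically rely on dedicated binary diff tools. The names `make_delta`, `apply_delta`, and `literal_bytes`, and the sample images, are hypothetical.

```python
from difflib import SequenceMatcher


def make_delta(old: bytes, new: bytes) -> list:
    """Describe `new` as copies from `old` plus literal inserted bytes."""
    ops = []
    matcher = SequenceMatcher(None, old, new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            # The device already holds these bytes: send only the range.
            ops.append(("copy", i1, i2))
        elif tag in ("replace", "insert"):
            # New bytes that must actually travel over the network.
            ops.append(("insert", new[j1:j2]))
        # "delete" needs no entry: those old bytes are simply not copied.
    return ops


def apply_delta(old: bytes, delta: list) -> bytes:
    """Rebuild the new image on the device from the old image and the delta."""
    out = bytearray()
    for op in delta:
        if op[0] == "copy":
            out += old[op[1]:op[2]]
        else:
            out += op[1]
    return bytes(out)


def literal_bytes(delta: list) -> int:
    """Count the payload bytes that the delta carries explicitly."""
    return sum(len(op[1]) for op in delta if op[0] == "insert")


body = bytes(range(200))                 # stand-in for unchanged firmware content
old_image = b"v1:" + body + b":end"
new_image = b"v2:" + body + b":end!"

delta = make_delta(old_image, new_image)
rebuilt = apply_delta(old_image, delta)
print(f"full image: {len(new_image)} bytes, literal bytes in delta: {literal_bytes(delta)}")
```

The copy ranges themselves also cost a few bytes each to encode, so the saving is largest when the two versions share long unchanged regions.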

If the main channel should not be used to send these large files, the server passes only a reference to the payload's URL, and the agent then sets up an independent connection and pulls the payload down. The “adaptive” aspect introduces intelligence into this process: the system evaluates multiple parameters, such as device memory, processing capability, network interface (CAN, LIN, Ethernet), and connection speed, to determine the most efficient compression strategy.

Additionally, large payloads are handled via separate channels, ensuring that the primary communication pipeline remains responsive for critical operations such as authentication and command execution.

Optimization is achieved by tailoring data packets to device constraints. For instance, if a device has limited cache capacity, the system ensures that data units fit precisely within that space. This avoids inefficiencies caused by partial data processing and repeated memory access. Beyond cache considerations, factors such as network speed and interface type are also evaluated. The system assigns weighted parameters to these variables and generates an optimal data transfer strategy, ensuring efficient utilization of bandwidth while maintaining system performance.
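One way to picture the cache-fitting step is a planner that picks a chunk size no larger than the device cache and aligned to the link's frame payload. The sketch below is an assumption-laden illustration, not the vendor's method: the function names are hypothetical, the weighting of network speed and other parameters described above is not modeled, and the frame payload sizes are the commonly used values for classic CAN (8 bytes), LIN (8 bytes), and standard Ethernet (1500 bytes).

```python
# Typical maximum payload per frame for each interface type (bytes).
FRAME_PAYLOAD_BYTES = {"CAN": 8, "LIN": 8, "Ethernet": 1500}


def choose_chunk_size(cache_bytes: int, interface: str) -> int:
    """Largest whole number of frames that still fits in the device cache."""
    if cache_bytes <= 0:
        raise ValueError("cache_bytes must be positive")
    frame = FRAME_PAYLOAD_BYTES[interface]
    if cache_bytes < frame:
        # Cache smaller than one frame: fall back to the cache size itself.
        return cache_bytes
    return (cache_bytes // frame) * frame


def plan_chunks(payload_len: int, chunk_size: int) -> list:
    """Split a payload into (offset, length) pieces of at most chunk_size bytes."""
    return [
        (offset, min(chunk_size, payload_len - offset))
        for offset in range(0, payload_len, chunk_size)
    ]


# Hypothetical device: 4 KiB cache behind an Ethernet link.
chunk = choose_chunk_size(4096, "Ethernet")
plan = plan_chunks(7000, chunk)
print(chunk, plan)
```

Each planned piece can be received and processed without spilling out of the cache, which is the inefficiency the paragraph above describes avoiding.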

ELE Times: With the rise of SDVs and advanced features, how do you see networking technologies evolving?

Excelfore:

Ethernet has emerged as the dominant in-vehicle networking standard due to its scalability, cost efficiency, and high bandwidth capabilities. Earlier technologies like FlexRay served as transitional solutions but have largely been superseded.

While legacy systems such as CAN will continue to exist due to installed base constraints, advancements like 10 Mbps multi-drop Ethernet are increasingly capable of replacing them.

Time-Sensitive Networking (TSN) plays a crucial role, particularly in time synchronization and deterministic data transmission. Combined with Quality of Service (QoS) mechanisms, it enables efficient bandwidth utilization—often achieving up to 85–90% channel efficiency compared to significantly lower utilization without traffic management.

ELE Times: How are SDVs reshaping vehicle architecture and OEM strategies? How do you view the evolution of SDVs and connected vehicles in India?

Excelfore:

The term SDV is often used loosely, but its true definition involves a standardized hardware platform whose functionality can be dynamically reconfigured through software.

Architecturally, the industry has evolved from domain-based systems to zonal architectures with centralized computing. Zonal controllers process localized data, which is then transmitted to central compute units for decision-making.

This shift introduces challenges, particularly in thermal management, as high-performance compute systems generate significant heat. Cooling solutions have thus become a critical component of system design.

India has a unique opportunity here, having bypassed several legacy stages of technological evolution. This allows for a more forward-looking approach, with fewer constraints from outdated systems. There is a strong willingness to adopt advanced technologies based on value and functionality. This mindset, similar to what was observed in China during its rapid technological growth phase, creates a favorable environment for innovation.

For technology providers, this openness enables deeper collaboration and the deployment of cutting-edge solutions, positioning India as a promising market for SDVs and connected vehicle ecosystems.

ELE Times: What role do you see AI playing in OTA and SDV ecosystems?

Excelfore:

AI adoption in vehicles is constrained by cost and computational limitations. As a result, the focus is shifting toward domain-specific, lightweight models rather than large, generalized AI systems.

While generative AI will primarily reside in the cloud, vehicles will utilize smaller models tailored to specific functions—such as diagnostics or object detection. One practical application is the digitization of vehicle manuals, enabling intelligent interpretation of diagnostic codes and user-friendly outputs.

However, monetization will be a key factor. Advanced AI-driven features are unlikely to be offered free of cost and will likely be delivered as subscription-based services.

ELE Times: How do you ensure safety and integrity in OTA updates, especially for critical systems?

Excelfore:

Data integrity is ensured through mechanisms such as SHA-256 hashing, which verifies that transmitted data remains unaltered. If discrepancies are detected, updates are rejected.
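A minimal sketch of the hash check, using Python's standard `hashlib`: the device recomputes the SHA-256 digest of the received payload and rejects the update if it differs from the expected value. The function names are hypothetical, and the sketch assumes the expected digest reaches the device through a trusted path such as a signed manifest, since a hash alone does not prove where the data came from.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_payload(payload: bytes, expected_hex: str) -> bool:
    """Accept the payload only if its digest matches the expected one."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_hex(payload), expected_hex.lower())


# Well-known SHA-256 test vector for the three bytes "abc".
EXPECTED = "ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad"

accepted = verify_payload(b"abc", EXPECTED)      # unaltered payload
rejected = verify_payload(b"abd", EXPECTED)      # one byte changed in transit
print(accepted, rejected)
```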

Authentication is enforced through digital certificates, establishing both device identity and software origin. Additionally, encryption ensures that only the intended device can decode and execute the update.

A critical vulnerability lies in key management during manufacturing. Protecting private keys is essential, as any compromise at this stage can undermine the entire security framework.

Audio over Ethernet: How Stellar G6 is replacing dedicated audio cables with a single Ethernet backbone

STMicroelectronics is enabling a shift from dedicated audio wiring to Audio over Ethernet in next-generation vehicles. The Stellar G6 automotive MCU integrates hardware-level Time-Sensitive Networking, Media Clock Recovery, and a dedicated communication engine to deliver high-fidelity, zero-jitter audio over the vehicle’s existing Ethernet backbone. The approach eliminates the need for proprietary A2B cables and transceivers, saving automakers approximately $70 per vehicle while enabling new capabilities such as real-time Active Noise Cancellation at the zonal level. A joint solution with AutoCore has already demonstrated end-to-end latency under two milliseconds, and ST is showcasing the technology live at Embedded World 2026 in Nuremberg.

Bringing high-fidelity audio to the software-defined vehicle

In a car, sound is personal. Listeners sit in fixed, asymmetrical positions surrounded by dozens of speakers, and their brains are ruthlessly precise about timing. A delay of just five milliseconds between two speakers is enough for the Haas Effect to kick in, tricking the listener into “pinning” the sound to whichever speaker fired first. A delta of two milliseconds can pull the entire soundstage to one side of the cabin, destroying the “phantom center” that makes a singer feel like they’re standing on the dashboard. When speakers fall slightly out of sync, sound waves collide destructively, creating nulls in the frequency response that make audio sound hollow or metallic. This is comb filtering, and it’s the acoustic signature of a timing problem.
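The comb-filter nulls follow from simple arithmetic. When two equal-level copies of a signal arrive with a relative delay τ, their sum has magnitude 2·|cos(π·f·τ)|, which drops to zero at f = (2k + 1) / (2τ). The sketch below assumes an idealized case of two equal-amplitude arrivals with no reflections. The function names are hypothetical.

```python
import math


def comb_null_frequencies_hz(delay_ms: float, count: int) -> list:
    """First `count` frequencies where two equal signals cancel completely."""
    return [(2 * k + 1) * 1000.0 / (2.0 * delay_ms) for k in range(count)]


def summed_magnitude(freq_hz: float, delay_ms: float) -> float:
    """Magnitude of a unit tone plus a copy of itself delayed by delay_ms."""
    return abs(2.0 * math.cos(math.pi * freq_hz * delay_ms / 1000.0))


# The two delays mentioned in the text: 2 ms and 5 ms.
nulls_2ms = comb_null_frequencies_hz(2.0, 3)
nulls_5ms = comb_null_frequencies_hz(5.0, 3)
print("2 ms delay nulls (Hz):", nulls_2ms)
print("5 ms delay nulls (Hz):", nulls_5ms)
```

For a 2 ms offset the nulls fall at 250 Hz, 750 Hz, and 1250 Hz, which places them squarely in the range of voices and instruments.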

These are not edge cases. They are the everyday reality of in-cabin audio, and they explain why the automotive industry has relied on dedicated wiring like A2B (Automotive Audio Bus) for so long. A2B is effective, but it demands its own cabling and transceivers, adding weight, complexity, and cost to the vehicle harness. Now that the industry is shifting toward Software-Defined Vehicles and zonal architectures, a new question is taking center stage: can a single Ethernet backbone carry diagnostics, control signals, and high-fidelity audio at the same time, without compromising the millisecond precision that human hearing demands?

With the Stellar G6 automotive MCU, we set out to prove that it can.

Latency is a number; jitter is the real enemy

Engineers often focus on latency, the constant delay between source and speaker. However, in automotive audio, jitter is far more destructive. Jitter is the variation in that delay. On a standard Ethernet network, an audio packet can get stuck behind a burst of sensor data. If the delivery time “jitters” by even a few microseconds, it introduces phase distortion that smears the music. For applications like Active Noise Cancellation, where a microphone signal must be inverted and played back through a speaker in near real-time, jitter doesn’t just degrade quality. It breaks the physics entirely.
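The difference between the two quantities is easy to see from a list of per-packet delays: latency is the typical delay, while jitter is how much that delay moves around. The sketch below uses peak-to-peak variation as the jitter measure, which is one of several definitions in use. The sample delays are made up for illustration, and the function names are hypothetical. The second function converts a timing error into phase error at a given audio frequency.

```python
from statistics import mean


def latency_and_jitter_us(delays_us: list) -> tuple:
    """Return (mean latency, peak-to-peak jitter) in microseconds."""
    return mean(delays_us), max(delays_us) - min(delays_us)


def phase_error_degrees(freq_hz: float, timing_error_us: float) -> float:
    """Phase shift that a timing error causes at one audio frequency."""
    return 360.0 * freq_hz * timing_error_us * 1e-6


# Illustrative per-packet delays in microseconds (not measured data).
delays = [500, 502, 499, 505]
latency, jitter = latency_and_jitter_us(delays)
print(f"latency ~ {latency} us, peak-to-peak jitter = {jitter} us")

# A 5 us timing error at a 10 kHz tone is roughly an 18 degree phase shift.
print(f"phase error: {phase_error_degrees(10_000, 5):.1f} degrees")
```

A constant 500 µs delay on every packet would shift all audio equally and leave the relative phase intact. It is the packet-to-packet variation that produces the phase error.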

Solving this requires more than a fast processor. It requires determinism, meaning the guarantee that a packet arrives exactly when it’s supposed to, and clock coherency, ensuring every node in the vehicle shares the same nanosecond. These are hardware problems, and they need hardware answers.

What Stellar G6 brings to audio over Ethernet

The Stellar G6 was engineered to treat audio as a time-critical stream, not as generic data. Three hardware-level capabilities make this possible. First, the Stellar G6 features a built-in L2+ Ethernet Switch supporting the full suite of Time-Sensitive Networking (TSN) standards. IEEE 802.1AS (gPTP) synchronizes every node in the vehicle to a sub-microsecond master clock. IEEE 802.1Qbv (scheduled traffic) creates protected time slots for audio and microphone data, ensuring they always get priority even on a congested network. IEEE 802.1CB enables seamless redundancy through Ethernet ring topologies, eliminating the single point of failure that plagues traditional star configurations.
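The protected time slots of IEEE 802.1Qbv can be pictured as a repeating gate schedule: for each window in the cycle, only the listed traffic classes may transmit. The sketch below is a simplified model, not Stellar G6 configuration code. The 125 µs cycle, the window lengths, and the class names are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateEntry:
    duration_us: int          # how long this window lasts
    open_classes: frozenset   # traffic classes allowed to transmit in it


def open_classes_at(schedule: list, t_us: int) -> frozenset:
    """Return the traffic classes whose gates are open at time t_us."""
    cycle_us = sum(entry.duration_us for entry in schedule)
    offset = t_us % cycle_us  # the schedule repeats every cycle
    for entry in schedule:
        if offset < entry.duration_us:
            return entry.open_classes
        offset -= entry.duration_us
    raise AssertionError("unreachable: offset is always inside the cycle")


# Hypothetical 125 us cycle: a 25 us window reserved for audio,
# followed by 100 us for everything else.
schedule = [
    GateEntry(25, frozenset({"audio"})),
    GateEntry(100, frozenset({"best_effort"})),
]

for t in (10, 30, 130):
    print(t, sorted(open_classes_at(schedule, t)))
```

Because the audio window recurs at a fixed point in every cycle, a burst of other traffic can delay audio by at most the time until the next audio window, which is what bounds the jitter.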

Second, even with a perfectly synchronized network, the audio sample clock can still drift. The Stellar G6 includes specialized Media Clock Recovery hardware. Rather than relying on a software-based PLL, a dedicated digital hardware loop recovers the Audio Master Clock directly from the Ethernet stream, keeping speakers and microphones in perfect phase. The result: virtually zero jitter on the recovered clock, which is the critical enabler for professional-grade audio delivery.

Third, Stellar embeds a dedicated communication engine that offloads all data-moving and synchronization tasks from the main CPUs. This hardware isolation means that a processing spike in the vehicle’s body-control zone cannot cause a pop or a glitch in the audio. Communication runs at the lowest possible latency, completely decoupled from whatever else the host cores are doing.

From central processing to localized intelligence

Traditionally, all audio processing happened in a central head unit. Moving to an Ethernet-based zonal architecture changes this fundamentally. With a Stellar G6 acting as the Zonal Controller at each vehicle zone, significant compute now sits closer to every speaker and microphone.

This unlocks capabilities that were previously impractical. In-Cabin noise cancellation becomes possible by placing microphones near individual seats, identifying noise sources such as a loud conversation in the rear, and cancelling them locally. Road noise cancellation works on the same principle: the system captures vibration and road noise through zone-level microphones, generates an anti-noise signal, and plays it back through nearby speakers with near-zero latency. The processing happens at the edge, in the zone, rather than travelling back and forth to a central unit. For the passenger, the result is a cabin that can become a sanctuary, a workspace, or a private sound bubble, all updated over-the-air as easily as a smartphone app.

The cost equation: saving up to $70 per vehicle

Beyond acoustic performance, Audio over Ethernet carries a straightforward economic argument. By eliminating dedicated A2B cables and transceivers and reusing the vehicle’s existing Ethernet backbone, automakers can save approximately $70 per vehicle. In an industry where every cent on the bill of materials is scrutinized, consolidating audio onto a network that already exists for diagnostics and control is not just elegant engineering. It’s a significant cost reduction that scales across millions of units.

From proof-of-concept to production validation

In January 2026, we announced a collaboration with AutoCore on an Ethernet-based Zonal Controller distributed audio solution. By combining Stellar G6’s Media Clock Recovery with AutoCore’s TSN protocol stack, the joint solution achieved end-to-end audio latency of less than two milliseconds. That is fast enough to run high-performance Active Noise Cancellation over a standard Ethernet backbone.

At Embedded World 2026, we are taking this further with a live demonstration of Stellar G6’s native Audio-over-Ethernet capabilities. The demo features two Zonal Controller Units, each built around a Stellar G6, connected in a ring topology. Each ZCU streams four channels of 24-bit audio over Ethernet, for a total of eight high-fidelity streams running simultaneously. Visitors can witness the audio clock recovery in action, hear the zero-jitter playback quality firsthand, and see the resilience of the ring topology through live plug-and-unplug trials that demonstrate fault tolerance without audio interruption. It is a concrete, audible proof point: dedicated audio cables are no longer a requirement for premium in-cabin sound.
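For a sense of scale, the raw audio payload of the demo can be estimated from the channel count and bit depth given above. The sample rate is not stated in the text, so the 48 kHz used below is an assumption. The figures cover uncompressed PCM samples only and exclude Ethernet and transport header overhead.

```python
def pcm_bitrate_bps(channels: int, bits_per_sample: int, sample_rate_hz: int) -> int:
    """Raw bitrate of uncompressed PCM audio, in bits per second."""
    return channels * bits_per_sample * sample_rate_hz


ASSUMED_SAMPLE_RATE_HZ = 48_000  # assumption: not specified in the article

per_zcu_bps = pcm_bitrate_bps(4, 24, ASSUMED_SAMPLE_RATE_HZ)  # four 24-bit channels
total_bps = 2 * per_zcu_bps                                   # two ZCUs, eight streams

print(f"per ZCU: {per_zcu_bps / 1e6:.3f} Mbit/s, total: {total_bps / 1e6:.3f} Mbit/s")
```

Under that assumption the eight streams need under 10 Mbit/s of payload, a small share of a typical automotive Ethernet link. This fits the article's emphasis on timing rather than raw bandwidth as the hard part.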

The Ethernet backbone is the nervous system of the SDV

We are moving toward a future where the vehicle’s Ethernet backbone becomes its nervous system, and Audio over Ethernet is one of the most visible and audible ways this transformation is taking hold. When a vehicle can use its Zonal Controllers to deliver immersive sound, suppress road noise, or create a private acoustic zone for every passenger, the concept of what a “car” offers fundamentally changes.

Stellar G6 is not just a processor in this journey. By solving one of the most demanding timing and synchronization problems in hardware, it allows automotive engineers to focus on the experience rather than the plumbing. As the industry embraces the zonal revolution, we are ready to help redefine what the drive actually sounds like.

Exploring The Surreality Of High-End Manufacturing On Indian Soil With Sudhir Tangri And Takuya Furata From Keysight

As Keysight explores localization and diversification opportunities through its recently inaugurated manufacturing facility in Chennai, India, ELE Times sat down with Mr Sudhir Tangri, Vice President & General Manager Asia Pacific, Keysight Technologies, and Takuya Furata, Senior Director Global Marketing, Asia Pacific, Keysight Technologies, to understand the nuances of the initiative and what Keysight is looking for in India now that its manufacturing facility is operational!

Keysight’s vision for its manufacturing facility and its integration within its global strategy are thoroughly examined. Sudhir Tangri highlights the significance of commencing manufacturing operations in India as a pivotal moment for Keysight’s presence in the region. The discussion delves into the comprehensive nature of the manufacturing process, from component sourcing to end-to-end production, underscoring the company’s commitment to quality and innovation. Moreover, the conversation emphasizes the value a manufacturing facility brings to Keysight India, aligning with the company’s core principle of prioritizing proximity to customers. This strategic move reflects Keysight’s enduring dedication to customer-centric practices, a principle that has guided the company’s trajectory over the past four to five decades.

Why A Manufacturing plant in India?

As the world witnessed the challenges of consolidated supply chains and consequential shortages following the COVID-19 pandemic, it was high time for companies to think about diversification across the supply chain, right from design to procurement and shipping. The case is the same with Keysight. “To diversify our global supply chains,” says Sudhir Tangri, referring to the reason for expansion.

Coming to India, Takuya Furata says, “India is not just leading, it is probably number one when it comes to a lot of indexes as well, so it’s growing, and our business is growing strongly in India as well.” Further, he adds, “So to be closer to the customers of the fastest-growing economy is a natural choice because we look into the future.” Keysight’s plants in Japan and the USA followed a similar path: they were eventually moved to get closer to the customer as Keysight’s market expanded.

Global Impact of India Plant

Reflecting on the global impact of the newly inaugurated plant, Takuya Furata says, “Not just India, but within Asia, having two different manufacturing sites will benefit a lot of Asian customers for sure.” As demand picks up in Asia, Keysight is focused on reducing its dependence on a single manufacturing site in Asia to better support its customers across the continent.

“Looking over the entire Asia, this is such a happy moment because that’s going to add another value to the Asian customer,” adds Takuya Furata as he underlines the global impact of the facility, considering India’s proximity to the rest of Asia. This would largely mean shorter turnaround times, shorter manufacturing lifecycles, and easier procurement for Keysight customers in Asia.

Challenges

While referring to the potential challenges of having a manufacturing facility in India, he says, “The priority right now is to stabilize and mature the manufacturing operations that we have started.” On the same lines, Takuya Furata talks about how worldwide companies must cross Day 01 to reach the next phase of production, Day 02, to make their efforts a success. He says, “If you don’t clear Day 01, there is no Day Two, right? So, it’s imperative for the entire team, at Keysight here and the Keysight team, to make this successful.”

One-of-a-Kind Dynamic

With the inauguration of the manufacturing facility in Chennai, Keysight India becomes the first T&M company in India to have its own manufacturing facility, which sets it apart from other T&M companies in the country. Reacting to the development, and to the realization of a long-held dream of high-end manufacturing on Indian soil, Sudhir Tangri calls it a “surreal thing” unfolding before his eyes.

New Plug-In Timing Module Delivers Precise, Reliable Synchronization for Data Centers and 5G Networks to Meet the Demands of AI and Next-Generation Connectivity

National, 24th April 2026: As data centers and 5G networks become the backbone of AI-driven innovation and digital transformation, the need for precise, resilient timing solutions has never been more critical. Timing is not just a technical requirement, but rather a strategic enabler for high-performance, scalable infrastructure. Microchip Technology (Nasdaq: MCHP) today announces its MD-990-0011-B family of plug-in timing modules, delivering turnkey, high-precision synchronization for data center servers and 5G virtualized Radio Access Networks (vRAN).

Developed in collaboration with Intel, the MD-990-0011-B timing module is designed for seamless compatibility with Intel® Xeon® 6 SoC-powered server platforms, supporting both OEMs and ODMs in building future-ready systems. By leveraging Intel’s foundational vRAN architecture, the module enables robust, low-latency time synchronization, which is essential for distributed AI workloads and real-time applications.

Engineered for the reliability and scalability required by cloud infrastructure, virtualization, and high-availability deployments, the MD-990-0011-B supports automatic source selection and locking across Global Navigation Satellite Systems (GNSS), Synchronous Ethernet (SyncE), and Precision Time Protocol (PTP). This flexibility supports continuous, accurate timing even as network demands evolve.
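Automatic source selection can be pictured as a priority list with a fallback: use the best reference that is currently valid, and enter holdover when none is. The sketch below is a generic illustration and not the module's actual selection logic. The priority order, the status dictionary, and the function name are assumptions.

```python
# Assumed preference order, for illustration only.
DEFAULT_PRIORITY = ("GNSS", "PTP", "SyncE")


def select_timing_source(valid: dict, priority: tuple = DEFAULT_PRIORITY) -> str:
    """Pick the highest-priority reference that is currently usable."""
    for name in priority:
        if valid.get(name, False):
            return name
    # No usable reference: keep time from the local oscillator.
    return "HOLDOVER"


# All references healthy: the first in the priority list wins.
print(select_timing_source({"GNSS": True, "PTP": True, "SyncE": True}))

# GNSS outage: fall back to the next valid reference.
print(select_timing_source({"GNSS": False, "PTP": True, "SyncE": True}))

# Everything lost: rely on holdover until a reference returns.
print(select_timing_source({"GNSS": False, "PTP": False, "SyncE": False}))
```

Real selectors usually add hysteresis and quality metrics so that a marginal reference does not cause repeated switching. Those refinements are left out here.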

“Timing is the invisible force that guides the world’s most transformative technologies. With the MD-990-0011-B timing modules, Microchip enables designers to address timing requirements proactively, whether at the outset or during upgrades,” said Randy Brudzinski, corporate vice president of Microchip’s frequency and time systems business unit. “Our plug-in solution eliminates the complexity of custom timing circuits, providing integration and reliability, accelerating innovation and reducing time-to-market for data centers and 5G networks.”

“Microchip’s MD-990-0011-B Timing Module aligns with Intel’s commitment to enable next-generation infrastructure by providing scalable, high-performance platforms that are ready for the demands of 5G, AI and cloud computing,” said Mike Merluzzi, GM of radio access networks at Intel Corporation. “By simplifying timing integration and enhancing reliability on Intel Xeon 6 SoC-powered platforms, we’re helping customers accelerate innovation and deployment.”

Delivering exceptional precision in time and frequency accuracy, along with robust holdover capabilities, the MD-990-0011-B timing modules are available in two variants. The MD-990-0011-BC01 offers 8 hours of holdover performance, while the MD-990-0011-BA01 offers 4 hours of holdover performance. These timing modules consolidate several of Microchip’s advanced technologies into a single, highly integrated solution. Key components include:

- Synchronous Ethernet (SyncE) Synthesizer (ZL80132B): Features two independent Digital Phase-Locked Loop (DPLL) channels for flexible and resilient synchronization

- Oven Controlled Crystal Oscillators (OCXOs, OX-22x): Engineered to provide up to 8 hours of holdover, ensuring stable timing during GNSS outages or network disruptions

- MCP9808 Temperature Sensor supporting enhanced environmental monitoring, 24LC024 EEPROM implementing board configuration, and VC-820 for low jitter performance

Rohde & Schwarz to host Power Electronics Online Conference “From Design to Validation” in May

Munich, April 21, 2026 — The power electronics market is being driven by stricter efficiency targets, higher power densities, and increasing integration with large-scale power grids. Consequently, engineers must cope with non-ideal component behavior, fast transient stresses on wide-bandgap devices, and ever more demanding EMC requirements. The conference will address these challenges by presenting measurement-centric solutions that can be implemented with modern oscilloscopes, vector network analyzers, and precision power analyzers.

The program opens on May 5 with a keynote by Tobias Keller (Hitachi Energy) entitled “Power Semiconductors: Shaping the Future Power Grid – Performance and Reliability for Future Decades”. Tobias Keller will discuss the qualification of silicon and silicon carbide (SiC) devices for high-voltage grid applications, focusing on thermal cycling, short-circuit robustness, and long-term reliability data.

A second keynote, delivered on May 6 by Veit Hellwig (Infineon Technologies), will examine the impact of gallium-nitride (GaN) technology on high-voltage motor inverter topologies.

In addition to the keynotes, the conference comprises a series of technical sessions. One presentation will analyze passive component characterization, highlighting methods for extracting parasitic inductance and capacitance at frequencies above 100 MHz and demonstrating the influence of these non-idealities on converter stability. Another session will detail automated dynamic characterization of SiC and GaN power devices, showing how double-pulse test rigs can be synchronized with high-speed digitizers to reduce measurement uncertainty and to capture fast recovery behavior.

Electromagnetic compatibility topics are covered in two dedicated talks. The first provides practical guidance on the use of near-field probes for pinpointing radiated emission sources and for validating the effectiveness of EMI filter designs. The second demonstrates a complete conducted emission measurement workflow on a small-scale prototype, using a Line Impedance Stabilization Network (LISN) together with a modern mixed-signal oscilloscope. The presenter will also outline a filter design methodology that exploits the time-frequency capabilities of the instrument.

A further webinar addresses the growing need for accurate efficiency measurement in data center and AI server power supplies. By employing precision power analyzers capable of tracking distorted waveforms and rapid load transients, participants will learn how to obtain true input and output power values that satisfy 80 PLUS certification requirements.

The last session focuses on harmonic current and voltage flicker compliance for low-voltage, grid-connected products. The speaker will review the limits and test procedures defined in IEC/EN 61000-3-2/-3-3 and IEC/EN 61000-3-12/-3-11, and will demonstrate how integrated compliance testing software linked to a power analyzer can deliver automated pass/fail decisions from early prototype evaluation through to final type approval.

Speakers include subject matter experts from Rohde & Schwarz, Hitachi, Infineon, PE-Systems, Würth Elektronik, and the Universities of Bremen and Zaragoza. Their contributions combine academic insight with industrial experience, providing attendees with both theoretical background and hands-on measurement strategies.

The conference is free of charge, but registration is required. The full agenda, speaker biographies and the registration portal are available at: http://www.rohde-schwarz.com/power-electronics-conference

STMicroelectronics propels new era of ultra-wideband technology for automotive and smart device applications

- Introducing the ST64UWB family: the first fully integrated ultra-wideband (UWB) solution supporting IEEE 802.15.4z and upcoming IEEE 802.15.4ab UWB standard with multi-millisecond ranging (MMS), including narrow-band assistance radio (NBA)

- ST64UWB family delivers industry-leading RF performance leveraging ST’s 18 nm FD-SOI technology

- Best-in-class performance enables new use cases and enhances user experience for automotive, smart home, and smart building applications

STMicroelectronics (NYSE: STM), a global semiconductor leader serving customers across the spectrum of electronics applications, introduces an ultra-wideband (UWB) chip family that comprehensively supports the next-generation wireless standard for localizing and tracking devices at distances up to several hundred meters. This UWB chip family combines extended range with greater processing power and robustness to enable new and improved automotive, consumer, and industrial use cases, including secure digital access control, presence and motion sensing, and precise approach detection.

“The ST64UWB family we announce today is an industry-first system-on-chip supporting the latest ultra-wideband specification, IEEE 802.15.4ab, including narrow-band assistance radio, with ultra-precise ranging and sensing,” said Rias Al-Kadi, General Manager, Ranging and Connectivity Division, STMicroelectronics. “These chips are tailored for automotive, consumer, and industrial applications, providing innovators with a powerful platform for the next wave of ultra-wideband use cases.”

The emerging standard builds on the IEEE 802.15.4z UWB wireless technology in today’s hands-free digital car keys that unlock a vehicle on approach. New technical enhancements enabled by multi-millisecond ranging (MMS) and narrowband assistance (NBA) extend operating range, strengthen connections with devices carried in bags or rear pockets, and enable direction finding at close range to better interpret user intent. IEEE 802.15.4ab also enhances radar mode, improving use cases such as child presence detection (CPD) in vehicles, a potentially life-saving feature recommended by Euro-NCAP, the independent vehicle safety assessment organization.

The devices are now sampling to major Tier 1s and original equipment manufacturers.

Why IEEE 802.15.4ab and ST64UWB matter

“IEEE 802.15.4ab is set to become the backbone of next-generation ultra-wideband,” said Andrew Zignani, Senior Research Director at ABI Research. “By 2030, we expect the vast majority of ultra-wideband-equipped vehicles to migrate to this new standard, leveraging a rapidly growing installed base of hundreds of millions of compatible smartphones. Meanwhile, backward compatibility with IEEE 802.15.4z allows the industry to adopt these enhancements quickly without disrupting existing deployments, while enabling valuable new user experiences and services across multiple end markets.”

“IEEE 802.15.4ab is the foundation for enabling a new generation of key fobs as part of a digital key system,” said Daniel Siekmann, Head of Car Access HW D&D Team, Forvia Hella. “It offers more than eight times the range of 802.15.4z and significantly better non-line-of-sight performance, which allows for key fob functionality to reliably perform from a back-pocket or inside a bag. With backward compatibility to 802.15.4z, it provides a practical path to replace legacy HF/LF key fobs with a modern ultra-wideband-based architecture, a transition that is further enabled by STMicroelectronics’ new ST64UWB chips.”

“By adopting 802.15.4ab, car access systems can simultaneously improve performance, cost efficiency, and robustness. The more than eightfold increase in range effectively mitigates back-pocket and other obstructed-signal conditions. At the same time, backward compatibility with 802.15.4z gives OEMs like LGIT the flexibility to either enhance reliability using their existing fixed reference points or reduce the number of reference points to lower overall system cost,” said William Jung, Team Leader, LG Innotek.

“With IEEE 802.15.4ab, the ability to drastically increase UWB performance, especially when the smartphone is left in the rear pocket, is highly appreciated,” said Bernd Bär, Expert Product Line Technology, Marquardt. “At the same time, operating within tight global homologation limits while remaining backward compatible with existing IEEE 802.15.4z ecosystems tremendously extends the applicability of UWB systems.”

“Over the last decade, Nuki has helped establish and shape the smart lock category in Europe. We firmly believe Ultra-Wideband is a transformative technology for precise, hands-free unlocking,” said Jürgen Pansi, Chief Innovation Officer, Nuki Home Solutions. “Together with STMicroelectronics and their ST64UWB solution, we are showcasing how the IEEE 802.15.4ab standard can bring the power of Aliro and UWB to our region.”

Further information for editors

The three SoCs introduced today (ST64UWB-A100, ST64UWB-A500, and ST64UWB-C100) are built on an 18 nm FD-SOI process that boosts link budget by nearly 3 dB versus standard bulk technologies, extending range by roughly 50% beyond the gains already delivered by the IEEE 802.15.4ab standard.
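To see how a link-budget gain translates into range, a common log-distance path-loss model can be used, in which received power falls off as distance raised to an exponent n. The sketch below is illustrative only: the model, the function name `range_factor`, and the exponent values are assumptions, not figures from ST. Under free-space propagation (n = 2), a 3 dB gain extends range by about 41%; an effective exponent near 1.7 would give approximately the 50% quoted above.

```python
def range_factor(gain_db: float, path_loss_exponent: float = 2.0) -> float:
    """Range multiplier for a given link-budget gain under a log-distance model.

    Path loss grows by 10 * n * log10(d), so an extra gain_db of budget
    allows the distance to scale by 10 ** (gain_db / (10 * n)).
    """
    if path_loss_exponent <= 0:
        raise ValueError("path_loss_exponent must be positive")
    return 10 ** (gain_db / (10 * path_loss_exponent))


if __name__ == "__main__":
    # Exponents are assumptions for illustration, not values from the source.
    for n in (1.7, 2.0, 3.0):
        factor = range_factor(3.0, n)
        print(f"n = {n}: range x{factor:.2f} ({(factor - 1) * 100:.0f}% more)")
```

Higher exponents, typical of cluttered or obstructed environments, shrink the benefit, which is why the same 3 dB can yield quite different range gains in practice.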

The ST64UWB-A series, designed for automotive applications and starting with the ST64UWB-A100 for use cases such as digital key and precise vehicle localization, features an Arm® Cortex®-M85 core and supports an ASIL A(B) automotive safety concept. The ST64UWB-A500 adds AI acceleration and digital signal processing to support edge AI-powered radar applications, including child presence detection (CPD), kick sensing, and outward-facing use cases, such as parking sensors and radar-based vehicle-sentinel mode. These radar capabilities benefit from the new 15.4ab Kaiser pulse shape and the upgraded 1.3 GHz bandwidth of UWB channel 11, resulting in twice the accuracy compared to 500 MHz channels.
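The link between bandwidth and radar performance can be illustrated with the textbook range-resolution relation ΔR = c / (2B). The sketch below applies it to the two bandwidths mentioned above; it is a simplified, idealized calculation, and the function name is chosen here for illustration. Real-world accuracy also depends on signal-to-noise ratio, pulse shape, and processing, which may be why the release quotes a factor of two rather than the raw 2.6x bandwidth ratio.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre


def range_resolution_m(bandwidth_hz: float) -> float:
    """Idealized radar range resolution: delta_R = c / (2 * B)."""
    if bandwidth_hz <= 0:
        raise ValueError("bandwidth_hz must be positive")
    return SPEED_OF_LIGHT_M_S / (2.0 * bandwidth_hz)


if __name__ == "__main__":
    narrow = range_resolution_m(500e6)  # 500 MHz channel
    wide = range_resolution_m(1.3e9)    # 1.3 GHz channel
    print(f"500 MHz: {narrow * 100:.1f} cm")
    print(f"1.3 GHz: {wide * 100:.1f} cm")
    print(f"ratio:   {narrow / wide:.1f}x finer")
```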

The ST64UWB-C100, built on an Arm Cortex-M85 core, targets commercial and consumer applications, delivering best-in-class hands-free and tap-free user experiences with full Aliro standard compatibility.

ST is accelerating next-generation UWB adoption with a comprehensive development kit including a UWB stack (PHY/MAC), a radar toolbox, development boards, a reference design for antennas, and application examples for both automotive and consumer markets. Find out more about product specifications and 802.15.4ab technology at www.st.com/uwb

About STMicroelectronics

At ST, we are 48,000 creators and makers of semiconductor technologies, mastering the semiconductor supply chain with state-of-the-art manufacturing facilities. An integrated device manufacturer, we work with more than 200,000 customers and thousands of partners to design and build products, solutions, and ecosystems that address their challenges and opportunities, and the need to support a more sustainable world. Our technologies enable smarter mobility, more efficient power and energy management, and the wide-scale deployment of cloud-connected autonomous things. We are on track to be carbon neutral in all direct and indirect emissions (scopes 1 and 2), product transportation, business travel, and employee commuting emissions (our scope 3 focus), and to achieve our 100% renewable electricity sourcing goal by the end of 2027. Further information can be found at www.st.com

Sasken Announces Hyderabad Center of Excellence to Scale Product Engineering and Digital Innovation

Hyderabad, India, April 16, 2026: Sasken Technologies Ltd. (BSE: 532663, NSE: SASKEN), a leading provider of product engineering and digital transformation services, today announced the launch of its Center of Excellence (CoE) in Hyderabad. The new center strengthens Sasken’s regional delivery footprint and deepens collaboration with strategic chipset customers such as Qualcomm and their OEMs.

Located in one of India’s fastest-growing technology ecosystems, the Hyderabad CoE will focus on next-generation engineering across connected devices, 5G-led platforms, IoT solutions, embedded systems, and digital product engineering. The center is designed to enable closer customer collaboration, accelerate engineering cycles, and support faster product innovation for complex global programs across industries such as automotive, smart devices, hi-tech, satellite communication, and industrial.

As part of this expansion, Sasken also announced the appointment of Nirmala Datla, Chief Data Science & Engineering Officer, as Site Leader for the Hyderabad CoE. She will drive delivery excellence, build next-generation engineering capabilities, and lead talent development from the location.

Alongside the leadership appointment, Sasken plans to initially hire 100+ specialized professionals from Hyderabad’s strong technology talent ecosystem in the coming months. Hiring will focus on high-skill roles across semiconductors, ODM, automotive, and Data Science & Engineering, supporting advanced engineering programs in connected devices, intelligent platforms, and next-generation digital ecosystems for global customers.

“The Hyderabad CoE represents a strategic investment to support the growing demand for Sasken’s capabilities in product engineering and digital transformation. Hyderabad’s strong engineering talent ecosystem enables us to scale delivery, deepen customer collaboration, and accelerate innovation and time-to-market for our customers,” said Nirmala Datla, Chief Data Science and Engineering Officer, Sasken Technologies.

“Hyderabad presents us with an excellent talent pool across our BUs. The launch of this Center of Excellence strengthens Sasken’s delivery footprint and positions us to support customers with greater speed, scale, and proximity. Aligned with our growth strategy, we continue to make focused investments in talent and capabilities that enable us to scale the right segments and deliver sustained growth,” said Hareesh Ramanna, Chief Experience Officer, Sasken Technologies.

India sharpens its electronics manufacturing edge at electronica India and productronica India 2026 in Greater Noida

- Visitor turnout: 20,922 participants, including key buyers and sourcing decision-makers, attended the Greater Noida edition

- Business engagement: The event facilitated 1,500+ buyer-seller meetings, focused on localisation, supplier discovery, and supply chain partnerships

- International presence: Strong participation from countries like Germany, China, Japan, Taiwan, and the United States

Greater Noida: India’s ambition to position itself as a global electronics manufacturing hub is beginning to show in more concrete ways: on factory floors, in policy corridors, and increasingly, on industry platforms where supply chains are being actively reshaped.

The 2026 Greater Noida edition of electronica India and productronica India brought this shift into focus, with a strong turnout of global suppliers, domestic manufacturers, and sourcing leaders. For an industry navigating geopolitical realignments and cost pressures, the event served less as a showcase and more as a working marketplace. The event was attended by 20,922 participants and brought more than 1,000 suppliers and distributors from across the globe and India to the show floor.

The Government of Uttar Pradesh, as the Host State, played a key role in supporting electronica India and productronica India 2026 in Greater Noida. Its partnership reflected the state’s continued focus on building a strong electronics manufacturing ecosystem, backed by policy support, infrastructure development, and investment facilitation. The presence and involvement of senior government representatives underscored Uttar Pradesh’s intent to position itself as a preferred destination for electronics manufacturing, while also enabling closer engagement between industry stakeholders and policymakers on the ground.

The inauguration was led by a cross-section of political and industry leaders, including Shri Suresh Kumar Khanna, Uttar Pradesh’s Minister for Finance and Parliamentary Affairs, and Shri Jitin Prasada, Union Minister of State for Electronics and IT, and for Commerce and Industry. They were joined by Shri Nand Gopal Gupta ‘Nandi’, Minister for Industrial Development; Shri Sunil Kumar Sharma, Minister for IT & Electronics; Shri Ajit Singh Pal, Minister of State for Science and Technology, Electronics and IT; and Shri Alok Kumar, Principal Secretary, IT & Electronics, Uttar Pradesh.

Scale with direction

Across its dual editions in Greater Noida and Bengaluru, the platform now brings together over 60,000 participants annually, reflecting a 50% expansion in scale and reinforcing its position as a key industry meeting point.

The Greater Noida edition saw significant participation from key manufacturing economies, including Germany, China, Japan, Taiwan, and the United States—an indicator of India’s increasing integration into global value chains.

More notably, 1,500+ structured, on-ground B2B meetings were conducted during the event, many centred on supplier diversification, localisation strategies, and lead-time optimisation. These are areas that have moved to the top of boardroom agendas as companies reassess dependence on concentrated supply bases.

The current edition reflected a clear emphasis on capability building. Exhibitors pointed to growing interest in component manufacturing, automation, and supply chain resilience. Buyers, particularly from sectors such as automotive and consumer electronics, were seen evaluating domestic suppliers with an eye on long-term partnerships rather than short-term procurement.

Alongside the exhibition, a series of conferences provided a forum for more detailed engagement, aligning with the sector’s current priorities, addressing policy, supply chain resilience, automotive electronics, PCB manufacturing, and advanced production technologies. These themes were explored through platforms such as the UP Electronics Leadership Summit, the ELCINA Supply Chain Summit, the Automotive Display Conference by ICEA, the Bharat PCB Tech Conference, and the SMT Thought Leadership Summit.

The edition also emphasised innovation, featuring a Startup Pavilion supported by the Government of Uttar Pradesh and curated industry podcasts. A notable development was the launch of BPCA, Bharat’s dedicated platform for printed circuits and assemblies, introduced in collaboration with ELCINA and Messe Muenchen India.

Industry bodies such as ELCINA and ICEA were active participants, contributing to both conference discussions and closed-door industry interactions.

Rajoo Goel, Secretary General, ELCINA, stated, “The conversations at electronica India and productronica India 2026 reflected a clear shift in industry priorities. Localisation has moved beyond policy intent and is increasingly becoming a business imperative. The platform brought the value chain together in a way that enabled more practical discussions around strengthening component manufacturing capabilities and reducing external dependencies.”

Pankaj Mohindroo, Chairman, ICEA, added, “What stood out clearly was the growing maturity of India’s electronics ecosystem. We are now seeing a far stronger convergence between policy direction, industry investments, and supply chain strategies, an alignment that is critical for sustainable scale.

electronica and productronica India 2026 played an important role in advancing this momentum by enabling meaningful, direct engagement between global technology and component suppliers and Indian manufacturers who are expanding with long-term commitment and strategic intent.”

Voices from the floor

Participants indicated that the value of the platform lay in the quality of engagement rather than scale alone.

Tsuyuki Junichi – Division Head – Robotics Support Business Division, Yamaha Motor India Sales (P) Ltd said, “For Yamaha Motor India Sales (P) Ltd., electronica India and productronica India opened more meaningful conversations with teams that are actively planning their next phase of manufacturing growth.”

Mr. Narendra Savant – VP Operations, Kyoritsu Electric India Pvt Ltd stated, “What stood out for us at electronica India and productronica India 2026 in Greater Noida was the clarity of intent from buyers. They came with defined sourcing requirements, timelines, and technical expectations. We saw strong interest across diverse industrial manufacturers for our Customised Robotic + Automation Integrated solutions and our Made-in-India End-of-Line Testers, which aligns with where the Indian electronics manufacturing production needs are heading. For us, the value of this platform lies in the ability to engage with decision-makers who are actively evaluating long-term supply partnerships rather than short-term procurement.”

Raj Kumar Saini – Managing Director, Saini Communications Pvt Ltd, mentioned, “The quality of interaction has definitely gone up. We’re no longer talking about basic automation; most conversations are now around integration, efficiency, and how to scale operations. We’ve had interest coming in from multiple sectors, which is a good sign. You can see that manufacturers are thinking more long-term now, and that changes the kind of discussions you have.”

Visitors highlighted the ability to evaluate global and domestic suppliers within a single platform, enabling faster decision-making.

Robins N T – Head Strategic Sourcing & New Product Development, Simple Energy said, “We are actively looking at diversifying supply chains and increasing localisation, and the interactions here help accelerate that process. The level of preparedness among exhibitors has improved significantly, which makes it easier to move from evaluation to actual business discussions within a short span.”

Srinivasan Sampath – Head NPD Materials at Pricol Limited, said, “What worked well for us at electronica India and productronica India 2026 in Greater Noida was the ability to benchmark multiple suppliers side by side. It helped us compare capabilities, timelines, and approach in a very practical way. In a short span, we were able to narrow down options that would have otherwise taken months to evaluate.”

Anuj Kumar Srivastava – Global Head – Facilities, Secure Meters Ltd. mentioned, “We came in to understand how quickly suppliers in India are adapting to new requirements, especially around quality standards and turnaround times. The interactions here gave us a clearer picture of who is ready to scale and who we can work with as we expand and diversify our operations. Pleased to see focus on Make in India.”

The event also witnessed strategic announcements and MoUs, including expanded collaborations between international PCB and component associations and Indian industry bodies, aimed at strengthening supply chain capabilities and fostering technology exchange.

Dr Reinhard Pfeiffer, CEO, Messe München, said, “What is becoming evident is how quickly India is moving from being part of the conversation about the supply chain to influencing it. Companies no longer assess the country solely based on cost or scale; they are also looking at long-term manufacturing alignment. This shift is reflected in the discussions taking place at electronica India and productronica India 2026 in Greater Noida.”

Bhupinder Singh, President – IMEA, Messe München & CEO, Messe Muenchen India said, “electronica India and productronica India are increasingly reflecting what the industry is dealing with in real time, supply chain adjustments, localisation, and the need for reliable partners. The conversations here are more structured, more practical, and closely linked to actual business decisions, which is where their relevance comes from.”

Looking ahead

The second edition of electronica India and productronica India 2026 is scheduled to be held in Bengaluru from September 16 to 18, 2026, extending the platform’s reach into another major electronics manufacturing hub.

As the industry recalibrates in response to geopolitical and economic shifts, platforms such as these are likely to play a more central role. The question, increasingly, is not whether India will be part of the global electronics supply chain—but how large a role it intends to occupy.

Nuvoton Releases an Industry-Leading-Class High-Power Violet Laser Diode (402 nm, 4.5 W) 1.5 times higher output than our conventional product

Kyoto, Japan, April 15, 2026 – Nuvoton Technology announced today the start of mass production of a “high-power violet laser diode (402 nm, 4.5 W)” that achieves industry-leading class optical output in a 9.0 mm diameter CAN package (TO-9). This product achieves 1.5 times the optical output compared to our conventional product through our proprietary device structure and heat dissipation design technology, and contributes to improving production throughput in optical equipment such as maskless lithography systems. Furthermore, adding this product to our lineup enables our product portfolio to support major photosensitive materials used in advanced semiconductor packaging.

Achievements:

- Achieves 4.5 W high-power at 402 nm, 1.5 times that of our conventional product, enhancing production throughput in maskless lithography systems

- Expands our lineup of light sources for maskless lithography in advanced semiconductor packaging, supporting multiple major photosensitive materials

- Expands the lineup of mercury lamp replacement solutions, providing new options in light source selection

For more product details, please see here: https://nuvoton.co.jp/semi-spt/apl/rd/?id=1100-0272

Features of New Product:

- Achieves 4.5 W high-power at 402 nm, 1.5 times that of our conventional product, enhancing production throughput in maskless lithography systems

Violet (402 nm) laser diodes generally suffer from relatively low wall-plug efficiency and significant self-heating, and are also prone to short-wavelength-induced degradation, which makes stable high-power operation difficult.

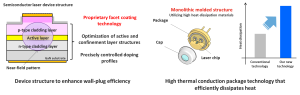

To address these challenges, the “device structure that enhances wall-plug efficiency (WPE)” and the “high thermal conduction package technology that effectively dissipates heat,” which were used in the high-power ultraviolet laser diode (379 nm, 1.0 W) announced as a new product in January 2026, have been expanded to the violet (402 nm) band.

As a result, we are launching a “high-power violet laser diode (402 nm, 4.5 W)” that achieves 1.5 times the optical output compared to our conventional product. In particular, by applying our proprietary facet coating technology that suppresses degradation factors at the laser facets, we have improved the lifetime performance during high-power operation, and by adopting a monolithic molded structure using high heat dissipation materials for the package, we have improved heat dissipation performance.

By achieving both “high-power” and “high reliability”, this product enhances production throughput in industrial optical equipment where high quality is required.

Figure 1: “Device structure that enhances wall-plug efficiency” and “High thermal conduction package technology that effectively dissipates heat”

- Expands our lineup of light sources for maskless lithography in advanced semiconductor packaging, supporting multiple major photosensitive materials

This product will deliver significant value in maskless lithography for advanced semiconductor packaging, a market that is rapidly growing, driven by expanding demand for artificial intelligence (AI) and other applications.

In circuit formation for advanced semiconductor packages, maskless lithography technology that directly exposes (draws) wiring patterns based on design data has been attracting attention in recent years, as it enables not only cost reduction and development period shortening, but also high-precision patterning correction in response to substrate warpage and distortion.

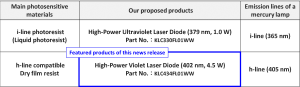

For laser diodes, which are one of the main light sources in this maskless lithography technology, there has been a demand for compatibility with wavelengths close to the i-line (365 nm) and h-line (405 nm), which are the emission lines of mercury lamps, in order to correspond to the main photosensitive materials, as well as higher output for the purpose of improving the production throughput of equipment.

In addition to the “high-power ultraviolet laser diode (379 nm, 1.0 W)” for i-line applications announced in January 2026, we are adding this new product, “high-power violet laser diode (402 nm, 4.5 W)”, for h-line applications to our lineup.

This expansion strengthens our lineup of light sources for maskless lithography in advanced semiconductor packaging, enabling consistent support for multiple major photosensitive materials while contributing to higher production throughput of equipment.

Table 1: Major Photosensitive Materials in Maskless Lithography for Advanced Semiconductor Packages and Our Proposed Products

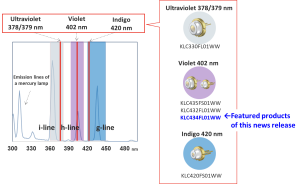

- Expands the lineup of mercury lamp replacement solutions, providing new options in light source selection

This product will be newly added to the lineup of our “semiconductor laser-based alternatives to mercury lamps.” The h-line (405 nm), which is an emission line of mercury lamps, is used in a wide range of fields such as photocuring, 3D printing, sensing, biomedical applications, and marking, and this product provides customers with a new option as an alternative light source for these applications.

Furthermore, by leveraging the high-power performance that is a feature of this product, it will contribute to improving the efficiency of processes that were difficult to realize in the past, as well as to the creation of new optical applications.

Figure 2: “Mercury Lamp Replacement Solution Using Semiconductor Lasers” Developed by Our Company

This product is scheduled to be exhibited at our booth at “OPIE’26” to be held in Yokohama, Japan.

Applications:

- Maskless lithography

- Resin curing

- Sensing

- Marking

- 3D printing

- Biomedical

- Alternative light source for mercury lamps, etc.

Product name:

KLC434FL01WW

Specifications:

| Parameter | Value |
| --- | --- |
| Part number | KLC434FL01WW |
| Wavelength | 402 nm |
| Optical Output Power | 4.5 W |
| Package Type | TO-9 CAN |

Start of mass production: May 2026

The post Nuvoton Releases an Industry-Leading-Class High-Power Violet Laser Diode (402 nm, 4.5 W) 1.5 times higher output than our conventional product appeared first on ELE Times.

Mission accomplished: Infineon technology proves reliable once again in space on Artemis II

- Infineon’s radiation-hardened semiconductors performed flawlessly on NASA’s Artemis II Orion capsule across ten days in space.

- Since the 1970s Infineon’s radiation-hardened technology has proven reliable across hundreds of space missions.

- With the world’s first JANS-qualified internally manufactured rad-hard gallium nitride (GaN) transistor, Infineon sets the benchmark for space semiconductors.

Munich, Germany – 15 April 2026 – NASA’s Artemis II mission has successfully returned to Earth after ten days in space, having approached the Moon and reached the farthest distance from our planet ever achieved by crewed spaceflight. The four astronauts have safely returned home, delivering renewed proof that the radiation-hardened (rad-hard) semiconductor solutions of Infineon Technologies AG (FSE: IFX / OTCQX: IFNNY) perform reliably even under the most extreme conditions of deep space. From critical power supply and control systems to data communications, Infineon’s rad-hard devices from its IR HiRel (high reliability) division supported the electronic backbone at the heart of the Orion capsule.

“Space programs require technologies and partners they can rely on for decades. Infineon is a critical technology partner, and we are proud to have once again contributed to the success of a historic space mission,” said Mike Mills, Senior Vice President and General Manager of IR HiRel at Infineon. “The space industry is evolving rapidly: more missions, more data, more electrification – while facing increasing pressure on size, weight and power consumption. In this equation, semiconductors are becoming a central focus in space. The fact that our components performed flawlessly from the first to the last minute of the Artemis II mission is no coincidence. It is the result of decades of engineering expertise, state-of-the-art qualification processes and a deep understanding of what semiconductors must deliver in space.”

Artemis II marks decades of space heritage for Infineon. As far back as the 1970s, Infineon’s predecessor companies supplied the first rad-hard components for NASA and ESA space programs. Since then, Infineon IR HiRel has supported hundreds of space missions including navigation satellites, the International Space Station (ISS), and today’s Artemis program. Our rad-hard components have traveled further than any other human-made object, over 20 billion kilometers from Earth. As a technology leader, Infineon continues to invest in, develop and manufacture the best performing rad-hard semiconductors supporting the space design community on a global scale.

The demands placed on semiconductors in space are immense. Beyond Earth’s protective magnetic field, high-energy particles strike electronic components unimpeded and can permanently damage or destroy them, causing mission failure. Infineon’s rad-hard technology addresses these mechanisms not through passive shielding, but through a semiconductor architecture that is radiation-resistant by design. All products are qualified to the most stringent international space standards, including MIL-PRF-38535 Class V, MIL-PRF-19500, ESA’s ESCC standards and NASA EEE-INST-002, ensuring their reliable performance.

At Infineon, innovation is developed at the system level: semiconductor technology, rad-hard assurance, and packaging perform together. An optimized overall system influences not only electrical performance, but also thermal behavior and long-term reliability – while simultaneously reducing weight and volume. Because every gram counts in space, Infineon’s rad-hard parts provide a decisive system-level advantage.

Wide-Bandgap technology: GaN takes the next step

Infineon is also advancing the use of new semiconductor materials in space applications. Gallium nitride (GaN) enables lower switching losses, higher power density and higher switching frequencies – reducing power losses and magnetic component requirements, which translates directly into further weight savings. Built on internal manufacturing capabilities and the process and quality stability that come with them, Infineon’s award-winning rad-hard 100-V GaN transistor, qualified to JANS (Joint Army Navy Space) per MIL-PRF-19500, brings GaN from concept to proven technology for demanding space missions. Infineon’s JANS-qualified device is the first and only internally manufactured rad-hard GaN transistor on the market.

Infineon offers a broad rad-hard portfolio spanning Si power MOSFETs and GaN transistors, gate drivers and solid-state relays, in addition to rad-hard memories and radio frequency (RF) devices. Backed by in-house radiation testing capabilities and guaranteed long-term product availability, Infineon positions itself not merely as a component supplier, but as a strategic technology partner for the entire space industry.

The post Mission accomplished: Infineon technology proves reliable once again in space on Artemis II appeared first on ELE Times.

Bosch and Qualcomm expand collaboration to strategic ADAS solutions

Cockpit Computers: 10 million units delivered

• High-performance solutions: Bosch and Qualcomm aim to make ADAS solutions for enhanced safety and comfort available to everyone

• Continued Business Momentum: Collaboration has secured significant new business wins for both next-generation ADAS and cockpit solutions

• Proven Global Success: Bosch delivers over 10 million cockpit computers powered by Snapdragon Cockpit Platforms

• Global Market Penetration: Deliveries span all vehicle segments from entry to premium, serving both regional and global automakers

Stuttgart, Germany / San Diego, USA – Bosch and Qualcomm Technologies, Inc. announced today that they are expanding their strategic partnership, which has focused on vehicle computers for cockpit solutions, to also include ADAS solutions. Together, Bosch and Qualcomm Technologies are helping address one of the industry’s most pressing needs – scaling intelligent vehicle technology to meet growing consumer demand for vehicles that are automated, connected, and highly personalized. The companies also highlighted a significant milestone in their longstanding collaboration: Bosch has developed and delivered more than 10 million vehicle computers based on Qualcomm Technologies’ Snapdragon Cockpit Platforms for the global automotive market.

“By combining leading-edge compute technology with our system integration expertise – hardware, software, and safety – we enable automakers to meet the rising demand for personalized, safe, and comfortable driving experiences,” said Christoph Hartung, Member of the Bosch Mobility business sector board, Chief Technology Officer for Systems, Software, and Services, and President of the division Cross-Domain Computing Solutions.

“The growing success of our collaboration with Qualcomm Technologies underlines a central value Bosch brings to the industry: we provide the robust, high-performance computing platforms that form the backbone of today’s software-defined vehicle,” Hartung added.

“Our collaboration with Bosch spans the full spectrum of vehicle compute – from high‑performance cockpit systems to scalable automated driving solutions and emerging centralized vehicle architectures – all powered by Snapdragon Digital Chassis automotive platforms,” said Nakul Duggal, EVP and Group GM, Automotive, Industrial and Embedded IoT, and Robotics, Qualcomm Technologies, Inc.

“ADAS is where performance and safety must scale in the real world. By expanding our work with Bosch into production-ready ADAS platforms, we’re helping automakers bring advanced driver assistance across vehicle lines more efficiently, with a clear path to centralized compute,” Duggal added.

Building on this momentum, the companies are extending their collaboration through new ADAS production programs. These programs leverage Bosch’s cost-optimized vehicle computer architecture, powered by Qualcomm Technologies’ Snapdragon Ride platform, to support practical and scalable ADAS deployments. The collaboration also includes purpose-built combined cockpit and ADAS platforms supporting mixed criticality applications delivered on a single system-on-chip, unique to Snapdragon Ride Flex SoCs, aligning with automakers’ software-defined vehicle strategic initiatives. At the core of these programs is the Bosch ADAS integration platform – a scalable, modular vehicle computer designed for ADAS functions. With high bandwidth, computing power, and memory management, it meets strict safety and security standards, fuses multiple sensor technologies for a precise 360° environment model, and runs complex algorithms to deliver safe, dynamic vehicle behavior – even at high speeds.

The Next Frontier: Jointly Engineering the Future of ADAS

Bosch and Qualcomm Technologies’ joint approach is delivering scalable, cost-optimized vehicle computers with ADAS solutions that have secured multiple global customer design wins in the East Asian market. These joint efforts provide automakers with critical flexibility and a clear migration path to centralized computing architectures featuring a small number of highly powerful vehicle computers instead of many individual control units. Powered by the scalable Snapdragon Ride Platform from Qualcomm Technologies, Bosch’s vehicle computers support a broad range of configurations – from entry-level ADAS, such as speed and distance regulation or lane keeping, to advanced automated driving systems. The first vehicles from these new business wins are expected on the road in 2028.

In addition, ADAS and cockpit solutions can also be consolidated onto a single platform to give automakers even greater flexibility and reduce architectural complexity. To this end, Bosch and Qualcomm Technologies are also working on solutions using existing products. Snapdragon Ride Flex builds on this foundation by enabling the consolidation of cockpit and ADAS functions onto a single, safety-certifiable SoC, reducing system complexity, power consumption, and cost while giving automakers a path toward centralized compute architectures. Bosch’s cockpit and ADAS integration platform combines the system functions for assisted and automated driving and infotainment, such as personalized navigation and voice assistance, in one high-performance computer.

Both the ADAS and cross-domain computing solutions are designed to meet stringent safety requirements (up to ASIL-D) while reducing complexity and cost. For drivers, this means greater access to advanced Level 2 driving features like lane keeping, hands-free driving, and intelligent automated parking.

A story of successful collaboration: defining the modern digital cockpit

The collaboration between Bosch and Qualcomm Technologies is redefining the modern digital cockpit by serving the full spectrum of both the regional and global automotive market across North America, Asia, and Europe. This approach has driven exponential growth since first deliveries began in 2021, scaling from one million units in 2023 to ten million in less than three years, fueled by successful program awards with vehicle manufacturers worldwide. The delivery milestone underscores the companies’ shared ability to industrialize advanced automotive technologies at a global scale for the software-defined vehicle era, spanning entry-level to premium vehicles. The success is rooted in Bosch’s flexible and scalable approach, leveraging Snapdragon Cockpit Platforms. The Bosch cockpit integration platform can drive an increasing number of in-vehicle displays and camera inputs. Qualcomm Technologies’ Snapdragon Cockpit Platforms combine high-performance compute with power-efficient design to enable a wide range of vehicle experiences. These range from crisp, essential displays in cost-optimized systems to premium systems featuring ultra-low-latency HMI responsiveness, multi-display configurations, immersive multimedia, AI-powered conversational voice assistance, and higher levels of personalization – while maintaining efficiency across vehicle segments.

The post Bosch and Qualcomm expand collaboration to strategic ADAS solutions appeared first on ELE Times.

Gartner Forecasts Worldwide Semiconductor Revenue to Exceed $1.3 Trillion in 2026

- Semiconductor Revenue to Grow 64% in 2026

- DRAM Prices to Increase by 125% in 2026 and Storage Crisis to Extend into 2027