ELE Times

Everspin Launches New Generation of Unified Memory for Embedded Systems

Everspin Technologies, a leading developer and manufacturer of magnetoresistive random access memory (MRAM) persistent memory solutions, today announced the UNISYST MRAM family, a new generation of unified memory designed to fundamentally change how embedded systems store and access code and data.

“System designers are running into the physical and performance limits of NOR flash, especially as process nodes move below 40 nanometers and workloads become more demanding,” said Sanjeev Aggarwal, president and CEO of Everspin Technologies. “With UNISYST, we are extending our MRAM roadmap to higher densities while giving customers a practical way to start with PERSYST today and migrate to a code-and-data MRAM architecture as soon as it is available.”

UNISYST is a unified code-and-data MRAM architecture that bridges traditional configuration memory and higher-density persistent storage, extending MRAM into traditional NOR flash applications where superior performance, endurance and reliability are valued. Built as a natural extension of Everspin’s existing PERSYST MRAM platform, UNISYST gives customers a practical, simple migration path from today’s serial MRAM devices to higher-density unified memory without requiring changes to system architecture or software.

Everspin will initially offer the UNISYST family in densities ranging from 128 megabits to 2 gigabits, using a standard xSPI interface operating up to octal SPI at 200 MHz. The devices are planned to feature AEC-Q100 Grade 1 qualification and minimum 10-year data retention at extreme temperatures, supporting demanding environments across automotive, aerospace, industrial and edge AI applications.

“As generative AI models move from the cloud to embedded systems, we’re suddenly dealing with assets that are tens or even hundreds of megabytes in size,” said Kwabena W. Agyeman, President and Co-founder of OpenMV. “Storing those models is only part of the challenge — updating them quickly during development and deployment is equally important. High-speed, non-volatile Everspin UNISYST MRAM changes what’s practical for edge AI systems by removing the write bottlenecks associated with traditional flash.”

UNISYST delivers high-bandwidth read and write speeds in a non-volatile memory device, enabling fast boot, rapid updates and predictable performance without the tradeoffs of traditional flash-based designs. By combining high-speed access with persistent storage, UNISYST supports software-defined systems that require frequent reconfiguration while maintaining data integrity across power cycles.

Everspin MRAM has been deployed in mission-critical storage applications for nearly two decades, valued for its endurance and reliability. UNISYST builds on Everspin’s proven MRAM foundation with capabilities designed to support more complex, software-defined systems:

- Code-and-data MRAM architecture designed as a next-generation alternative to other non-volatile memory

- Standard xSPI interface operating up to octal SPI at 200 MHz

- Read bandwidth of up to 400 MB/s and write bandwidth of approximately 90 MB/s, over 400 times faster than NOR flash

- Write endurance up to 10 times higher than typical NOR

- AEC-Q100 Grade 1 qualification and minimum 10-year data retention for high-reliability designs
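As a rough sanity check on the interface and bandwidth figures listed above, the sketch below computes the peak raw bus bandwidth of an octal interface clocked at 200 MHz and estimates how long an image would take to write at roughly 90 MB/s. It assumes double-data-rate transfers, which the announcement does not state, and it ignores command, address and dummy-cycle overhead. The function names and the example image sizes are illustrative, not Everspin's.

```python
def xspi_peak_bandwidth_mb_s(data_lines: int, clock_mhz: float, ddr: bool = True) -> float:
    """Peak raw bus bandwidth in MB/s, ignoring command and address overhead."""
    transfers_per_clock = 2 if ddr else 1  # assumption: DDR moves data on both clock edges
    bits_per_second = data_lines * transfers_per_clock * clock_mhz * 1e6
    return bits_per_second / 8 / 1e6


def write_time_seconds(size_mb: float, write_mb_s: float) -> float:
    """Time to write an image of size_mb at a sustained write rate of write_mb_s."""
    return size_mb / write_mb_s


if __name__ == "__main__":
    peak = xspi_peak_bandwidth_mb_s(data_lines=8, clock_mhz=200, ddr=True)
    print(f"Octal DDR at 200 MHz: {peak:.0f} MB/s peak")
    for size_mb in (10, 100):  # hypothetical model sizes in megabytes
        seconds = write_time_seconds(size_mb, write_mb_s=90)
        print(f"{size_mb} MB image at 90 MB/s: {seconds:.2f} s")
```

Under the double-data-rate assumption the peak works out to 400 MB/s, which is consistent with the quoted read bandwidth, and a 100 MB image would take a little over one second to write at the quoted write rate.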

UNISYST is aimed at applications where non-volatile memory must combine high bandwidth, high endurance and predictable behaviour over temperature and time. Target use cases include:

- AI at the edge: Fast AI weight updates, critical edge storage and local code-and-data storage for workloads that need fast boot, rapid reconfiguration and non-volatile operation close to the sensor. The ability to execute in place removes the need for multiple system memories

- Military and aerospace: Field-programmable gate array (FPGA) configuration and code storage for mission-critical systems, including low-Earth orbit satellites and other platforms that require frequent over-the-air updates

- Automotive: Control, logging and configuration memory in systems that must meet Grade 1 temperature requirements and long-term data retention

- Industrial and casino gaming: High-traffic data logging and configuration in environments that demand fast writes, long endurance and persistent storage

The launch of UNISYST represents a platform-level expansion of Everspin’s MRAM portfolio, extending the company’s role from a niche memory supplier to a mainstream memory player serving a multibillion-dollar market. By unifying code storage and data memory, Everspin is addressing the growing demands of software-defined systems that require faster boot times, frequent updates and predictable behaviour over long operating lifetimes.

The post Everspin Launches New Generation of Unified Memory for Embedded Systems appeared first on ELE Times.

TI’s microcontroller portfolio and software ecosystem expanded to enable edge AI in every device

Texas Instruments (TI) introduced two new microcontroller (MCU) families with edge artificial intelligence (AI) capabilities, supporting the company’s commitment to enabling edge AI across its entire embedded processing portfolio. The MSPM0G5187 and AM13Ex MCUs integrate TI’s TinyEngine neural processing unit (NPU), a dedicated hardware accelerator for MCUs that optimises deep learning inference operations to reduce latency and improve energy efficiency when processing at the edge.

TI’s embedded processing portfolio is supported by a comprehensive development ecosystem, including the CCStudio integrated development environment (IDE). Its generative AI features allow engineers to use simple language to accelerate code development, system configuration and debugging through industry-standard agents and models paired with TI data. Altogether, TI is accelerating the adoption of edge AI across electronic devices, from real-time monitoring in wearable health monitors and home circuit breakers to physical AI in humanoid robots. These end-to-end innovations are featured in TI’s booth at embedded world 2026, March 10-12, in Nuremberg, Germany.

“TI invented the digital signal processor almost 50 years ago, laying the groundwork for today’s edge AI processing,” said Amichai Ron, senior vice president, Embedded Processing and DLP® Products at TI. “Now TI is leading the next phase of innovation by integrating the TinyEngine NPU across our entire microcontroller portfolio, including general-purpose and high-performance, real-time MCUs. By enabling AI across our software, tools, devices and ecosystem, we are making edge AI accessible and easy to use for every customer and every application.”

“While much of the world has been focused on AI acceleration and NPUs in bigger SoCs, it turns out some of the more interesting and far-reaching applications of AI can be enabled inside smaller chips like microcontrollers,” said Bob O’Donnell, President and Chief Analyst at TECHnalysis Research. “Edge-based applications of AI acceleration can make consumer devices more intelligent and industrial devices more efficient. Plus, if you can combine these chips with software development tools that themselves leverage AI to help build AI features, you bring the power of AI acceleration to a significantly wider audience of engineers and device designers.”

Advanced intelligence at your fingertips

Consumers are always looking for everyday technology to be more intelligent, from fitness wearables to home appliances and electrical systems. However, many engineers believe that AI capabilities are limited to higher-end applications due to high costs, power demands, and coding requirements. TI’s new MSPM0G5187 Arm Cortex-M0+ MSPM0 MCU represents a fundamental shift for embedded designers, who can now bring edge AI to a wide range of simpler, smaller and more cost-effective applications.

With local computation, the TinyEngine NPU executes the computations required by neural networks in parallel with the primary CPU running application code. Compared to similar MCUs without an accelerator, this hardware acceleration:

- Minimises the flash memory footprint.

- Lowers latency by up to 90 times per AI inference.

- Reduces energy utilisation by more than 120 times per AI inference.

Such levels of efficiency allow resource-constrained devices – including portable, battery-powered products – to process AI workloads. At under US$1 in 1,000-unit quantities, the MSPM0G5187 MCU reduces system and operating costs by offering an affordable alternative to other MCU or processor architectures.

Real-time control plus AI acceleration for multimotor systems

Motor control applications in appliances, robotics and industrial systems increasingly call for intelligent features such as adaptive control and predictive maintenance, but implementing these capabilities has historically required complex, multi-chip designs. Building on over two decades of motor control leadership through the C2000 real-time MCU portfolio, TI’s new AM13Ex MCUs are the industry’s first to integrate a high-performance Arm Cortex-M33 core, TinyEngine NPU and advanced real-time control architecture into a single chip.

This degree of integration enables designers to implement sophisticated motor control and AI features simultaneously without external components, lowering bill-of-materials costs by up to 30%. Key enhancements include:

- The ability to maintain precise real-time control loops for up to four motors while the TinyEngine NPU runs adaptive control algorithms for load sensing and energy optimisation.

- An integrated trigonometric math accelerator that performs calculations 10 times faster than coordinate rotation digital computer (CORDIC) implementations, delivering more precise, responsive motor-control performance.

Easily train, optimise and deploy AI models

Both MCU families are supported by TI’s CCStudio Edge AI Studio, a free development environment that simplifies model selection, training and deployment across TI’s embedded processing portfolio. This edge AI toolchain gives engineers full flexibility to run AI models on TI MCUs through either hardware or software implementations. Today, there are more than 60 models and application examples available in the tool to help developers start deploying edge AI in any device, with additional tasks and models planned in the future.


R&S to showcase future-proof EMC testing solutions at EMV 2026

Rohde & Schwarz will participate in EMV 2026, Europe’s premier trade fair and congress dedicated to electromagnetic compatibility, held from March 24-26 in Cologne. At the event, which serves as a crucial platform for industry professionals, the company will show its latest advancements in test & measurement equipment to address the evolving challenges within the EMC landscape.

Rohde & Schwarz will demonstrate a broad portfolio of solutions designed to streamline and optimise EMC testing across diverse sectors, including power electronics, consumer, industrial, automotive, Satcom, military, and wireless communications. EMC testing is evolving to meet the demands of emerging technologies and a crowded radio frequency spectrum. Innovations like AI, 6G, and quantum computing present new challenges for ensuring reliable performance, while widespread electrification and increased bandwidth requirements necessitate testing at higher frequencies. To address these shifts, Rohde & Schwarz is developing scalable and modular test solutions focused on repeatable, reliable measurements – streamlining the path from initial assessment to final certification. A further focus is on bridging the gap between real-world field performance and laboratory testing.

At the show, Rohde & Schwarz will showcase a versatile and adaptable solution for conducted and radiated emission testing with the EMI test receivers R&S EPL1001 and R&S EPL1007 with frequency ranges up to 1 GHz and 7.125 GHz. These receivers provide a scalable approach to EMC testing, allowing users to select the optimal configuration for their needs, whether for efficient pre-compliance measurements or fully CISPR 16-1-1 compliant testing for certification.

Rohde & Schwarz is showcasing a speed-optimised EMI test with its industry-leading R&S ESW test receiver — with ESW-B1000R 970 MHz bandwidth extension — and the automated R&S ELEKTRA software: A live demonstration highlights the system’s capabilities for rapid and detailed device characterisation with 3D emission plots generated by R&S ELEKTRA for a typical commercial EMI test. Complementing this is the R&S HF1444G14 high-gain antenna, extending testing capabilities up to 44 GHz for standards like MIL-STD and FCC.

Rohde & Schwarz will also be expanding its R&S BBA300 family of broadband amplifiers with its new dual-band amplifier series R&S BBA300-CDE/FG for 380 MHz to 13 or 18 GHz and the R&S BBA300-DE1000 with an output power of up to 1000 W in the 1 GHz to 6 GHz range. With high linearity, continuous and very wide frequency bands, and innovative protection concepts for high availability, the R&S BBA300 family meets the requirements for EMC immunity testing today and tomorrow.

Rohde & Schwarz will also show its full vehicle antenna test (FVAT) capabilities at the show. Modern vehicles increasingly rely on multiple antennas – for GNSS, Wi-Fi, cellular services like C-V2X, and more to enable safety, convenience and infotainment features – requiring comprehensive full-vehicle antenna testing. This testing enables vehicle manufacturers and their suppliers to characterise radiation performance, verify RF robustness, ensure co-existence of different wireless technologies and ultimately validate the functions and services enabled by wireless connectivity.

For in-depth signal analysis, Rohde & Schwarz will feature the R&S MXO 3 Series oscilloscope, boasting an unmatched acquisition rate exceeding 4.5 million waveforms per second and featuring up to 8 channels. This advanced oscilloscope also includes powerful standard functions such as a very fast FFT and zone trigger capabilities that empower engineers to quickly and precisely understand complex circuit behaviour, essential for effective EMI troubleshooting and design optimisation.

Rohde & Schwarz will also actively contribute to the congress with technical sessions, workshops and demos focusing on EMI test speed optimisation, EMC for medical products and closed-loop reverberation chamber testing. Attendees can also join a panel discussion exploring the impact of artificial intelligence on the EMC landscape, covering its current benefits and potential future challenges. Among the panellists, a Rohde & Schwarz expert will discuss AI’s role in areas like testing and development, and address concerns about new vulnerabilities.


Infineon extends leadership position in global microcontroller market

Infineon Technologies further extends its number one position in the global microcontroller market. According to the latest research by Omdia [1], the company increased its total microcontroller market share to 23.2 per cent in 2025 (2024: 21.4 per cent), achieving a year-on-year gain of 1.8 percentage points – the largest increase among its competitors. Notably, this market share gain was achieved against the backdrop of a slightly declining microcontroller market (-0.3 per cent).

“This great market result reflects our relentless commitment to accelerating innovation for customer value, outstanding system solutions, and strong customer relations,” said Andreas Urschitz, Chief Marketing Officer and Member of the Management Board at Infineon. “With our superior product portfolio, reliable software, and easy-to-use development tools, we help our customers create value and address the global challenges of decarbonization and digitalisation. Outgrowing the market is a direct outcome of our continued investment in technology and our close collaboration with our partners worldwide.”

Ethernet to enhance microcontroller business for software-defined vehicles

Infineon climbed to the top spot in the global microcontroller market for the first time in 2024, having become number one in the automotive microcontroller market a year earlier. The company’s leading market position will be further strengthened by the successful acquisition of Marvell’s Automotive Ethernet business, a milestone transaction completed in August 2025. This move expands Infineon’s cutting‑edge connectivity portfolio, enhancing the company’s system capabilities for central compute architectures in software-defined vehicles (SDV). Integrating the industry-leading BRIGHTLANE automotive Ethernet portfolio with Infineon’s AURIX, PSOC and TRAVEO automotive microcontroller families creates an unmatched system offering for SDVs, enabling features such as autonomous driving, advanced driver‑assistance systems, and secured over‑the‑air updates.

Infineon microcontrollers empower physical AI, such as humanoid robots

Furthermore, the acquisition opens additional growth opportunities in emerging IoT fields and physical AI, such as humanoid robotics. AURIX, PSOC and MOTIX microcontrollers from Infineon empower humanoid robots to safely perceive, think, and interact with their environment in real-time, facilitating advanced computing, smart actuation and motor control, connectivity, and intelligent edge functions.

Infineon enables the key functional blocks in humanoid robots, supporting customers from concept to mass production across industrial, service, and home applications. With its PSOC portfolio, Infineon continues to expand its presence in industrial and consumer markets, offering scalable, secure, and power‑efficient microcontroller solutions widely used in smart home systems, industrial control equipment and connected IoT devices.

Cybersecurity features for future requirements are already implemented today

From IoT devices to connected vehicles, industrial infrastructure, AI‑driven applications, and robotics, cybersecurity is essential. Therefore, Infineon microcontrollers are engineered with future-proof security in mind to protect data, identities and systems from the start and across the entire lifecycle. This includes, for example, complying with international security standards such as ISO/SAE 21434 (automotive security) for the latest generation AURIX and TRAVEO MCUs. Furthermore, Infineon already engineers architectures that meet future requirements, such as those of the EU Cyber Resilience Act or post-quantum cryptography, for example in the latest PSOC products for industrial and consumer applications, as well as in AURIX and TRAVEO automotive MCUs.

Infineon at embedded world 2026: Showcasing future-ready innovations

From 10 to 12 March 2026, at embedded world in Nuremberg, Germany, Infineon is presenting its comprehensive portfolio of industrial, consumer and automotive microcontrollers, with a strong focus on innovation for secured, connected, and intelligent systems. Visitors can experience this at Infineon’s booth (Hall 4A, Booth 138) and through a series of presentations and live demos.

[1] Based on or includes research from Omdia: Annual 2001-2025 Semiconductor Market Share Competitive Landscaping Tool – 4Q25. March 2026. Results are not an endorsement of Infineon Technologies AG. Any reliance on these results is at the third party’s own risk.


Traction Inverter: Keys to understanding the inverter, the traction, and why X-in-1 solutions are increasingly popular

Courtesy: STMicroelectronics

Traction inverters are at the heart of electric vehicles, meaning that they are one of the modules with the most significant impact on overall efficiency, range, and performance. According to the US Department of Energy, the electric drive system is responsible for some of the most significant losses in an EV, totalling about 18%. Moreover, a report by McKinsey & Company explains that the “top reasons” for consumers to avoid EVs are costs, charging concerns, and range anxiety, two of which are mainly impacted by the traction inverter’s performance. Optimising the electric drive train is thus the quickest and surest way to make an EV more compelling, which is why ST recently published a white paper on traction inverters.

Why are traction inverters challenging?

The role of a traction inverter

In a nutshell, the traction inverter takes the DC electrical energy from the battery, converts it into properly commutated three-phase alternating current, and sends it to a traction motor, which then converts it into kinetic energy. Consequently, the traction inverter is also responsible for modulating the AC sent to the motors to adjust for things like torque and speed. Similarly, regenerative braking, which converts mechanical energy into DC power to recharge the battery, also depends on the traction inverter. Hence, the responsiveness that drivers love in their EVs, as well as the ability of certain driving features to extend the overall range, depends on the performance of the traction inverter, among other things.
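To make the DC-to-three-phase conversion more concrete, here is a minimal sketch of sinusoidal PWM, one textbook way of deriving the duty cycle of each inverter leg from an electrical angle. It is not taken from the white paper; the function name and the modulation index parameter are illustrative, and production inverters often use other schemes such as space-vector modulation.

```python
import math


def three_phase_duties(angle_rad: float, modulation_index: float) -> tuple:
    """Sinusoidal PWM duty cycles (0..1) for phases A, B and C.

    angle_rad is the electrical angle; modulation_index (0..1) scales the
    output amplitude relative to the DC-link voltage.
    """
    if not 0.0 <= modulation_index <= 1.0:
        raise ValueError("modulation_index must be between 0 and 1")
    shift = 2.0 * math.pi / 3.0  # the three phases are 120 degrees apart
    return tuple(
        0.5 + 0.5 * modulation_index * math.cos(angle_rad - k * shift)
        for k in range(3)
    )


if __name__ == "__main__":
    # Sweep one electrical revolution in 60-degree steps.
    for step in range(6):
        angle = step * math.pi / 3.0
        a, b, c = three_phase_duties(angle, modulation_index=0.8)
        print(f"{math.degrees(angle):5.0f} deg  A={a:.3f}  B={b:.3f}  C={c:.3f}")
```

Raising the modulation index increases the AC amplitude, and advancing the angle faster increases the output frequency, which is how such a scheme would adjust torque and speed.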

The challenges behind the traction and the inversion

While most two-wheel-drive vehicles will have one or two inverters, an all-wheel drive may have up to one inverter per traction motor and one traction motor per wheel. It all depends on how car makers want to address the car’s overall performance. Hence, it’s easy to see some of the challenges that engineers must solve when designing a traction inverter that must not only convert electrical energy but also sense phase current, monitor motor position, and even manage control loops. While many engineers focus on the “inverter”, “traction” comes with a unique set of challenges, such as determining the rotor’s position with precision; without it, the whole traction inverter will be grossly inefficient.

Moreover, as EVs increasingly support high-power DC charging, they come with higher DC-link voltages, which means the traction inverter must adapt to reduce losses while enabling traction motors to draw more power. It’s a great example of how modern car modules are highly interdependent and how changing one aspect of the vehicle has ripple effects on many other systems and modules. As the white paper shows (see Table 3), there’s a strong “correlation between motor power, battery size, and DC link voltage.” Put simply, engineers can’t design traction inverters in isolation but must take a more global approach or risk seriously hampering performance due to a poorly suited system.

How to find solutions and design great traction inverters?

Choosing the right gate drivers

To answer these challenges, the white paper aims to provide key concepts and solutions engineers can apply to their designs. For instance, it looks at how to use gate drivers and power transistors to modulate the current in stator windings. Too often, teams treat these devices as commodities and miss the critical impact they may have on their traction inverters. However, a mismatch between the transistors and gate drivers will result in significantly higher losses, among other things. It’s why a galvanically isolated driver for IGBT and SiC MOSFETs, like the STGAP4S, can make a tremendous difference. ST even offers an evaluation board, the EVALSTGAP4S, which significantly hastens the development of a proof of concept.

Finding the right microcontroller

Another challenge is the ability to control the traction motors with enough precision and speed to improve the EV’s performance. Such a feat is directly tied to the microcontroller that will house the PWM timers and the logic responsible for calculating the field-oriented control mechanisms, among other functions. Using the wrong device will not only hinder performance but also create critical problems that cannot be fixed easily unless the platform supports things like over-the-air updates, the highest levels of functional safety, and more. ST is already offering MCUs tailored for EV applications, like the new Stellar E series and evaluation boards like the SR5E1-EVBE5000P.
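For readers unfamiliar with field-oriented control, the sketch below shows the two textbook coordinate transforms at its core: the Clarke transform (three phase currents to a two-axis stationary frame) and the Park transform (stationary frame to a frame rotating with the rotor). It is a generic illustration rather than ST's implementation, and the function names and the 100 A test current are assumptions made for the example.

```python
import math


def clarke(ia: float, ib: float, ic: float) -> tuple:
    """Amplitude-invariant Clarke transform: phase currents to (alpha, beta)."""
    alpha = (2.0 * ia - ib - ic) / 3.0
    beta = (ib - ic) / math.sqrt(3.0)
    return alpha, beta


def park(alpha: float, beta: float, theta: float) -> tuple:
    """Park transform: rotate (alpha, beta) by the rotor electrical angle theta."""
    d = alpha * math.cos(theta) + beta * math.sin(theta)
    q = -alpha * math.sin(theta) + beta * math.cos(theta)
    return d, q


if __name__ == "__main__":
    amplitude = 100.0  # hypothetical phase-current amplitude in amperes
    theta = math.radians(40.0)  # rotor electrical angle
    shift = 2.0 * math.pi / 3.0
    ia = amplitude * math.cos(theta)
    ib = amplitude * math.cos(theta - shift)
    ic = amplitude * math.cos(theta + shift)

    d, q = park(*clarke(ia, ib, ic), theta)
    print(f"exact angle:    d={d:.2f}  q={q:.2f}")

    # The same currents seen with a 5-degree rotor-position error.
    d_err, q_err = park(*clarke(ia, ib, ic), theta + math.radians(5.0))
    print(f"5 deg error:    d={d_err:.2f}  q={q_err:.2f}")
```

With the exact angle, the balanced sinusoidal currents collapse into constant d and q values that a controller can regulate. With an angle error, part of the current appears on the wrong axis, which illustrates why precise rotor position sensing matters so much for efficiency.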

Adopting the X-in-1 trend

And the white paper contains so many more solutions, tips, and expert advice. As ST offers a unique and wide-ranging portfolio of devices that can directly improve traction inverters, the paper also helps engineers anticipate a new trend: X-in-1. Increasingly, we see makers coming up with integrated systems that include the on-board charger, DC-DC converter, and traction inverter. Since these systems impact one another, integrating them helps create a more meaningful and intentional design. However, that means engineers must widen their expertise and rely on a portfolio that includes a broader range of devices.


5 Upcoming AIoT Trends to Look Out for in 2026

Courtesy: Hikvision

As we enter 2026, the convergence of artificial intelligence (AI) and IoT infrastructure is reshaping industries, unlocking unprecedented opportunities to optimise operations, enhance security, and improve sustainability. Yet with great technological power comes great responsibility, and the AIoT industry is increasingly focused on ensuring AI develops in ways that are safe, ethical, and beneficial to all. Here are the five key trends shaping the AIoT landscape in 2026.

Scenario-based AIoT solutions are rapidly unlocking new business value

Thanks to AIoT, we are witnessing a profound digital shift moving beyond basic IT informatisation to deep integration with Operational Technology (OT). In this transition, business value is no longer created by fragmented data collection, but increasingly by harvesting insights naturally and continuously from daily operations. By embedding perception capabilities into specific real-world scenarios, AIoT is enabling organisations to move from manual management to much more agile, automated control.

This is creating operational capabilities that were once impossible, enabling real-time decision-making, which can rapidly deliver new business value. In the field of industrial safety, for example, we see workshops shifting from reactive response to proactive prevention. Hazardous manual inspections are being replaced by advanced spectral technologies such as TDLAS, which remotely detect natural gas leaks in seconds. The result is a dramatic reduction in response times to emergency situations.

It’s a similar story with quality control. Food manufacturers, for example, are now leveraging AI-driven X-ray systems to instantly identify foreign objects like stones, glass, and bone that were once invisible.

Or consider inventory management, where mining and feed plants are now utilising 3D millimetre-wave radar to automatically scan silos. This is yet another application of AIoT that, in this case, is creating a new level of precision in volumetric data, eliminating human error, and enabling fully automated, real-time control.

Large-scale AI models are evolving into new capabilities for “AI+”

Large-scale AI models are empowering the core analysis and processing flow through “AI+” integration. While large language models have revolutionised human-digital interaction, industry-specific models are now reshaping how IoT data interacts with the physical world.

We can already see that by embedding AI into data analysis and signal processing, these models significantly enhance precision and efficiency. For example, traffic and perimeter security models, trained on massive datasets, are pushing the limits of perception. By processing complex data, they minimise false alarm rates for incidents and intrusions. Meanwhile, in audio sensing, “AI+ signal processing” is redefining audio capture by filtering background static and isolating human voices in noisy environments. This technology improves the signal-to-noise ratio, ensuring clear sound pickup even in challenging conditions.

Deeply anchored in this multi-modal understanding, AI Agents are now bridging the gap between perception and human intent. Powered by large language models, these agents enable users to communicate naturally using everyday language. Commands like “Find the person wearing purple clothes who parked a blue SUV this morning” are processed by intelligent security systems to automatically retrieve relevant video segments. Such capabilities are transforming AIoT systems from specialised tools that require professional training into intelligent assistants that are accessible to everyone.

Edge AI is transforming devices from data collectors to intelligent analysers

Another shift we are seeing is towards edge computing. Increasingly, the “Cloud + AI” model is no longer the only option for enterprise digitalisation. By moving AI functions from the cloud to the edge, organisations can achieve millisecond-level response times, operate seamlessly offline, and maintain on-premises privacy. It’s an architectural shift that eliminates bandwidth dependency and significantly reduces infrastructure overhead.

Because devices process raw data directly, this localised architecture extends its value by greatly optimising storage efficiency. This is particularly significant for complex video analysis, powered by visual AI models. Here, edge devices can now precisely identify key targets such as people or vehicles at the source. Based on this accurate segmentation, the system applies differentiated encoding—preserving critical foreground details, while compressing background areas that contribute little investigative value.

This AI-driven approach drastically reduces storage requirements without sacrificing visual clarity. For organisations deploying thousands of cameras across multiple sites, this naturally translates into substantial savings on storage infrastructure, lower ongoing costs, and simplified data management, making large-scale AIoT deployments economically viable.
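The differentiated encoding described above can be pictured as a per-block quality map handed to a video encoder. The sketch below is a simplified illustration under stated assumptions, not Hikvision's implementation: detections arrive as pixel bounding boxes, the frame is divided into 16-pixel blocks, and each block is given a quantisation parameter (QP), where a lower QP means finer quantisation and more preserved detail. The function name and the QP values 22 and 38 are hypothetical.

```python
def block_qp_map(rows, cols, boxes, fg_qp=22, bg_qp=38, block=16):
    """Build a rows x cols grid of QP values for one frame.

    boxes holds (x0, y0, x1, y1) pixel rectangles for detected targets, with
    x1 and y1 exclusive. Blocks overlapping any box get fg_qp (more detail);
    all other blocks get bg_qp (stronger compression).
    """
    grid = [[bg_qp] * cols for _ in range(rows)]
    for x0, y0, x1, y1 in boxes:
        for r in range(rows):
            for c in range(cols):
                overlaps_x = x0 < (c + 1) * block and x1 > c * block
                overlaps_y = y0 < (r + 1) * block and y1 > r * block
                if overlaps_x and overlaps_y:
                    grid[r][c] = fg_qp
    return grid


if __name__ == "__main__":
    # A 128 x 64 pixel frame (8 x 4 blocks) with one hypothetical detection.
    qp = block_qp_map(rows=4, cols=8, boxes=[(40, 10, 70, 40)])
    for row in qp:
        print(" ".join(f"{value:2d}" for value in row))
```

Only the blocks covering a detected person or vehicle keep the low QP, so the bitrate spent on static background falls while the regions of investigative interest stay sharp.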

Responsible AI is embedding ethics into every stage of innovation

AI is transforming our lives, work, and business at an unprecedented pace. Yet, this revolution brings a critical responsibility: to ensure innovation unfolds safely, ethically, transparently, and beneficially for all. Responsible AI is no longer optional; it is both a moral imperative and a strategic necessity that builds trust, mitigates risk, and drives long-term innovation. As public awareness and regulatory oversight intensify globally, from Europe’s regulatory pioneering to regional initiatives worldwide, international collaboration becomes essential to harnessing AI’s potential while, at the same time, promoting security, prosperity, and human well-being.

Responsible AI practices, then, must permeate the entire AI lifecycle—from research and development to deployment and real-world application.

This includes establishing guiding principles and governance frameworks, adopting responsible approaches throughout development, and ensuring safety, accountability, and transparency in products and solutions. It is a systematic endeavour requiring industry-wide coordination and collective action across sectors and borders, involving policymakers, industry partners, researchers, and other stakeholders. Only through sustained commitment and open collaboration can we shape an AI future that truly serves humanity.

AIoT is expanding technology’s role from business to society and the environment

Another key trend is the rapid expansion of application areas for AIoT. In addition to traditional business solutions, AIoT is now being widely adopted for broader social and environmental applications, demonstrating how intelligent systems can serve humanity and nature.

In ecological protection, for example, specialised AIoT devices are revolutionising conservation efforts, from wildlife monitoring to vegetation health tracking. In agriculture, crop growth monitoring systems that use AIoT technologies for large-scale, real-time analysis of crop health are becoming increasingly widespread. This capability addresses the inefficiencies of manual inspections, enabling precise management and optimising yields through digitisation.

AIoT is also being used to improve public safety. AI-driven drowning prevention systems, for example, are being deployed in areas known to be high risk. They use real-time video analytics to detect hazardous conditions, such as an individual entering a dangerous area. When this happens, the technology triggers an immediate alert, transforming passive monitoring (or no monitoring at all) into a proactive solution that can save lives.

Looking ahead: the future of AIoT

For organisations accelerating their digital transformation journeys, these trends offer both guidance and inspiration. The future of AIoT, after all, is about creating real value for businesses, enhancing experiences for people, and building a more sustainable world for everyone. And that future is arriving now.

The post 5 Upcoming AIoT Trends to Lookout for in 2026 appeared first on ELE Times.

Space internet is coming, and satellite networks could bypass app stores and telcos entirely

Low Earth Orbit (LEO) satellite constellations are entering a new phase of telecom relevance. What began as fixed satellite broadband for remote homes has evolved into direct-to-device connectivity integrated within 3GPP Non-Terrestrial Network standards. Modern satellites are no longer simple bent-pipe relays. They incorporate regenerative payloads, digital beamforming arrays, onboard processing, and inter-satellite optical links that allow orbital mesh routing. The engineering sophistication is undeniable.

However, for telecom professionals and network architects, the key discussion is not about technological capability. It is about architectural positioning: can satellite networks scale to rival terrestrial radio access networks (RAN)? Can they bypass traditional telecom operators? And do they meaningfully challenge app-store ecosystems? The answers require a grounded understanding of spectrum physics, link budgets, and capacity density.

Spectrum Architecture: IMT and Non-IMT Realities

Direct-to-device satellite systems operate either in traditional satellite allocations (non-IMT bands such as L-band or S-band) or within IMT spectrum harmonised under 3GPP NTN specifications.

In non-IMT bands, scalability faces structural limits. Propagation at these frequencies is highly dependent on near line-of-sight conditions. Building penetration loss, urban canyon multipath fading, and foliage attenuation reduce reliability. Unlike terrestrial networks that can densify through small cells and sectorization, satellites illuminate wide geographic footprints. They cannot dynamically increase cell density in obstructed urban terrain.

This makes non-IMT direct-to-handset connectivity better suited for open environments such as rural regions, highways, maritime routes, and disaster zones rather than dense urban centres.

IMT integration under NTN introduces greater harmonisation. Release 17 and beyond specify extended timing advance calibration, Doppler shift compensation, modified Hybrid Automatic Repeat Request (HARQ) timing, and satellite-aware mobility management. Devices can theoretically switch between terrestrial LTE/5G and orbital access with protocol continuity.

Yet the operational model remains conditional. Satellite access is typically triggered when terrestrial RSRP or SINR drops below defined thresholds. The modem evaluates signal quality and only activates NTN mode when necessary. This ensures satellite resources are preserved, and terrestrial networks handle high-density traffic loads.
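The threshold-triggered behaviour described above can be sketched in a few lines. This is a minimal illustration, not any vendor's modem logic: the threshold values, the hysteresis margin, and the names `Measurement` and `select_access` are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; real values are
# operator- and modem-specific.
RSRP_ENTER_NTN_DBM = -120.0  # fall back to satellite below this RSRP
SINR_ENTER_NTN_DB = -3.0     # ... or below this SINR
HYSTERESIS_DB = 5.0          # margin required before returning to terrestrial


@dataclass
class Measurement:
    rsrp_dbm: float
    sinr_db: float


def select_access(current: str, m: Measurement) -> str:
    """Return "terrestrial" or "ntn" for the next measurement period."""
    terrestrial_weak = (m.rsrp_dbm < RSRP_ENTER_NTN_DBM
                        or m.sinr_db < SINR_ENTER_NTN_DB)
    terrestrial_good = (m.rsrp_dbm >= RSRP_ENTER_NTN_DBM + HYSTERESIS_DB
                        and m.sinr_db >= SINR_ENTER_NTN_DB + HYSTERESIS_DB)
    if current == "terrestrial":
        return "ntn" if terrestrial_weak else "terrestrial"
    # Already on satellite: go back only once terrestrial is clearly usable.
    return "terrestrial" if terrestrial_good else "ntn"
```

The hysteresis margin is a design choice in this sketch: it keeps a device from flapping between the two access types when the terrestrial signal hovers near the threshold.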

Elon Musk, CEO of SpaceX, captured the strategic goal succinctly:

“There should be no dead zones anywhere in the world for your cell phone.” The emphasis is on coverage ubiquity, not urban capacity replacement.

Capacity Density: The Defining Constraint

The most decisive technical limitation is capacity density, meaning the throughput delivered per unit area. Terrestrial operators achieve massive throughput through:

- Massive MIMO spatial multiplexing

- Dense macro-cell grids

- Small-cell layering in high-traffic zones

- Fibre-backed backhaul

- Millimeter-wave overlays

- Aggressive frequency reuse patterns

Satellite beams, even with advanced spot-beam architectures and frequency reuse, cover substantially larger areas. The spectral efficiency per square kilometre cannot match dense terrestrial deployments. Additionally, handheld devices operate under strict uplink power constraints, limiting achievable modulation and coding schemes for satellite links.
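A rough way to see the capacity-density gap is to spread the Shannon limit of one cell or beam over its footprint. The sketch below does that with deliberately simple assumptions: a single link per cell, a circular footprint, and bandwidth, SNR, and radius values that are hypothetical rather than taken from any real deployment.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Shannon limit C = B * log2(1 + SNR) for a single link."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)


def capacity_density_bps_per_km2(bandwidth_hz: float, snr_db: float,
                                 footprint_radius_km: float) -> float:
    """Capacity of one cell or beam divided by its circular footprint area."""
    area_km2 = math.pi * footprint_radius_km ** 2
    return shannon_capacity_bps(bandwidth_hz, snr_db) / area_km2


# Hypothetical inputs: same 20 MHz carrier, very different SNR and footprint.
terrestrial = capacity_density_bps_per_km2(20e6, 20.0, 0.5)   # small urban cell
satellite = capacity_density_bps_per_km2(20e6, 0.0, 25.0)     # wide spot beam
print(f"terrestrial/satellite density ratio: {terrestrial / satellite:.0f}")
```

Because area grows with the square of the radius, the footprint term dominates the comparison even before the handset's limited uplink power is taken into account.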

From a Shannon capacity standpoint, satellite systems are optimised for wide-area coverage, not high-density concurrency. In densely populated markets, even a mid-sized terrestrial operator can deliver greater aggregate throughput than an orbital beam serving the same footprint. This reality defines satellite’s optimal roles:

- Extending connectivity to underserved geographies

- Providing redundancy during disasters

- Supporting maritime and aviation mobility

- Enabling IoT in sparse environments

- Enhancing national connectivity resilience

Gwynne Shotwell, President of SpaceX, has consistently emphasised connectivity as foundational infrastructure. Reliable global access enables economic participation in regions where terrestrial networks are economically infeasible. The engineering model aligns with that vision.

Inter-Satellite Routing and Cloud-Native Architecture

Modern LEO constellations differentiate themselves through inter-satellite optical links (ISLs). Instead of routing traffic exclusively through ground gateways, data can hop between satellites before downlinking closer to its destination. This reduces dependence on terrestrial fibre choke points and can optimise long-haul routing paths.
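The routing advantage comes from light travelling faster in vacuum than in glass. The following sketch compares one-way propagation delay only; the fibre group index, the 550 km altitude in the usage lines, and the simplification of the uplink and downlink to vertical hops are assumptions for illustration, and processing and queuing delays are ignored.

```python
C_VACUUM_KM_S = 299_792.458  # speed of light in vacuum
FIBRE_GROUP_INDEX = 1.47     # assumed typical value for silica fibre


def fibre_delay_ms(route_km: float) -> float:
    """One-way propagation delay over a terrestrial fibre route."""
    return route_km * FIBRE_GROUP_INDEX / C_VACUUM_KM_S * 1000


def isl_delay_ms(ground_km: float, altitude_km: float,
                 path_stretch: float = 1.0) -> float:
    """One-way delay via satellites: up-leg, inter-satellite path, down-leg.

    path_stretch is a hypothetical factor for the in-orbit path being
    longer than the ground distance.
    """
    space_km = ground_km * path_stretch + 2 * altitude_km
    return space_km / C_VACUUM_KM_S * 1000


for distance_km in (500, 10_000):
    print(distance_km,
          round(fibre_delay_ms(distance_km), 2),
          round(isl_delay_ms(distance_km, 550), 2))
```

Under these assumptions the fixed cost of going up and down outweighs the benefit on short routes, which is why the gain is associated with long-haul paths.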

Software-defined payloads further allow dynamic beam shaping, adaptive spectrum allocation, and load balancing. Combined with cloud-native packet cores and virtualised network functions, satellite systems increasingly resemble distributed edge clouds in orbit.

However, engineering challenges persist:

- Beam handover must be predictive to prevent session drops.

- Doppler shift compensation requires continuous frequency correction.

- Latency variability introduces jitter that must be absorbed at the transport layer.

- Congestion control algorithms, often QUIC-based, must adapt dynamically.

These are solvable challenges, but they reinforce the reality that satellite networks are engineered for resilience and reach rather than metro throughput supremacy.
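To give a sense of scale for the Doppler item in the list above, the sketch below estimates an upper bound on the carrier frequency shift for a circular LEO orbit. It uses the non-relativistic approximation and assumes the full orbital velocity lies along the line of sight, which overstates what a handset actually sees; the 550 km altitude and 2 GHz carrier are example inputs, not figures from the article.

```python
import math

GM_EARTH_M3_S2 = 3.986004418e14  # Earth's standard gravitational parameter
EARTH_RADIUS_M = 6_371_000       # mean Earth radius
C_M_S = 299_792_458              # speed of light


def circular_orbit_speed_m_s(altitude_m: float) -> float:
    """Orbital speed for a circular orbit at the given altitude."""
    return math.sqrt(GM_EARTH_M3_S2 / (EARTH_RADIUS_M + altitude_m))


def doppler_upper_bound_hz(carrier_hz: float, altitude_m: float) -> float:
    """Upper bound on Doppler shift: f_d = f_c * v / c, with v fully radial."""
    return carrier_hz * circular_orbit_speed_m_s(altitude_m) / C_M_S


speed = circular_orbit_speed_m_s(550e3)
shift = doppler_upper_bound_hz(2e9, 550e3)
print(f"{speed:.0f} m/s, up to {shift / 1e3:.1f} kHz")
```

The shift also changes sign as the satellite passes overhead, which is why the correction has to be continuous rather than a one-time offset.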

Application Distribution and App-Store Dynamics

The notion that satellite networks could bypass app stores often conflates connectivity with runtime control. Satellite networks can facilitate cloud-streamed applications, Progressive Web Apps leveraging WebAssembly, multicast firmware updates, and enterprise-managed OTA deployments. However, runtime enforcement remains device-governed. Operating systems from Apple and Google maintain secure boot chains, code-signing validation, and hardware root-of-trust mechanisms independent of the access network.

Thus, while connectivity may be decentralised, execution control remains centralised within device ecosystems. App-store displacement at mass consumer scale remains unlikely in the near term. Satellite-enabled distribution is most viable in enterprise, industrial, defence, and controlled-device environments where policy governance is internally managed.

Global Regulatory Architecture

Satellite beams inherently traverse national borders. This introduces complex regulatory questions regarding lawful intercept, spectrum harmonisation, emergency service prioritisation, and data sovereignty. Unlike terrestrial towers confined within licensed areas, orbital coverage footprints overlap multiple jurisdictions simultaneously.

Regulators worldwide are converging toward coexistence frameworks where satellite operators must comply with local licensing, security audits, and traffic monitoring obligations. Encryption policies, gateway localisation requirements, and national security clearances are increasingly embedded within approval processes.

Indian Regulatory Perspective

In India, satellite internet operates within a structured licensing regime under the Department of Telecommunications. Operators must obtain a Global Mobile Personal Communication by Satellite (GMPCS) license to provide satellite communication services. Spectrum allocation is subject to administrative assignment or auction-based frameworks, depending on policy direction. Gateway earth stations require approval from national authorities, and security compliance is mandatory. Traffic monitoring capabilities must be provisioned in accordance with lawful intercept regulations. Data localisation considerations, especially under emerging digital governance frameworks, may require traffic breakout within Indian jurisdiction rather than pure inter-satellite routing for domestic data flows.

Additionally, satellite services must align with spectrum coordination under the Wireless Planning & Coordination (WPC) Wing. Coexistence with terrestrial IMT networks requires careful interference management and harmonisation. Regulatory approvals also involve security vetting of network elements and equipment supply chains.

India’s regulatory approach emphasises sovereign oversight while encouraging innovation through hybrid terrestrial-satellite integration models. Partnerships between satellite operators and domestic telecom providers are often preferred to ensure compliance with national security and licensing frameworks.

Industry Alignment: Complement, Not Replace

Sunil Bharti Mittal, Chairman of Bharti Airtel, has emphasised cooperation between satellite and terrestrial operators. In dense markets, terrestrial RAN grids remain unmatched in spectral reuse efficiency and urban throughput.

The long-term architecture, therefore, becomes hybrid:

- Terrestrial networks manage dense capacity loads.

- Satellite networks eliminate coverage gaps.

- Multi-RAT device logic dynamically orchestrates between both.

This convergence is not theoretical. It is already embedded within modem firmware design, NTN standardisation, and regulatory frameworks.

Engineering Takeaways

Telecom engineers and policymakers should focus on:

- Intelligent multi-RAT orchestration between terrestrial and NTN layers

- Adaptive transport protocols for variable-latency satellite links

- Robust cryptographic identity frameworks for secure OTA distribution

- Spectrum coexistence planning in IMT-integrated NTN deployments

- Regulatory compliance mechanisms for cross-border satellite beams

Conclusion

Space internet is a meaningful technological evolution. Advanced beamforming, regenerative payloads, inter-satellite optical routing, and NTN standardisation represent major engineering progress. But the physics of spectrum reuse and capacity density remains decisive. Satellite networks excel in reach, resilience, and redundancy. Terrestrial networks dominate high-density throughput and urban spectral efficiency. The future of connectivity is not orbital disruption of telecom operators or wholesale bypass of app ecosystems. It is a structured convergence into a layered architecture in which Earth and orbit operate in coordinated harmony.

Engineers who design seamless integration across these layers will define the next decade of global communications.

The post Space internet is coming, and satellite networks could bypass app stores and telcos entirely appeared first on ELE Times.

Motor Vehicle Motors Without Rare Earths: Chara Technologies’ Reluctance Motor Bet

Six years ago, when rare earth magnets were still a footnote in most mobility conversations, Bhaktha Keshavachar was already convinced they would become a problem. “We are going from hydrocarbons to electrons for all of our energy,” says the Co-Founder and CEO of Chara Technologies in an exclusive interaction with Kumar Harshit, Technology Correspondent, ELE Times. “In this electric future, motors will be at the heart of every machine. They will be the engines of the future economy. And if motors are central, they must be sustainable.”

At the time, Permanent Magnet Synchronous Motors (PMSMs) dominated electric mobility. Efficient, compact, and powerful, they owed much of their performance to neodymium-iron-boron magnets, which are built on rare earth elements. But to Bhaktha, the efficiency narrative hid a deeper vulnerability. “One country controls 90 to 95 per cent of the rare earth supply chain,” he says. “It’s not about whether that country is good or bad. They will do what is in their best interest. But that may not be good for us.”

That asymmetry, coupled with environmentally intensive mining and rising geopolitical tensions, became the trigger. “Rare earth is a global problem. Everyone is experiencing the same issue. If we build the right product, the opportunity is global.”

Rethinking the Motor

To understand Chara’s bet, one must first understand how conventional motors work. In a PMSM, the stator generates a rotating magnetic field. The rotor, embedded with powerful permanent magnets, locks onto this field, producing torque. “It works really well,” Bhaktha acknowledges. “The magnets help generate larger torque for smaller amounts of current.”

Induction motors avoid magnets but sacrifice efficiency and power density in traction applications. That left a third architecture—reluctance motors. “The principle is simple,” he explains. “Magnetic flux always takes the path of least resistance. Just like water. Our rotor is designed so that it constantly tries to align itself to the lowest reluctance path. That alignment generates torque.”
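The alignment principle can be illustrated with the textbook reluctance-torque relation for a single excited winding in the linear (unsaturated) case, T = ½·i²·dL/dθ. The sketch below is a generic model, not Chara's design: the sinusoidal inductance profile, its values, and the pole count are hypothetical.

```python
import math


def inductance_h(theta_rad: float, l_avg: float = 0.010,
                 delta_l: float = 0.006, rotor_poles: int = 4) -> float:
    """Hypothetical winding inductance; maximum at theta = 0 (aligned)."""
    return l_avg + 0.5 * delta_l * math.cos(rotor_poles * theta_rad)


def reluctance_torque_nm(current_a: float, theta_rad: float,
                         delta_l: float = 0.006, rotor_poles: int = 4) -> float:
    """T = 0.5 * i^2 * dL/dtheta for the inductance profile above."""
    dl_dtheta = -0.5 * delta_l * rotor_poles * math.sin(rotor_poles * theta_rad)
    return 0.5 * current_a ** 2 * dl_dtheta


# A rotor displaced slightly either side of alignment is pulled back to it.
for theta in (-0.1, 0.0, 0.1):
    print(theta, round(reluctance_torque_nm(10.0, theta), 4))
```

In this model the torque depends on the square of the current, so its direction is set by rotor position rather than current polarity, and it vanishes at the aligned (lowest reluctance) position.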

Instead of relying on embedded magnets, Chara’s motor uses precisely engineered electrical steel geometries. “We depend on the properties of electrical steel to generate torque. That is the source of its simplicity.” The physics is not new. The engineering to make it commercially competitive is.

The Trade-Off No One Sees

Removing magnets means giving up their brute magnetic strength. To compensate, Chara increases copper in the windings and optimises steel design. The result is a motor that is roughly 15 per cent heavier than a comparable PMSM.

“Our most popular motor is for a three-wheeler,” Bhaktha says. “A PMSM motor is about 15 kilograms. Ours is about 18 kilograms.” On paper, that sounds like a disadvantage. But Bhaktha shifts the conversation from component-level comparison to system-level thinking.

“In a three-wheeler with a gross vehicle weight of 750 kilograms, three kilograms is a rounding error,” he says. “But efficiency over the duty cycle is what really determines range.” He explains how PMSMs require flux weakening at higher speeds—injecting additional current to counteract the very magnets that give them low-speed torque. That process consumes energy and complicates control.

“Our efficiency curve is flatter,” he says. “In duty cycle efficiency, we are 5 to 10 per cent better. For the same vehicle and same battery, you can get 5 to 10 per cent more range.” That improvement can eliminate the need for additional battery capacity—often heavier and costlier than the motor difference itself. “At the system level, our motor can actually make the vehicle lighter,” he adds.
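The system-level argument is simple arithmetic, sketched below. The motor masses, gross vehicle weight, and 5 to 10 per cent efficiency advantage come from the interview; the battery size, baseline efficiency, and energy use at the wheels are hypothetical, and range is assumed to scale linearly with duty-cycle efficiency.

```python
def mass_share_percent(extra_mass_kg: float, gross_vehicle_weight_kg: float) -> float:
    """Extra motor mass as a percentage of gross vehicle weight."""
    return 100 * extra_mass_kg / gross_vehicle_weight_kg


def range_km(battery_kwh: float, duty_cycle_efficiency: float,
             wh_per_km_at_wheels: float) -> float:
    """Range under a simple linear model: usable energy / energy per km."""
    return battery_kwh * 1000 * duty_cycle_efficiency / wh_per_km_at_wheels


extra_mass_kg = 18 - 15                      # figures quoted in the interview
print(mass_share_percent(extra_mass_kg, 750))

# Hypothetical vehicle: 8 kWh pack, 40 Wh/km at the wheels, 0.80 baseline.
baseline = range_km(8.0, 0.80, 40.0)
for gain in (1.05, 1.10):                    # 5 and 10 per cent better
    improved = range_km(8.0, 0.80 * gain, 40.0)
    print(f"{gain:.2f}x efficiency: +{improved - baseline:.1f} km")
```

Under this model the range gain equals the efficiency gain, while the added motor mass is a fraction of a per cent of vehicle weight.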

From Scepticism to Shipments

After four years of R&D, Chara began commercial sales in 2024. Today, it ships hundreds of motors every month to customers in India and abroad. But market acceptance did not come easily. “Three questions were always asked,” Bhaktha recalls. “Where have you deployed this? What about long-term reliability? And how can we depend on a startup?”

Convincing OEMs to replace the “heart of the machine” with a new architecture required more than performance claims. It required patience—and, unexpectedly, geopolitics. “Only after the geopolitical eruption last year did people start seriously looking at our technology,” he says. “Business improved a lot after that.”

The rare earth issue, once dismissed as distant, had become immediate.

Longevity Without Magnets

Reliability is often framed as a risk for new technologies. Bhaktha turns that assumption around. “If you put everything on equal footing, induction motors and our motors should actually have longer life,” he explains. “Permanent magnets can demagnetise because of temperature or external fields. We don’t have that problem.”

By eliminating magnets from the rotor, the design removes a potential failure mode altogether. “In terms of reliability, we are equal or better than PMSM,” he says.

India’s Strategic Moment

The conversation inevitably widens to India’s industrial landscape. Electronics assembly is growing. Semiconductor fabs are emerging. Government schemes like the Electronic Component Manufacturing Scheme (ECMS) are pushing localisation.

“The controller part of the motor is electronics,” Bhaktha notes. “Schemes like ECMS will definitely help. We need that support. It’s like a child learning to walk—the initial support matters.” While motor materials such as steel and copper are already sourced domestically, semiconductor components remain largely import-dependent. “We have to start a strategic drive for components,” he says. “Otherwise, we will face the same vulnerability elsewhere.”

China’s dominance across the value chain looms large in his assessment. “In cost, quality, and timeliness, it is very hard to beat them today,” he says candidly. “But India has a large domestic market. We can deploy new technologies here, nurture them, and then export.”

Capital, Talent, and Conviction

Building deep-tech hardware in India is not easy. “Capital is scarce for projects like us,” Bhaktha says. “Our gestation periods are long. We need patient capital that can wait ten or fifteen years.” Talent, too, presents challenges. “It is easier to find people who write software code than people who understand electromagnetics, thermals, and hardware,” he says. But a reverse migration trend is helping. Engineers trained at global universities are returning, drawn by the opportunity to build foundational technologies.

And then there is the storytelling. “It was very difficult to explain why we were doing this,” he admits. “Rare earth was not even a mainstream phrase when we started.”

The 2030 Mix

Bhaktha does not predict a single architecture winning the future. Instead, he envisions a diversified market. “Just like we had petrol, diesel, and CNG engines, we will have PMSM, reluctance motors, externally excited synchronous motors, and induction motors,” he says.

In traction applications alone, he believes rare-earth-free motors could capture about a quarter of the market by 2030. “It might be more,” he adds. “It is difficult to predict how quickly these things move. But rare earth is a real problem. As long as we keep solving the right problem, there will be opportunities.”

Conclusion

The electric revolution is often framed as a battery story. But as Bhaktha reminds us, every electron must eventually turn a shaft. If that shaft can spin without strategic dependencies embedded inside it, the implications extend far beyond efficiency. They touch resilience, sovereignty, and industrial autonomy.

Rare earth-free motors, once a niche research topic, are now entering production lines. And in that shift lies a quiet redefinition of what powers the electric future.

The post Motor Vehicle Motors Without Rare Earths: Chara Technologies’ Reluctance Motor Bet appeared first on ELE Times.

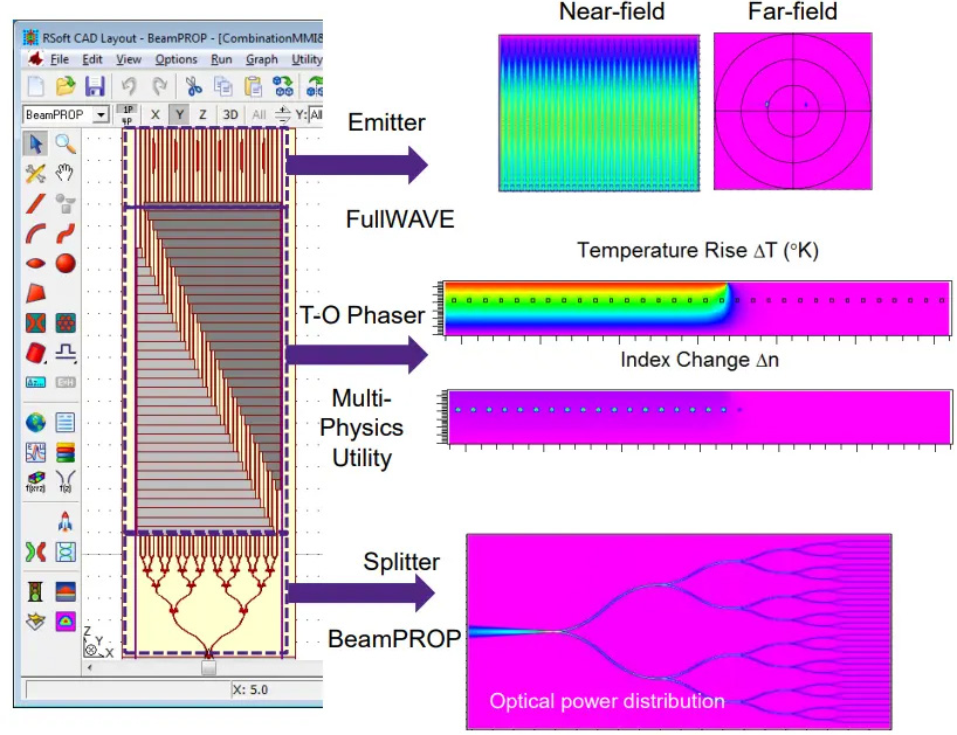

Photonics-electronics Convergence Technology Becomes Essential to Next-generation DCs: Precise Measurements Required for DCI Evaluation

Courtesy: Anritsu Corporation

Due to the capacity constraints of metropolitan areas, there is a growing trend towards decentralised regional data centres. Along with the adoption of coherent optical transmission such as 400G-ZR and OpenZR+, precise visualisation of fine variations in quality is key to achieving this. Anritsu, a long-established manufacturer of measurement instruments, supports this advancement of data centre networks with its high-precision measurement technology and a support system based entirely in Japan.

The rapid growth in demand for AI has accelerated the global development of data centres and driven explosive growth in computational processing. In Japan, however, capacity limits are becoming apparent because of limited physical space and an overextended electricity grid in metropolitan areas such as Tokyo, Chiba, and Osaka. This has led to a move towards building decentralised data centres in rural areas.

Essential to supporting this decentralisation are high-speed, large-capacity, and low-latency data centre interconnections (DCIs). The communication speed of 400G is becoming mainstream, while the development of 800G-compatible products is progressing. At the same time, however, the increase in power consumption that accompanies higher transmission speeds is becoming an issue.

Co-Packaged Optics (CPO), an optical device technology that utilises photonics-electronics convergence, is expected to be key to solving this problem.

Daiki Mochizuki, director of the Solutions Marketing Department at Anritsu’s Service Infrastructure Solutions Division, said, “Hyperscalers are also paying attention to CPO, with momentum building for its practical application.” CPO is an architecture that can significantly reduce transmission loss and power consumption by implementing optical transceivers in the same package as the switch ASIC, while shortening the length of the electrical wiring as much as possible. This also contributes to the IOWN initiative’s goal of “reducing electricity consumption to 1/100,” and is therefore attracting attention as a core technology for supporting next-generation infrastructure.

Director Daiki Mochizuki (right) and Manager Mitsuhiro Usuba, Solution Marketing Department, Service Infrastructure Solutions Division, Test & Measurement Company

On the other hand, unlike pluggable optical transceivers, which are easy to replace, a CPO failure may require replacing the entire device. More precise measurements and evaluations than have been undertaken in the past are therefore required to ensure reliability in the development and manufacturing stages.

Comprehensive Measurement Solutions for CPO Quality Enhancement

In CPO, the optical elements and ASICs are extremely close to each other, making it very difficult to guarantee performance after implementation and to identify the demarcation point of responsibility among vendors. Anritsu offers measurement solutions to overcome this issue.

Mr Mochizuki first introduced the Bit Error Rate Tester (BERT), MP1900A. This is an instrument that visualises transmission errors by passing a test signal through a device, and which can accurately detect even minute bit errors.

The MP2110A is an optical sampling oscilloscope that analyses the waveforms and jitter of high-speed optical signals, and it is widely used on production lines for pluggable optical transceivers such as QSFP-DD. Because of its high repeatability and measurement accuracy, it is expected to be increasingly applied to signal quality evaluation in new architectures such as CPO. These instruments enable a quantitative understanding of signal quality and modulation integrity through “eye diagram” measurement, which visualises multiple signal waveforms by overlaying them.

In addition, the MS9740B is an optical spectrum analyser that analyses the wavelength characteristics of optical devices while measuring the Optical Signal-to-Noise Ratio (OSNR) and Side-Mode Suppression Ratio (SMSR). “There is a need to support measurement from a variety of perspectives to ensure the quality of optical devices,” said Mochizuki, further mentioning that these instruments are widely used not only by NTT’s research and development department but also by major device manufacturers.
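Both ratios reduce to differences between power readings expressed in decibels. The sketch below shows that arithmetic on made-up peak values; it does not represent the MS9740B's interface, and the function names and sample spectrum are hypothetical.

```python
def smsr_db(peak_powers_dbm: dict[float, float]) -> float:
    """SMSR: main-mode peak minus the strongest side-mode peak (in dB).

    peak_powers_dbm maps wavelength in nm to peak power in dBm.
    """
    ordered = sorted(peak_powers_dbm.values(), reverse=True)
    return ordered[0] - ordered[1]


def osnr_db(signal_dbm: float, noise_dbm_in_ref_bw: float) -> float:
    """OSNR: signal power minus noise power in the reference bandwidth."""
    return signal_dbm - noise_dbm_in_ref_bw


# Hypothetical readings for a single-mode laser.
peaks = {1549.2: -42.0, 1550.0: 0.0, 1550.8: -45.5}
print(smsr_db(peaks))          # main mode vs strongest side mode
print(osnr_db(0.0, -35.0))     # signal vs noise floor
```

Working in dB turns each ratio into a subtraction, provided both readings use the same resolution or reference bandwidth.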

MT1040A: Essential for Distributed DCs – Focus on Virtual Tester Development

The practical operation of a distributed data centre requires that the network handle multiple geographically distant locations as if they were a single data centre. To this end, it is essential to be able to precisely measure and manage the latency and quality of communications. The Network Master Pro MT1040A addresses this need.

The MT1040A supports multiple communication standards, including 400G Ethernet. It is also equipped with a forward error correction (FEC) analysis function, enabling the comprehensive verification of the communication quality from the physical layer to the network layer.

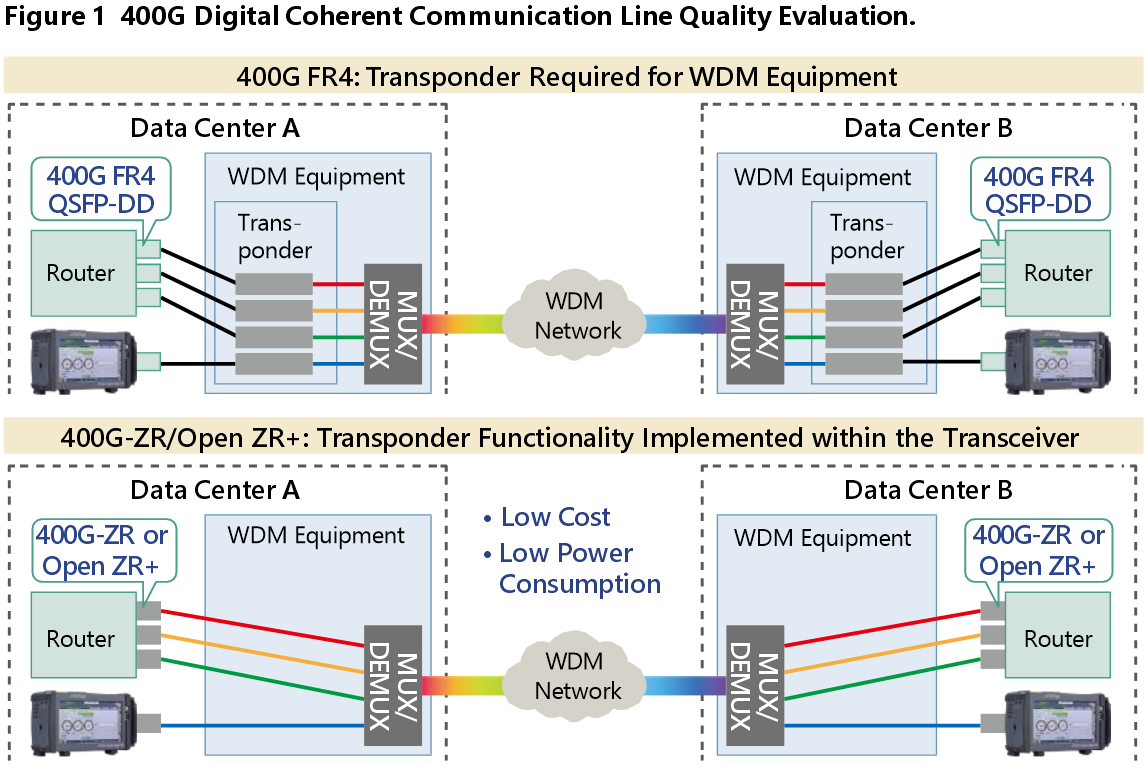

Notably, it supports digital coherent transmission technologies such as 400G-ZR and OpenZR+, with measurement possible at both the IP and optical layers. Until recently, transponder manufacturers were the main users of the device, but with the spread of 400G-ZR/OpenZR+ transceivers, which do not require transponders and can be mounted directly on routers, its use is expanding to equipment vendors that deal with coherent signals and to users who are building ROADM networks.

While the use of 400G-ZR/OpenZR+ transceivers reduces both the number of devices and the power consumption, it also requires those users dealing with carrier networks to evaluate the network quality themselves, a task that was previously handled by telecommunications carriers.

The MT1040A, which supports QSFP-DD, plays an important role here because it can directly connect to 400G-ZR/OpenZR+ compatible transceivers and measure end-to-end communication quality.

Mitsuhiro Usuba, manager of the department, said: “More and more companies are considering introducing the 400G-ZR, which is becoming more multi-vendor compatible, but some are worried about its operation. To address this, we bring the MT1040A to the customer’s site to measure latency and throughput and support their operational launch.”

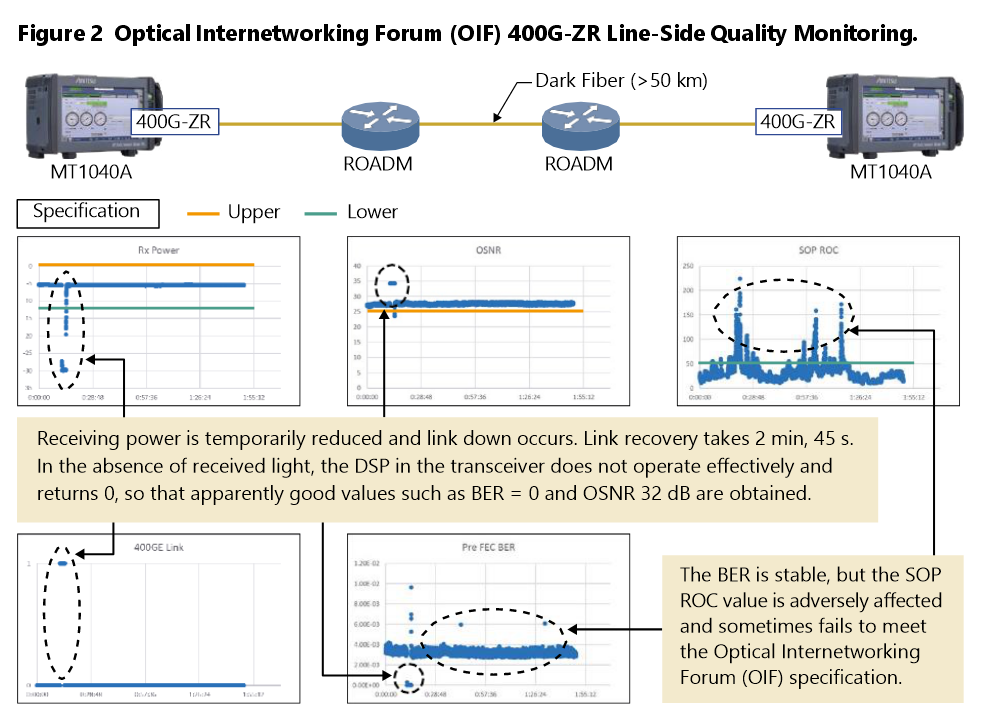

Figure 2 shows an example of measuring 400G-ZR network quality using the MT1040A. Two MT1040As are connected to the ends of a ROADM network using dark fibre. With this setup, link-down events caused by temporary drops in received power, the time required to recover the link, and the detection conditions in the absence of received light were observed in detail. In addition, the MT1040A captures quality variations that cannot be detected by normal BER measurements, such as State-Of-Polarization Rate-Of-Change (SOP ROC).

Anritsu is further developing virtual testers for 5G MEC and cloud-native environments. The goal is to enable end-to-end latency and throughput measurements by deploying virtualised software testers on the server side, even in environments where it is physically difficult to install testers, such as data centres or vehicles. “To take advantage of MEC’s low latency, it is important to have the technology to measure and guarantee its performance,” said Usuba.

Anritsu’s strength lies in its ability to complete all processes from planning to development, through production, to support in Japan. As such, Anritsu is an unparalleled partner in the construction and operation of increasingly sophisticated and complex next-generation networks.

Signal Quality Analyzer-R MP1900A

Network Master Pro MT1040A

The post Photonics-electronics Convergence Technology Becomes Essential to Next-generation DCs Precise Measurements Required for DCI Evaluation appeared first on ELE Times.

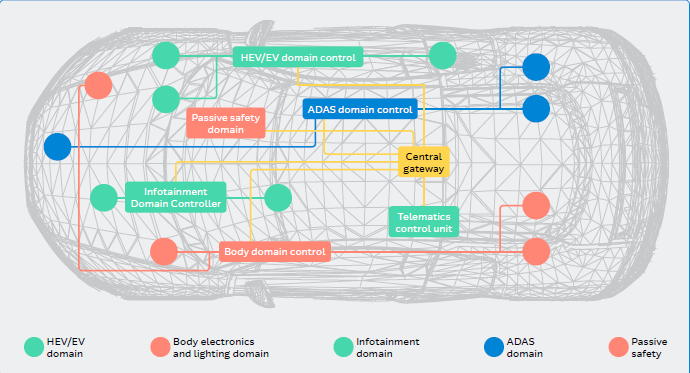

Driving the Future of Vehicle E/E Architecture: Arrow Electronics to Support Next-Generation Mobility

By

- Murdoch Fitzgerald, chief growth officer of global services for Arrow’s global components business, and

- Dr. Raphael Salmi, president of Arrow Electronics’ South Asia, Korea & Japan components business

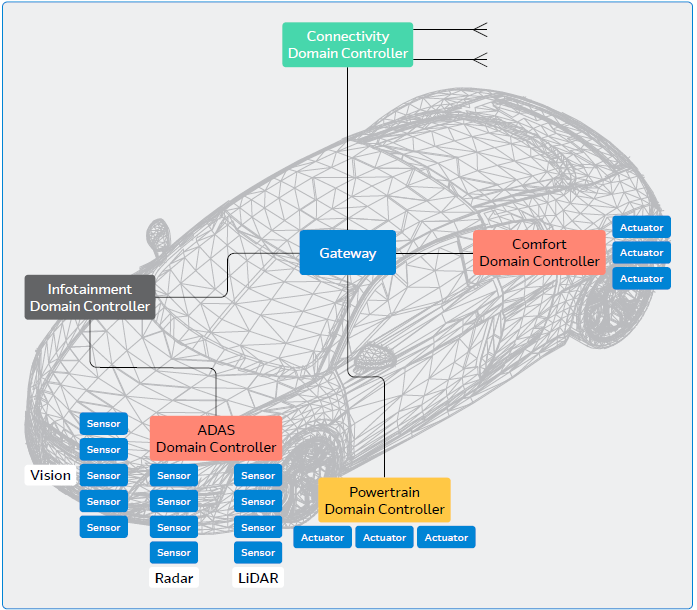

The automotive industry is rapidly advancing toward architectures built for high‑bandwidth data movement, centralized compute, and lifecycle‑ready software operations. Traditional distributed ECU topologies—characterized by increasing wiring mass, point‑to‑point signaling, and proliferation of function-specific modules—are no longer adequate to meet the computational and functional demands of modern vehicles. E/E architecture is vital to this transformation because it provides the foundational electrical, networking, and computing framework required to support higher data throughput, real‑time decision‑making, and the integration of increasingly complex vehicle functions.

The global Vehicle E/E Architecture market was valued at $46.2 Bn in 2024 and is projected to reach $115.6 Bn by 2033, growing at a CAGR of 10.7% (source: Global Market Insights).
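The quoted growth rate can be checked against the two market values with the standard compound annual growth rate formula. The function name is illustrative; the inputs are the figures stated above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


rate = cagr(46.2, 115.6, 2033 - 2024)
print(f"{rate * 100:.1f}%")
```

Over the nine years from 2024 to 2033, the two values are consistent with the stated 10.7% rate.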

Technical Challenges and Complexities Involved in the Adoption of E/E Architecture

- Complex Interdependencies: ADAS, infotainment, and V2X must interoperate across protocols, bridging legacy and new systems.

- Cybersecurity: Increased connectivity expands the attack surface and increases security design complexity.

- Power & Thermal Management: Diverse power demands require real‑time energy and thermal control to prevent failures.

- Validation & Testing: Complex system interactions demand extensive simulation and HIL testing.

- Regulatory Compliance: E/E architectures must meet safety, emissions, and data‑privacy regulations end‑to‑end.

- Environmental Considerations: Sustainable design prioritizes recyclability and lower environmental impact.

Architectural Transformation: From Distributed ECUs to Centralized, Zonal Topologies

Next‑generation E/E architectures shift to a centralized, hierarchical model:

- High‑Performance Compute (HPC) Nodes: Centralized compute consolidates functions from multiple ECUs, reducing module count and enabling ADAS, autonomy, connectivity, and advanced diagnostics.

- Zonal Controllers: Controllers aggregate sensors and actuators by physical zone, cutting wiring length by 30–50% and harness weight by 15–30%.

- Smart Endpoints (SEPs): Ethernet‑centric networks simplify edge connectivity, replacing multiple legacy buses with scalable, deterministic communications.

- High‑Speed Interconnect & Power Distribution: Advanced connectors, harnesses, Ethernet, timing, and power components ensure signal integrity, EMC stability, and high‑speed performance.

E/E Architecture: Engineering the New Vehicle Nervous System

To support this transformation, Arrow Electronics has launched a strategic initiative and dedicated research hub focused on enabling robust next‑generation Electrical and Electronic (E/E) architectures. The initiative addresses critical design, integration, and supply‑chain requirements for OEM and tier‑1 engineering teams building the next wave of mobility platforms.

Arrow Electronics: Technical Enablement Across the Full E/E Stack

Cross Disciplinary Engineering Support: Arrow’s initiative provides engineering teams with access to expertise spanning semiconductors, networking, IP&E, system architecture, safety, and cybersecurity. This includes:

- Architecture-level guidance on HPC, zonal, and endpoint implementation

- Safety and cybersecurity engineering aligned to ISO 26262, ISO 21434, and UN R155 expectations

- Power distribution and 48V readiness design considerations

- EMC-driven component selection for high-speed Ethernet and mixed-signal environments

This interdisciplinary support helps design teams reduce risk early in platform development.

“E/E architecture is the cornerstone of the modern automotive revolution, enabling the transition from hardware-centric machines to intelligent, software-defined mobility,” said Murdoch Fitzgerald, chief growth officer of global services for Arrow’s global components business. “By combining our global engineering reach with a broad range of components and specialized software expertise, we are well positioned to help our customers navigate this complexity, reducing their time-to-market and helping ensure their platforms are built to adapt as the industry evolves.”

Comprehensive Technology Ecosystem

Arrow’s portfolio includes components and subsystems essential to modern architectures, such as:

- Vehicle networking processors and real-time controllers

- PCIe switching and high-speed interconnect devices

- Automotive Ethernet PHYs, switches, and MACsec-enabled devices

- High-speed connectors and automotive-grade cabling ecosystems

- Automotive memory, storage, timing, and power components

Access to these technologies simplifies system integration and allows rapid architecture prototyping.

Strengthened Software & Safety Capabilities: Through expanded software engineering centers and the addition of established automotive software firms, Arrow now supports:

- AUTOSAR Classic and Adaptive development

- System-level modelling, HIL/SIL workflows, and model-based development

- OTA and diagnostic pipeline development

- Functional safety engineering and cybersecurity analysis

These capabilities enable engineering teams to build systems that are robust, certifiable, and scalable across vehicle lines.

Automotive-Grade Supply Chain Reliability: Modern vehicle platforms require stable, long-lifecycle, traceable electronic components. Arrow supports engineers with:

- Multi-sourced, risk-balanced component strategies

- Lifecycle and obsolescence planning

- Global inventory breadth across semiconductor and IP&E categories

This mitigates supply chain risk during development, validation, and production scaling.

Arrow’s E/E Architecture Research Hub

To accelerate architecture development, Arrow has launched an external research hub providing:

- Technical whitepapers

- High-level and subsystem-specific design guidance

- Deep-dive analyses of HPC, zonal, and endpoint architectures

- Reference material on safety, cybersecurity, and diagnostics

- Component selection insights and technology mappings

The hub is designed as a resource for engineers, architects, and procurement specialists engaged in next-generation platform design.

E/E architecture represents a complete overhaul of the “nervous system” within modern vehicles. Photo copyright 2026 Artlist Ltd.

Arrow Electronics is a central solution aggregator for E/E architecture, bridging the gap between individual components and complete, integrated systems. Photo copyright 2026 Artlist Ltd.

Local customer success case:

Arrow Electronics Fuels SAVART Motors’ EV Manufacturing Expansion in Indonesia, Boosting engineering and supply chain capabilities to drive sustainable e-mobility

Arrow has supported Indonesia’s homegrown EV maker SAVART Motors in designing and manufacturing high-quality, safe, and affordable electric scooters.

Founded in 2018, SAVART Motors stands out as one of the few local brands with in-house R&D capabilities, advanced prototyping hardware and software, and a dedicated testing and manufacturing facility in Mojokerto, East Java, Indonesia.

Indonesia’s motorcycle market is the third largest in the world. With nearly 130 million motorcycles on the road, the emissions from these vehicles significantly impact air quality and contribute to climate change. To address this, Indonesia aims to have 13 million electric two-wheelers on the roads by 2030, reducing greenhouse gas emissions and air pollution while promoting eco-friendly commuting.

Empowering homegrown EV entrepreneurs to drive electrification and e-mobility, SAVART Motors meticulously designs its electric scooters from the ground up, seamlessly integrating design aesthetics and performance to suit road conditions, riding culture, and local market expectations. With a strong commitment to quality, safety, comfort, and R&D excellence, the majority of electrical and mechanical components are developed in-house by a team of dedicated and talented engineers who are graduates of leading universities in Indonesia.

SAVART Motors is electrifying Indonesia’s transportation landscape by designing and manufacturing its electric vehicles almost entirely in-house. The company has reached a significant milestone with a 74.27% TKDN verification, reflecting the high level of domestic content in its goods and services produced in Indonesia. From concept through production, SAVART’s engineers develop cutting-edge technology tailored to the needs of local riders. Through its collaboration with Arrow Electronics, SAVART gains access to advanced components from leading global brands such as Analog Devices, Infineon, Littelfuse, Quectel, and STMicroelectronics. Arrow’s support strengthens SAVART’s designs, accelerates production timelines, and enables efficient scaling, while helping the company maintain its commitment to quality and innovation as a homegrown Indonesian brand.

“Electrification and AI-powered technologies are fundamentally transforming transportation,” said Dr. Raphael Salmi, president of Arrow Electronics’ South Asia, Korea & Japan components business. “We are excited to provide SAVART Motors with the essential engineering capabilities and supply chain services they need to manufacture EVs that not only prioritize safety, comfort, and ease of use but also cater to the needs of Indonesian riders. By offering a comprehensive technology portfolio that includes smart IoT connectivity modules, microprocessors, sensors, and automotive-grade silicon carbide MOSFETs, we are well-positioned to be their trusted technology supplier as they continue to revolutionize sustainable e-mobility in Indonesia and beyond.”

A substantial portion of the electronic components in SAVART Motors’ latest model has been sourced and supplied by Arrow. In addition to complementing SAVART Motors’ in-house R&D efforts, Arrow has provided engineering support and guidance on system integration, including adaptive user interfaces, smart vehicle control units, AI-based user profiling, keyless and fingerprint security access, and smart battery management systems.

The post Driving the Future of Vehicle E/E Architecture: Arrow Electronics to Support Next-Generation Mobility appeared first on ELE Times.

Designing AI-resistant technical evaluations

Courtesy: Anthropic

What we learned from three iterations of a performance engineering take-home that Claude keeps beating.

Evaluating technical candidates becomes harder as AI capabilities improve. A take-home that distinguishes well between human skill levels today may be trivially solved by models tomorrow, rendering it useless for evaluation.

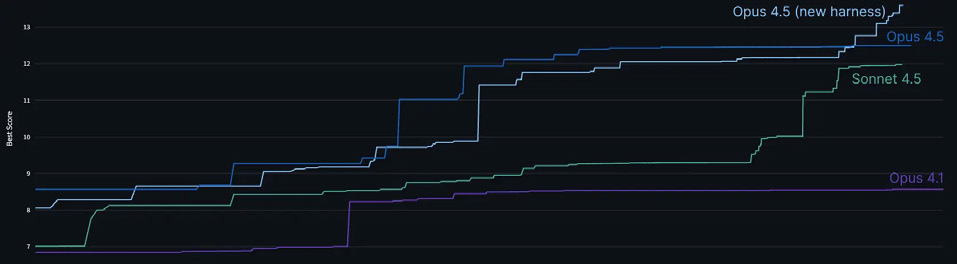

Since early 2024, our performance engineering team has used a take-home test where candidates optimise code for a simulated accelerator. Over 1,000 candidates have completed it, and dozens now work here, including engineers who brought up our Trainium cluster and shipped every model since Claude 3 Opus.

But each new Claude model has forced us to redesign the test. When given the same time limit, Claude Opus 4 outperformed most human applicants. That still allowed us to distinguish the strongest candidates—but then Claude Opus 4.5 matched even those. Humans can still outperform models when given unlimited time, but under the constraints of the take-home test, we no longer have a way to distinguish between the output of our top candidates and our most capable model.

I’ve now iterated through three versions of our take-home in an attempt to ensure it still carries a signal. Each time, I’ve learned something new about what makes evaluations robust to AI assistance and what doesn’t.

This post describes the original take-home design, how each Claude model defeated it, and the increasingly unusual approaches I’ve had to take to ensure our test stays ahead of our top model’s capabilities. While the work we do has evolved alongside our models, we still need stronger engineers—just increasingly creative ways to find them.

To that end, we’re releasing the original take-home as an open challenge, since with unlimited time, the best human performance still exceeds what Claude can achieve. If you can best Opus 4.5, we’d love to hear from you—details are at the bottom of this post.

The origin of the take-home

In November 2023, we were preparing to train and launch Claude 3 Opus. We’d secured new TPU and GPU clusters, our large Trainium cluster was coming, and we were spending considerably more than we had in the past on accelerators, but we didn’t have enough performance engineers for our new scale. I posted on Twitter asking people to email us, which brought in more promising candidates than we could evaluate through our standard interview pipeline, a process that consumes significant time for staff and candidates.

We needed a way to evaluate candidates more efficiently. So, I took two weeks to design a take-home test that could adequately capture the demands of the role and identify the most capable applicants.

Design goals

Take-homes have a bad reputation. Usually, they’re filled with generic problems that engineers find boring and which make for poor filters. My goal was different: create something genuinely engaging that would make candidates excited to participate and allow us to capture their technical skills at a high level of resolution.

The format also offers advantages over live interviews for evaluating performance engineering skills:

- Longer time horizon: Engineers rarely face deadlines of less than an hour when coding. A 4-hour window (later reduced to 2 hours) better reflects the actual nature of the job. It’s still shorter than most real tasks, but we need to balance that with how onerous it is.

- Realistic environment: No one is watching or expecting narration. Candidates work in their own editor without distraction.

- Time for comprehension and tooling: Performance optimisation requires understanding existing systems and sometimes building debugging tools. Both are hard to realistically evaluate in a normal 50-minute interview.

- Compatibility with AI assistance: Anthropic’s general candidate guidance asks candidates to complete take-homes without AI unless indicated otherwise. For this take-home, we explicitly indicate otherwise.

Longer-horizon problems are harder for AI to solve completely, so candidates can use AI tools (as they would on the job) while still needing to demonstrate their own skills.

Beyond these format-specific goals, I applied the same principles I use when designing any interview to make the take-home:

- Representative of real work: The problem should give candidates a taste of what the job actually involves.

- High signal: The take-home should avoid problems that hinge on a single insight and ensure candidates have many chances to show their full abilities — leaving as little as possible to chance. It should also have a wide scoring distribution and ensure enough depth that even strong candidates don’t finish everything.

- No specific domain knowledge: People with good fundamentals can learn specifics on the job. Requiring narrow expertise unnecessarily limits the candidate pool.

- Fun: Fast development loops, interesting problems with depth, and room for creativity.

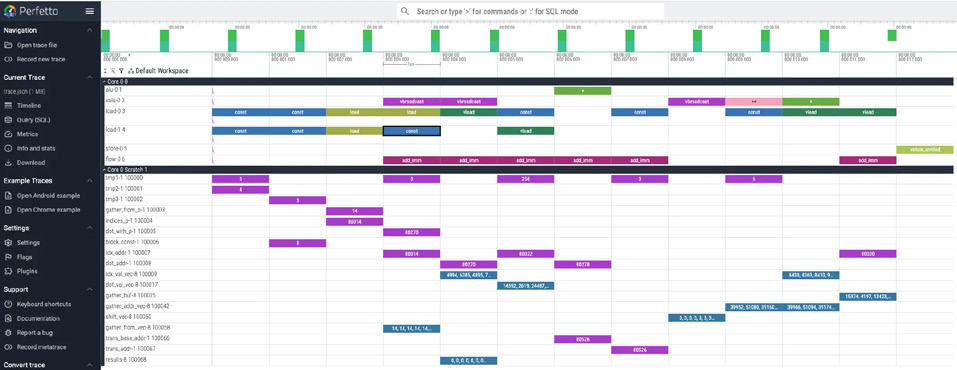

The simulated machine

I built a Python simulator for a fake accelerator with characteristics that resemble TPUs. Candidates optimise code running on this machine, using a hot-reloading Perfetto trace that shows every instruction, similar to the tooling we have on Trainium.

The machine includes features that make accelerator optimisation interesting: manually managed scratchpad memory (unlike CPUs, accelerators often require explicit memory management), VLIW (multiple execution units running in parallel each cycle, requiring efficient instruction packing), SIMD (vector operations on many elements per instruction), and multicore (distributing work across cores).
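To make the VLIW point concrete, here is a minimal sketch of instruction packing: a greedy scheduler that places each operation in the earliest cycle where its inputs are ready and a matching execution unit is free. The slot counts, the one-cycle latency, and the op names are all assumptions made for illustration; none of them come from the actual simulator, and the sketch ignores register reuse hazards by treating each op name as a unique value.

```python
from collections import defaultdict

# Hypothetical per-cycle slot limits; the real machine's units are not described here.
SLOTS = {"alu": 2, "load": 1, "store": 1}


def pack_bundles(ops):
    """Greedily pack ops into per-cycle bundles.

    ops: list of (name, unit, deps) in program order, where deps is the set of
    op names whose results this op consumes. A one-cycle latency is assumed,
    so an op can issue no earlier than the cycle after its last dependency.
    Returns a list of bundles; each bundle lists the ops issued in that cycle.
    """
    cycle_of = {}
    used = defaultdict(lambda: defaultdict(int))  # cycle -> unit -> slots taken
    for name, unit, deps in ops:
        cycle = max((cycle_of[d] + 1 for d in deps), default=0)
        # Slide forward until a slot for this unit is free.
        while used[cycle][unit] >= SLOTS[unit]:
            cycle += 1
        used[cycle][unit] += 1
        cycle_of[name] = cycle

    n_cycles = max(cycle_of.values()) + 1 if cycle_of else 0
    bundles = [[] for _ in range(n_cycles)]
    for name, _, _ in ops:
        bundles[cycle_of[name]].append(name)
    return bundles


ops = [
    ("ld_a", "load", set()),
    ("ld_b", "load", set()),
    ("add", "alu", {"ld_a", "ld_b"}),
    ("mul", "alu", {"ld_a"}),
    ("st", "store", {"add"}),
]
bundles = pack_bundles(ops)
for cycle, bundle in enumerate(bundles):
    print(cycle, bundle)
```

Under these assumptions the five ops fit into four cycles, because `mul` shares a cycle with the second load. A serial machine would need five.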

The task is a parallel tree traversal, deliberately not deep learning flavoured, since most performance engineers hadn’t worked on deep learning yet and could learn domain specifics on the job. The problem was inspired by branchless SIMD decision tree inference, a classical ML optimisation challenge as a nod to the past, which only a few candidates had encountered before.
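For readers who have not met branchless decision tree inference, the idea can be sketched as follows. This is an illustration of the general technique only, not the take-home's actual task: the heap-ordered tree layout, the function name, and the sample data are assumptions. The comparison result (0 or 1) is folded into the child index arithmetically, so every input executes the same instruction sequence at each level, which is what lets the inner loop map onto SIMD lanes.

```python
def traverse_batch(features, thresholds, depth, batch):
    """Walk a complete binary tree for many inputs in lockstep, without if/else.

    The tree is stored in heap order: node i tests x[features[i]] > thresholds[i]
    and has children 2*i + 1 (left) and 2*i + 2 (right).
    Returns, for each input, the leaf reached, numbered 0..2**depth - 1.
    """
    idx = [0] * len(batch)
    for _ in range(depth):
        # One "vector" step: the comparison yields 0 or 1 and selects the child.
        idx = [
            2 * i + 1 + int(x[features[i]] > thresholds[i])
            for i, x in zip(idx, batch)
        ]
    first_leaf = 2 ** depth - 1
    return [i - first_leaf for i in idx]


# A depth-2 tree with three internal nodes (hypothetical values).
features = [0, 1, 1]
thresholds = [0.5, 0.3, 0.7]
batch = [[0.1, 0.1], [0.2, 0.9], [0.9, 0.1], [0.9, 0.8]]
leaves = traverse_batch(features, thresholds, 2, batch)
print(leaves)
```

On a real vector unit the list comprehension would become a gather of features and thresholds, one vector compare, and one fused index update per level.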

Candidates start with a fully serial implementation and progressively exploit the machine’s parallelism. The warmup is multicore parallelism, then candidates choose whether to tackle SIMD vectorisation or VLIW instruction packing. The original version also included a bug that candidates needed to debug first, exercising their ability to build tooling.

Early results

The initial take-home worked well. One person from the Twitter batch scored substantially higher than everyone else. He started in early February, two weeks after our first hires through the standard pipeline. The test proved predictive: he immediately began optimising kernels and found a workaround for a launch-blocking compiler bug involving tensor indexing math overflowing 32 bits.
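The post gives no details of that compiler bug, but the general failure mode is easy to reproduce. The sketch below is a generic illustration with made-up tensor dimensions: a flat index computed as `row * row_stride + col` exceeds the signed 32-bit range once the tensor holds more than 2^31 elements, and wraps to a negative offset. Python integers do not overflow, so `to_int32` (a hypothetical helper) emulates fixed-width arithmetic.

```python
def to_int32(value):
    """Wrap an integer to signed 32-bit two's complement, as fixed-width hardware would."""
    value &= 0xFFFFFFFF
    return value - (1 << 32) if value >= (1 << 31) else value


def flat_index(row, col, row_stride):
    """Row-major offset of element (row, col)."""
    return row * row_stride + col


# Hypothetical 70,000 x 40,000 tensor: 2.8 billion elements, more than 2**31 - 1.
rows, row_stride = 70_000, 40_000
exact = flat_index(rows - 1, row_stride - 1, row_stride)
wrapped = to_int32(exact)
print(exact, wrapped)
```

The last element's true offset is 2,799,999,999, but in 32-bit signed arithmetic it becomes -1,494,967,297, so any address derived from it points outside the tensor.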