Microelectronics world news

555 VCO revisited

It is well known that a 555 timer in astable mode can be frequency modulated by applying a control voltage (CV) to pin 5. The schematic on the left of Figure 1 shows this classic 555 VCO.

Figure 1 Classic VCO (left) and new 555 VCO variant (right), where Pin 5 is not modulated, which leads to a constant 50% pulse width, independent of frequency.

Modulating pin 5 has some severe drawbacks. The control voltage (CV) must stay well above 0 V and well below V+; otherwise, oscillation stops.

In contrast to a typical VCO, which outputs 0 Hz or Fmin at CV = 0 and reaches Fmax at CVmax, the CV behavior of the classic 555 VCO is inverted and nonlinear. This is due to the modulation of both the upper and lower Schmitt trigger thresholds; in addition, the pulse width changes with frequency. The useful tuning range Fmax/Fmin is limited to about 3.
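To see why the classic circuit tunes backwards and nonlinearly, here is a minimal sketch of the ideal timing equations. It assumes the standard astable topology (RA from V+ to pin 7, RB from pin 7 to pins 2 and 6, C from pins 2 and 6 to ground) with pin 5 forced to CV, so the upper threshold becomes CV and the lower threshold CV/2. The values RA = 1 kΩ, RB = 10 kΩ, C = 10 nF, and V+ = 12 V are hypothetical, not taken from Figure 1, and comparator offsets and output saturation are ignored.

```python
import math

def classic_555_vco_freq(cv, vplus=12.0, ra=1e3, rb=10e3, c=10e-9):
    """Ideal frequency of a standard RA/RB astable with pin 5 driven to cv.

    Component values are hypothetical defaults, not those of Figure 1.
    """
    if not 0.0 < cv < vplus:
        raise ValueError("CV must stay strictly between 0 V and V+")
    upper = cv          # threshold comparator trips at the pin 5 voltage
    lower = cv / 2.0    # trigger comparator trips at half the pin 5 voltage
    # Output high: C charges through RA + RB from 'lower' toward V+
    t_high = (ra + rb) * c * math.log((vplus - lower) / (vplus - upper))
    # Output low: C discharges through RB from 'upper' toward 0 V
    t_low = rb * c * math.log(upper / lower)
    return 1.0 / (t_high + t_low)

# Sweep CV: frequency falls as CV rises (inverted tuning)
for cv in (2.0, 4.0, 6.0, 8.0, 10.0):
    f = classic_555_vco_freq(cv)
    print(f"CV = {cv:4.1f} V -> f = {f / 1e3:6.2f} kHz")
```

With these hypothetical values, the frequency falls from roughly 12.5 kHz at CV = 2 V to about 4.8 kHz at CV = 10 V, a ratio of only about 2.6. At CV = 8 V (2/3 of V+) the model reduces to the familiar 0.693(RA + 2RB)C period, and as CV approaches V+ the charge time grows without bound, which is where oscillation stops.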

Stephen Woodward’s “Can a free-running LMC555 VCO discharge its timing cap to zero?” shows some clever improvements: linear-in-pitch CV behavior and an extended 3-octave range, but it still suffers from the other “pin 5” drawbacks.

The schematic on the right of Figure 1 shows a new variant of the 555 VCO. Pin 5 is not modulated, which leads to a constant 50% pulse width, independent of frequency.

A rising CV results in a higher frequency. CV=0 is allowed and generates Fmin.

The useful tuning range is >10 and can even reach ≥100, with some caveats noted below.

Although it uses only 2 resistors and 1 capacitor, like the classic 555 astable configuration, it is a bit harder to understand. The basic principle, adding a fraction of the square-wave output voltage to the triangle voltage across C so that the frequency rises, is described in my recent Design Idea (DI), “Wide-range tunable RC Schmitt trigger oscillator.”

There, I use a potentiometer to add a fraction of the output to the capacitor voltage.

In the new 555 VCO variant, the potentiometer voltage is replaced by an external CV, which is chopped by the 555 discharge output (pin 7).

When CV is 0, the voltage on the right side of C3 is also 0, and the VCO outputs Fmin. With rising CV, a square wave voltage between 0V (pin 7 discharging) and CV (pin 7 open) appears on the right side of C3. Similar to my above-mentioned DI, this square wave voltage must always be smaller than the hysteresis voltage (555: Vh=V+/3), otherwise Fmax goes towards infinity. That is why you must watch your CVmax if you want to reach high Fmax/Fmin ratios.
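A first-order way to see this limit is to treat the chopped CV as a step that is added to, or removed from, the voltage at pins 2 and 6 at every output transition, which effectively shrinks the hysteresis from Vh = V+/3 to Vh − CV. The sketch below is an idealized model, not the author's analysis: it assumes a single timing resistor R driven from the 555 output (V+ or 0 V), ideal steps, and no loading by the CV source or the pin 7 pull-up. R = 10 kΩ and C = 10 nF are hypothetical values, so the numbers will not match the QSPICE curve in Figure 2.

```python
import math

def new_555_vco_freq(cv, vplus=12.0, r=10e3, c=10e-9):
    """Idealized model of the pin-5-free 555 VCO (hypothetical R and C)."""
    vh = vplus / 3.0                      # 555 hysteresis with pin 5 unused
    if not 0.0 <= cv < vh:
        raise ValueError("CV must satisfy 0 <= CV < V+/3")
    # At each transition the chopped CV step moves the timing node CV closer
    # to the opposite threshold, so it only has to travel Vh - CV.
    # Both halves are identical (charge toward V+ from V+/3 + CV, discharge
    # toward 0 V from 2V+/3 - CV), which keeps the duty cycle at 50%.
    t_half = r * c * math.log((2.0 * vh - cv) / vh)
    return 1.0 / (2.0 * t_half)

f_min = new_555_vco_freq(0.0)             # CV = 0 gives Fmin = 1/(2 R C ln 2)
for cv in (0.0, 1.0, 2.0, 3.0, 3.5, 3.9):
    f = new_555_vco_freq(cv)
    print(f"CV = {cv:3.1f} V -> f = {f / 1e3:7.2f} kHz ({f / f_min:5.1f} x Fmin)")
```

In this model Fmin is about 7.2 kHz, the frequency rises monotonically but nonlinearly with CV, and the ratio to Fmin is about 28 at CV = 3.9 V, growing without bound as CV approaches V+/3 = 4 V.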

Figure 2 shows a QSPICE simulation of frequency with respect to CV from 0 V to 3.9 V in 100 mV steps.

Figure 2 QSPICE simulation of frequency with respect to CV from 0 V to 3.9 V in 100 mV steps.

A prototype with component values from Figure 1 and V+=12 V has been breadboarded, and a rough frequency-versus-CV curve is measured and marked with a red dot in the QSPICE simulation in Figure 2.

Figure 3 shows a scope screenshot for Fmin.

Figure 3 A scope screenshot for Fmin, CH1 (yellow) output voltage, CH2 (magenta) CV=0.

In conclusion, the new 555 VCO circuit overcomes some drawbacks of the classic version, like limited CV range, inverted CV/Hz behavior, and changing pulse width, without using more components. Unfortunately, it still shows nonlinear CV/Hz behavior. A closed loop with an op amp and a simple charge pump might tame it, at the cost of raising the chip count to 2.

Uwe Schüler is a retired electronics engineer. When he’s not busy with his grandchildren, he enjoys experimenting with DIY music electronics.

Related Content

- Can a free-running LMC555 VCO discharge its timing cap to zero?

- Wide-range tunable RC Schmitt trigger oscillator

- A pitch-linear VCO, part 1: Getting it going

- A pitch-linear VCO, part 2: taking it further

- A two-way mirror—current mirror that is

The post 555 VCO revisited appeared first on EDN.

Infineon’s 2026 edition of GaN Insights eBook highlights adoption in power electronics

Simplifying inductive wireless charging

What do e-bikes and laptops have in common? Both can be wirelessly charged by induction.

E-bikes and laptops both use lithium-ion batteries for power, chosen for their light weight, high energy density, and long lifespan. Both systems can be wirelessly recharged via the wireless power transfer (WPT) method that uses electromagnetic induction to transfer energy to the battery without cables.

For e-bikes, there is a wireless charging pad or inductive tile that the e-bike parks on to receive power. For induction charging, one coil is integrated into the static pad or tile (the transmitter coil) and the other (the receiver coil) is situated on the bike, often in the kickstand. The charging pad’s coil, fed by AC, creates an alternating magnetic field, which in turn induces an AC current in the bike’s coil. This AC is then converted to DC to charge the bike’s battery.

The principle is the same for laptops, as well as a broad range of consumer and industrial devices, including small robots, drones, power tools, robotic vacuum cleaners, wireless routers, and lawnmowers.

Microchip provides a 300-W electromagnetic inductive wireless electric power transmission reference design that can be incorporated into any type of low-power consumer or industrial system for wireless charging (see block diagram in Figure 1). It consists of a Microchip WP300TX01 power transmitter (PTx) and a Microchip WP300RX01 power receiver (PRx). The design achieves efficiency of over 90% at 300 W with a Z-distance (the distance between the paired coils) of 5−10 mm.

Figure 1: Block diagram of the 300-W inductive power transfer reference design (Source: Microchip Technology Inc.)

The transmitter (Figure 2) is nominally powered from a 24-V rail and the receiver regulates the output voltage to nominal 24 V.

Figure 2: Block diagram of the power transmitter (Source: Microchip Technology Inc.)

The design’s operating DC input voltage range is 11 V to 37 V, with input overvoltage and undervoltage protection, overcurrent protection, and thermal protection via PCB/coil temperature monitoring. Maximum receiver output current is 8.5 A, and the receiver output voltage is adjustable from 12 V to 36 V.
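A quick check on these figures: at the nominal 24-V output, the 8.5-A current limit caps output power at 204 W, so the full 300 W is only reachable near the top of the 12-V to 36-V output range. The sketch below works this out and, treating the quoted "over 90%" as a lower bound on efficiency, estimates the worst-case input power and dissipation at 300 W. It is plain arithmetic on the numbers quoted above, not a model of the actual design.

```python
P_RATED_W = 300.0               # rated transfer power quoted for the design
I_OUT_MAX_A = 8.5               # maximum receiver output current
V_OUT_RANGE_V = (12.0, 36.0)    # adjustable receiver output voltage
ETA_MIN = 0.90                  # "over 90%" treated as a lower bound

def max_output_power(v_out):
    """Output power ceiling set by the current limit and the 300-W rating."""
    lo, hi = V_OUT_RANGE_V
    if not lo <= v_out <= hi:
        raise ValueError("output voltage outside the adjustable range")
    return min(P_RATED_W, v_out * I_OUT_MAX_A)

v_for_full_power = P_RATED_W / I_OUT_MAX_A   # lowest V_out that can deliver 300 W
p_in_max = P_RATED_W / ETA_MIN               # worst-case input power at 300 W
p_loss_max = p_in_max - P_RATED_W            # worst-case dissipation at 300 W

print(f"P_out ceiling at 24 V: {max_output_power(24.0):.0f} W")
print(f"V_out needed for 300 W: {v_for_full_power:.1f} V")
print(f"Input power at 300 W and 90% efficiency: {p_in_max:.0f} W (loss {p_loss_max:.0f} W)")
```

The result, about 35.3 V for full power and at most roughly 33 W of loss at 300 W, helps explain why thermal monitoring of the PCB and coil is part of the protection scheme.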

The design implements a proprietary Microchip protocol, developed over years of research and development and protected by patents granted in the U.S., to ensure reliable, high-efficiency power transfer. The system also implements foreign object detection (FOD), a safety measure that prevents hazardous situations should a metallic object find its way near the charging field. Once FOD detects a metallic object near the charging zone, where the magnetic field is generated, it stops the power transfer.

The reference design incorporates this functionality on the main coil, ceasing power from the transmitter until the object is removed. FOD is performed by stopping four PWM drive signals, with four being the maximum to avoid stopping the charging entirely.

This reference design also detects some NFC/RFID cards and tags.

Transmitter and receiver

The WP300TX01 is a fixed-function device designed for transmitting wireless power, and the WP300RX01 is its counterpart for receiving wireless power. The two are paired for a maximum power transfer of 300 W.

The user can configure the input’s under- and overvoltage, as well as the input’s overcurrent and overpower. There are three outputs for general-purpose LEDs and multiple OLED screens, as well as five inputs for interface switches. The design enables OLED display pages to allow viewing and monitoring of live system parameters, and as with the input parameters, the OLED panel’s settings can be configured by the user.

The WP300RX01 device operates from 4.8 V to 5.2 V, at ambient temperatures between −40°C and 85°C. As with the transmitter controller, this device provides overvoltage, undervoltage, overcurrent, overpower, and overtemperature protection. It is also qualified to AEC-Q100 REVG Grade 3 (−40°C to 85°C), meaning it is rated to function reliably over that ambient temperature range.

The reference design simplifies and accelerates WPT system design and eliminates the need to go through the certification process, as it already carries CE certification, which signifies that a product meets all the necessary requirements of applicable EU directives and regulations.

Types of wireless charging

There are different types of wireless charging, including resonant, inductive, electric field coupling, and RF. Inductive charging for smartphones and other lower-power electronic devices is guided by the Qi open standard, introduced by the Wireless Power Consortium in 2010, to create a universal, interoperable charging concept for electronic devices.

The Qi open standard promotes interoperability, thus avoiding multiple chargers and cables, as well as market fragmentation into different proprietary solutions. Many manufacturers have adopted this standard in their products, including tech giants like Apple and Samsung.

Since 2023, Qi 2.0 has brought faster charging of up to 15 W to mobile devices, certified for interoperability and safety. Qi 2.0 devices feature magnetic attachment technology, which aligns devices and chargers precisely, improving energy efficiency for faster, safer charging and greater ease of use. Qi 2.x includes the Magnetic Power Profile (MPP) with an added operating frequency of 360 kHz. With MPP, a magnetic ring ensures that the receiver’s coil aligns precisely with the charger’s coil, improving power transfer and reducing heat.

Qi 2.2, released in June 2025, enables 25-W charging, building on the convenience and energy efficiency of Qi while improving the wireless charging time.

Simultaneous charging of two 15-W Qi receivers

In addition to its 300-W electromagnetic inductive wireless electric power transmission reference design reviewed earlier in this article, Microchip also offers the Qi2 dual-pad wireless power transmitter reference design. This dual-pad, multi-coil wireless power transmitter reference design enables simultaneous charging of two 15-W Qi receivers (see Figure 3).

At the heart of the design is a Microchip dsPIC33 digital-signal controller (DSC) that simultaneously controls both charging pads. The dual-pad design is compatible with the Qi 1.3 and Qi 2.x standards, as well as MPP and Extended Power Profile.

The hardware is reconfigurable and supports most transmitter topologies. In addition to MPP, it supports the Baseline Power Profile for receivers up to 5 W.

Figure 3: Block diagram of the Qi 2.0 dual-pad wireless power transmitter reference design (Source: Microchip Technology Inc.)

The MPP charging pad initiates charge with a 12-kHz inverter switching frequency but will shift to 360 kHz when connected to an MPP PRx. The dsPIC33CK DSC executes two charger instances. To facilitate support for different protocols, real-time decisions based on charging pad and receiver type are required.

The software-based design provides a high level of flexibility to optimize key features of the wireless power system, such as efficiency, charging area, Z-distance, and FOD. To support applications with a wide input voltage range, each PTx includes a front-end four-switch buck-boost (4SWBB) converter for power regulation. The 4SWBB connects to a full-bridge inverter for driving the resonant tank. On the MPP charger, additional resonant capacitor switch networks enable higher resonant frequency. An MP-A13 charger implements a similar coil select circuitry for energizing the coil with the strongest signal possible, enabling a wider area of placement.
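The resonant tank's frequency follows f0 = 1/(2π√(LC)), so raising f0 with the same coil means reducing the effective tank capacitance. The sketch below assumes a series LC tank and illustrates one possible approach, switching an extra capacitor in series with the base capacitor; the actual switch-network topology is not described in the text, and the 10-µH coil and 150-nF base capacitance are hypothetical values chosen only for illustration.

```python
import math

def resonant_freq(l_h, c_f):
    """Resonant frequency of an LC tank: f0 = 1 / (2*pi*sqrt(L*C))."""
    return 1.0 / (2.0 * math.pi * math.sqrt(l_h * c_f))

def capacitance_for(l_h, f_hz):
    """Tank capacitance that resonates with inductance l_h at f_hz."""
    return 1.0 / ((2.0 * math.pi * f_hz) ** 2 * l_h)

def series(c1_f, c2_f):
    """Equivalent capacitance of two capacitors in series."""
    return c1_f * c2_f / (c1_f + c2_f)

L_COIL = 10e-6    # hypothetical transmitter coil inductance
C_BASE = 150e-9   # hypothetical base tank capacitance
f_base = resonant_freq(L_COIL, C_BASE)

# Extra series capacitor that retunes the tank to 360 kHz,
# from 1/C_target = 1/C_base + 1/C_extra
c_target = capacitance_for(L_COIL, 360e3)
c_extra = 1.0 / (1.0 / c_target - 1.0 / C_BASE)

print(f"base tank: f0 = {f_base / 1e3:.1f} kHz")
print(f"target C = {c_target * 1e9:.2f} nF, switched series C = {c_extra * 1e9:.2f} nF")
print(f"check: f0 = {resonant_freq(L_COIL, series(C_BASE, c_extra)) / 1e3:.1f} kHz")
```

With these assumed values the base tank resonates near 130 kHz, and adding roughly 22.5 nF in series lowers the total to about 19.5 nF, moving resonance to 360 kHz.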

This reference design is automotive-grade and includes CryptoAuthentication, hardware-based (on-chip) secure storage for cryptographic keys, to protect communication and data handling. In addition, the design includes a Trust Anchor TA100/TA010 secure storage subsystem. The dsPIC33CK device architecture also allows the integration of additional software stacks, such as automotive CAN stack or NFC stacks for tag detection.

It’s worth noting that the variable-input voltage, fixed-frequency power control topology implemented in the transmitter is ideal for systems that must meet stringent electromagnetic-interference and electromagnetic-compatibility requirements.

In addition to all these features, including FOD through calibrated power loss, the dual-charging reference design also provides measured quality factor/resonant frequency and ping open-air object detection; multiple fast-charge implementations, including for Apple and Samsung; and several receiver modulation types, such as AC capacitive and AC/DC resistive. For added safety, the design includes thermal power foldback and shutdown and overpower protection.
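FOD through calibrated power loss generally works by comparing the power the transmitter sends with the power the receiver reports receiving; after the known, calibrated losses are subtracted, any remaining unexplained loss is attributed to a foreign object. The sketch below illustrates that comparison with a hypothetical linear loss calibration and a single trip threshold. The function names, coefficients, and threshold are assumptions for illustration, not Microchip's implementation or values from the Qi specification.

```python
def expected_loss_w(p_rx_w, offset_w=0.3, slope=0.05):
    """Hypothetical calibrated loss model: fixed loss plus a share of delivered power."""
    return offset_w + slope * p_rx_w

def foreign_object_suspected(p_tx_w, p_rx_reported_w, threshold_w=0.5):
    """Power-loss FOD check: flag loss that the calibration cannot account for."""
    unexplained_w = p_tx_w - p_rx_reported_w - expected_loss_w(p_rx_reported_w)
    return unexplained_w > threshold_w

# Two hypothetical operating points: normal coupling vs. extra loss in a metal object
for p_tx, p_rx in ((10.0, 9.2), (10.0, 8.0)):
    stop = foreign_object_suspected(p_tx, p_rx)
    action = "stop power transfer" if stop else "keep charging"
    print(f"P_tx = {p_tx} W, P_rx = {p_rx} W -> {action}")
```

In the first case the 0.8-W gap is almost entirely explained by the calibrated 0.76-W loss; in the second, 1.3 W is unaccounted for, so the transmitter would halt power until the object is removed.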

A UART-USB communication interface enables reporting and debugging of data packets, and LEDs indicate system status and coil selection. A reset switch and temperature-sensor inputs provide additional functionality.

With the continuously evolving standards for Qi and unique new applications requiring higher-wattage wireless charging, there is plenty of opportunity for innovation and growth in the wireless charging space. Microchip experts can provide you with the right guidance for seamlessly bringing your wireless charging solution to market.

The post Simplifying inductive wireless charging appeared first on EDN.

Navitas unveils 10kW DC–DC platform delivering 98.5% efficiency for 800VDC next-gen AI data centers

Anritsu Achieves Skylo Certification to Accelerate Global Expansion for NTNs

ANRITSU CORPORATION announced the expansion of its collaboration with Skylo Technologies with the successful certification of Anritsu’s RF and protocol test cases for Skylo’s non-terrestrial network (NTN) specifications. This milestone completes a comprehensive suite of Skylo-approved RF and protocol test cases, enabling narrowband IoT devices to operate seamlessly over Skylo’s NTN in alignment with 3GPP Release 17.

The momentum behind satellite-to-ground connectivity continues to accelerate as mobile operators and enterprises seek to extend reliable coverage across remote regions, industrial sites, and maritime environments. In this context, Skylo’s NTN brings power-efficient, low-cost, and highly resilient NB-IoT capabilities to industries such as agriculture, logistics, maritime, and mining, enabling remote sensing, asset tracking, and safety-critical applications where terrestrial networks are out of reach.

Using Anritsu’s ME7873NR and ME7834NR platforms, now certified under the Skylo Carrier Acceptance Test program, device manufacturers will be able to validate NB-IoT NTN chipsets, modules, and devices for Skylo’s network with a fully automated and repeatable test environment. These solutions integrate 3GPP 4G and 5G protocols with NTN-specific parameters, ensuring accurate simulation of live network scenarios while reducing test time and accelerating device readiness.

Anritsu’s test solutions provide end-to-end validation for terrestrial and non-terrestrial networks within a single environment, enabling realistic emulation of satellite channel conditions and orbital dynamics for comprehensive verification of device performance. This level of testing rigour ensures interoperability, reliability, and high performance for dual-mode NTN devices destined for deployment across global markets.

Andrew Nuttall, Chief Technology Officer and Co-founder at Skylo Technologies, said: “We’re excited to join forces with Anritsu to accelerate innovation in non-terrestrial networks. This collaboration strengthens our shared commitment to delivering reliable, high-performance connectivity solutions for a rapidly evolving global market. Together, we’re enabling the next generation of devices and services that will redefine what’s possible in satellite-enabled connectivity.”

Daizaburo Yokoo, General Manager of Anritsu’s Mobile Solutions Division, said: “Partnering with Skylo represents an exciting step forward in advancing non-terrestrial network technology. This collaboration underscores our shared commitment to drive interoperability and set new standards for the future of global communications.”

Skylo operates on 3GPP Release 17 specifications and has developed additional “Standards Plus” extensions to enhance performance and interoperability across satellite networks. These Skylo-specified enhancements ensure that devices certified through the Skylo CAT program deliver robust connectivity and a seamless user experience across its expanding NTN footprint.

In partnership with Skylo, Anritsu remains committed to advancing 5G device development, enabling seamless global connectivity for data, voice, and messaging.

The post Anritsu Achieves Skylo Certification to Accelerate Global Expansion for NTNs appeared first on ELE Times.

Arrow Electronics Initiates Support for Next-Gen Vehicle E/E Architecture

Arrow Electronics has launched a strategic initiative and research hub to support next-generation vehicle electrical and electronic (E/E) architecture.

The available resources provide automotive manufacturers and tier-1 suppliers with the engineering expertise and supply chain stability required to navigate the industry’s shift toward software-defined vehicles.

As consumer and commercial vehicles evolve into complex, intelligent platforms, the traditional method of adding a separate computer for every new electronic feature is no longer sustainable. E/E architecture represents a complete overhaul of the “nervous system” within modern vehicles.

This fundamental shift moves away from hundreds of individual components toward a more centralised system where powerful computing hubs manage multiple functions. This transition can streamline and harmonise systems and operations while reducing the internal wiring of a car by up to 20 per cent, leading to vehicles that are lighter, more energy-efficient and easier to update via software throughout the vehicle’s lifecycle.

Aggregating Hardware, Software and Supply Chain Expertise

Arrow is a central solution aggregator for E/E architecture, bridging the gap between individual components and complete, integrated systems. Arrow’s portfolio of design engineering services includes a dedicated team of automotive experts who provide cross-technology support in both semiconductor and IP&E (interconnect, passive and electromechanical components) sectors.

This technical depth is matched by a vast global inventory and robust supply chain services that help ensure confidence through multisourced, traceable component strategies and proactive obsolescence planning so that automakers have the right components in hand when they need them.

In addition to hardware, Arrow has significantly expanded its transportation software footprint in recent years to include expertise in AUTOSAR, functional safety standards and automotive cybersecurity.

Strengthening the Automotive Ecosystem

“E/E architecture is the cornerstone of the modern automotive revolution, enabling the transition from hardware-centric machines to intelligent, software-defined mobility,” said Murdoch Fitzgerald, chief growth officer of global services for Arrow’s global components business. “By combining our global engineering reach with a broad range of components and specialised software expertise, we are well positioned to help our customers navigate this complexity, reducing their time-to-market and helping ensure their platforms are built to adapt as the industry evolves.”

Arrow’s E/E architecture initiative builds on the company’s 2024 acquisitions of specialist software firms iQMine and Avelabs, leading engineering services providers for the automotive and transportation industry. These additions have bolstered Arrow’s software development centres and its Automotive Centre of Excellence.

To support engineers and procurement leaders through E/E architecture redesign, Arrow has launched a new dedicated research hub. This online resource provides comprehensive technical insights, whitepapers and design tools specifically for E/E architecture development.

The post Arrow Electronics Initiates Support for Next-Gen Vehicle E/E Architecture appeared first on ELE Times.

Software-Defined Everything: The Foundation of the AI-powered Digital Enterprise

Courtesy: Siemens

Industry today is not facing a single technological change but a structural transformation. Markets are evolving faster than production systems, and product life cycles are shortening, while industrial assets are designed to last for decades. At the same time, technological, organizational, and regulatory complexity is increasing along the entire value chain. In this reality, adaptability becomes the decisive capability to secure and sustainably develop industrial value creation.

Within this context, classical automation reaches its structural limits. Automation based on fixed sequences, static logics, and extensive manual engineering can no longer keep up with the pace of modern industry. Efficiency gains within this paradigm are insufficient when products, processes, and frameworks are constantly changing – and they do not provide a sustainable foundation for the widespread use of artificial intelligence.

What is needed now is the next evolutionary step: the automation of automation itself. Instead of specifying every process in detail, industrial systems must be empowered to solve tasks autonomously – based on objectives, context, and continuous learning. Software-Defined Everything (SDx) becomes the necessary organising principle: it decouples functionality from specific hardware, creates a continuous, lifecycle-spanning data foundation, and enables systems to self-configure, adapt, and optimise.

In production, this approach manifests as Software-Defined Automation (SDA). SDA is the consistent application of Software-Defined Everything to the production automation layer. Control logic, functionality, and intelligence are decoupled from physical hardware, software-defined, and continuously developed. Hardware remains the stable, high-performance foundation, while software provides flexibility, adaptability, and learning capability to production systems.

This creates the structural basis for the AI-powered Digital Enterprise: an industrial organisation in which software, digital twins, and industrial AI work in closed-loop cycles, systems learn continuously, and decisions are not only prepared but also operationally executed. From this capability, the path to the Industrial Metaverse opens up – as the next stage of development, where planning, simulation, collaboration, and operational control converge in a shared digital space, supporting real industrial value creation in real time.

Stable foundation, flexible control: Software-Defined Automation in production

For many years, industrial functionality was inseparably tied to hardware. New requirements meant new components, modifications, or downtime. This model was stable – but no longer fast enough.

Software-Defined Everything breaks this logic. Functions, intelligence, and control are decoupled from specific hardware and moved into software. In production, this takes the form of Software-Defined Automation (SDA): the automation layer itself becomes software-defined, controlled, and continuously improved, while hardware continues to serve as a stable, high-performance foundation.

This fundamentally changes industrial systems:

- Functions can be adapted via software instead of physical modifications

- Systems evolve continuously throughout their lifecycle

- Adaptability becomes a structural characteristic

Industry becomes not only more digital but also definable, controllable, and optimizable through software.

Practical example: Software-Defined Automation in action

How this transformation is already becoming reality can be seen in the automotive industry. Automotive companies, working with Siemens, are implementing Software-Defined Automation as an integral part of Software-Defined Everything. By introducing a virtual, TÜV-certified PLC, production control logic is no longer tied to physical control hardware but runs as software: centrally managed, flexibly scalable, and continuously updated.

This implements a core principle of SDA: the automation layer itself is software-defined. New functions can be rolled out via software, production systems can be quickly adapted to new vehicle variants, and updates and tests can be prepared and validated virtually. IT and OT environments converge into a unified, software-based operation.

The result is production that is not only more efficient but also learning- and AI-capable – a key prerequisite for the AI-powered Digital Enterprise.

Software-Defined as a bridge between goal and reality

The real value of Software-Defined Everything lies not in individual applications but in connecting the digital target picture with actual operations. SDx – and in production specifically SDA – enables the digital representation of target and actual states of industrial systems and products.

Real operational data from running plants is combined with target states from simulations, digital twins, and engineering models. Unlike isolated analytics or digital twin solutions, this creates a continuous, consistent data foundation across the entire lifecycle – from design through implementation to optimisation. Most importantly, it creates a bidirectional connection: digital insights directly influence operations.

Digital insights are no longer abstract. They become actionable.

Why Software-Defined Everything is the prerequisite for Industrial AI

Artificial intelligence only delivers value in industry if it can do more than analyse – it must act. On a software-defined data foundation, target and operational states can be continuously compared and contrasted. AI methods detect deviations, identify correlations across products, machines, and plants, and derive concrete optimisation recommendations.

The decisive step follows: Software-Defined Everything – and in production, Software-Defined Automation – closes the loop. AI-driven insights are directly translated into operational adjustments. Machines, processes, and products respond autonomously, without manual reconfiguration.

This creates learning systems that continuously improve – not as an exception, but as the standard.

The AI-powered Digital Enterprise: Learning as an operating system

When Software-Defined Everything, Software-Defined Automation, digital twins, and industrial AI interact, a new form of industrial organisation emerges. Products become platforms, production systems dynamically adapt to new variants and requirements, and knowledge is generated in ongoing operations and systematically made usable.

The AI-powered Digital Enterprise is therefore not a static target but a continuous learning process embedded within the systems themselves.

Industrial Metaverse: The consequence of a Software-Defined reality

From this development, the Industrial Metaverse becomes tangible – not as a visualisation, but as a new operational and management layer. When digital twins accurately reflect the real state, when AI prepares or autonomously makes decisions, and when software directly translates these decisions into real-world actions, the virtual space becomes the central environment for planning, collaboration, and optimisation.

Software-Defined Everything as a structural capability

Software-Defined Everything – with Software-Defined Automation as the core for production – is not a short-term trend or an isolated technology choice. It is the structural prerequisite to make industrial systems learning-capable, adaptable, and future-proof, and to unlock the full potential of AI for the industry of the future.

The post Software-Defined Everything: The Foundation of the AI-powered Digital Enterprise appeared first on ELE Times.

3 semicon-enabled innovations impacting our experience of the world

Courtesy: Texas Instruments

The chips that power today’s smartphones contain over 15 billion transistors; the semiconductors powering data centres can have hundreds of billions of transistors. Semiconductors drive and power breakthroughs across hundreds of critical and emerging industries, such as robotics, personal electronics and artificial intelligence. As semiconductors continue to enable the world to function and make life more convenient and safer, their role will only increase.

The growing importance of chips, and of the electronics they enable, is the result of years of semiconductor progress. Let’s review how semiconductor technologies are enabling three innovations in electronics that impact how we experience the world.

Innovation No. 1: Systems that operate safely around humans

“You might think humanoids are 3 to 5 years away. But really, humanoids are the present,” said Giovanni Campanella, general manager of factory automation, motor drives and robotics at TI, at a Computex speech.

Humanoids’ emergence is anything but simple. Robots that perform chores in homes, complete tasks in a factory, or even clean dishes in a restaurant kitchen must adapt in dynamic environments, where things change every second.

To build adaptable robots that can operate around humans in diverse settings, such as domestic or business environments, design engineers must draw on a range of semiconductor technologies, all working together to make one safe and functional humanoid. Actuators enable the robot’s movements. Sensing lets the robot perceive its surrounding environment, and a central computer acts as its brain, analysing the sensing data and making decisions. Communication between the compute units and actuators happens in real time, so the humanoid can complete a task, such as handing an object to someone.

Innovation No. 2: Smaller, more affordable, smarter devices

Smartphones and laptops keep getting thinner and lighter. Medical patches provide continuous monitoring without external equipment. Devices are on a trajectory to fit into an individual’s life, increasing convenience and accessibility.

How can designers keep making devices smaller and more convenient when last year’s newest smartphone was already the smallest ever?

Significant advances in component design are enabling this progress. An example of this was our launch of the world’s smallest MCU, reflecting breakthroughs in packaging, integration and power efficiency that allow more functionality to fit into dramatically smaller spaces.

“With the addition of the world’s smallest MCU, our MSPM0 MCU portfolio provides unlimited possibilities to enable smarter, more connected experiences in our day-to-day lives,” said Vinay Agarwal, vice president and general manager of MSP Microcontrollers at TI.

Due to semiconductors, headphones that were once clunky can now fit into a pocket and provide a premium audio experience. Smart rings instantly track health metrics like activity and heart rate without interrupting everyday activities. With devices like the world’s smallest MCU, the prevalence of smaller, more affordable electronics that seamlessly blend into an already-existing routine is expanding.

Innovation No. 3: AI everywhere

By 2033, the global AI market is expected to reach $4.8 trillion, about 25 times the $189 billion valuation in 2023. AI is already enabling smartphones to process images in real time, cars to monitor drivers and their surroundings, and medical devices to deliver precise insights, and with its projected growth, the possibilities of where else AI can appear seem endless.

But with the influx of power needed to process the massive amounts of data that AI requires – and the inevitable demand to process even more data – there must be supporting infrastructure.

This is why moving energy from the grid to the gate is crucial – by optimising every stage of the power chain, from the electrical grid to the logic gates inside computer processors, TI helps support widespread AI adoption while improving efficiency, reliability, and sustainability.

At the same time, the need for more power to process the computations that AI requires has reshaped system designs. Software-defined architectures have enabled products to adapt and deploy new AI capabilities without new hardware. Software is increasingly becoming an important driver of flexibility, differentiation, and energy efficiency in applications such as vehicles, robotic systems and appliances.

Even at the edge, we’re working with designers now to implement AI onto devices such as solar panels to detect potentially dangerous arc faults. But that’s only one way we’re supporting the increase of AI.

“We’ll continue developing those use cases that make sense,” said Henrik Mannesson, general manager of energy infrastructure at TI. “But we also recognise the need to build universal tools that enable customers to further innovate with edge AI.”

Conclusion

From robots that can safely work alongside humans to ultra-compact devices that seamlessly integrate into daily life, and AI systems that scale responsibly from the edge to the cloud, semiconductor innovation is redefining how technology touches the world around us. These advances are not happening in isolation; they are the result of sustained progress in sensing, computing, power management, and software-driven design working in unison. As demand grows for smarter, safer, and more energy-efficient systems, semiconductors will remain the invisible backbone enabling engineers to turn ambitious ideas into practical, real-world solutions. In shaping what’s next, the smallest components will continue to have the biggest impact.

The post 3 semicon-enabled innovations impacting our experience of the world appeared first on ELE Times.

TP-Link’s Kasa HS103: A smart plug with solid network connectivity

With Amazon’s smart plug teardown “in the books”, our engineer turns his attention to some TP-Link counterparts, this first one the best behaved of the bunch per hands-on testing results.

Two months back, I introduced you to several members of TP-Link’s Kasa and Tapo smart home product lines as successors to Belkin’s then-soon and now (at least as you read these words, a few weeks after I wrote them) defunct Wemo smart plug devices. I mentioned at the time that I’d had particularly good luck, from both initial setup and ongoing connectivity standpoints, with the Kasa HS103:

An example of which, I mentioned at the time, I’d shortly be tearing down both for standalone inspection purposes and subsequent comparison to the smaller but seemingly also functionally flakier Tapo EP10:

Today, I’ll be actualizing my HS103 teardown aspiration, with the EP10 analysis to follow in short order, hopefully sometime next month. What’s inside this inexpensive device, and is it any easier to disassemble than was Amazon’s Smart Plug, which I dissected last month?

Let’s find out. As usual, I’ll begin with some outer box shots of the four-pack containing today’s patient. You may call the packaging “boring”. I call it refreshingly simple. As well as recyclable.

Sorry, I couldn’t resist including that last one.


Now for the device inside the box, beginning with a conceptual block diagram. Interestingly, although I’d mentioned back in December that TP-Link now specs the HS103 to handle a current draw of up to 15A, the four-pack (HS103P4) graphic on Amazon’s website still lists 12A max:

Its three-pack (HS103P3) graphic counterpart eliminates the current spec entirely, replacing it with the shadowy outline of an AC outlet set, which I suppose is one way to fix the issue!

And now for some real-life shots, as usual (and as with subsequent images) accompanied by a 0.75″ (19.1 mm) diameter U.S. penny for size comparison purposes:

See that seam? I ‘spect that’ll be a key piece for solving the puzzle of the pathway to the insides:

And, last but not least, all those specs that the engineers out there reading this know and love, including the FCC certification ID (2AXJ4KP115):

Cracking (open) the case

Now to get inside. Although I earlier highlighted the topside seam, I decided to focus my spudger attention on the right side to start, specifically the already visible gap between the main chassis and the rubberized gasket ring:

Quickly results-realizing that I was indirectly just pushing the side plate (containing the multi-function switch) out of its normal place, I redirected my attention to it more directly:

Success, at least as a first step!

Now for that gasket…

At this point, however, we only have a visual tease at the insides:

Time for another Amazon-supplied conceptual diagram:

And now for the real thing. This junction overlap gave me a clue of how to start:

It wouldn’t be a proper teardown without at least a bit of collateral damage, yes?

Onward, I endure it all for you, dear readers:

Voilà:

Boring half first:

Now for the half we all really care about:

As with its Amazon smart plug predecessor, the analog and power portions are “vanilla”:

The off-white relay at far right on the main PCB, for example, is the HF32FV-16 from Hongfa. Perhaps the most interesting aspect of the analog-and-power subsystem, at least to me, is the sizeable fuse below the pass-through ground connection, which I hadn’t noticed in the Amazon-equivalent design (although perhaps I just overlooked it?). The digital mini-PCB abutting the relay, on the other hand, is where all the connectivity and control magic takes place…

In the upper left corner is the multicolor LED whose glow (amber and/or blue, and either steady or blinking, depending on the operating mode of the moment) shines through the aforementioned translucent gasket when the switch is powered up (and not switched off):

Those two unpopulated eight-lead IC sites below it are…a titillating tease of what might be in a more advanced product variant? In the bottom left corner is the embedded 2.4 GHz Wi-Fi 1T1R antenna. And to its right is the “brains” of the operation at the other end of the antenna connection, Realtek’s RTL8710, which supports a complete TCP/IP “stack” and integrates a 166 MHz Arm Cortex-M3 processor core, 512 Kbytes of RAM and 1 Mbyte of flash memory.

Stubborn solder

Speaking of power pass-throughs…what about the other side of the main PCB? The obvious first step is to remove the screw whose head you might have already noticed in the earlier shot:

But that wasn’t enough to get the PCB to budge out of the chassis, at least meaningfully:

Recall that in the Amazon smart plug design, not only the back panel’s ground pin but also its neutral blade pass through intact to the front panel slots, albeit with the latter also split off at the source to power the PCB via a separate wire. The line blade is the only one that goes solely to the PCB, where it’s presumably switched prior to routing to the front panel load slot.

In this design, that same switching scheme may very well be the case. But this time the back panel neutral connection also routes solely to the PCB. Note the two beefy solder points on the main PCB, one directly above the screw location and the other to the right of its solder sibling. I was unable to get either of them (let alone both) successfully unsoldered from above or snipped from below. And all I could discern on the underside of the PCB from peering through the gap were a few scattered additional passive components, anyway.

So, sorry, folks, I threw in the towel and gave up. I’m assuming that those two particular solder points, befitting the necessary stability not only electrically but also mechanically, i.e., physically, leveraged higher-temperature solid or silver solder that my iron just wasn’t up for. Or maybe I just wasn’t sufficiently patient to wait long enough for the solder to melt (hey, it’s happened before). Regardless, and as usual, I welcome your thoughts on what I was able to show you, or anything else related to this product and my teardown of it, for that matter, in the comments!

—Brian Dipert is the Principal at Sierra Media and a former technical editor at EDN Magazine, where he still regularly contributes as a freelancer.

Related Content

- Amazon’s Smart Plug: Getting inside requires more than just a tug

- How smart plugs can make your life easier and safer

- Smart plug provides remote control functionality for home devices via voice command

- Teardown: Smart plug adds energy consumption monitoring

- This smart plug automatically resets your router when your Internet goes out

The post TP-Link’s Kasa HS103: A smart plug with solid network connectivity appeared first on EDN.

EEVblog 1734 - Uni-T UT892 2000V High Voltage Multimeter TESTED

Lumentum’s quarterly revenue grows 65% year-on-year to $665.5m

Infineon’s silicon carbide power MOSFETs selected for Toyota’s new bZ4X model

Look at these monsters! 29,000 microfarad

Came across this capacitor bank inside this giant battery charger and just figured I'd share, LOL. It has three 29,000 µF 200 VDC capacitors and one 13,000 µF 200 VDC capacitor. Gives me the heebie-jeebies just looking at it... It has a built-in capacitor discharge button, but still...
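To put a number on those heebie-jeebies, the stored energy can be estimated with E = ½CV². The sketch below assumes, since the post does not say so, that every capacitor is charged to its full 200 V rating; the real operating voltage inside the charger may well be lower.

```python
# Energy stored in the capacitor bank, using E = 1/2 * C * V^2 per capacitor.
# Assumption (not stated in the post): each capacitor sits at its full 200 V
# rating. Summing per-capacitor energies keeps the result independent of how
# the bank is wired, as long as each part is at that voltage.

RATED_VOLTAGE_V = 200.0

# Capacitances from the post, converted from microfarads to farads.
CAPACITORS_F = [29_000e-6, 29_000e-6, 29_000e-6, 13_000e-6]


def stored_energy_j(capacitance_f: float, voltage_v: float) -> float:
    """Return the energy in joules stored in one capacitor at the given voltage."""
    return 0.5 * capacitance_f * voltage_v ** 2


total_capacitance_f = sum(CAPACITORS_F)
total_energy_j = sum(stored_energy_j(c, RATED_VOLTAGE_V) for c in CAPACITORS_F)

print(f"Total capacitance: {total_capacitance_f * 1e6:,.0f} uF")
print(f"Stored energy at {RATED_VOLTAGE_V:.0f} V: {total_energy_j:,.0f} J")
```

Under that assumption the bank totals 100,000 µF and holds roughly 2,000 J, which is why a discharge button is more than a nice-to-have.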

Progress on our Open Access Health Tracker

Wanted to share a picture of our progress on our open-access health tracker. We hand-assembled our first prototype (left) in 2025. It has around 140 components, the smallest being 01005. Our lesson: DON'T use 01005/0204 if you hand-assemble. It was not a lot of fun, but we got our first prototype to work. We redesigned and improved it, this time using a 4-layer flexible PCB plus stiffener. AND we learned to order the prototypes mostly pre-assembled. However, we ran into the problem that we forgot to thermally shield our temperature-related sensors (any suggestions on this are very much welcome). We also ran into the issue that our 2.4 GHz antenna didn't work anymore, most likely due to the PCB change, but a small cable will do the job. Now we are working on our third prototype: integrating more sensors, compacting, and fixing the mistakes we made.

The Next Phase of Energy Storage: When Batteries Start Working with the Grid

Authored by: Rajesh Kaushal, Energy Infrastructure & Industrial Solutions (EIS) Business Group Head, India & SAARC, Delta Electronics India

For decades, the electricity grid operated on a simple principle: power had to be generated at the exact moment it was consumed. Coal plants, gas turbines, and hydro stations were dispatched to follow demand, and the grid was built around predictability and centralised control.

That principle is now being fundamentally rewritten.

As renewable energy becomes central to India’s power system, variability has entered the equation at an unprecedented scale. Solar and wind generation do not follow traditional load curves, and their growing share is changing how grids must be designed and operated. In this new reality, energy storage, particularly Battery Energy Storage Systems (BESS), is moving from being a supporting technology to becoming a core grid asset.

We are entering the next phase of energy storage, where batteries no longer sit on the sidelines but actively work with the grid.

From Backup to Backbone

In its early years, energy storage in India was largely viewed as backup power, used during outages or in niche, isolated applications. That perception is changing rapidly.

Today, batteries are expected to play a much broader role:

- Smoothing renewable variability

- Managing peak demand

- Deferring transmission upgrades

- Providing frequency and voltage support

- Enabling faster and more resilient grids

According to projections from the Central Electricity Authority, India will require over 82 GWh of total energy storage by 2026–27, with BESS contributing nearly 35 GWh. By 2031–32, the requirement rises to 411 GWh, with batteries accounting for over 236 GWh.

These are not incremental additions. They signal a structural shift in how the power system will be planned, operated, and stabilised.

When Policy Meets Scale

Key indicators of this transition are policy clarity and rapidly declining costs.

Recent tariff-based competitive bidding shows that the cost of BESS has plummeted from around ₹10.18 per kWh to approximately ₹2.1 per kWh, assuming two daily cycles. Based on market trends and utilisation patterns, the cost at 1.5 cycles per day is expected to be around ₹2.8 per kWh. This aligns closely with average solar tariffs, making storage increasingly competitive.
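The 1.5-cycle figure is consistent with simple inverse scaling of the 2-cycle tariff. The sketch below assumes, as the article does not state explicitly, that a BESS's annual cost is essentially fixed, so cost per delivered kWh is inversely proportional to the number of daily cycles.

```python
# Rough check of how storage cost per kWh scales with daily cycling.
# Assumption (not from the article): annual BESS cost is essentially fixed,
# so cost per delivered kWh is inversely proportional to cycles per day.

def cost_per_kwh_at(cycles_per_day: float, ref_cost: float, ref_cycles: float) -> float:
    """Scale a reference cost per kWh to a different number of daily cycles."""
    return ref_cost * ref_cycles / cycles_per_day


REF_COST_INR_PER_KWH = 2.1   # tariff quoted for two cycles per day
REF_CYCLES_PER_DAY = 2.0

for cycles in (2.0, 1.5, 1.0):
    cost = cost_per_kwh_at(cycles, REF_COST_INR_PER_KWH, REF_CYCLES_PER_DAY)
    print(f"{cycles:.1f} cycles/day -> about INR {cost:.2f} per kWh")
```

With these inputs, 1.5 cycles per day gives 2.1 × 2 / 1.5 = ₹2.8 per kWh, matching the quoted figure; real tariffs also depend on degradation, efficiency and O&M terms that this sketch ignores.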

India’s policy framework supports this transition:

- Viability Gap Funding schemes supporting 13,220 MWh of BESS capacity with ₹3,760 crore, and an additional 30 GWh with ₹5,400 crore support through the Power System Development Fund.

- Inter-State Transmission System (ISTS) charges waiver for co-located BESS projects for 12 years and graded waivers for non-co-located projects.

- The PLI “National Programme on Advanced Chemistry Cell (ACC) Battery Storage” aims to establish 50 GWh of domestic Advanced Chemistry Cell manufacturing capacity, including 10 GWh for grid-scale applications to reduce import dependency and future costs.

These mechanisms are accelerating adoption and enhancing affordability, shifting storage from pilot projects to mainstream system planning.

Storage Enters Grid Planning

Perhaps the clearest indicator of maturity is how storage is now treated in national planning.

Nearly 47 GW of BESS capacity has already been considered in India’s transmission planning horizon up to 2032. This is a profound change. Batteries are no longer “add-ons” installed after the grid is built. They are being planned alongside transmission lines, substations, and renewable corridors.

This integration unlocks new possibilities:

- Managing congestion without building new lines

- Firming renewable power at the point of injection

- Providing local grid support closer to demand centres

In effect, storage becomes a flexible, digital asset embedded within the physical grid.

When Batteries Start Talking to the Grid

The next phase of energy storage is not defined by chemistry alone. It is defined by intelligence.

A battery that simply charges and discharges on a timer is useful. A battery that communicates with the grid in real time is transformative.

Advanced power electronics, grid-forming inverters, and intelligent control systems allow BESS to:

- Respond instantly to frequency deviations

- Stabilise weak grids with high renewable penetration

- Coordinate with solar and wind plants to deliver dispatchable power

- Support black start and islanding operations

This is where energy storage stops being passive infrastructure and starts behaving like an active grid participant.

At Delta, our energy infrastructure approach is built around this convergence, where power electronics, automation, and digital control come together. Batteries are no longer isolated assets. They operate as part of a wider ecosystem that includes inverters, energy management systems, EV charging infrastructure, and grid interfaces.

Beyond Utilities: Storage Touches Everyday Life

While much of the discussion around BESS focuses on utilities and large-scale projects, the impact of grid-integrated storage is far broader.

For industries, it means improved power quality and reduced exposure to peak tariffs.

For cities, it means greater resilience during extreme weather events.

For renewable developers, it means predictable revenues and bankable projects.

For consumers, it ultimately means a cleaner, more reliable power supply.

Energy storage is becoming an invisible enabler: rarely noticed when it works well, but its absence quickly undermines system reliability.

India’s Unique Opportunity

India’s energy transition is happening at a scale and speed few countries have attempted. Peak demand continues to rise, renewable capacity is expanding rapidly, and electrification is accelerating across transport, industry, and households.

This creates a unique opportunity. Instead of retrofitting storage into an aging grid, India can design a future-ready system where renewables, batteries, and digital infrastructure are integrated from the outset.

But success will depend on how well technology, policy, and execution align:

- Clear market signals for ancillary services

- Standards for grid-forming and hybrid systems

- Long-term visibility for manufacturers and developers

- Skill development for operating a more complex, digital grid

A Grid That Thinks, Responds, and Adapts

The next phase of energy storage is not only about adding battery capacity. It is about how the grid itself is designed and operated.

Future power systems will need to sense conditions in real time, respond quickly to changes in demand and supply, and adapt to increasing variability from renewable sources. When batteries are fully integrated into grid operations, they can support frequency regulation, peak management, and network stability more effectively than standalone assets.

India has already begun moving in this direction. Energy storage is being considered within transmission planning, renewable integration strategies, and market mechanisms. The focus now shifts from adoption to optimisation: how efficiently storage can be deployed, controlled, and scaled to deliver maximum system value.

In the years ahead, the grid’s role will extend beyond power delivery. It will increasingly manage energy flows dynamically, with storage playing a central role in enabling reliability, flexibility, and long-term sustainability.

The post The Next Phase of Energy Storage: When Batteries Start Working with the Grid appeared first on ELE Times.

IEEE 802.3at for no money

For a long time I wanted a PoE-capable switch, but didn't want to pay like 3x (or just subconsciously wanted to die in a house fire one day; it's not important). This is a basic 8-port 100M switch with all pairs available on the connector (which is unsurprisingly rare). The PTC fuses are rated 0.5 A with a 1 A trip point. Power for the switch comes from an LED driver salvaged from a cheap bulb. It is slightly modified to work from polarity-agnostic 48 V and provides about 4 V isolated, which is enough to power a small switch. This is my second attempt: the first switch was fried because there are two annoying standards with + and - inverted, requiring a lot of diodes to avoid frying anything. I skipped those, thinking that working with a known PoE source I was safe and that a non-isolated step-down converter was fine. Wrong assumptions indeed. Now everything works relatively safely; in the final version, before assembling, I added an isolator between the fuses and the transformer legs. No fire yet.
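For readers wondering how the fuse ratings relate to the standard, here is a rough per-port power budget. It takes the 48 V supply and the 0.5 A hold / 1 A trip PTC ratings from the post, and compares them with the IEEE 802.3at Type 2 limits (30 W sourced by the PSE, 25.5 W available to the powered device). It is an illustration only, not a compliance check.

```python
# Rough per-port power budget for the DIY PoE switch described above.
# From the post: nominal 48 V supply; PTC fuses with 0.5 A hold current
# (carried without tripping) and 1 A trip current (guaranteed to trip).
# Reference values: IEEE 802.3at Type 2 power limits.

SUPPLY_V = 48.0
PTC_HOLD_A = 0.5
PTC_TRIP_A = 1.0

AT_TYPE2_PSE_W = 30.0   # minimum power a Type 2 PSE port must source
AT_TYPE2_PD_W = 25.5    # maximum power available to a Type 2 PD

hold_power_w = SUPPLY_V * PTC_HOLD_A
trip_power_w = SUPPLY_V * PTC_TRIP_A

print(f"Power carried indefinitely at hold current: {hold_power_w:.1f} W")
print(f"Power at which the PTC is guaranteed to trip: {trip_power_w:.1f} W")
print(f"Hold power covers a full 802.3at PD budget: {hold_power_w >= AT_TYPE2_PD_W}")
```

At 48 V the hold rating corresponds to 24 W, just under the 25.5 W Type 2 device budget, so a device drawing near full 802.3at power would sit in the PTC's uncertain region between hold and trip. For the small loads this switch targets, that margin is probably irrelevant.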

TOYOTA Selects Infineon’s SiC Power Semiconductors for its New, “bZ4X”

Infineon Technologies announced that CoolSiC MOSFETs (silicon carbide (SiC) power MOSFETs) have been adopted in the new bZ4X model from Toyota, the world’s largest automaker. Integrated into the on-board charger (OBC) and DC/DC converter, the SiC MOSFETs leverage the material’s advantages of low losses, high-temperature tolerance, and high voltage capability to help extend driving range and reduce charging time.

“We are very proud that Toyota, one of the world’s largest automakers, has chosen Infineon’s CoolSiC technology. Silicon carbide enhances the range, efficiency and performance of electric vehicles and is therefore a very important part of the future of mobility,” said Peter Schaefer, Executive Vice President and Chief Sales Officer Automotive at Infineon. “With our dedication and our commitment to innovation and zero-defect quality, we are well-positioned to meet the growing demand for power electronics in electromobility.”

Infineon’s CoolSiC MOSFETs feature a unique trench gate structure that reduces normalised on-resistance and chip size, enabling reductions in both conduction and switching losses to contribute to higher efficiency in automotive power systems. In addition, optimised parasitic capacitance and gate threshold voltage enable unipolar gate drive, contributing to the simplification of drive circuits for automotive electric drive train and supporting high-density, high-reliability design for OBC and DC/DC converters.

The post TOYOTA Selects Infineon’s SiC Power Semiconductors for its New, “bZ4X” appeared first on ELE Times.

STMicroelectronics expands strategic engagement with AWS, enabling high-performance compute infrastructure for cloud and AI data

STMicroelectronics has announced an expanded strategic collaboration with Amazon Web Services (AWS) through a multi-year, multi-billion USD commercial engagement serving several product categories. The collaboration establishes ST as a strategic supplier of advanced semiconductor technologies and products that AWS integrates into its compute infrastructure, enabling AWS to provide customers with new high-performance compute instances, reduced operational costs, and the ability to scale compute-intensive workloads more effectively.

As part of this expanded relationship, ST will work with AWS to optimise electronic design automation (EDA) workloads in the cloud. AWS’s scalable compute power enables silicon design acceleration, parallelises design tasks, and gives engineering teams the flexibility to handle dynamic compute demands and speed products to market.

Commercial Agreement

This engagement covers a broad range of semiconductor solutions leveraging ST’s portfolio of proprietary technologies. ST will supply specialised capabilities across high-bandwidth connectivity, including high-performance mixed-signal processing, advanced microcontrollers for intelligent infrastructure management, as well as analogue and power ICs that deliver the energy efficiency required for hyperscale data centre operations.

The collaboration will help customers reduce the total cost of ownership and bring products to market faster. ST’s specialised technologies help AWS address the increasing demands for compute performance, efficiency, and data throughput required to support growing AI and cloud workloads.

Jean-Marc Chery, ST President & CEO, commented: “This strategic engagement establishes ST as an important supplier to AWS and validates the strength of our innovation, proprietary technology portfolio, and proven manufacturing-at-scale capabilities. Our advanced semiconductor solutions will directly power AWS’s next-generation infrastructure, enabling its customers to push the boundaries of AI, high-performance computing, and digital connectivity. This collaboration positions us ideally for further scale-up across multiple market segments, from data centre infrastructure to AI connectivity, positioning ST at the centre of the AI revolution.”

ST has issued warrants to AWS for the acquisition of up to 24.8 million ordinary shares of ST. The warrants will vest in tranches over the term of the agreement, with vesting substantially tied to payments for ST products and services purchased by AWS and its affiliates. AWS may exercise the warrants in one or more transactions over a seven-year period from the issue date at an initial exercise price of $28.38.
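As a scale check, the aggregate exercise price of the warrants can be computed directly. This assumes all 24.8 million warrants vest and are exercised at the initial $28.38 price with no later adjustment; the announcement notes that vesting depends on AWS purchases.

```python
# Aggregate exercise price of the AWS warrants.
# Assumptions: all warrants vest and are exercised at the initial exercise
# price, with no adjustment over the seven-year exercise period.

MAX_SHARES = 24_800_000
INITIAL_EXERCISE_PRICE_USD = 28.38

notional_usd = MAX_SHARES * INITIAL_EXERCISE_PRICE_USD
print(f"Aggregate exercise price: about ${notional_usd / 1e6:,.1f} million")
```

That is roughly $704 million payable to ST on full exercise. The economic value of the warrants to AWS depends instead on how far ST's share price moves above the exercise price.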

The post STMicroelectronics expands strategic engagement with AWS, enabling high-performance compute infrastructure for cloud and AI data appeared first on ELE Times.

GaN Benefits in Motor Controls

By: Ester Spitale, Technical Marketing Manager, STMicroelectronics and Albert Boscarato, Application Lab Manager, STMicroelectronics

GaN benefits in different applications

The major challenge of power electronics today is dealing with the growing need for improved efficiency and power performance, and at the same time, the constant pursuit of cost and size reductions.

The introduction of gallium nitride (GaN) technology, a relatively new wide-bandgap compound, moves in this direction: as GaN becomes increasingly available commercially, its use is growing tremendously.

With better on-resistance RDS(on), total gate charge (QG) and figure of merit (FOM) than their silicon counterparts, high-electron-mobility transistors (HEMTs) based on gallium nitride (GaN) also offer high drain-to-source voltage capability, zero reverse recovery charge and very low intrinsic capacitances.

The first application where GaN technology has spread is power conversion: GaN represents the leading solution for improving efficiency, making it possible to meet the most stringent energy requirements. The capability to work at higher switching frequencies enables higher power densities, and therefore reduction of the system dimensions, weight and cost.

Size and energy efficiency are also crucial in electronic motor designs: minimising conduction and switching losses in the drive is key for reducing energy waste.

Performance improvement in motor drivers relying on classic silicon MOSFETs and IGBTs is becoming more difficult as silicon technology approaches theoretical limits for power density, breakdown voltage, and switching frequency. Due to their superior electrical characteristics, GaN transistors are a valid alternative to MOSFETs and IGBTs in high-voltage motor control applications.

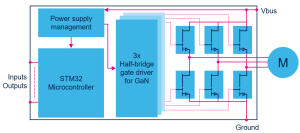

Simplified block diagram of a power inverter based on GaN transistors


Fueling the next generation of motor inverters

GaN promises important benefits even in applications operating at low frequencies (up to 20 kHz). In the realm of home appliances, motor-driven systems such as washing machines, refrigerators, air conditioners, and vacuum cleaners rely heavily on motor inverters to control speed, torque, and efficiency. Unlike industrial servo or precision motors, the physical size of these motors is largely fixed due to mechanical and functional constraints. This means that the traditional approach of reducing overall system size by shrinking the motor itself is not feasible. Instead, improvements must be sought in the inverter and power electronics that drive these motors.

In this sense, it is important to point out that the benefit of GaN over traditional silicon transistors does not come from a single standout parameter. Rather, it is the sum of several aspects working together.

GaN has a de facto negligible reverse recovery charge (Qrr) and low parasitic capacitances, which in turn enable working with slightly higher dV/dt. While the motor winding and insulation limit the maximum allowable dV/dt, GaN’s capability to operate at higher switching speeds allows designers to optimise switching edges carefully.

Moreover, a safe and drastic reduction of dead time is also achievable without risking shoot-through faults: the time between high-side and low-side switching can easily be lowered by a factor of 10. This can improve inverter efficiency and reduce switching losses without compromising motor reliability.
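One way to see why shorter dead time helps efficiency is a first-order estimate of dead-time conduction loss. During each dead time the load current freewheels through a transistor conducting in reverse, so per leg P ≈ 2 · V_rev · I · t_dead · f_sw (two dead-time intervals per switching period). The values below are hypothetical: the reverse-conduction drop, load current and 500 ns baseline are assumptions, and only the 16 kHz switching frequency is taken from the inverter example in this article.

```python
# First-order estimate of dead-time conduction loss in one inverter leg.
#     P_dead ~ 2 * V_rev * I_load * t_dead * f_sw
# Treats the load current as constant over a switching period and ignores
# reverse recovery (negligible for GaN). All values except f_sw are
# hypothetical assumptions, not data from the article.

def dead_time_loss_w(v_rev: float, i_load: float, t_dead: float, f_sw: float) -> float:
    """Approximate reverse-conduction loss during dead time for one leg, in watts."""
    return 2.0 * v_rev * i_load * t_dead * f_sw


V_REV = 2.0    # V, assumed reverse-conduction drop
I_LOAD = 3.0   # A, assumed load current
F_SW = 16e3    # Hz, switching frequency from the article's example

for t_dead in (500e-9, 50e-9):
    loss = dead_time_loss_w(V_REV, I_LOAD, t_dead, F_SW)
    print(f"t_dead = {t_dead * 1e9:.0f} ns -> about {loss * 1e3:.1f} mW per leg")
```

Because the estimate is linear in t_dead, a tenfold dead-time reduction cuts this loss term tenfold. Its absolute size depends strongly on the assumed current and reverse-conduction drop.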

Remarkable as this is, the list does not end there. In fact, all these “little” improvements combined lead to what may be considered the most relevant of them all: the removal of the heatsink.

Kiss your heatsink goodbye

The considerable reduction in power dissipation allows designers to reduce or even remove bulky heatsinks in the inverter power stage. The assembly line may now require fewer steps in the manufacturing process. No heatsink also means no screws or mounting joints, thus avoiding mechanical failures that can appear after the appliance has been in the field for a long time, an interesting potential saving in service and warranty costs.

The overall result is a more compact, lightweight, and cost-effective inverter design that fits better within the demanding and highly competitive space of the home appliances market.

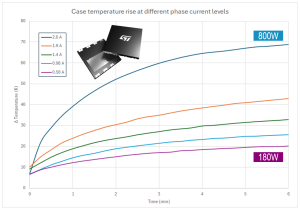

700 V GaN mounted on a motor inverter running without a heatsink


The waveforms show how smooth and cold a GaN can be. In the example above, the device under test has a typical RDS(on) of 80 mΩ. The motor inverter runs at a switching frequency of 16 kHz, with a maximum dV/dt slightly under 10 V/ns.

A power level of about 800 W can be safely achieved without incurring thermal runaway. The temperature rise ΔT is less than 70 °C, which leaves a good margin before reaching the maximum operating junction temperature (TJmax) of 150 °C.

This remarkable result is achieved without a heatsink, with GaNs mounted on and cooled down through a common 2-layer PCB.
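The available thermal margin depends on the ambient temperature, which the article does not state. The sketch below treats the measured rise of less than 70 °C as a rise above ambient at the junction and tries a few illustrative ambient temperatures. Both are assumptions.

```python
# Junction-temperature margin for the heatsink-less GaN inverter example.
# From the article: temperature rise below 70 C and TJmax = 150 C.
# Assumptions: the rise is measured above ambient and applies to the
# junction; the ambient temperatures below are illustrative only.

DELTA_T_MAX_C = 70.0
TJ_MAX_C = 150.0


def junction_margin_c(ambient_c: float, delta_t_c: float = DELTA_T_MAX_C) -> float:
    """Return the remaining headroom to TJmax for a given ambient temperature."""
    return TJ_MAX_C - (ambient_c + delta_t_c)


for ambient in (25.0, 40.0, 60.0):
    print(f"Ambient {ambient:.0f} C -> TJ below {ambient + DELTA_T_MAX_C:.0f} C, "
          f"margin above {junction_margin_c(ambient):.0f} C")
```

At a 25 °C ambient this leaves more than 55 °C of headroom, shrinking to about 20 °C inside a hot 60 °C appliance enclosure.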

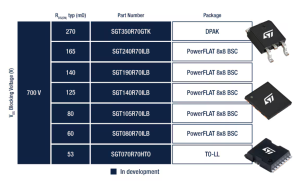

STPOWER GaN Transistors

STPOWER GaN transistors are intrinsically normally-off, p-GaN gate e-mode transistors that offer zero reverse recovery charge. ST currently offers seven part numbers rated for 700 V breakdown voltage (VDS), with typical on-resistance RDS(on) ranging from 270 mΩ down to 53 mΩ in DPAK, PowerFLAT 8×8, and TO-LL packages.

The portfolio is growing rapidly, adding further packages, RDS(on) values and breakdown voltage levels.

The post GaN Benefits in Motor Controls appeared first on ELE Times.

Union Minister Ashwini Vaishnaw inaugurates TI’s new, world-class R&D centre

Texas Instruments (TI) officially opened its new, state-of-the-art product research and development (R&D) centre in Bengaluru at an event commemorating the company’s 40-year presence in India. As the first multinational company to establish an R&D centre in India in 1985, TI has been instrumental in shaping India’s semiconductor landscape for four decades. The new 550,000-square-foot centre features a collaborative workspace dedicated to developing world-class chip designs. The centre includes an end-to-end reliability lab equipped with advanced testing capabilities for various environmental conditions, along with many other integrated circuit design labs.

Inaugurated by Shri Ashwini Vaishnaw, Union Minister for Railways, Information & Broadcasting, Electronics & Information Technology, Government of India, alongside TI leaders, the new centre highlights the company’s strategic vision to propel semiconductor innovation and nurture world-class design talent. This expansion reinforces TI’s commitment to developing breakthrough analogue and embedded processing technologies while strengthening its support for the design ecosystem and its growing customer base in India.

Shri Ashwini Vaishnaw, Union Minister for Railways, Information & Broadcasting; Electronics & Information Technology, Government of India, said, “I congratulate Texas Instruments on the inauguration of this world-class R&D centre in Bengaluru. TI has been a true pioneer in India’s semiconductor journey and stood as a testament to consistently nurturing the design talent ecosystem in India. The company’s expanded investment reinforces India’s position as a global hub for semiconductor design, development and supports our vision of building an innovation-led nation.”

Santhosh Kumar, president and managing director, TI India, said, “As we celebrate 40 years in India, this milestone reflects TI’s rich legacy and our strong commitment to the future. TI India’s product development and design teams drive research and breakthrough innovations for customers worldwide. Our world-class engineers are central to pioneering the next generation of semiconductor advancements.”

The company recently opened an additional sales office to strengthen its partnership with Indian customers, while the new R&D facility builds on its innovation capabilities in the region. With thousands of employees in India, TI continues to expand its presence in the region.

The post Union Minister Ashwini Vaishnaw inaugurates TI’s new, world-class R&D centre appeared first on ELE Times.