Feed aggregator

Why Connected MCUs Are Replacing Bolt-On Wireless in IoT Devices?

Courtesy: Infineon

Connected MCUs are gaining popularity rapidly.

If you’ve spent any time building or supporting connected products over the last decade, you’ve seen the pattern repeat itself: a product team realises they need wireless, so they bolt on a Wi-Fi module, wire up SDIO or SPI, route antennas where there’s some available space, and duct-tape the firmware stack into place right before release.

It works…until it doesn’t. And the truth is, most of us knew it was going to be painful the moment that architecture was chosen.

Whether it’s a forklift, a vitals monitoring device, a handheld scanner, or an HVAC controller, the problems are surprisingly universal. And they all stem from the same root issue.

Wireless was treated like an accessory instead of a part of the system.

We’re finally at a turning point. Connected MCUs, especially with integrated Wi-Fi 6 and 6E, change the entire equation.

Let me walk you through why this shift is happening and what it solves.

The pain: Bolt-on wireless was never as simple as it looked

Customers usually come with the same set of problems:

- Integration complexity snowballs quickly

That “easy” SDIO Wi-Fi module seems fine at first, until you realise:

- Your host processor can’t keep up under load

- The driver needs specific kernel patches

- The layout constraints choke your antenna performance

- You’re juggling two separate firmware roadmaps

By the end, half the schedule is spent debugging issues no one originally accounted for.

- RF performance suffers because it has to fit the enclosure, not the system

Bolt-on designs force antennas into whatever space is left. That might be inside a forklift mast, behind a metal enclosure, or buried under plastic in a medical device.

You can predict the RF problems before they happen, and yet they still happen.

- Certifications slow everything down

When wireless is a separate module, you:

- Test the radio module for compliance

- Test your host MCU for EMI

- Run integration testing when you put them together

- And then redo it every time you want to change the antenna

Teams underestimate this every single time.

- The BOM cost keeps going higher

One board for the host MCU, one for wireless, external memory, custom harnesses, enclosures… By the time the full system is built, the wireless subsystems cost more than the product owner ever expected.

And in long-lifecycle industries, like material handling, medical, and commercial HVAC, that pain compounds across entire product lines.

The turning point: Wi-Fi 6 and 6E connected MCUs

The reason this shift is happening is simple:

We finally have connected MCUs that are powerful enough, low-power enough, and secure enough to replace external wireless subsystems entirely.

This means the wireless subsystem is no longer bolted on. It’s a self-contained compute & connectivity module that slides directly into your main system design. For the first time, the architecture reflects how engineers actually want to build products:

A single module that handles wireless, networking, security, protocol stacks, and memory, rather than scattering those components across multiple boards.

This simplifies the integration, RF performance, and certification challenges that discrete systems face.

The post Why Connected MCUs Are Replacing Bolt-On Wireless in IoT Devices? appeared first on ELE Times.

FYI you can use AI to identify components. Take a picture of the component and upload it to an AI

Make sure the package markings are clear in your picture. I used Grok. It will even find parts that carry only a marking code rather than a part number.

I'm a first year high school electrical student and I designed a 4-to-10 weighted sum decoder from scratch using discrete NPN transistors. Here's how it works.

I started this a few months ago. No university, no engineering background, just a goal: 4 input switches, 10 LEDs, light up N LEDs when the inputs sum to N. I figured out the logic, built it in simulation, got told I was wrong by experienced people, proved them right, and then discovered what I built has a name in a field I'd never heard of.

---

**The Core Idea: Non-Binary Weighting**

Most 4-bit decoders assign binary weights: 1, 2, 4, 8. I didn't do that. I assigned decimal additive weights:

- SW-A = 1
- SW-B = 2
- SW-C = 3
- SW-D = 4

Maximum sum = exactly 10. Every integer from 0 to 10 is reachable. The 16 physical switch combinations collapse into 11 unique output states. Five of those states are reachable by two different switch combinations (e.g. A+D = 5 and B+C = 5). The circuit correctly treats these as identical: it decodes *value*, not *pattern*.

---

**Logic: Series NPN AND Gates**

Each output channel is a chain of NPN transistors in series. All transistors in the chain must be ON for collector current to flow, which is a logical AND. Chain depth varies per output:

- 1 NPN: single input conditions
- 2 NPNs in series: two-input conditions
- 3 NPNs in series: three-input conditions
- 4 NPNs in series: sum = 10 only

The Vbe stacking problem is real: 4 transistors in series drop ~2.8V. I solved it by using a 9V supply and adding a booster NPN after each AND gate to restore a clean full-swing signal before hitting the LED stage.

---

**Output Stage**

Each booster drives an LED via a 330 ohm resistor to VCC:

R = (9V - 2V) / 20mA = 350 ohms → 330 ohm standard value, ~21mA per LED

This fully isolates logic voltage from LED forward voltage. Without this separation the LED acts as a voltage divider and corrupts the logic states. I learned that the hard way in the simulation.

---

**The Part That Surprised Me**

After I finished, someone pointed out that this circuit structure is identical to a single hardware neuron:

- Weighted inputs → synaptic weights
- Arithmetic sum → dendritic summation
- AND gate threshold → activation function
- Thermometer output → step activation

I had never heard of neuromorphic computing when I designed this. I just landed there by solving the problem from first principles. Apparently there's a billion dollars of research built on the same idea.

---

**Simulation Results (all confirmed working):**

- A → 1 LED ✓
- B → 2 LEDs ✓
- C → 3 LEDs ✓
- A+B → 3 LEDs ✓
- A+D → 5 LEDs ✓
- B+C → 5 LEDs ✓
- B+D → 6 LEDs ✓
- A+B+C+D → 10 LEDs ✓

---

Happy to share full schematics and simulation screenshots. Thanks for reading.
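The counting claims in the post (16 switch combinations, 11 distinct sums, five sums reachable two ways) and the resistor arithmetic can be checked with a short script. This is a minimal sketch that models only the arithmetic, not the transistor circuit; the function names are made up for illustration, and the LED current ignores any transistor saturation drop.

```python
from itertools import product

# Switch weights as given in the post: SW-A=1, SW-B=2, SW-C=3, SW-D=4.
WEIGHTS = {"A": 1, "B": 2, "C": 3, "D": 4}


def sum_table(weights=WEIGHTS):
    """Map each reachable sum to the list of switch combinations producing it."""
    table = {}
    names = list(weights)
    for bits in product((0, 1), repeat=len(names)):
        closed = "+".join(n for n, b in zip(names, bits) if b) or "none"
        total = sum(weights[n] for n, b in zip(names, bits) if b)
        table.setdefault(total, []).append(closed)
    return table


def thermometer(total, n_leds=10):
    """Thermometer code: the first `total` LEDs are lit, the rest are dark."""
    return [1] * total + [0] * (n_leds - total)


def led_current_ma(vcc=9.0, v_led=2.0, r_ohm=330.0):
    """LED current in mA through the series resistor (idealised switch)."""
    return (vcc - v_led) / r_ohm * 1000.0


if __name__ == "__main__":
    table = sum_table()
    for total in sorted(table):
        print(total, thermometer(total), table[total])
    print(f"{led_current_ma():.1f} mA per LED")
```

Running it lists every sum from 0 to 10 alongside the switch combinations that produce it, which makes the duplicated states (3, 4, 5, 6 and 7) easy to spot.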

EEVblog 1739 - UNUSUAL REPAIR! : Beelink Ryzen 9 Mini PC

That's why you should plan first and then do the rest.

submitted by /u/Worth-Ganache1472

AXT’s Q4/2025 revenue constrained by delay in China export permits

TP-Link’s Kasa EP10: If at first it doesn’t connect, buy, buy again

How visibly different (if at all) inside are two generations of smart plugs, and is the more recent device’s comparative connectivity issue due to hardware, software, or a combination of the two?

Back in early December, EDN published my initial write-up in a planned series of posts covering experiences setting up and using devices from TP-Link’s two somewhat-overlapping smart home hardware, software, and service ecosystems, Kasa and Tapo. The first two products I’ve tried out (I’ve since added several more to the stable; stand by for additional details in future blog posts and teardowns) were both Kasa-branded and were also both smart plugs: the HS103, which I subsequently dissected here:

and its more diminutive successor, the EP10:

My so-far sample set is small, so conclusions should be accordingly calibrated. That said, I’ve had no issues with any of the multiple HS103 devices I’ve so far activated here at the residence, whether in the initial setup steps or during subsequent usage. The same can’t be said, however, for the EP10. None of the devices I tried in either of the first two four-packs I purchased would successfully setup-connect to my Wi-Fi network. But both devices in my third purchase, a two-pack, worked fine…at least until I subsequently disassembled one of them. Meet today’s teardown candidate, as usual, accompanied by a 0.75″ (19.1 mm) diameter U.S. penny for size comparison purposes:

This particular two-pack was sourced from Amazon’s Resale (formerly Warehouse) sub-site, therefore rationalizing the non-TP-Link sticker stuck to the top of the box:

And since, as I’d mentioned previously, I got the idea to do a comparative teardown between the HS103 and EP10 after sending back for refund the original two four-packs of the latter, I can’t say whether their hardware versions matched this device’s v1.6 ID. v1.0 and v1.8 EP10 designs have also been shipped by the company (all three with multiple firmware releases):

Inside…

and underneath a sliver of literature, along with a bit of protective foam:

is our patient:

whose sibling, I’ve already noted, is in active use:

Some as-usual overview shots to start; the EP10 has dimensions of 2.36 x 1.50 x 1.21 in (60 x 38 x 33 mm) and weighs 0.13 lb (59 g) versus its slightly heftier HS103 predecessor at 2.62 x 1.57 x 1.5 in (66.5 x 40 x 38 mm) and 0.25 lb (113 g):

The LED-augmented on/off, pairing and reset switch is on the left side this time:

Theoretically, at least, the visible presence of a screw head implies a potentially simpler disassembly process as compared to the HS103 of the past. We shall see…

Once again, there’s a seam-inclusive topside, suggestive of the pathway inside:

And, last but not least, the bottom-side stamped specification suite, including the always-insightful FCC ID (2AXJ4EP10):

Speaking of pathways inside, let’s take the first step in the journey, shall we?

I wish I could say the two halves of the case then separated straightaway…but that’d be a lie:

Still, the mission was eventually accomplished, this time with an added bonus: no blood loss!

This YouTuber’s video (which, although it claims to be of an HS103, is actually of an EP10; note the switch location, along with glimpses of the bottom-side markings) bolsters my opinion as to the device’s lingering disassembly difficulty. Alas, I didn’t come across it until afterwards:

Comparatively boring front half first:

including a closeup of the left-side mechanical switch’s translucent insides:

Now for the (rear) half I suspect you all mostly care about:

The relay on the right side is, at least in my v1.6 hardware version of the design, a Hongfa HF32FV-16, the exact same component I found a month back in my HS103 teardown:

However, the one in the video I just showed you, complete with a convenient “v1.8” hardware version sticker atop it, is blue in color, therefore presumably from a different manufacturer. So is the one shown in the FCC certification internal photos, which is sticker-less, but which I’m assuming reflects the initial v1.0 hardware design. And now for the other end, containing the digital and RF (control and wireless communications) sections, which interest me most, both in an absolute sense and functionally relative to the HS103 predecessor:

Once again, there’s the on/off, pairing, and reset switch, this time right next to the LED, and with both now surrounded by the previously encountered LED-only light leak-preventing foam. The embedded antenna runs along the PCB’s right edge. And the “brains” of the operation at the end of the antenna are seemingly also the same as in the HS103: Realtek’s RTL8710, which, as I noted before, supports a complete TCP/IP “stack” and integrates a 166 MHz Arm Cortex M3 processor core, 512 Kbytes of RAM, and 1 Mbyte of flash memory. The only differences, perhaps reflective of a silicon revision, are in the IC’s bottom two marking lines. The IC in the HS103 says:

08F01H3

G038A2

while the Realtek RTL8710 in the EP10 design is marked as follows for the 2nd and 3rd lines:

08EL0C1

G031A2

Alas, and as with the HS103 precursor, I was unsuccessful in my attempt to free the EP10’s PCB from the rear-half case within which it was ensconced. I’ll alternatively attempt to pacify your curiosity by first pointing out that a scattering-of-passive PCB backside image is included in the FCC certification internal photo set. And I’ll also point you toward another video, this one also showing both PCB sides but also more broadly of interest to me (and you as well, I suspect):

I found it within a Reddit post I stumbled across while doing my initial research. The OP (original poster, for those of you not yet familiar with frequently used Reddit verbiage) had an EP10 whose relay had developed perpetually clicking behavior. Turns out one of the “can” capacitors on the board had gone bad; replacing it restored normal functionality (not to mention ending the din). Note that the relay in the version of the hardware shown in this video (which I think also says v1.8, although the video-frame images aren’t clear) is also blue in color.

(Not-) working theories

This internal information is all well and good, I hope you agree, but it still doesn’t answer my fundamental question: why was I successful in using only a subset of the EP10s I tried setting up? I’ll first reiterate something I said in my initial December 2025 coverage:

I wondered if these particular smart plugs, which, like their seemingly more reliable HS103 precursors, are 2.4 GHz Wi-Fi-only, were somehow getting confused by one or more of the several relatively unique quirks of my Google Nest Wifi wireless network:

- The 2.4 GHz and 5 GHz Wi-Fi SSIDs broadcast by any node are the same name, and

- Being a mesh configuration, all nodes (both stronger-signal nearby and weaker, more distant, to which clients sometimes connect instead) also have the exact same SSID.

If I was right, the issue might have been caused by an EP10 software shortcoming, which a newer version of the firmware could conceivably resolve. But this leads to a chicken-and-egg situation. Downloading and installing the latest firmware to the device requires that I first connect the EP10 to TP-Link’s “cloud” firmware repository via my smartphone intermediary. But absent a sufficiently functional initial firmware version, I can’t get the device online in the first place. To wit, note that the TP-Link devices’ lack of Bluetooth support precludes using this alternative wireless communications interface to get them updated; it’s Wi-Fi or nothing.

A fundamental hardware limitation is also a possibility, of course. Via both documented and pictorial evidence, I’m aware (as, now, are you as well) of at least three different hardware versions of the EP10. For that matter, TP-Link’s website currently lists six different hardware versions of the HS103 “in the wild”, ranging from v1.0 to v5.8. All five of the HS103s currently active in my home are v5 units, the Kasa app conveniently tells me via the Device Info screen in each device’s advanced settings. Again, the sample sizes are small and therefore statistically suspect: did I just get lucky with the HS103s, and unlucky with the first two batches of EP10s?

With that, I’ll wrap up and refer you to the comments section below for any answers you might be willing to publicly posit for my closing questions, and/or any other thoughts you might have! Stay tuned, as I alluded to earlier both in this post and a prior one in the series, for additional teardowns to come of products from both TP-Link’s Kasa and Tapo smart plug families, along with other, potentially even more interesting, smart home ecosystem devices.

—Brian Dipert is the Principal at Sierra Media and a former technical editor at EDN Magazine, where he still regularly contributes as a freelancer.

Related Content

- Tapo or Kasa: Which TP-Link ecosystem best suits ya?

- How smart plugs can make your life easier and safer

- Teardown: Smart plug adds energy consumption monitoring

- Teardown: A Wi-Fi smart plug for home automation

- Teardown: Smart switch provides Bluetooth power control

The post TP-Link’s Kasa EP10: If at first it doesn’t connect, buy, buy again appeared first on EDN.

❤️ KPI Chess Championship!

Registration is open for the grandest university tournament in many years. The first competitions take place on 10 March. Hurry to take part in the KPI Chess Championship!

BluGlass enters AUS$1.25m development program with US tier-1 defence prime for visible GaN DFB lasers and gain chips

R&S’s next-generation Wi-Fi 8 access point testing in collaboration with NETGEAR

NETGEAR has selected the CMP180 radio communication tester from Rohde & Schwarz for the development of future Wi-Fi 8 access points. By integrating the tester into their design validation test environment, NETGEAR will be able to speed up the development of performance-optimised Wi-Fi products.

Rohde & Schwarz, a leading supplier of test and measurement equipment for wireless applications, and NETGEAR, manufacturer of advanced networking technologies and leading-edge Wi-Fi 7 products, collaborate to get the next generation Wi-Fi 8 products ready for the market.

Wi-Fi 8 is the next generation of Wi-Fi, based on the upcoming IEEE 802.11bn standard. With its focus on ultra-high reliability (UHR) WLAN, this new technology will improve the wireless user experience in homes, offices and factories: high-speed connectivity under all conditions with low latency for gaming, learning and working applications, which will use augmented reality (AR) and virtual reality (VR) to provide an immersive user experience.

Design validation of Wi-Fi 8 access points requires test solutions which support the latest Wi-Fi 8 features like distributed resource units (DRU) or unequal modulation (UEQM) with up to 320 MHz wide channels in all supported bands and highest modulation schemes (4096QAM), while providing the performance (EVM), and scalability (4×4 MIMO) required to optimize the wireless device performance.

Rohde & Schwarz provides NETGEAR with the CMP180 radio communication tester, a future-proof non-signalling testing solution for wireless devices, which can be used in research, development, validation and production. It supports many cellular and non-cellular technologies, including the latest Wi-Fi 6E, Wi-Fi 7, Wi-Fi 8 and 5G NR FR1 in frequencies up to 8 GHz and bandwidths of up to 500 MHz.

The CMP180 comes equipped with two analysers, two generators and two times eight RF ports in a single box, plus the possibility to scale up by stacking several testers. This makes it a cost-efficient test solution with best-in-class performance, addressing current and future test demands.

While its fast multi-DUT testing capabilities make the CMP180 ideal for testing in mass-production test environments, test engineers can use the instrument throughout the entire development cycle: from engineering validation tests (EVT), design validation tests (DVT) and production validation tests (PVT) to mass production (MP).

Joseph Emmanuel, VP, Consumer Business Unit HW Engineering at NETGEAR, says: “Working with Rohde & Schwarz enables us to bring our Wi-Fi 8 products on the market with the expected high quality and extremely high performance for the best multi-gigabit Wi-Fi experience everywhere at home.”

Goce Talaganov, Vice President Mobile Radio Testers at Rohde & Schwarz, says: “We are grateful for the close collaboration with NETGEAR on the latest Wi-Fi 8 technology. Our experience in wireless device testing and early cooperation with Wi-Fi 8 chipset and device vendors helped us to improve our test solution for the upcoming broad Wi-Fi 8 market.”

The post R&S’s next-generation Wi-Fi 8 access point testing in collaboration with NETGEAR appeared first on ELE Times.

Smartphone production grows 2.5% to 1.25 billion units in 2025

Redefining Precision: How CNC Robotics is Transforming Machining with SINUMERIK Machine Tool Robot

Courtesy: Siemens

Walk into any modern factory, and you’ll meet robots: palletising, tending, loading, unloading. Useful? Absolutely. But propose using them for more advanced tasks, like machining steel to tight tolerances, and you’ll hear the old refrain from textbooks and lectures: “Robots aren’t rigid enough.” That was then. Today, CNC robots narrow the gap between the agility of industrial robots and the precision of machine tools. And at the centre of that shift is the SINUMERIK Machine Tool Robot (MTR) – the first robot we can confidently call a machining asset, with steel milling capabilities and more, not just an automation helper.

In this article, you can find the following three things:

- Learn what “CNC robotics” means and what robots do on the shopfloor today.

- Understand why the SINUMERIK Machine Tool Robot is different.

- Zoom out to CNC Robotics as a whole and the practical benefits you can expect.

Along the way, we will challenge a couple of comfortable assumptions in the industry. Consider it an invitation to rethink what a robot can be used for and where it unlocks new automation potential.

CNC Robotics: From “Good at Handling” to “Great at Machining”

For years, robots excelled at tasks with low process forces, such as handling, assembly, welding, or laser cutting. They’re flexible, they have reach, and they integrate well around machines. But whenever we crossed the line into machining, conventional robot mechanics and their controls hit a wall: Insufficient stiffness and path accuracy under load, slow machining, and vibrations. That reality entrenched a mindset: “Let machines machine, let robots move things.”

SINUMERIK CNC robotics aims to break that model by putting CNC-grade motion control and digital workflows into the robot’s core. With SINUMERIK, that means:

- A control concept that treats the robot like a machine tool, not a black‑box auxiliary.

- Integration into the SINUMERIK ONE CNC environment (including a digital twin for simulation and validation before the first cut).

- A solution family that spans from simple connections for handling through to full high‑precision motion control of machines using robot kinematics, meeting you where you are on the automation journey.

“If robots still strike you as unsuitable for high‑precision tasks, the latest developments may surprise you.”

Meet the SINUMERIK Machine Tool Robot: a Robot That Machines Like a Machine

At the core of the story is this: Siemens developed the SINUMERIK Machine Tool Robot (MTR) technology, combining the agility of a 6‑axis robot with the precision of a CNC machine tool. So how did we achieve that?

- Machine‑tool‑grade control: The MTR is controlled by SINUMERIK ONE, Siemens’ digital‑native CNC. It lets a robot inherit machine‑tool behaviours for high-precision path tasks.

- Measured gains: Compared to conventional industrial robots, users can expect over 200% higher path accuracy and significantly higher dynamic stiffness. That’s the difference between “good enough for trimming” and “great even for steel.”

- Real productivity: The new control concept delivers 20–40% productivity increases, which is also compelling in non‑process-force path processes (laser, waterjet) where speed and path smoothness dominate.

Now, let’s add something from the shopfloor perspective that we don’t say often enough: the user experience is as critical as the physics of the process. With SINUMERIK ONE, the digital twin lets you verify programs, validate reach and sequence, and fine-tune before you ever stop the line, all with existing machine tool programming know-how. Commissioning becomes a digital problem first, a hardware problem second – and that’s a non-trivial cultural shift.

Recognition Matters: Innovators of the Year

Breakthroughs like this don’t exist in a vacuum. The hybrid‑drive system, developed together with Fraunhofer Institute for Manufacturing Technology and Advanced Materials and Siemens colleagues, was recognised with the Siemens “Inventor of the Year” award.

“Swiss Army Knife” Machining – Brought to Life by Hybrid Drive Innovation

A core complaint against machining with robots has been stiffness under process forces, especially in heavy-duty machining of steel or tough alloys. Here’s where an innovative hybrid drive concept changes the picture.

- By combining the strengths of direct motors (precision, speed) and geared motors (robustness, power), the hybrid approach delivers both sensitivity and muscle.

- Robots equipped this way stay stable and have low vibration at high feed rates, even under strong process‑force excitation, approaching the precision and dynamics of classic machine tools.

- The result is a robot that genuinely evolves into the “Swiss Army knife” of manufacturing: precision machining where needed, agile flexibility everywhere else, with a smaller overall footprint.

This isn’t just a technical refinement; it affects practical operations: it can reduce floor‑space requirements and lower energy use per part.

The post Redefining Precision: How CNC Robotics is Transforming Machining with SINUMERIK Machine Tool Robot appeared first on ELE Times.

Infineon and Subaru’s collaboration improves driver safety by enhancing real-time performance in advanced driver assistance systems

Infineon Technologies AG and Subaru Corporation are collaborating to enhance driver safety, confidence and comfort in future Subaru vehicles. Infineon plays a key role in Subaru’s integrated electronic control unit (ECU) for next‑generation advanced driver assistance systems (ADAS) and vehicle motion control: Infineon’s latest AURIX microcontroller (MCU) enhances the real-time capability of this ECU compared to previous generations, supporting faster, more reliable processing of vehicle and sensor information.

“As advanced driver assistance systems become more sophisticated, reliable real-time operation across the entire system is key,” said Peter Schaefer, Executive Vice President and Chief Sales Officer, Automotive at Infineon. “With our market-leading microcontroller family AURIX, we support Subaru in building the foundation needed to deliver dependable decision-making and control across the vehicle.”

“Subaru is working on the development of an integrated electronic control unit that coordinates next‑generation EyeSight and vehicle motion control for future Subaru vehicles,” said Eiji Shibata, Executive Officer and Chief Digital Car Officer, Subaru Corporation. “Infineon’s AURIX MCU is a core technology that will support robust sensor data fusion and real‑time control within this integrated ECU, and is a key element enabling the evolution of next‑generation ADAS and vehicle motion control. We have built a strong relationship of trust with Infineon over many years and have collaborated from the early stages of development to optimise the design of the AURIX MCU. We value this trusted partnership and look forward to Infineon’s next‑generation MCU.”

In the integrated ECU, Subaru leverages Infineon’s most advanced automotive MCU – AURIX TC4x – to strengthen computing and in-vehicle networking. AURIX TC4x will serve as the main controller for next-generation ADAS functionality controlled by the ECU. In real-time, it enables sensor data fusion as well as decision-making and control, by utilising inputs from camera, radar and other sensors – delivering faster and more reliable driver assistance functions. TC4x combines up to six cores at 500 MHz in lockstep operation and automotive functional safety up to ASIL-D.

Infineon and Subaru have already collaborated for Subaru’s current generation ADAS. Both companies will deepen their collaboration around in-vehicle computing and networking in the future and will drive technology development and value creation toward safer and more secure mobility.

One year ago, Infineon climbed to the number one position in the global microcontroller market, after having reached this position for automotive microcontrollers specifically already in 2023. Since then, the company has further strengthened its position by developing technology ready to meet car manufacturers’ future MCU requirements, such as paving the way for RISC-V to become the open standard for automotive MCUs. Furthermore, Infineon has strengthened its MCU-adjacent product portfolio through the acquisition of the automotive Ethernet business from Marvell in August 2025. With this move, Infineon has created the most comprehensive system offering in the industry for centralised computing architectures in software-defined vehicles.

The post Infineon and Subaru’s collaboration improves driver safety by enhancing real-time performance in advanced driver assistance systems appeared first on ELE Times.

E/E Architecture Redefined: Building Smarter, Safer, and Scalable Vehicles

The automotive industry is shifting toward a new generation of electrical and electronic architectures, moving from distributed ECUs to domain and zonal systems centered around centralized computing. This webinar covers the technical drivers behind that change and the engineering impacts on modern vehicle design.

Attendees will learn how wiring optimization, functional safety, cybersecurity, network speed, and subsystem integration influence architectural choices across the vehicle platform. Join Vishal Barde, Associate Director of Automotive Engineering at eInfochips, as he shares real-world examples of how OEMs are speeding up their move to scalable, software-ready architectures. Engineers and system architects will leave with useful insights they can apply to current and future projects.

Equip yourself with the architectural blueprints needed to lead the shift toward software-defined vehicles.

Access the webinar here!

The post E/E Architecture Redefined: Building Smarter, Safer, and Scalable Vehicles appeared first on ELE Times.

Designing LIDAR on a Chip: A Multiphysics Simulation Workflow for Integrated Photonics

Courtesy: Keysight

Introduction

LIDAR (Light Detection and Ranging) has become a cornerstone technology for autonomous vehicles, enabling high-resolution spatial mapping and object detection. As the industry pushes toward scalable, cost-effective solutions, LIDAR on a chip has emerged as a compelling alternative to traditional mechanical systems. Its advantages—compactness, robustness, and the absence of moving parts—make it an excellent candidate for large volume manufacturing.

However, achieving a commercially viable on-chip LIDAR requires careful optimisation. Designers must minimise insertion loss, maximise output optical power, broaden beam steering range, and narrow the emitted beam. To meet these challenges, reliable and specialised photonic simulation tools are essential for reducing development cycles and ensuring high-performance designs.

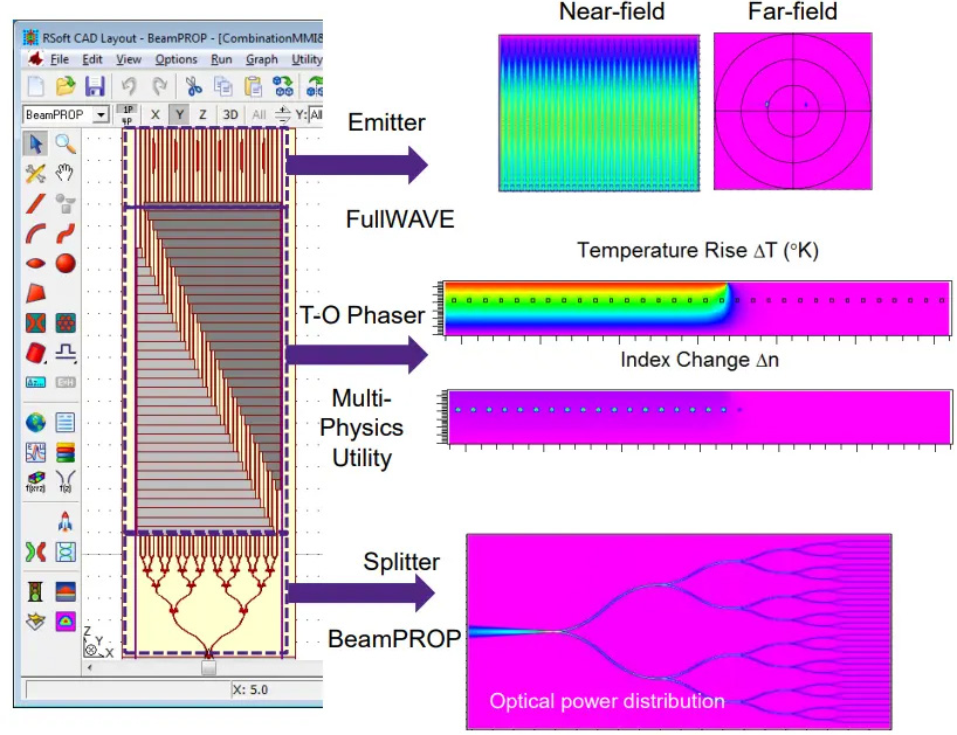

Overall Design and Simulation Strategy

To efficiently design a LIDAR-on-chip system, the device is decomposed into functional blocks, each simulated using the most appropriate tool from the RSoft Photonic Device Tools suite:

Cascaded 1×32 splitter: BeamPROP BPM

BPM is ideal for 1×2 splitters due to little backward reflection and suitability for slowly varying structures.

Thermo-optical phase shifter: BeamPROP BPM + Multiphysics Utility

BPM handles optical propagation, while the Multiphysics Utility computes temperature-dependent refractive index perturbations.

Emitter (grating antenna array): FullWAVE FDTD

FDTD (Finite Difference Time Domain) is required for omnidirectional light propagation and accurate grating coupler modelling.

This modular approach ensures each component is optimised using the most accurate and computationally efficient method available.

Step-by-Step Design of Individual Components

Power Splitter

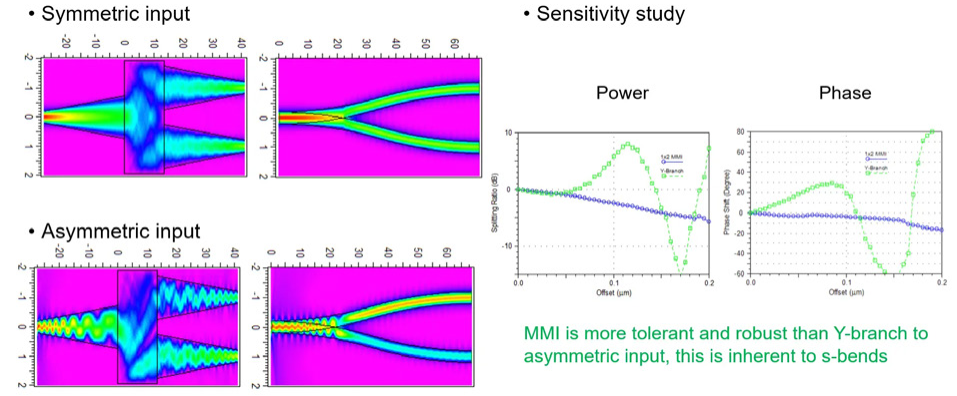

A splitter tree is constructed using cascaded 1×2 splitters—either MMI or Y‑branch designs.

1×2 MMI splitters

- Low insertion loss (~0.3 dB)

- Robust to asymmetric input

- More complex to design

- Wavelength sensitive, limited bandwidth, polarisation dependent

Y‑branch splitters

- Simple geometry (two S‑bends)

- Broadband and polarisation independent

- Higher insertion loss (~2 dB)

- Less tolerant to asymmetric input

Both structures are well-suited to BeamPROP BPM, which solves one-way wave equations under assumptions of slow structural variation and monochromatic excitation.

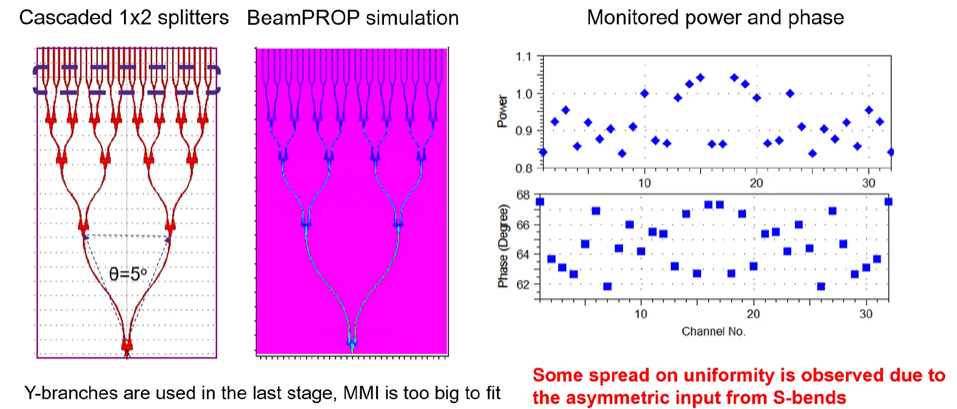

After optimising width and length using 2.5D (2D‑EIM) BPM, sensitivity analyses were performed for symmetric and asymmetric inputs. The final 1×32 splitter tree uses four levels of 1×2 MMIs, followed by a fifth level of Y‑branches where MMIs become too large to fit the remaining layout area.
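As a rough cross-check of the tree described above, the loss budget can be sketched in a few lines of Python. This is only an illustration: it takes the approximate per-splitter losses quoted in the lists above (~0.3 dB for an MMI, ~2 dB for a Y-branch) at face value and assumes they simply add in dB along one path; the function and variable names are hypothetical and are not part of the RSoft tools.

```python
import math

def splitter_tree_budget(n_outputs, level_losses_db):
    """Return (ideal_split_db, excess_db, total_db) for a binary splitter tree.

    level_losses_db holds the excess insertion loss of one 1x2 splitter at
    each level, ordered from the input towards the outputs.
    """
    levels = n_outputs.bit_length() - 1
    if n_outputs < 2 or (1 << levels) != n_outputs:
        raise ValueError("n_outputs must be a power of two")
    if len(level_losses_db) != levels:
        raise ValueError("need exactly one loss value per level")
    # Ideal power division: each output receives 1/n_outputs of the input.
    ideal_split_db = 10 * math.log10(n_outputs)
    # Assumption: excess losses of the cascaded stages add in dB.
    excess_db = sum(level_losses_db)
    return ideal_split_db, excess_db, ideal_split_db + excess_db

MMI_LOSS_DB = 0.3       # approximate figure quoted in the text
Y_BRANCH_LOSS_DB = 2.0  # approximate figure quoted in the text

# Four MMI levels followed by one Y-branch level gives 2**5 = 32 outputs.
losses = [MMI_LOSS_DB] * 4 + [Y_BRANCH_LOSS_DB]
ideal, excess, total = splitter_tree_budget(32, losses)
print(f"ideal split {ideal:.2f} dB, excess {excess:.2f} dB, total {total:.2f} dB")
```

Under these assumptions the single Y-branch level contributes more excess loss than the four MMI levels combined, which is why the sketch keeps the per-level losses as a list rather than a single number.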

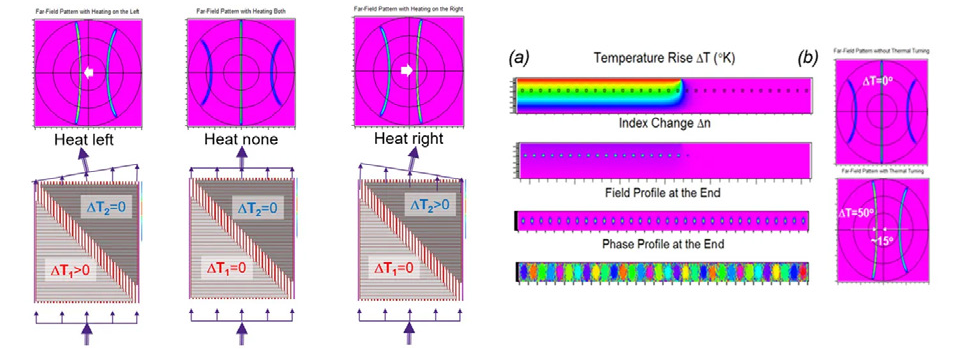

Thermo-Optical Phase Shifter

Silicon’s strong thermal sensitivity (dn/dT = 0.00024/K) enables phase tuning by heating waveguide arrays. Unequal heating introduces phase delays between channels, steering the output beam.

The workflow:

- The thermal diffusion equation is solved to obtain the temperature distribution.

- The temperature profile is converted into a refractive index perturbation.

- BPM simulates optical propagation to compute amplitude and phase at the output.

- Far‑field analysis reveals the resulting beam steering.
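The second and third steps can be approximated by hand for a sanity check. The sketch below is a simplification, not the Multiphysics Utility's method: it assumes a waveguide section heated uniformly along its length, treats the change in effective index as equal to the change in the silicon material index, and uses the dn/dT value quoted above. The heated length and the 1.55 µm wavelength are assumptions, since the text does not state them.

```python
DN_DT_SILICON = 2.4e-4  # per kelvin, the value quoted in the text

def index_change(delta_t_k, dn_dt=DN_DT_SILICON):
    """Refractive index perturbation for a uniform temperature rise."""
    return dn_dt * delta_t_k

def phase_shift_deg(delta_t_k, heated_length_um, wavelength_um):
    """Extra optical phase, in degrees, picked up over the heated length."""
    return 360.0 * index_change(delta_t_k) * heated_length_um / wavelength_um

def length_for_phase_um(target_deg, delta_t_k, wavelength_um):
    """Heated length needed to reach a target phase shift."""
    return target_deg / 360.0 * wavelength_um / index_change(delta_t_k)

WAVELENGTH_UM = 1.55  # assumed operating wavelength, not given in the text

delta_n = index_change(50.0)
length = length_for_phase_um(120.0, 50.0, WAVELENGTH_UM)
print(f"delta n = {delta_n:.4f}, heated length for 120 deg = {length:.1f} um")
```

In a real device the temperature varies across and along the waveguides, which is why the workflow solves the thermal diffusion equation instead of assuming a uniform rise.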

For a temperature change of ΔT = 50 °C, the phase difference between adjacent waveguides is 120°. BPM predicts a steering angle of 15°, matching the theoretical value:
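The expression itself is not reproduced here. For reference, the standard relation for a uniform one-dimensional phased array, which is presumably the one meant, is:

$$
\sin\theta = \frac{\lambda\,\Delta\varphi}{2\pi\,d}
$$

where $\theta$ is the steering angle, $\lambda$ the free-space wavelength, $\Delta\varphi$ the phase difference between adjacent emitters, and $d$ the emitter pitch. With $\Delta\varphi = 120^\circ = 2\pi/3$ this reduces to $\sin\theta = \lambda/(3d)$, so a steering angle of $15^\circ$ would correspond to a pitch of roughly $1.29\,\lambda$. The wavelength and pitch are not stated in the text, so that ratio is an inference rather than a reported design value.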

Emitting Gratings

To efficiently outcouple light (orthogonally) with minimal divergence, the grating must be properly apodized. FDTD optimisation yields an optimal tapered-width grating profile, normalised to the grating length (Fig. 5).

The post Designing LIDAR on a Chip: A Multiphysics Simulation Workflow for Integrated Photonics appeared first on ELE Times.

Most of us started here

submitted by /u/hakh-ti-cxamen

Mastering EDA Tools: How India is Upskilling 85,000 Engineers for the Global Chip Race

The Government of India’s ‘Chips to Startups’ (C2S) programme, under the India Semiconductor Mission, has made tremendous progress, completing training for 85,000 engineers in semiconductor design over the past decade. Students have been trained in 315 academic institutions as part of the current chip design training programme.

Union Minister for Electronics and IT, Ashwini Vaishnaw, highlighted how the programme has given students experience with world-class EDA tools from vendors such as Synopsys, Cadence, Renesas, AMD, Ansys, and Siemens. Working with these tools gives students the hands-on experience they will need when they step into the industry. So far, the ministry claims to have recorded more than 1.85 crore hours of EDA tool usage for chip design training.

The training under this programme comprises a holistic experience from design and fabrication to packaging and testing. The chips designed by students are tested at Mohali’s Semiconductor Laboratory. This allows them to understand the complete semiconductor development cycle.

Students from across the country are taking this training, and the government aims to expand this programme under the second edition of the India Semiconductor Mission by raising the number of affiliated institutions from 315 to 500.

As the electronics industry is expected to grow manifold in the coming years, the need for a skilled workforce is bound to rise sharply, too. Hence, upskilling and thorough training programmes are important to keep India at the forefront of this global race.

By: Shreya Bansal | Sub-Editor

The post Mastering EDA Tools: How India is Upskilling 85,000 Engineers for the Global Chip Race appeared first on ELE Times.

Skyworks and MediaTek demo early 6G FR3 and PC1 RF front-end innovations at MWC

Analog IC longevity is an underappreciated reality

I recently saw an announcement from a major IC vendor, posted in September 2025, letting users know that “STMicroelectronics sets 20-year availability for popular automotive microcontrollers.” The news is that ST was committed to maintaining the cited parts for 20 years instead of their present 15-year assurance.

“Good for them” was my first thought, as that’s the right thing to do for both their OEM and actual vehicle customers. After all, with the average age of cars on the road in the United States approaching 15 years and with little sign of slowing down or even leveling off, that makes sense.

There are two presumed reasons for the longer lifetime. First, cars are built better; the “rust-bucket” and “fall apart” tendencies of many of those pre-1980/90 cars have greatly diminished due to better design, materials, paints, tests, and processes. Second, the cost of a new car is so high that even costly repairs make sense for many.

Ironically, those less reliable, mostly mechanical cars did have one major virtue: they were repairable then and can generally be repaired/restored even today. Many of their old parts are available via specialty sources either as “new old stock” (NOS) or slightly used. And those that can’t be sourced can be machined or 3D printed if the owner has time and resources.

The issue is not limited solely to cars; unavailable mechanical assemblies are a very different case than electronic ones. In 2022, a team at Verisurf was contracted by the U.S. Air Force to reverse engineer and recreate a 300-piece “throttle quadrant” from the E-3 Airborne Early Warning and Control System (AWACS), by disassembling an existing unit piece-by-piece (Figure 1). See “Reverse Engineering the Boeing E-3 Sentry’s Secondary Flight Controls”.

Figure 1 This throttle quadrant from an E-3 AWACS radar aircraft was recreated via precise piece-by-piece measurement and fabrication of each of its 300 pieces. Source: Verisurf

They used a combination of tools, including basic calipers, advanced metrology systems, CAD/CAM software, close-up photographs, and more to capture and then recreate this control unit to tolerances of better than 0.005 inches.

For the computers-on-wheels electronics of today’s cars, it’s a very different reality. Will you be able to get an engine control module, or one of the other hundred or so MCU-based modules, even 15 years from now? I’m betting the answer is “no” or “very unlikely,” but we’ll have to wait and see how that story unfolds.

The issue of unavailable parts is not limited solely to automobiles, although that is the largest and most visible application. Unlike most consumer products, there are many areas where useful lives of 20, 30, and more years are expected. Among these are industrial applications, railways, mil/aero, critical infrastructure, and even some home systems such as HVACs.

The challenge of replacement parts and their relatively low volume is not being ignored, as the ST announcement shows. The U.S. Defense Microelectronics Activity (DMEA) has instituted an Advanced Technology Support Program V (ATSP V) with 13 companies that, among other objectives, includes approaches to developing and creating components in ultra-low volumes for repair and replacement.

What about “analog”?

With all these legitimate concerns about long-term component availability, one fact does stand out: unlike the world of digital ICs and processors, the analog world has a different mindset. Analog-circuit designers tend to stick with a component that they have used successfully, even if it’s a few years old and could easily be replaced by a nominally better part.

There are several reasons for this tactic. Once an analog part is in the signal chain and meeting specs, there’s a reluctance to take a chance on a new part and design, which may have unknown issues and idiosyncrasies. Factors such as parasitics, layout, and power-supply sensitivity (to cite a few) will likely affect design validation, in contrast to the field experience with the existing design.

There are classic analog parts that have been available for decades, and while not recommended for new designs, they are still available if needed for repair, replacement, or even a newer design. Even better, if they are not available, there is often a drop-in replacement with superior performance; this is especially the case for basic 8-pin op amps.

I can think of three “ancient” analog components as examples:

- The AD574 “complete” 12-bit A/D converter from Analog Devices, introduced between 1978 and 1980, became the industry-standard ADC for microprocessor interfacing (Figure 2). It was notable for integrating a buried Zener reference, a clock, and 3-state output buffers for direct 8/16-bit bus interfacing. While its die and process have been upgraded and it’s now available in other packages, you can still get it in the original 28-pin housing.

Figure 2 The 12-bit ADC was the first complete unit with “tight” specifications and is still offered 45 years after its initial release. Source: Analog Devices

- The INA133 instrumentation amplifier from Burr-Brown was introduced around 1998 (Burr-Brown was acquired by Texas Instruments in 2000), and it’s still offered in a variety of packages and grades by TI (Figure 3). Like the AD574, it’s not recommended for new designs; you can see its top-tier specifications on page 40 of the 2000 Burr-Brown Product Selection Guide.

Figure 3 Burr-Brown’s INA133 instrumentation amplifier provided excellent performance with modest power requirements and has been continuously available since its introduction in 1998. Source: Texas Instruments

- Finally, we can’t overlook the 555 timer-oscillator-multivibrator, a clear contender as one of the most classic components of all time and, along with the 741 op amp, one of the longest-lived (Figure 4). Devised by Hans Camenzind and marketed as an 8-pin DIP by Signetics in 1971, it’s still available in many versions, including duals and quads as well as CMOS variations. Despite its age, it’s often used to solve annoying timing and oscillator problems at low cost, and there are many “cookbooks” showing innovative ways in which it can be used.

Figure 4 It’s very likely that no IC has spawned more creative and clever design ideas and handbooks and solved as many circuit problems as the 555 timer-oscillator-multivibrator. Source: Wikipedia

There are others, of course, such as the 60-year-old 2N3904 or 2N2222 transistors; it doesn’t get more basic than that.

While many analog components have a long and viable life with their original or descendant vendors, there is even a solution for the many cases where that source does not want to manufacture or support that IC forever. Companies such as Rochester Electronics work out a formal arrangement and license to take over the rights, tooling, support, and test procedures for the parts. Users who need the part don’t need to consider grey-market or even counterfeit products; instead, they get ICs that are 100% legitimate but come via a different supplier.

ST’s announcement is welcome, of course. I wish that more vendors would make that sort of commitment, difficult as it may be, or at least commit to licensing unwanted products to non-competing vendors. For now, if you want long-term continuity, stick with analog parts as much as possible.

Have you ever had to deal with repairing a product having electronic components that were no longer available, or even doing regular production on a long-lived product where you needed more than just a few? Did you find parts, or did you have to do a full redesign? How painful was that process?

Related Content

- Go offline, and crack open a design book

- 2N3904: Why use a 60-year-old transistor?

- Vacuum tubes are dead; long live vacuum tubes

- Thermal printers: should be obsolete, but still going strong

- Appliance schematic, wiring diagram bring troubleshooting joy

The post Analog IC longevity is an underappreciated reality appeared first on EDN.

From 10 to 1000 TOPS: Why Automotive Chips Need a New Architecture

Speaking at the Auto EV Tech Vision Summit 2025, Namrta Sharma, Technical Director at Aritrak Technologies, highlighted how chiplet architectures are emerging as a crucial enabler for the next generation of automotive semiconductors. As vehicles transition toward software-defined platforms, Sharma emphasized that semiconductor design must evolve to meet unprecedented computational demands while balancing cost, scalability, and time-to-market.

Setting the context, Sharma described the ongoing transformation in the automotive sector. “We should all agree that we are actually going through a metamorphosis,” she said, adding that the automotive industry is currently experiencing “a big transformation of the century.” Vehicles are no longer defined solely by mechanical and electrical components. Instead, the modern car is increasingly becoming a software-driven platform.

Computational Requirements on the Rise in Automotive

“The car is no longer just mechanical and electrical,” Sharma noted. “The car is a software-defined vehicle. So, the role of the semiconductors in the car is also changing. It is no longer just adding to some features. It actually defines the car. It is the core intelligence of the car.” This shift is dramatically expanding the semiconductor requirements inside vehicles. Modern automotive electronics must support electrification, battery management, in-vehicle infotainment, constant connectivity, and advanced driver assistance systems (ADAS), while also laying the groundwork for autonomous driving.

Sharma highlighted how the computational requirements behind these capabilities have grown exponentially. “If you see the numbers for the compute, Level-2 ADAS required just 10 TOPS, which is 10 trillion operations per second, which was just a decade ago,” she explained. “But now the requirement is about 1,000 TOPS for full autonomy. So it is like a 100x gap.”

Limitations at Play

Meeting such performance requirements using conventional monolithic chip design is becoming increasingly difficult. Integrating CPUs, GPUs, communication circuits, and power management components into a single system-on-chip (SoC) results in extremely large semiconductor dies. However, manufacturing such large chips is constrained by physical and economic limits. “There is a limit. It is called the reticle limit,” Sharma explained. “That is the biggest size that we can manufacture in a foundry, and it is about 850 mm² as of now.”

Large monolithic chips also face yield challenges. A defect in even a small portion of a large die can render the entire chip unusable, leading to significant losses in manufacturing yield and rising costs. To address these issues, the semiconductor industry has increasingly turned to chiplet architectures. “The solution was simple,” Sharma said. “Just cut this big die into multiple dies. These are all small functioning blocks, and these are called chiplets.”

Chiplets: A Yield Optimisation Solution

By breaking down a large chip into smaller modular dies, chiplet architectures help overcome reticle limitations and improve yield. If a defect occurs, only the affected chiplet is discarded rather than the entire system. This modular approach has already gained traction in high-performance computing systems and is now finding relevance in automotive electronics.
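The yield argument can be made concrete with the simple Poisson yield model, in which the fraction of defect-free dies is exp(-A·D0) for die area A and defect density D0. The sketch below is an illustration only: the defect density, the 800 mm² total area, and the four-way split are assumed values, not figures from the talk, and the model ignores packaging cost, die-to-die interface area, and assembly yield. It also assumes every chiplet is tested before assembly, so that only good dies are packaged.

```python
import math

def poisson_yield(die_area_mm2, defects_per_cm2):
    """Fraction of defect-free dies under the simple Poisson yield model."""
    area_cm2 = die_area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)

def silicon_per_good_system_mm2(total_area_mm2, n_dies, defects_per_cm2):
    """Average wafer area consumed per working system built from n equal dies.

    Assumes dies are tested individually and only good dies are assembled.
    """
    die_area = total_area_mm2 / n_dies
    return n_dies * die_area / poisson_yield(die_area, defects_per_cm2)

D0 = 0.1              # defects per cm^2, illustrative assumption
TOTAL_AREA_MM2 = 800  # illustrative, chosen to sit just under the reticle limit

monolithic = silicon_per_good_system_mm2(TOTAL_AREA_MM2, 1, D0)
chiplets = silicon_per_good_system_mm2(TOTAL_AREA_MM2, 4, D0)
print(f"monolithic: {monolithic:.0f} mm^2 of silicon per good system")
print(f"4 chiplets: {chiplets:.0f} mm^2 of silicon per good system")
```

Because yield falls exponentially with die area in this model, splitting the same logic across smaller dies always reduces the silicon spent per working system; how much of that saving survives packaging and test costs is outside what the sketch captures.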

Sharma also pointed out that chiplets enable a shift toward heterogeneous integration. Instead of manufacturing every component using the most advanced—and expensive—process node, different chiplets can be fabricated using technology nodes optimized for their specific functions. “For example, the CPU needs the highest compute, so it is in the latest technology node,” she explained. “But the other things, like sensors or memory engines, need not be.”

Chiplets Optimise Time to Market

Beyond cost and yield advantages, chiplets significantly improve time-to-market. Sharma emphasized that modular architectures allow semiconductor companies to reuse proven components and focus their efforts on product differentiation. “You need not design the whole chip, you need not design the whole SoC,” she said. “You can just design your differentiating chiplet.” This flexibility also allows manufacturers to scale systems quickly for different vehicle segments.

Performance levels can be adjusted simply by replacing or modifying individual chiplets, enabling rapid customization without redesigning the entire architecture. However, Sharma cautioned that the transition to chiplet-based systems introduces new challenges. With multiple chiplets integrated into a single package, design complexity shifts from the chip level to the system level. This requires advanced electronic design automation (EDA) tools capable of co-optimizing silicon, packaging, and interconnect technologies.

Testing & Standards

With this, testing and validation also become more complex. The success of chiplet integration depends on ensuring that each component integrated into the system is a “known good die.” As Sharma noted, this requires new testing methodologies and infrastructure capable of validating chiplets both individually and within the larger system.

Another key factor in the long-term success of chiplets is the development of industry-wide standards. Sharma highlighted the importance of emerging interconnect standards such as Universal Chiplet Interconnect Express (UCIe), which aim to enable interoperability between chiplets from different vendors. As the ecosystem evolves, Sharma believes collaboration across the semiconductor value chain will play a critical role. Foundries, design houses, EDA companies, substrate providers, and industry consortia are already working together to establish the standards and infrastructure needed to support chiplet-based systems.

Conclusion

Summarizing her key message, Sharma emphasized that chiplet architectures are not just about cost optimization. Instead, they represent a fundamental shift in how semiconductor systems are designed for rapidly evolving markets like automotive. “Chiplet heterogeneous integration provides not only the cost benefit,” she concluded, “it also provides the speed of execution—the speed to make changes fast and react to innovation.”

As automotive electronics continue to grow in complexity and performance requirements, chiplets may well become the architectural foundation enabling the next wave of innovation in software-defined vehicles.

The post From 10 to 1000 TOPS: Why Automotive Chips Need a New Architecture appeared first on ELE Times.