ELE Times

Why Frugal engineering is a critical aspect for advanced materials in 2026

by Vijay Bolloju, Director R&D, iVP Semiconductor

The widespread electrification of everything is pushing the boundaries of power electronics systems. The demand for higher power density and lower weight necessitates the use of novel materials.

Representational Image

Newer-generation power semiconductor devices can operate at higher temperatures, which can increase power density and reduce the power-device cost of the system. At the same time, higher temperatures raise reliability concerns: dielectric breakdown, deformation, and increased leakage currents caused by ionic contamination of the moulding compounds. Packaging materials that operate reliably at higher temperatures are needed to exploit these devices' capabilities fully.

Recent trends also show that the operating voltages of systems such as EVs, data centres and telecom equipment are rising. Higher operating voltages demand stricter compliance measures to keep users safe.

The cost breakdown of high-power electronic systems shows that more than half of the cost comes from non-semiconductor materials: plastics used for packaging, thermal interface materials (TIM), sealing compounds, heat dissipators such as heat sinks, cooling liquids, substrates, connectors, etc.

Substrates play a major role in the thermal performance, structural stability, and reliability of the systems. FR4 PCBs have very poor thermal conductivity (0.24 W/m-K) and are commonly used in low-power systems. FR4 also has a low glass transition temperature (Tg ~130 °C), which limits the operating range of the power semiconductors. These substrates are not recommended for high-power applications.

Aluminium metal-core PCBs (MCPCBs) are also widely used for building electronic circuits. They have higher thermal conductivity (2 W/m-K) and a higher Tg than FR4, offering better mechanical stability and thermal performance. Although multi-layer MCPCBs are available, most MCPCBs are single-layer for cost reasons, which limits how compact the systems can be made.

Ceramic substrates such as alumina (Al2O3) and aluminium nitride (AlN) offer excellent thermal conductivity and mechanical stability. Alumina's thermal conductivity (24 W/m-K) is 100 times that of FR4, and aluminium nitride's (240 W/m-K) is 1,000 times higher. Ceramics also offer superior reliability and high-temperature operating capability, making them well suited to high-power systems. Like most MCPCBs, they are usually single-layer for cost reasons.
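To make the conductivity comparison concrete, the sketch below computes the one-dimensional conduction resistance R = t / (k·A) of a slab of each material, using the conductivities quoted above. The 1 mm thickness and 10 mm × 10 mm footprint are hypothetical, and treating each substrate as a uniform slab is a simplification (for an MCPCB, the quoted 2 W/m-K figure typically describes its thin dielectric layer rather than the whole board).

```python
# Thermal conductivities quoted in the article, in W/m-K.
CONDUCTIVITY = {
    "FR4": 0.24,
    "MCPCB": 2.0,
    "Alumina (Al2O3)": 24.0,
    "Aluminium nitride (AlN)": 240.0,
}


def slab_resistance(k, thickness_m, area_m2):
    """1-D conduction resistance of a uniform slab, R = t / (k * A), in K/W."""
    return thickness_m / (k * area_m2)


if __name__ == "__main__":
    # Hypothetical geometry: 1 mm thick, 10 mm x 10 mm area under one device.
    t, a = 1e-3, 10e-3 * 10e-3
    for name, k in CONDUCTIVITY.items():
        r = slab_resistance(k, t, a)
        ratio = k / CONDUCTIVITY["FR4"]
        print(f"{name:26s} R = {r:8.3f} K/W  ({ratio:6.1f}x FR4 conductivity)")
```

With these assumptions FR4 comes out at about 41.7 K/W and AlN at about 0.04 K/W, the same 1000:1 ratio as the conductivities.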

Substrate selection should be based on cost-performance criteria. Substrate cost increases in the order FR4 PCB, MCPCB, ceramic. Power-semiconductor cost, however, falls in the reverse order: better thermal conductivity lets each device dissipate more power within its temperature limit, so fewer or smaller devices are needed. System reliability also depends on the substrate, with ceramics offering the best and FR4 the least. A sensible trade-off among these factors should therefore guide the choice.

Thermal interface materials (TIMs) also have a profound effect on system performance, reliability, and cost, yet they are often overlooked. A well-chosen TIM can significantly improve the thermal performance of the system and even reduce the number of power devices needed to implement a design. TIMs also provide dielectric insulation, so an ideal TIM combines high thermal conductivity with high dielectric strength. Choosing a TIM that meets the system requirements can reduce overall system cost and size.
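The claim that a better TIM can reduce the device count can be illustrated with a steady-state series thermal model, Tj = Ta + P·(Rjc + Rtim + Rsink). In this sketch every resistance value, the 150 °C junction limit, the 50 °C ambient and the 180 W total loss are hypothetical, and each device is assumed to have its own independent thermal path; a shared heat sink would couple the devices and need a fuller model.

```python
import math


def junction_temperature(power_w, ambient_c, resistances_k_per_w):
    """Steady-state Tj = Ta + P * sum(Rth) for a series thermal path."""
    return ambient_c + power_w * sum(resistances_k_per_w)


def max_power_per_device(tj_max_c, ambient_c, resistances_k_per_w):
    """Largest dissipation that keeps the junction at or below tj_max_c."""
    return (tj_max_c - ambient_c) / sum(resistances_k_per_w)


def devices_needed(total_loss_w, tj_max_c, ambient_c, resistances_k_per_w):
    """Identical devices needed to share the total loss within the limit."""
    per_device = max_power_per_device(tj_max_c, ambient_c, resistances_k_per_w)
    return math.ceil(total_loss_w / per_device)


# Hypothetical per-device thermal path, in K/W: junction-to-case and heat sink.
R_JC, R_SINK = 0.5, 1.0
# Hypothetical TIM resistances, in K/W.
POOR_TIM, GOOD_TIM = 1.5, 0.5

if __name__ == "__main__":
    for label, r_tim in (("poor TIM", POOR_TIM), ("good TIM", GOOD_TIM)):
        n = devices_needed(180.0, 150.0, 50.0, [R_JC, r_tim, R_SINK])
        print(f"{label}: {n} devices for 180 W of loss")
```

Under these numbers, lowering the TIM resistance from 1.5 K/W to 0.5 K/W raises the allowable dissipation per device from about 33 W to 50 W, cutting the count from six devices to four.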

Choosing proper substrate materials, TIM, and heat dissipator can reduce the system cost and size considerably and lead to frugal designs.

A holistic design approach, covering the selection of power device technologies, substrates, TIMs, and heat dissipators, can yield high-performance, reliable, and lower-cost systems.

Currently, the Indian materials ecosystem is underdeveloped and needs to be revamped to help the power electronics industry achieve higher performance metrics. Plastics, substrates, TIMs, and other materials can be developed locally using advances in nano-materials, carbon compounds, engineering plastics, composite materials, etc. India has a mature ceramics industry serving the energy and medical sectors, among others, and its technologies can be adapted to make substrate materials for power electronics. Metallization of ceramic substrates to print circuits is another essential skill set to develop.

High thermal conductivity composite materials, metal foam forming, and phase change materials can elevate the thermal performance of the systems. If the system can be cooled using advanced materials without the need for a liquid cooling system, it can considerably reduce the system cost and improve the reliability of the system.

All the materials described above that can improve system performance and reliability while reducing cost (Frugal innovations) can be developed and manufactured locally. A concerted and collaborative effort is all it needs.

The post Why Frugal engineering is a critical aspect for advanced materials in 2026 appeared first on ELE Times.

India’s Semicon Programme: From Design to Packaging

Union Minister of State for Electronics and Information Technology Shri Jitin Prasada outlined India's achievements under the Semicon India Programme, which the Government launched to develop a complete semiconductor ecosystem spanning design, fabrication, assembly, testing and packaging.

The government has approved 10 units worth Rs 1.6 lakh crore to set up silicon and silicon carbide fabs and advanced packaging and memory packaging units, among others. These are expected to meet the chip requirements of multiple sectors, including consumer appliances, industrial electronics, automobiles, telecommunications, aerospace, and power electronics. The minister also noted that some of the approved projects use indigenous technology for the assembly, testing and packaging of semiconductor chips.

Additionally, the Production Linked Incentive (PLI) scheme for large-scale electronics manufacturing of mobile phones and certain specified components attracted investment of Rs 14,065 crore up to October 2025.

Design Development

On the design front, the government launched the Design Linked Incentive (DLI) scheme, which has supported 23 companies (24 designs) in designing chips and SoCs for products in satellite communication, drones, surveillance cameras, Internet of Things (IoT) devices, LED drivers, AI devices, telecom equipment, smart meters, etc. To strengthen design infrastructure, the government has also given 94 startups free access to electronic design automation (EDA) tools, enabling 47 lakh hours of tool usage.

Developing a Skilled Workforce

Recognising the importance of a skilled workforce in semiconductor manufacturing, the government has also launched several programmes and collaborations to build one. The All India Council for Technical Education (AICTE) has introduced various courses to provide technical training to students.

The government's Chips to Start-up (C2S) Programme encourages India's young engineers by providing the latest EDA design tools to 397 universities and start-ups.

A Skilled Manpower Advanced Research and Training (SMART) Lab has also been set up at NIELIT Calicut, with the aim of training 1 lakh engineers nationwide. More than 62,000 engineers have already been trained.

The India Semiconductor Mission (ISM) has also partnered with Lam Research on a large-scale training programme in nanofabrication and process-engineering skills, which will further augment the skilled workforce for ATMP (assembly, testing, marking and packaging) and advanced packaging. The programme aims to train 60,000 people over the next 10 years.

In addition, the FutureSkills PRIME programme, a collaborative initiative of MeitY and the National Association of Software and Service Companies (NASSCOM), aims to make India a cutting-edge digital talent nation.

The post India’s Semicon Programme: From Design to Packaging appeared first on ELE Times.

Caliber Launches Advanced 3-Phase Monitoring Relay for India’s Industries

Caliber Interconnect Solutions Private Limited, a global engineering and deep-tech solutions company, has introduced its latest Three Phase Monitoring Relay to address the growing need for reliable electrical protection across India's industrial, infrastructure, and renewable energy sectors.

As industries increasingly operate in environments affected by voltage fluctuations and power quality challenges, the relay is designed to deliver accurate and dependable monitoring. Built on True RMS (TRMS) measurement principles, it provides precise voltage assessment even under distorted or unstable electrical conditions—an essential requirement for modern manufacturing, HVAC, power distribution, and solar installations.
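True RMS measurement computes sqrt(mean(v²)) directly from samples, whereas a cheaper average-responding method rectifies the signal and scales it by the sine-wave form factor π/(2√2), which is exact only for a pure sine. The sketch below contrasts the two on a clean 230 V waveform and on one with a hypothetical 20% fifth-harmonic component; it illustrates the principle and is not Caliber's measurement firmware.

```python
import math


def true_rms(samples):
    """Root-mean-square of a sampled waveform: sqrt(mean(v^2))."""
    return math.sqrt(sum(v * v for v in samples) / len(samples))


def average_responding_rms(samples):
    """Rectified mean scaled by the sine form factor pi / (2 * sqrt(2))."""
    rectified_mean = sum(abs(v) for v in samples) / len(samples)
    return rectified_mean * math.pi / (2 * math.sqrt(2))


def sampled_wave(v_peak, harmonic_ratio=0.0, harmonic=5, n=1000):
    """One cycle (n samples) of a fundamental plus an optional harmonic."""
    return [
        v_peak * (math.sin(2 * math.pi * k / n)
                  + harmonic_ratio * math.sin(2 * math.pi * harmonic * k / n))
        for k in range(n)
    ]


if __name__ == "__main__":
    v_peak = 230 * math.sqrt(2)
    for label, wave in (("clean", sampled_wave(v_peak)),
                        ("distorted", sampled_wave(v_peak, 0.2))):
        print(f"{label:9s} TRMS = {true_rms(wave):6.1f} V, "
              f"average-responding = {average_responding_rms(wave):6.1f} V")
```

For the clean sine both methods read about 230 V; for the distorted wave the true RMS value is about 234.6 V while the average-responding estimate reads about 239 V, an error of roughly 2%.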

Suresh Babu, Managing Director, Caliber Interconnects, said, “With rapid electrification and automation across Indian industry, electrical reliability has become a foundational requirement. This Three Phase Monitoring Relay is engineered to ensure consistent system protection and operational continuity in real-world conditions.”

The relay continuously monitors all three phases and detects critical electrical anomalies, including over-voltage and under-voltage with configurable thresholds, phase imbalance, phase loss, and phase sequence faults—issues that are among the most common causes of industrial equipment failure. Clear LED fault indications enable quick identification and troubleshooting at the panel level, supporting faster response by maintenance teams.
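The fault checks listed above can be sketched in a few lines. The thresholds, the 50% phase-loss level, the R-Y-B phase labels and the unbalance definition (maximum deviation from the three-phase average, as a percentage of the average) are all assumptions for illustration; the announcement does not describe the relay's actual detection logic or configurable ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical settings; a real relay exposes its own configurable ranges."""
    nominal_v: float = 230.0    # nominal phase-to-neutral RMS voltage
    over_pct: float = 10.0      # over-voltage trip above nominal, %
    under_pct: float = 10.0     # under-voltage trip below nominal, %
    loss_pct: float = 50.0      # treat a phase as lost below this drop, %
    unbalance_pct: float = 5.0  # maximum allowed unbalance, %


def unbalance_percent(rms):
    """Maximum deviation from the three-phase average, as % of the average."""
    avg = sum(rms) / 3
    return max(abs(v - avg) for v in rms) / avg * 100


def check_phases(rms, angles_deg, t=Thresholds()):
    """Return fault names for three RMS voltages and phasor angles (R, Y, B)."""
    faults = []
    over = t.nominal_v * (1 + t.over_pct / 100)
    under = t.nominal_v * (1 - t.under_pct / 100)
    lost = t.nominal_v * (1 - t.loss_pct / 100)
    for name, v in zip("RYB", rms):
        if v < lost:
            faults.append(f"phase loss {name}")
        elif v < under:
            faults.append(f"under-voltage {name}")
        elif v > over:
            faults.append(f"over-voltage {name}")
    # Unbalance is only checked while all three phases are present.
    if min(rms) >= lost and unbalance_percent(rms) > t.unbalance_pct:
        faults.append("phase imbalance")
    # Correct R-Y-B sequence: Y lags R by 120 degrees (reversed gives 240).
    y_lag = (angles_deg[0] - angles_deg[1]) % 360
    if abs(y_lag - 120) > 30:
        faults.append("phase sequence")
    return faults
```

Balanced 230 V phases at 0°, -120° and 120° produce no faults, while swapping the Y and B angles flags a phase-sequence fault.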

Manufactured using UL 94 V-0 grade flame-retardant material, the device emphasizes safety and durability. Its compact 19.1 mm footprint and fast response time of under two seconds make it suitable for space-constrained panels and contemporary industrial control systems.

Designed and manufactured in India, the Three Phase Monitoring Relay aligns with the country’s focus on electrical safety, energy efficiency, and resilient industrial infrastructure. Caliber Interconnects brings over two decades of experience in delivering high-reliability engineering and product solutions across semiconductors, automotive, railways, avionics, and medical sectors, serving customers in India, Singapore, the United States, Japan, Malaysia, and Israel. The company is ISO 9001, AS9100D, and ISO 27001 certified.

The Three Phase Monitoring Relay is suitable for a wide range of applications including industrial machinery, HVAC systems, motors, generators, pumps, compressors, solar energy systems, and electrical control panels. It is available through Caliber Interconnects’ offices in Bengaluru and Coimbatore, supporting deployment across India.

The post Caliber Launches Advanced 3-Phase Monitoring Relay for India’s Industries appeared first on ELE Times.

AEW & Wirepas Partner to Accelerate Smart Meter Rollout in India

Allied Engineering Works Pvt. Ltd. (AEW), a manufacturer of smart electricity meters, has announced that it is joining the Wirepas Ecosystem with the aim of providing meters fully compatible with the Wirepas Certified Platform. The step marks the company's commitment to enabling large-scale, highly reliable and interoperable smart metering solutions in India based on the market-proven Wirepas RF Mesh. The Wirepas Certified Platform, recently announced for India, provides a unified framework to ensure guaranteed performance, interoperability and reliability for India's ambitious AMI (advanced metering infrastructure) deployments.

“Joining the Wirepas ecosystem is a key step in our roadmap,” said Ashutosh Goel, CMD, AEW. “We are confident that this technology can effectively address India’s smart metering needs and are committed to developing meters that meet the stringent requirements.”

Wirepas CEO Teppo Hemiä commented: “We are thrilled to welcome AEW into the Wirepas ecosystem. Their commitment is a strong reflection of the growing momentum we see across India. The Wirepas model has always been built on collaboration with local partners and on doing things transparently together. The ambition and readiness of manufacturers like AEW are essential for achieving the massive scale India requires. We look forward to supporting their journey toward fully compliant, high-performance products.”

Joining the Wirepas ecosystem reinforces AEW’s long-term strategy to deliver robust, interoperable, and scalable solutions for India’s rapidly expanding smart metering programs.

The post AEW & Wirepas Partner to Accelerate Smart Meter Rollout in India appeared first on ELE Times.

World’s First 5G NR NTN Certification: Anritsu and Samsung Lead RFCT Testing

ANRITSU CORPORATION has announced that its New Radio RF Conformance Test System “ME7873NR” is the first *A to achieve PCS Type Certification Review Board (PTCRB) certification for test cases related to 5G non-terrestrial networks (NR NTN), using Samsung's NR NTN modem chipset.

The NTN is a communication system that integrates satellite communication and airborne technologies along with terrestrial infrastructure, leveraging satellites in low Earth orbit (LEO), medium Earth orbit (MEO), and geostationary Earth orbit (GEO).

3GPP Release 17 introduced “NR NTN”, which brings non-terrestrial networks into the 5G New Radio (NR) standards. With this advance, 5G devices can connect to satellites using the same protocols they use with terrestrial base stations, paving the way for global 5G coverage beyond traditional infrastructure.

The PTCRB definition of NR NTN testing signifies the formal commencement of the NTN device certification process. Going forward, NR NTN is expected to be actively adopted by network operators worldwide to enhance service quality and expand coverage. Anritsu will contribute to the proliferation of NTN devices by providing certification test systems.

Product Overview

The ME7873NR is a 3GPP-compliant 5G RF conformance test platform certified by both the Global Certification Forum (GCF) and PCS Type Certification Review Board (PTCRB). It supports Frequency Range 1 (FR1, Sub-6 GHz) out-of-the-box, and combining it with an OTA (CATR) chamber * adds support for Frequency Range 2 (FR2, mmWave).

*A For PTCRB certification test cases related to NR NTN (Anritsu survey, December 2025)

Technical Terms

* OTA (CATR) Chamber: Compact Antenna Test Range. A 3GPP-compliant CATR anechoic chamber designed to block external radio waves and prevent internal reflections to accurately measure wireless device performance via over-the-air transmission and reception.

The post World’s First 5G NR NTN Certification: Anritsu and Samsung Lead RFCT Testing appeared first on ELE Times.

What Are Memory Chips—and Why They Could Drive TV Prices Higher From 2026

As the rupee continues to depreciate, having crossed 90 against the US dollar, India's electronics manufacturing industry is set to take a blow. India imports nearly 70 percent of the components used in TVs, including open cell panels, glass substrates and memory chips, so continued increases in memory chip prices combined with a rupee weaker than 90 to the dollar are expected to raise TV prices by around 3-4 percent by January 2026. The situation is aggravated further by rising demand for High-Bandwidth Memory (HBM) for AI servers, which is driving a global chip shortage.

Chipmakers are also focusing largely on high-margin AI chips, reducing the supply of chips for legacy devices such as TVs. Like other high-end devices, smart TVs depend heavily on memory chips for tasks ranging from storing user preferences to running services.

What are Memory Chips?

Memory chips are integrated circuits in TVs that store firmware, settings, apps, and user data. TVs primarily use Electrically Erasable Programmable Read-Only Memory (EEPROM) for basic adjustments (such as picture settings) and eMMC flash memory (as in smartphones) for the smart TV OS, apps, and video buffering. EEPROM is non-volatile, retaining data when power is off, which makes it essential for storing configuration data and the system's fundamental settings.

Why is it so important?

Although these chips store relatively small amounts of data, that data is crucial to how a smart TV functions, making them central to the TV's operation. Beyond holding data, they ensure that the TV starts up correctly and store its operating system and software. In short, this integrated memory runs smart features, remembers preferences, and helps content display smoothly.

7-10% Price Hike

According to media reports, Super Plastronics Pvt Ltd (SPPL)—a TV manufacturing company that holds licences for several global brands, including Thomson, Kodak and Blaupunkt—has said that memory chip prices have surged by nearly 500 per cent over the past three months. The company’s CEO, Avneet Singh Marwah, added that television prices could rise by 7–10 per cent from January, driven largely by the memory chip crunch and the impact of a depreciating rupee.

According to a recent Counterpoint Research report, India’s smart TV shipments fell 4 per cent year-on-year in Q2 2025, weighed down by saturation in the smaller-screen segment, a lack of fresh demand drivers, and subdued consumer spending.

The post What Are Memory Chips—and Why They Could Drive TV Prices Higher From 2026 appeared first on ELE Times.

Anritsu & HEAD Launch Acoustic Evaluation Solution for Next-Gen Automotive eCall Systems

ANRITSU CORPORATION and HEAD acoustics have jointly launched an advanced acoustic evaluation solution for next-generation automotive emergency call systems (“NG eCall”).

The new solution is compliant with ITU-T Recommendation P.1140, enabling precise assessment of voice communication quality between vehicle occupants and Public Safety Answering Points (PSAPs), supporting faster and more effective emergency response.

With NG eCall over 4G (LTE) and 5G (NR) now mandatory in Europe as of 1 January, 2026, ensuring high-quality, low-latency voice communication during vehicle emergencies has become essential. After a collision, calls are conducted hands-free inside the vehicle cabin, where high noise levels, echoes, and other acoustic challenges can significantly degrade speech clarity. Reliable voice performance is therefore critical to accurately conveying the situation and enabling rapid rescue operations.

The solution integrates Anritsu’s MD8475B (for 4G LTE base-station simulation) or MT8000A (for both 4G LTE and 5G NR simulation) with HEAD acoustics’ ACQUA voice quality analysis platform. This combination enables comprehensive evaluation of transmitted (microphone) and received (speaker) audio under a wide range of realistic operating conditions.

Example Evaluation Scenarios

• Echo and double-talk situations where speaker output re-enters the microphone or simultaneous speech may affect intelligibility

• Cabin noise simulations representing real driving environments, including road, wind, and engine noise
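Both scenarios come down to level comparisons in decibels: echo attenuation compares the signal played into the cabin with what leaks back into the send path, and signal-to-noise ratio compares speech with cabin noise. The helper below is a generic sketch of that arithmetic on sampled signals. It is not the ACQUA API, the 10% echo amplitude is hypothetical, and ITU-T P.1140 specifies its own test signals, measurement procedures and limits.

```python
import math


def mean_power(samples):
    """Mean-square value of a sampled signal (proportional to its power)."""
    return sum(x * x for x in samples) / len(samples)


def level_difference_db(reference, measured):
    """10*log10(P_reference / P_measured); positive when `measured` is weaker."""
    return 10 * math.log10(mean_power(reference) / mean_power(measured))


if __name__ == "__main__":
    # Synthetic example: a 440 Hz far-end tone sampled at 16 kHz, and an echo
    # reaching the microphone at 10% of its amplitude (hypothetical value).
    far_end = [math.sin(2 * math.pi * 440 * k / 16000) for k in range(16000)]
    echo = [0.1 * x for x in far_end]
    print(f"echo attenuation: {level_difference_db(far_end, echo):.1f} dB")
```

A real echo path also adds delay and frequency shaping, so practical measurements align and filter the signals before comparing levels.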

By delivering a reliable and repeatable approach to voice-quality assessment, Anritsu reinforces its commitment to supporting automotive manufacturers and suppliers in the development of NG eCall and advanced in-vehicle audio systems, contributing to a safer and more secure mobility ecosystem.

The post Anritsu & HEAD Launch Acoustic Evaluation Solution for Next-Gen Automotive eCall Systems appeared first on ELE Times.

Dell Technologies’ 2026 Predictions: AI Acceleration, Sovereign AI & Governance

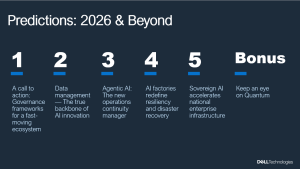

Dell Technologies hosted its Predictions: 2026 & Beyond briefing for the Asia Pacific Japan & Greater China (APJC) media, where the company’s Global Chief Technology Officer & Chief AI Officer, John Roese, and APJC President, Peter Marrs, outlined the transformative technology trends and Dell’s strategies for accelerating AI adoption and innovation in the region.

John Roese’s vision on the trends set to shape the technology industry in 2026 and beyond (Image Credits: Dell Technologies)

According to Roese, the rapid acceleration of AI is set to profoundly reengineer the entire fabric of enterprise and industry, driving new ways of operating, building, and innovating at an unprecedented scale and pace.

Focus on scalability and real adoption

A key trend is the shift in focus towards scaling AI for tangible business outcomes. “Conversations are on very real adoption, and AI is creating a truly transformational opportunity,” said Marrs. “We are working with customers across the region to build AI at scale.”

Marrs noted that growing deployment of agentic AI is an example of this transformation, with organizations such as Zoho in India already working with Dell to accelerate agentic AI adoption by delivering contextual, privacy-first and multimodal enterprise AI solutions. “AI has become more accessible for all companies in the region, and what we’ve been doing is successfully building foundations with customers to deploy AI at scale.”

Roese highlighted that the industry is now entering the autonomous agent era, where agentic AI is evolving from a helpful assistant to an integral manager of complex, long-running processes. “We expect that as people go on the agentic journey into 2026, they will be surprised by how much more agents do for them than they anticipated. Its very presence will bring value to make humans more efficient, and make the non-AI work, work better,” he noted.

As the industry continues to build and deploy more enterprise AI, Roese also emphasized the need for businesses to rethink how they manage their AI factories and make them resilient.

Sovereign AI and governance as the foundation for innovation

With AI developing at breakneck speed, some volatility is inevitable. Roese predicted that demand for robust governance frameworks and private, controlled AI environments will become undeniable, and urged the industry to build both internal and external AI guardrails that allow organizations to innovate safely and sustainably.

“Last year, we predicted that ‘Agentic’ would be the word of 2025. This year, the word ‘Governance’ is going to play a much bigger role,” Roese said. “The technology and its use cases are not going to be successful if you do not have discipline and governance around how you operate your AI strategy as either an enterprise, a region, or a country.”

At national levels, the rapid rise of sovereign AI ecosystems will continue as AI becomes critical to state-level interests. Marrs discussed this trend’s momentum, noting that like many countries in the region, enterprises are also actively building their own frameworks to drive local innovation, with strong foundations already in place.

Building the ecosystem for impact and progress

Marrs also reiterated the importance of a collaborative ecosystem in nurturing a skilled talent pool and advancing the region's AI competitiveness, citing the APJ AI Innovation Hub as an initiative that is delivering impact by combining Dell's capabilities, talent, and ecosystem.

“By working with experts, government, and industry peers, we’ve made unbelievable headway in fostering skill development and advancing our collective expertise,” said Marrs. “Together, we are accelerating Asia’s leadership as an AI region, identifying key steps to bolster the region’s growth. Dell is excited about how we’re participating and helping with this transformation.”

The post Dell Technologies’ 2026 Predictions: AI Acceleration, Sovereign AI & Governance appeared first on ELE Times.

NAL-CSIR Advances Field testing of Indigenous Defence Tech

The Council of Scientific and Industrial Research (CSIR)-National Aerospace Laboratories (NAL), in collaboration with private industry partner Solar Defence & Aerospace Ltd. (SDAL), has co-developed a 150-kg class Loitering Munition UAV (LM-UAV).

The drone is powered by an indigenous Wankel engine that combines efficiency and reliability, both essential for defence products. With an operational range of up to 900 kilometres and an endurance of 6-9 hours, it is suited to long missions. It can operate at a service ceiling of up to 5 kilometres, giving it altitude flexibility. The system also includes GPS-denied navigation, essential when GPS is compromised, and a low radar cross-section that enhances its stealth characteristics.

The drone also uses the latest AI technology for target identification, boosting precision and autonomy during missions.

Next is the field testing of High Altitude Platforms (HAPs): solar-powered unmanned aircraft capable of sustained flight above an altitude of 20 kilometres. These HAPs act as pseudo-satellites for extended surveillance, communication, and reconnaissance.

The field testing of the 150-kg class LM-UAV and the development of solar-powered HAPs mark important milestones in India’s evolving indigenous defence technology landscape. These advancements are testament to the country’s commitment to building resilient and self-sustaining defence assets through collaborative public-private partnerships and cutting-edge aerospace research.

They are part of India’s efforts to develop self-reliant defence technologies under the ‘Atmanirbhar Bharat’ Initiative.

The post NAL-CSIR Advances Field testing of Indigenous Defence Tech appeared first on ELE Times.

Toyota & NISE Test Mirai Hydrogen FCEV in India Conditions

Toyota Kirloskar Motor (TKM) and the National Institute of Solar Energy (NISE), under the Ministry of New and Renewable Energy, have signed an MoU to test the Toyota Mirai, a hydrogen fuel cell electric vehicle (FCEV), in Indian conditions.

The MoU was signed in New Delhi in the presence of Prahlad Joshi, Union Minister of New and Renewable Energy and of Consumer Affairs, Food and Public Distribution. Under the agreement, Toyota has handed over a Mirai to NISE, which will conduct comprehensive real-world tests to assess the vehicle's performance across Indian climates, terrains, and driving conditions.

NISE will study fuel efficiency, real-world range, refuelling patterns, drivability, and environmental resilience, along with the vehicle's overall adaptability to Indian roads and traffic. The results are expected to guide early-stage adoption of hydrogen mobility technologies in the country.

The initiative is expected to be a significant step for hydrogen mobility in India. It will also support the National Green Hydrogen Mission and strengthen the country's decarbonisation and clean transportation goals.

India's decarbonisation goals centre on its Panchamrit targets: reaching 500 GW of non-fossil fuel capacity and meeting 50% of energy requirements from renewable energy by 2030, cutting emissions intensity by 45% (vs. 2005 levels) and reducing total projected carbon emissions by 1 billion tonnes by 2030, and reaching net zero by 2070. These targets are to be driven by large-scale solar, wind, green hydrogen, battery storage, and grid improvements.

The post Toyota & NISE Test Mirai Hydrogen FCEV in India Conditions appeared first on ELE Times.

Nissan Powering EV Component Plant with Repurposed Batteries

Nissan Australia has launched the Nissan Node project, a circular economy initiative which will repurpose end-of-life Nissan Leaf batteries to power a section of its Dandenong production facility.

The initiative is part of Nissan's sustainability strategy. The project involves installing a new solar array along with a battery energy storage system built from repurposed first-generation Leaf batteries at the Nissan Casting Australia Plant (NCAP).

EV production is estimated to generate 50-100% higher emissions than ICE vehicle production, largely because of battery manufacturing.

Nissan expects its new system to cut NCAP’s annual CO2 emissions by nearly 259 tonnes while saving approximately 128 megawatt-hours of energy annually.

“This isn’t just a hugely exciting project, but an important step into the future for end-of-life EV batteries,” Nissan Oceania managing director Andrew Humberstone says.

“As an early pioneer of the electric vehicle both globally and locally, we can also demonstrate leadership in second-life battery initiatives. I’m proud that this solution is every bit as innovative as the launch of not only the Leaf but the recently introduced ARIYA.”

The initiative was delivered in partnership with Melbourne-based technology firm Relectrify, which developed the battery-management and inverter technology enabling second-life Leaf batteries to be repurposed effectively. Its ReVolve energy-storage system installed at NCAP is the first commercial product built entirely from retired Leaf batteries.

The post Nissan Powering EV Component Plant with Repurposed Batteries appeared first on ELE Times.

KEC, Powernet & Wise Integration Co-Develop AI Server SMPS Power Solutions

Wise Integration (France), Powernet (Korea) and KEC (Korea) will co-develop next-generation switched-mode power supply (SMPS) solutions designed specifically for AI server applications in South Korea. The partnership aligns with the country’s push to expand AI infrastructure and build out the next generation of high-density data centers.

Under the agreement, Wise Integration will supply its GaN (gallium-nitride) power devices, digital-control expertise and technical support. Powernet Technologies Corporation will lead development of new SMPS designs using Wise’s WiseGan and WiseWare technologies. KEC Corporation will manage backend manufacturing, including module integration and system-in-package production tailored to the thermal and reliability demands of AI-server racks.

In addition to accelerating the design and development of competitive AI-server power supply solutions and creating business opportunities in Korea’s AI server market, the project aims to shorten the solutions’ time-to-market using WiseGan and WiseWare technologies. The collaboration builds on an earlier partnership between Wise Integration and Powernet, launched to serve OEMs that require compact, digitally controlled power-supply systems for faster, smaller and more energy-efficient electronic equipment.

AI servers draw large amounts of power, generate intense heat, and depend on SMPS designs capable of converting high-voltage input (e.g., 400 V) to stable 48 V rails with minimal loss. GaN devices with digital control are well suited to this task: they enable higher switching frequencies, greater efficiency, and more precise management of fast, high-current load transients.
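The emphasis on minimal loss is easy to quantify: a converter delivering P_out at efficiency η dissipates P_out·(1/η - 1) as heat, and its 48 V output carries I = P/V. The sketch assumes a hypothetical 5 kW supply; the efficiency values are illustrative and are not figures from Wise Integration, Powernet or KEC.

```python
def conversion_loss_w(output_w, efficiency):
    """Heat dissipated by a converter: P_in - P_out = P_out * (1/eta - 1)."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return output_w * (1 / efficiency - 1)


def rail_current_a(output_w, rail_v):
    """DC current delivered on the output rail, I = P / V."""
    return output_w / rail_v


if __name__ == "__main__":
    p_out = 5000.0  # hypothetical supply output, W
    for eta in (0.94, 0.96, 0.975):
        print(f"eta = {eta:.3f}: loss = {conversion_loss_w(p_out, eta):6.1f} W")
    print(f"48 V rail current: {rail_current_a(p_out, 48.0):.1f} A")
```

At 5 kW, moving from 94% to 97.5% efficiency cuts dissipation from about 319 W to about 128 W per supply, and the 48 V output carries roughly 104 A.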

Supporting South Korea’s National Ambition

The South Korean government's investments in AI-dedicated data centers, including high-performance GPU clusters and digital infrastructure, are driving demand for more efficient, compact, and scalable power-conversion systems.

“Korea is moving quickly to build the next generation of AI data centers, and power architecture is a critical piece of that effort. Working with Powernet and KEC lets us bring GaN-based digital control into server-grade designs at scale—delivering the efficiency, thermal performance, and responsiveness that modern AI hardware depends on,” said Ghislain Kaiser, CEO of Wise Integration.

Technology Momentum: A Logical Extension of Wise Integration’s Roadmap

Beyond the MoU, Wise Integration has been broadening the technology base that supports its strategic partnerships. As Korea accelerates AI-ready data-center development, the demands on power-conversion systems intensify: higher switching frequencies, tighter thermal budgets and more aggressive efficiency targets. Wise has been expanding its GaN + digital-control portfolio to meet those constraints, ensuring the technologies Korea aims to deploy at scale already have a maturing, production-validated base behind them.

For example, the company recently launched WiseWare 1.0, its newest fully digital controller, for totem-pole PFC and LLC topologies. Although it currently targets gaming, displays and industrial systems, it shares the same architecture (high-frequency GaN operation, compact form factor, digitally managed efficiency) that scales naturally into the server-class designs targeted under the Korea-focused collaboration.

The post KEC, Powernet & Wise Integration Co-Develop AI Server SMPS Power Solutions appeared first on ELE Times.

FAMES Pilot Line R&D Advances: 400°C CMOS Enables 3D Integration Goals

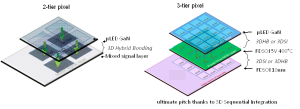

CEA-Leti, the coordinator of the FAMES Pilot Line, has achieved a major milestone for next-generation chip stacking: fully functional 2.5 V SOI CMOS devices fabricated at 400 °C. The devices match the electrical performance of devices fabricated at the standard thermal budget (>1000 °C), removing one of the last barriers to large-scale 3D sequential integration (3DSI), a core objective of FAMES.

Enabled by advanced CEA-Leti expertise in low-temperature processes (nanosecond laser annealing (NLA) and solid-phase epitaxy regrowth (SPER)), this work brings true three-dimensional device stacking from the lab to the fab. Among 3D technologies, including TSV and hybrid bonding, 3D sequential integration offers the highest interconnection density between tiers. In this project, CEA-Leti demonstrated that its Si CMOS is BEOL-compatible and can therefore be stacked safely above the BEOL, with transistor performance and maturity that largely surpass other state-of-the-art low-temperature options.

The achievement, presented today at IEDM 2025 in a paper titled “High Performance 2.5 V n&p 400 °C SOI MOSFETs: A Breakthrough for Versatile 3D Sequential Integration,” is a key breakthrough for the FAMES Pilot Line, a European Union initiative launched in 2023 in response to the EU Chips Act strategy to strengthen sovereignty and competitiveness in semiconductor technologies. By combining 3D heterogeneous and sequential integration on FD-SOI platforms, the consortium aims to enable a new generation of More-Than-Moore devices and applications.

Enabling New Chip Architectures

“This breakthrough is a major milestone of the FAMES project as it enables innovative new chip architectures,” said Dominique Noguet, CEA-Leti vice president and coordinator of the FAMES Pilot Line. “Our low-temperature process could accelerate real-world demonstrations of multi-tier stacks combining advanced CMOS logic, with smart pixel or RF layers, to create new high-performance 3D chips.”

Concept of a 3-tier µLED GaN pixel enabling an emissive array with strongly reduced pitch by combining 3DSI with 3D hybrid bonding technology. CEA-Leti’s 400 °C CMOS process enables such top-tier integration without exceeding the thermal limits of the active circuitry below.

The team showed that SOI devices processed at 400 °C performed equivalently to the high-temperature industrial reference devices processed at the industry-standard >1000 °C.

“The 400 °C process enables 3D sequential stacking on any bottom tier,” Noguet said. “It’s a huge step forward because it’s far more mature—reliable and scalable—than current low-temperature solutions, such as polycrystalline films, oxide semiconductors or carbon nanotubes.”

Protecting Circuitry on Bottom-Tier Layers

CEA-Leti’s team demonstrated n- and p-type transistors matching the characteristics of conventional high-temperature CMOS devices, while staying within the ≤400 °C thermal budget required to preserve active circuitry in lower layers.

The process relies on an optimized 400 °C LPCVD step for amorphous silicon, followed by NLA in the melt regime for dopant activation and diffusion, producing polycrystalline, low-resistance gates with excellent interface quality. In addition, mastery of NLA-SPER enables dopant activation without diffusion, keeping access resistance within specifications.

The post FAMES Pilot Line R&D Advances: 400°C CMOS Enables 3D Integration Goals appeared first on ELE Times.

Keysight & KT SAT Nail Industry First GEO-to-LEO Multi-Orbit NTN Handover!

Keysight Technologies, Inc., in collaboration with KT SAT, has demonstrated a successful non-terrestrial network (NTN) handover using the KOREASAT-6A satellite at KT SAT’s Kumsan Satellite Network Operation Center in Korea. In a controlled test environment, the companies established the industry’s first NR-NTN multi-orbit handover between a commercial GEO satellite and an emulated LEO link. The demonstration was performed over a live Ku‑band GEO connection (DL ~12.3 GHz / UL ~14.4 GHz), marking a major milestone toward testing the newly standardized 3GPP Rel‑19 Ku‑band NTN spectrum. By incorporating Ku‑band operation into this multi-orbit mobility scenario, Keysight and KT SAT have validated NTN behaviors in a frequency range now central to emerging global standards and operator deployment strategies.

As the industry moves toward 6G, integrating satellite and terrestrial networks is essential to deliver continuous coverage and resilient service in remote or disaster-affected areas. Satellite links, however, introduce longer delays, Doppler effects, and dynamic link conditions that complicate mobility and handover across space and ground domains.
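
To put the delay and Doppler effects in perspective, the following minimal sketch estimates the minimum one-way propagation delay to a satellite directly overhead and the first-order Doppler shift on a carrier. The 600 km LEO altitude and the 7 km/s line-of-sight velocity are illustrative assumptions, not parameters from the Keysight and KT SAT demonstration. The 12.3 GHz carrier matches the Ku-band downlink mentioned in this article.

```python
# Speed of light in vacuum (m/s)
C_MPS = 299_792_458.0

GEO_ALTITUDE_KM = 35_786.0  # geostationary orbit altitude
LEO_ALTITUDE_KM = 600.0     # hypothetical LEO altitude, for illustration only


def one_way_delay_ms(altitude_km: float) -> float:
    """Minimum one-way ground-to-satellite delay, with the satellite directly overhead."""
    return altitude_km * 1_000.0 / C_MPS * 1_000.0


def doppler_shift_hz(carrier_hz: float, radial_velocity_mps: float) -> float:
    """First-order (non-relativistic) Doppler shift for a line-of-sight velocity."""
    return carrier_hz * radial_velocity_mps / C_MPS


if __name__ == "__main__":
    print(f"GEO one-way delay: {one_way_delay_ms(GEO_ALTITUDE_KM):.1f} ms")
    print(f"LEO one-way delay: {one_way_delay_ms(LEO_ALTITUDE_KM):.1f} ms")
    # Assumed upper-bound LEO line-of-sight velocity of 7 km/s on the ~12.3 GHz downlink
    shift_khz = doppler_shift_hz(12.3e9, 7_000.0) / 1_000.0
    print(f"LEO Doppler shift at 12.3 GHz: {shift_khz:.0f} kHz")
```

Under these assumptions a GEO hop adds roughly 119 ms each way at best, versus about 2 ms for the LEO case, while the LEO link sees a Doppler offset of a few hundred kilohertz and a geostationary satellite sees almost none. This hints at why mobility between the two orbits is hard to get right.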

By moving beyond earlier demonstrations focused on single-orbit GEO connectivity, KT SAT and Keysight have shown how operators can evolve from point-to-point satellite links to continuous multi-orbit mobility, expanding KT SAT’s service capabilities toward resilient, always-on coverage across space and ground domains. This achievement also establishes an important technical milestone for KT SAT and Keysight in advancing Ku-band NTN mobility.

Using Keysight’s Network Emulator Solutions and UeSIM RAN Testing Toolset, the teams emulated the base station and user equipment, established a two-way link through KOREASAT-6A, and maintained service continuity during a handover from GEO to an emulated LEO connection. Operating the GEO link in Ku‑band ensures that these mobility insights directly map to the Rel‑19 NTN frequency bands now entering commercial planning, giving operators and device vendors earlier visibility into real‑world propagation, timing, and interoperability behaviors. As a result, KT SAT can now explore and validate advanced NTN mobility scenarios in the lab, before satellites or user devices are widely deployed, accelerating its roadmap and reducing the time and cost of bringing new multi-orbit services to market.

This collaboration shows how operators can extend coverage and resilience, while device and chipset vendors gain a lab-based path to validate NTN mobility without relying solely on expensive field trials. Insights from this work are intended to inform standards discussions and operator evaluations, helping the ecosystem shorten time-to-trial and de-risk commercialization.

Seo Young-soo, CEO of KT SAT, said: “As the only satellite communications service provider in Korea, KT SAT is progressively validating the applicability of NTN gNB and UE using our five operational GEO satellites. Building on the results of this trial, we will actively explore strengthening the competitiveness of our next-generation GEO satellite for the global market and delivering integrated multi-orbit communication services based on NTN systems, including traffic handover across our own GEO and future LEO/MEO constellations.”

Peng Cao, Vice President and General Manager of Keysight’s Wireless Test Group, said: “This demonstration shows how emulation can bring future multi-orbit networks into the lab today. By combining a live GEO connection with emulated LEO conditions using NR-NTN parameters in Ku-band, Keysight gives operators and vendors a practical way to study NTN handover behavior, optimize mobility strategies, and reduce the cost and risk of early deployments.”

The post Keysight & KT SAT Nail Industry First GEO-to-LEO Multi-Orbit NTN Handover! appeared first on ELE Times.

Nuvoton Emphasises Need to Strengthen Taiwan-Israel R&D Collaboration

Nuvoton Technology showcased its leadership in international expansion by participating in the “Israeli-Taiwanese Business Seminar,” hosted by the Economic Division of the Taipei Economic and Cultural Office in Tel Aviv from November 15 to 23. Drawing attention to the practical advantages of its established R&D center in Israel, Nuvoton played a key role in the seminar, sharing on-the-ground insights from its cross-border expansion.

Nuvoton stated that investments in global hubs such as Israel are not only an expansion of its business footprint but also a critical part of its long-term development strategy. By connecting innovation talent and technology networks worldwide, Nuvoton aims to address emerging market challenges and opportunities while helping build a more resilient and competitive Taiwan-Israel technology ecosystem.

During the seminar, Nuvoton highlighted opportunities for collaboration in R&D, technology, and market development among global enterprises. The company’s practical experience offered the delegation concrete guidance for establishing operations in Israel and accessing its innovation resources, reflecting the collaborative spirit within Taiwan’s technology community.

Nuvoton emphasized the complementary strengths of Taiwan and Israel in the high-tech sector: Taiwan excels in IC design, while Israel leads in software innovation. Building on these advantages, Nuvoton has accelerated its global R&D strategy by establishing an R&D center in Israel, enabling the company to strengthen its international competitiveness.

The post Nuvoton Emphasises Need to Strengthen Taiwan-Israel R&D Collaboration appeared first on ELE Times.

element 14’s DevKit HQ: A One Stop Development Kit Solution

Engineering is all about trial and testing. According to a survey conducted by element14, most engineering professionals feel that finding the right development kit is a major challenge, even though choosing a suitable, holistic kit is essential before starting a project. Engineers value standard interfaces and extensibility, often combining or modifying multiple kits to build prototypes and proof-of-concept designs.

element14 has introduced DevKit HQ, a new online resource that brings evaluation boards, development kits, single board computers (SBCs), tools, and technical documents together in one place. DevKit HQ gathers resources from key supplier product families, including Analog Devices, NXP, AMD, STMicroelectronics, Microchip, Infineon, Renesas, Raspberry Pi, BeagleBoard, Arduino, and more, for applications such as AI/ML, IoT, sensors, wireless, motor control, and power management. This makes it easy for developers to discover and compare kits and to accelerate their embedded design and innovation.

The site enables engineers to quickly find the latest development kits and modular solutions by application, along with available demo and application software. Engineers can also easily locate evaluation boards that match a supplier’s product family or series.

Additionally, the site features each kit’s datasheets, application notes, training videos, reference designs and more. Together, these resources help engineers accelerate design decisions and drive innovation across various applications, including AI, IoT, sensors, wireless, motor control and power management.

“Our mission is to make life easier for design engineers,” said Daniel Ford, Vice President of Sales at element14. “With the DevKit HQ, we’ve created the leading destination where they can search development kits by application as well as explore new technologies, experiment with the latest kits, and move from idea to prototype faster, freeing up more time to focus on innovation.”

The post element 14’s DevKit HQ: A One Stop Development Kit Solution appeared first on ELE Times.

USB-IF Hardware Certification to Anritsu for USB4 2.0 Test Solution

ANRITSU CORPORATION has received certification from the USB Implementers Forum (USB-IF) for its test solution for the latest USB4 Version 2.0 (USB4 v2) communication standard.

The solution is based on the Signal Quality Analyzer-R MP1900A and provides advanced USB device evaluation capabilities. It helps improve the quality and reliability of products implementing the USB4 Version 2.0 standard, supporting widespread deployment of next-generation high-speed interfaces.

As of December 2025, USB4 v2 is the most advanced USB standard, delivering data transfer speeds of up to 80 Gbit/s — twice that of USB4 v1 (40 Gbit/s). This supports next-generation applications, such as high-resolution video transmission, external GPUs, high-speed storage, and VR/AR devices.

Furthermore, the specification significantly improves communication performance and reliability by introducing innovations such as Pulse Amplitude Modulation 3-level (PAM3) signaling to improve bandwidth efficiency, the Frequency Variation Profile to enhance the stability of link training (the signal quality and initialization procedure), and a new TS2.CLKSW training sequence incorporating clock switching.
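
To see why three-level signaling improves bandwidth efficiency, the following sketch counts how many whole bits fit into a block of PAM3 symbols (trits): a block of t trits has 3^t states, so it can carry b bits whenever 2^b ≤ 3^t. The block sizes shown are illustrative. This sketch does not describe the exact line code used in USB4 v2.

```python
import math


def max_bits_in_trits(trits: int) -> int:
    """Largest number of bits b such that 2**b <= 3**trits.

    A block of `trits` three-level symbols has 3**trits distinct states,
    so it can carry any b-bit value as long as 2**b does not exceed that.
    Integer arithmetic avoids floating-point rounding at the boundary.
    """
    if trits < 1:
        raise ValueError("trits must be positive")
    capacity = 3 ** trits
    bits = 0
    while 2 ** (bits + 1) <= capacity:
        bits += 1
    return bits


def bits_per_symbol(trits: int) -> float:
    """Achieved bits per PAM3 symbol for a block of the given size."""
    return max_bits_in_trits(trits) / trits


if __name__ == "__main__":
    print(f"PAM3 upper bound: {math.log2(3):.3f} bits/symbol (NRZ: 1.000)")
    for t in (1, 2, 7):
        print(f"{max_bits_in_trits(t)} bits in {t} trit(s): {bits_per_symbol(t):.3f} bits/symbol")
```

At the same symbol rate, even a simple 3-bits-in-2-symbols mapping carries 50% more data than NRZ, and larger blocks approach the log2(3) ≈ 1.585 bits/symbol limit.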

Current demand for evaluation and certification testing is driven primarily by semiconductor manufacturers producing USB4 v2 control ICs. Looking ahead, adoption is expected to expand to test houses for test equipment deployment and, in the long term, to consumer product manufacturers of USB4 v2 hubs, docking stations, and cables.

Product Overview: Signal Quality Analyzer-R MP1900A

The MP1900A is a high-performance Bit Error Rate Tester (BERT) supporting receiver tests for multiple high-speed interfaces, including PCIe, USB, Thunderbolt, DisplayPort, and 400 GbE/800 GbE. It combines industry-leading PPG technology for high-quality waveforms with a high-sensitivity error detector, precision jitter sources (SJ, RJ, SSC, BUJ), and noise sources (CM-I, DM-I). The MP1900A also supports link training and LTSSM analysis for comprehensive high-speed device evaluation.
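
A BERT verifies a target error rate by sending a known pattern and counting errors. A common rule of thumb, assuming independent bit errors, is that observing zero errors over N = −ln(1 − CL) / BER bits shows that the true BER is below the target at confidence level CL. The sketch below applies that formula. The 1e-12 target and the 40 Gbit/s line rate are assumptions for illustration, not Anritsu or USB-IF test parameters.

```python
import math


def bits_required_zero_errors(target_ber: float, confidence: float) -> float:
    """Error-free bits needed to show BER < target_ber at the given confidence.

    Assumes independent bit errors (Poisson approximation) and zero observed
    errors: N = -ln(1 - CL) / BER.
    """
    if target_ber <= 0.0 or not 0.0 < confidence < 1.0:
        raise ValueError("target_ber must be > 0 and confidence must be in (0, 1)")
    return -math.log(1.0 - confidence) / target_ber


def measurement_time_s(target_ber: float, confidence: float, bit_rate_bps: float) -> float:
    """Measurement time at a given line rate for the zero-error criterion."""
    return bits_required_zero_errors(target_ber, confidence) / bit_rate_bps


if __name__ == "__main__":
    # Assumed target BER of 1e-12 at 95% confidence and a 40 Gbit/s line rate
    n_bits = bits_required_zero_errors(1e-12, 0.95)
    seconds = measurement_time_s(1e-12, 0.95, 40e9)
    print(f"{n_bits:.3e} error-free bits, about {seconds:.0f} s")
```

At 95% confidence this comes to about 3 × 10^12 error-free bits, or roughly 75 seconds at 40 Gbit/s; tighter targets or higher confidence lengthen the test proportionally.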

The post USB-IF Hardware Certification to Anritsu for USB4 2.0 Test Solution appeared first on ELE Times.

As Energy-Efficient Chips Are Rising — HCLTech × Dolphin’s New Partnership Gives the Trend a Heavy Push

Amid the industry’s push toward energy efficiency, HCLTech and Dolphin Semiconductor have announced a strategic partnership to develop energy-efficient chips for IoT and data centre applications. As the world moves towards energy-efficient chips, it is more important than ever to identify the trends likely to shape the industry at large. Energy efficiency is a central concern in chip design because it affects the longevity and reliability of the chip. According to HCLTech and Dolphin Semiconductor, the partnership aims to support enterprises seeking to improve energy efficiency and performance as computing workloads increase.

What are Energy-Efficient Chips?

Energy-efficient chips are integrated circuits designed to perform computations while minimizing power consumption, which extends battery life, reduces heat generation, and lowers operating costs. Their architectures typically include specialized cores, such as Neural Processing Units (NPUs), Graphics Processing Units (GPUs) or, more broadly, AI accelerators, rather than relying only on conventional CPUs. This ensures that each task runs on the most efficient hardware available.
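
The idea of running each task on the most efficient hardware can be made concrete with energy per task, which is average power multiplied by run time. In the sketch below the device names, power draws and run times are hypothetical numbers chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One hypothetical execution of the same task on a given processor."""
    device: str
    avg_power_w: float  # average power draw while running the task
    runtime_s: float    # time needed to finish the task

    @property
    def energy_j(self) -> float:
        # Energy (joules) = average power (watts) x time (seconds)
        return self.avg_power_w * self.runtime_s


def most_efficient(runs: list[Run]) -> Run:
    """Return the run that completes the task with the least energy."""
    return min(runs, key=lambda r: r.energy_j)


if __name__ == "__main__":
    runs = [
        Run("CPU", avg_power_w=65.0, runtime_s=12.0),
        Run("GPU", avg_power_w=250.0, runtime_s=0.5),
        Run("NPU", avg_power_w=15.0, runtime_s=1.5),
    ]
    for r in runs:
        print(f"{r.device}: {r.energy_j:.1f} J")
    print("Most efficient:", most_efficient(runs).device)
```

Note that in this made-up example the GPU run draws the most power yet uses far less energy than the CPU run, because it finishes much sooner.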

Why is it important?

Energy-efficient chips matter because power consumption rises with usage. It is therefore indispensable to cut power requirements while keeping the hardware at optimum performance within sustainability and operational limits, since the power drawn both affects the environment and costs money.

HCLTech X Dolphin Semiconductors Partnership

HCLTech will integrate Dolphin Semiconductor’s low-power IP directly into its SoC design workflow, creating scalable, energy-efficient chips that handle a wide range of compute needs while keeping power use in check.

At its core, energy efficiency requires a holistic, full-stack design effort — from initial architecture to the software that ultimately runs on the chip.

The post As Energy-Efficient Chips Are Rising — HCLTech × Dolphin’s New Partnership Gives the Trend a Heavy Push appeared first on ELE Times.

Advanced GAA Chips: Minimizing Voltage Loss and Improving Yield

Courtesy: Lam Research

- As advanced logic chips decrease in size, voltage loss can increase

- An emerging solution is backside power delivery networks that use a self-aligned backside contact (SABC) architecture

The problem: As metal pitches shrink to support the next generation of logic devices, the IR (or voltage) drop across conventional frontside connections has become a major challenge.

As electricity travels through a chip’s metal wiring, some voltage gets lost because wires have resistance.

- If the voltage drops too much, the chip’s transistors can’t get enough power and can slow down or fail.

- In addition, the resistance of back-end-of-line (BEOL) metal lines and vias is dramatically increasing.
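
The effect described in these bullets follows from Ohm's law: a wire's resistance is R = ρL / A, and the voltage lost along it is V = IR. The sketch below uses bulk copper resistivity and a hypothetical 10 µm line carrying 100 µA to show that halving the line width doubles both the resistance and the IR drop. Real nanoscale interconnects are more resistive than this bulk estimate, so the numbers should be read as a lower bound.

```python
# Bulk copper resistivity at room temperature (ohm-metres). Nanoscale lines are
# considerably more resistive because of surface and grain-boundary scattering.
RHO_CU_OHM_M = 1.68e-8


def wire_resistance_ohm(length_m: float, width_m: float, thickness_m: float,
                        rho_ohm_m: float = RHO_CU_OHM_M) -> float:
    """Resistance of a rectangular wire: R = rho * L / (W * T)."""
    return rho_ohm_m * length_m / (width_m * thickness_m)


def ir_drop_v(current_a: float, resistance_ohm: float) -> float:
    """Voltage lost along a wire carrying current_a: V = I * R."""
    return current_a * resistance_ohm


if __name__ == "__main__":
    length, thickness, current = 10e-6, 40e-9, 100e-6  # hypothetical line and load
    for width in (20e-9, 10e-9):
        r = wire_resistance_ohm(length, width, thickness)
        print(f"width {width * 1e9:.0f} nm: R = {r:.0f} ohm, "
              f"IR drop = {ir_drop_v(current, r) * 1e3:.0f} mV")
```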

The solution: Backside power delivery networks (BSPDN) can address these challenges and are currently being widely studied as an alternative to frontside power delivery and contact schemes.

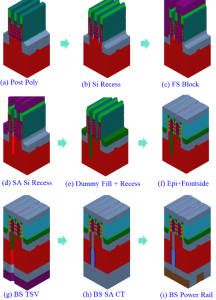

Virtual Study Compares DBC and SABC on a GAA Device

The Semiverse Solutions team conducted a virtual study using SEMulator3D to analyze gate-all-around (GAA) devices that use BSPDN.

In the Design of Experiments (DOE), the team focused on a process window for a GAA device that uses a direct backside contact (DBC) architecture and compared it to a GAA device process window using self-aligned backside contact (SABC) architecture.

DBC architecture, used to connect contacts with source/drain structures, requires a deep silicon etch, a small edge placement error (EPE), and precise alignment when used in an advanced GAA transistor.

The Semiverse Solutions team conducted the virtual experiment to see if an SABC scheme could address these precise alignment challenges.

Analyzing the process window of a device helps engineers and researchers understand the range of manufacturing conditions under which a device can be reliably produced while meeting its performance and quality requirements.

By comparing the process windows of different architectures, researchers can identify which design offers greater tolerance to manufacturing variations, fewer defects, and better overall performance.

Figure 1 displays the major integration (process) steps for a proposed SABC scheme. The process steps are similar to those used in a typical GAA logic manufacturing flow.

Figure 1. The manufacturing process flow of a proposed self-aligned backside contact (SABC) scheme

Study Methodology

The team ran multiple virtual fabrication experiments that varied the smallest critical dimensions (CD), overlay, and over-etch amount of the through-silicon via (TSV).

Virtual measurements were taken of the number of opens and shorts generated (number of nets in the structure), high-k damage (high-k material volume change), and the backside contact area of the typical structure.

The manufacturing success criteria were specified as follows:

- Backside contact area (CT to epitaxy): ≥150 nm²

- High-k damage: <20 nm³

- No contact-to-metal-gate shorts

Using these criteria, the results of each virtual experiment in the DOE were classified as a “pass” or “failure” event.
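
The pass/fail rule can be sketched as a small classifier applied to each run of a full-factorial DOE. Only the three thresholds come from the success criteria in this study. The field names, factor levels and grid are hypothetical (the study's actual levels are not given, apart from the ±10 nm over- and under-etch cases discussed in the results), and this is not SEMulator3D's API.

```python
from dataclasses import dataclass
from itertools import product

# Success criteria from the study
MIN_CONTACT_AREA_NM2 = 150.0
MAX_HIGHK_DAMAGE_NM3 = 20.0  # damage must be strictly below this value


@dataclass(frozen=True)
class VirtualMeasurement:
    """Hypothetical record of one virtual-fabrication run."""
    contact_area_nm2: float  # backside contact area (CT to epitaxy)
    highk_damage_nm3: float  # high-k material volume change
    gate_short: bool         # True if the contact shorts to the metal gate


def classify(m: VirtualMeasurement) -> str:
    """Label a run 'pass' only if it meets all three success criteria."""
    ok = (
        m.contact_area_nm2 >= MIN_CONTACT_AREA_NM2
        and m.highk_damage_nm3 < MAX_HIGHK_DAMAGE_NM3
        and not m.gate_short
    )
    return "pass" if ok else "fail"


# Hypothetical factor levels in nm; negative over-etch means under-etch
CD_LEVELS = (14.0, 16.0, 18.0)
OVERLAY_LEVELS = (-4.0, 0.0, 4.0)
OVER_ETCH_LEVELS = (-10.0, 0.0, 10.0)


def doe_grid() -> list[tuple[float, float, float]]:
    """Full-factorial combinations of (CD, overlay, over-etch)."""
    return list(product(CD_LEVELS, OVERLAY_LEVELS, OVER_ETCH_LEVELS))


if __name__ == "__main__":
    print(len(doe_grid()), "virtual runs")
    print(classify(VirtualMeasurement(162.0, 8.5, False)))  # meets all criteria
    print(classify(VirtualMeasurement(171.0, 26.0, True)))  # high-k damage and a gate short
```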

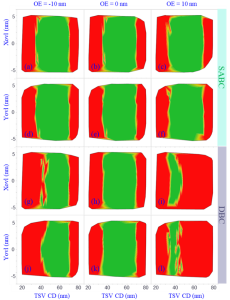

SABC Indicates Higher Yield for Advanced Logic Nodes

The DOE results are shown in Figure 2 as a set of process window contour diagrams at various CD, overlay, and over-etch amounts for both the SABC and DBC contact schemes. The green areas in Figure 2 represent “pass” results, while the red areas represent “fail” events.

Figure 2. Comparison of SABC and DBC process windows

Due to its self-aligned capabilities, the SABC approach exhibits a much larger process window (larger green area) than the DBC architecture.

The DBC process window is very narrow, especially when the TSV is over- or under-etched by 10 nm. TSV failures show up as high-k damage and source/drain-to-metal-gate shorts caused by excessive over-etching, as small contact areas caused by TSV under-etching, and as increased EPE caused by a larger TSV CD and additional overlay errors.

The virtual study demonstrated that the SABC approach to backside power minimizes EPE and over-etch variations in the TSV process and provides a much larger and more stable process window than a DBC approach. SABC is promising for use at advanced logic nodes and may support further logic device scaling.

The post Advanced GAA Chips: Minimizing Voltage Loss and Improving Yield appeared first on ELE Times.

The Leading Five Essential Context Window Concepts In LLMs

Courtesy: Micron

This story outlines five essential concepts that explain how large language models process input within a context window. Using clear examples and practical insights, it covers foundational ideas like tokenization, sequence length, and attention. The goal is to help readers better understand how context affects model behavior in AI applications. We also present results from an analytical model used to estimate system behavior, to show how scaling input and output sequence lengths impacts response time. The results highlight how decoding longer outputs takes significantly more time, pointing to the importance of fast memory systems like HBM in supporting efficient inference at scale. These concepts are useful for anyone working with or designing prompts for generative AI systems.

Context window versus length

When working with large language models, it’s important to understand the difference between concepts like context window, context length, and sequence length. These terms are often used interchangeably, which can lead to confusion. In this blog, we will define and refer to them as distinct concepts.

The context window is the model’s maximum capacity: the total number of tokens it can process at once, including both your input and the model’s output. As a simple example, consider a model with a 100,000-token context window.

The context length, on the other hand, is how much of that space you have used: the actual number of tokens, both input and output, currently in use during a conversation. For example, if a model has a 100,000-token context window and your input uses 75,000 tokens, only 25,000 tokens remain for the model’s response before it reaches the upper limit of the window.

Sequence length typically refers to the length of a single input or output sequence within that window. It’s a more granular measure used in model training and inference to track the length of each segment of text.
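
These definitions can be captured in a few lines of bookkeeping. The sketch below is a hypothetical helper, not any model provider's API: it tracks input and output sequence lengths against a fixed context window and reproduces the 100,000 / 75,000 / 25,000 example.

```python
from dataclasses import dataclass


@dataclass
class ContextBudget:
    """Hypothetical bookkeeping for one conversation against a fixed context window."""
    window: int             # context window: maximum tokens (input + output)
    input_tokens: int = 0   # input sequence length
    output_tokens: int = 0  # output sequence length generated so far

    @property
    def context_length(self) -> int:
        """Tokens currently in use."""
        return self.input_tokens + self.output_tokens

    @property
    def remaining(self) -> int:
        """Tokens still available before the window is full."""
        return max(self.window - self.context_length, 0)


if __name__ == "__main__":
    budget = ContextBudget(window=100_000, input_tokens=75_000)
    print(budget.context_length, budget.remaining)
```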

The context window sets the limit for how much information a model can process, but it does not directly reflect intelligence. A larger window allows more input, yet the quality of the output often depends on how well that input is structured and used. Once the window is full, the model may lose coherence, leading to unwanted outcomes (for example, hallucinations).

Tokens aren’t words

If the context window is defined by an upper limit (say 100,000), tokens are the units that measure what fits inside, and it’s important to understand that tokens are not words. The words you type into a prompt are fed to a “tokenizer,” which breaks down text into tokens. A single word may be split into several tokens. For example, “strawberry” becomes three tokens and “trifle” becomes two. In other cases, a word may consist of just one token, like “cake”.

| Token 1 | Token 2 | Token 3 |
| --- | --- | --- |
| St | raw | berry |

We can test this with a quote from the novel “Emma” by Jane Austen.

“Seldom, very seldom, does complete truth belong to any human disclosure; seldom can it happen that something is not a little disguised or a little mistaken.”

This text contains 26 words, and when run through the tokenizer of the Mistral language model provided by lunary.ai¹, it produces 36 tokens. That is about 0.72 words per token, or roughly three-quarters of a word per token.

The ratio varies, but for English words, you might average around 0.75 words per token. That’s why a model with a 100,000-token context window (per user) does not necessarily fit 100,000 words. In practice, you might fit closer to 75,000 English words or fewer, depending on the text.

$$\text{estimated tokens} \approx \text{words} \times 1.33$$
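
The rule of thumb translates directly into a pair of estimators. This sketch assumes the 0.75 words-per-token average reported here for English text. Actual ratios depend on the tokenizer and the text, so the results are only estimates, and rounding is conservative: token estimates round up and word budgets round down.

```python
import math

# Average for English text reported in this article; varies by tokenizer and text.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Estimate tokens for a word count (tokens ≈ words / 0.75 ≈ words × 1.33), rounded up."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def estimate_words(token_budget: int) -> int:
    """Estimate how many words fit in a token budget, rounded down."""
    return math.floor(token_budget * WORDS_PER_TOKEN)


if __name__ == "__main__":
    print(estimate_tokens(26))       # Austen quote
    print(estimate_words(100_000))
    print(estimate_words(4_000))
```

For the 26-word Austen quote the estimate gives 35 tokens against the 36 the Mistral tokenizer actually produced, which shows both the usefulness and the limits of a single average ratio. A 100,000-token window maps to about 75,000 words, and a 4,000-token budget to about 3,000.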

To further check the token-to-word ratio at scale, we ran a quick analysis using eight well-known literary works from Project Gutenberg, a library of more than 75,000 free e-books. First, we counted the words in each book, then ran the texts through a tokenizer to get the token counts. After comparing the numbers, we found that the average ratio was about 0.75 words per token.

Knowing this ratio can help everyday users get more out of their interactions with AI. Most AI platforms, like ChatGPT or Claude, operate with token-based constraints. That is, they process text in tokens, not words, so it’s easy to misjudge how much content you can actually fit into a prompt or response. Because usage is often measured in tokens rather than words, knowing the ratio makes you aware of any limits so you can plan your inputs more strategically. For example, if a model has a 4,000-token input limit, that’s roughly 3,000 words. This is good to know when feeding a model a long document or dataset for tasks like finding key insights or answering questions.

Attention is not equally distributed within the context window

AI hallucinations are often misunderstood as quirky behavior or signs that a language model is buggy and unreliable. But hallucinations are not random; they often stem from how a model might process and prioritize information, which is determined by things like how well a model is trained and how it distributes attention. In transformer-based models like GPT or Claude, attention is the mechanism that helps the model decide which parts of the context are most relevant when generating a response. To better understand the concept of attention, imagine being at a noisy cocktail party. If someone calls your name, you instinctively tune in.

“Frodo! Over here!”

But what if four people call your name at once from different corners of the room?

“Frodo! It’s me, Sam!”

“Frodo! Come quick!”

“Frodo! Look this way.”

“Frodo … yesss, precious Frodo …”

You hear them all, but your focus is now split. You might even pay more attention to the voice you recognize or the one closest to you. Each sound gets a fraction of your attention, but not all equally. It’s not a perfect analogy, but it is one way to conceive of how attention works in large language models. The model pays attention to all tokens in the context window, but it gives more weight to some than to others. That’s why attention in large language models is often described as “weighted”, meaning that not all tokens are treated equally. This uneven distribution is key to understanding how models might prioritize information and why they sometimes appear to lose focus.
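
In transformer models this weighting is typically computed with a softmax over scaled dot-product scores: each token's key is compared with the current query, and the scores are normalized so the weights sum to one. The following minimal sketch uses made-up two-dimensional vectors standing in for the “voices” in the analogy. It shows that the best-matching token gets the largest share, and that a token's share shrinks as more competing tokens are added. Real models use learned, high-dimensional projections and many attention heads.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Normalize scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Scaled dot-product attention weights of one query over a list of keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


if __name__ == "__main__":
    # Made-up 2-D vectors: the first key matches the query best
    query = [1.0, 0.0]
    keys = [[2.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
    print([round(w, 3) for w in attention_weights(query, keys)])

    # One strong signal competing with 3 vs. 30 weaker ones
    for n in (3, 30):
        print(n, round(softmax([2.0] + [1.0] * n)[0], 3))
```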

More context may or may not mean better answers

A model can scan all tokens within the context window, but it doesn’t consider each token with equal interest. As the window fills (say, up to 100,000 tokens), the model’s attention becomes more diffuse. In its attempt to keep track of everything, clarity may diminish.

When this happens, the model’s grip on the conversation loosens, and a user might experience slower, less coherent responses or confusion between earlier and later parts of the conversation thread. Hallucinations, from the Latin hallucinat or “gone astray in thought,” often appear at this edge. It’s important to understand that these occurrences are not signs that the model is malfunctioning. It is actually an indication that the model is reaching its threshold, where it is operating at capacity. And here is where the model may struggle to maintain coherence or relevance across long spans of input.

From the model’s perspective, earlier tokens are still visible. But as the window fills up and its attention becomes more distributed, the precision of response may degrade. The model might misattribute facts from previous prompts or fuse unrelated ideas into something that sounds coherent but isn’t. In the case of hallucinations, the model isn’t lying. It’s reaching for a reasonable response from fragments it can no longer fully distinguish, making a guess under the strain of limited attention. And to be fair, the model is working with what it has, trying to make sense of a conversation that’s grown too big to reliably focus on. Understanding attention in this way helps explain why more context doesn’t always lead to better answers.

That said, long context windows (greater than 200,000 and now reaching 1 million or more tokens) can be genuinely useful, especially for complex reasoning and emerging applications like video processing. Newer models are being trained to handle longer contexts more effectively. With better architecture and training, models can more effectively manage attention across inputs, reducing hallucinations and improving responses. So, while more context doesn’t always lead to better answers, newer models are getting better at staying focused, even when the conversation gets really long.

Sequence length affects response time

Following the explanation of attention, it’s useful to understand how sequence length affects inference. We can now ask a practical question: What happens when we vary the sequence length?

The input sequence length affects time to first token (TTFT), the time from submitting the request to receiving the first output token. TTFT mainly reflects GPU compute performance: how quickly the GPU can process the input and compute the first output token. Varying the output sequence length, in contrast, affects how long decoding takes, which is governed by the inter-token latency (ITL), the time between consecutive generated tokens. This latency depends more heavily on memory performance.
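
Assuming a roughly constant inter-token latency, the two quantities combine into a simple first-order estimate of end-to-end latency for a single request, where OSL is the output sequence length in tokens:

$$T_{\text{e2e}} \approx \text{TTFT} + (\text{OSL} - 1)\cdot\text{ITL}$$

Input length mainly moves the first term, while output length multiplies the second.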

To explore this further, we used a first-order analytical model to estimate end-to-end latency during LLM inference. We ran the model using Llama 3 70B on a single GPU with high-bandwidth memory (HBM3E 12H, 36 GB per stack across eight placements) and a context window of 128,000 tokens.

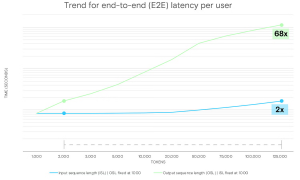

The chart below shows the impact of increasing input sequence length (ISL) and output sequence length (OSL) on the entire end-to-end latency. Each measurement was taken with a batch size of 1 (i.e., a single request).

Figure. End-to-end latency per user (seconds), for both output and input sequence lengths

Key takeaways

One important takeaway when measuring latency is that the model takes much more time to generate a long response than to process a long prompt. The model can read the input all at once, which is relatively fast even for lengthy prompts. Generating a response, however, happens token by token: the model follows an autoregressive process in which each new token depends on everything generated so far. For example, increasing the input sequence length (ISL) from 2,000 to 125,000 tokens results in only a roughly twofold increase in latency. In contrast, scaling the output sequence length (OSL) across the same range leads to a roughly 68-fold increase. This difference arises because longer input sequences drive more prefill computation, which can process many tokens in parallel. Decoding, meanwhile, is inherently sequential, generating one token at a time, so it takes more time and demands much more memory bandwidth.
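
The asymmetry can be illustrated qualitatively with a much simpler roofline-style estimate than the analytical model used in this study: treat prefill as compute-bound (about 2 FLOPs per parameter per input token, processed in parallel) and decoding at batch size 1 as memory-bound (all weights read once per generated token). The hardware figures and the 8-bit weight size below are assumptions. The sketch ignores KV-cache traffic, attention FLOPs and software overheads, so it is not calibrated to the results reported here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setup:
    """Assumed, illustrative model and hardware figures (not measured values)."""
    params: float = 70e9          # parameter count (70B-class model)
    bytes_per_param: float = 1.0  # assumed 8-bit weights
    peak_flops: float = 1e15      # assumed usable compute, FLOP/s
    mem_bw_bytes_s: float = 8e12  # assumed usable memory bandwidth, bytes/s


def prefill_time_s(s: Setup, isl: int) -> float:
    # Compute-bound: ~2 FLOPs per parameter per input token, processed in parallel
    return 2.0 * s.params * isl / s.peak_flops


def decode_time_s(s: Setup, osl: int) -> float:
    # Memory-bound at batch size 1: weights are streamed once per generated token
    return osl * s.params * s.bytes_per_param / s.mem_bw_bytes_s


def e2e_latency_s(s: Setup, isl: int, osl: int) -> float:
    # Simplification: every output token, including the first, is charged as a decode step
    return prefill_time_s(s, isl) + decode_time_s(s, osl)


if __name__ == "__main__":
    s = Setup()
    print(f"prefill 2,000 tokens: {prefill_time_s(s, 2_000):.2f} s")
    print(f"decode  2,000 tokens: {decode_time_s(s, 2_000):.2f} s")
```

With these assumptions, processing 2,000 input tokens takes about 0.28 s, while generating 2,000 output tokens takes about 17.5 s, and the decode term scales directly with memory bandwidth.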

The implication is that longer output sequences mean longer decode times, which keep the GPU and memory subsystem active longer. Power efficiency at the hardware level therefore becomes especially valuable. A memory device such as Micron HBM3E, which uses much less power than comparable high-bandwidth memory devices, can complete identical inference tasks while using less energy.
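A simple energy bookkeeping makes this argument explicit. The worked equations below rest on two assumptions. First, average power stays roughly constant over a request. Second, two memory options deliver the same bandwidth, so the task takes the same time on either. Under those assumptions, request energy and the saving from a lower-power memory device are approximately:

$$
E_{\text{request}} \approx \left(P_{\text{mem}} + P_{\text{rest}}\right)\left(t_{\text{prefill}} + t_{\text{decode}}\right), \qquad t_{\text{decode}} \approx \text{OSL} \cdot \overline{\text{ITL}}
$$

$$
\Delta E \approx \Delta P_{\text{mem}} \left(t_{\text{prefill}} + \text{OSL} \cdot \overline{\text{ITL}}\right)
$$

Here $P_{\text{rest}}$ lumps together GPU and other system power, and $\Delta P_{\text{mem}}$ is the difference in average memory power between the two devices. Both are illustrative symbols rather than measured values. Because $t_{\text{decode}}$ grows with OSL, the absolute energy saved by lower memory power grows with output length.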

For a user, this insight underscores the importance of optimizing prompts and managing input length (trimming any unnecessary content, for example). And if you’re building real-time apps, you can usually handle longer inputs without much trouble. But keeping the output concise may help your system stay fast and responsive.

The important role of memory for context length

Inference latency depends not only on sequence length but also on how the system handles compute and memory demands as it processes inputs and generates outputs. Many recently released language models advertise context windows exceeding one million tokens. When fully used, these larger context windows place greater stress on the memory subsystem, which users may experience as slower responses and longer runtimes.

Newer memory technologies will offer higher bandwidth and larger capacity to support these larger context windows, improving response times and overall throughput (tokens per second). These performance gains, however, raise questions about energy use. As inference workloads scale to millions of tokens, power-efficient system design becomes increasingly important. Systems that stay active for longer consume more energy, and memory devices designed to use less power without sacrificing bandwidth can help address this challenge. For example, Micron HBM3E consumes much less power than competing high-bandwidth memory devices, which can help reduce the energy AI consumes during inference workloads involving millions of tokens.

Looking ahead, next-generation memory technologies such as HBM4 and HBM4E are being designed to deliver even higher bandwidth and capacity while improving power efficiency. These improvements stem from advances in process technology, such as Micron's 1-gamma DRAM node, and are expected to enable faster data movement at lower energy cost. As these technologies mature, they may further reduce latency and improve throughput and energy efficiency in large-scale AI deployments.
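The memory pressure of long contexts can be made concrete with two estimates. The sketch below computes the key/value (KV) cache a transformer keeps during decoding, plus a bandwidth-only lower bound on each decode step. It assumes an approximate Llama 3 70B shape: 80 layers, 8 KV heads of dimension 128, BF16 values, and about 70B parameters. It also assumes a hypothetical 8 TB/s of aggregate memory bandwidth. The helper names are illustrative. The 1,000,000-token case is a hypothetical, beyond the 128,000-token window used in the latency study.

```python
GB = 1e9  # decimal gigabytes

# Approximate Llama 3 70B attention shape (grouped-query attention), BF16 values.
N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2
WEIGHT_BYTES = 70e9 * BYTES_PER_VALUE  # ~70B parameters stored in BF16


def kv_cache_bytes(context_tokens: int) -> int:
    """Bytes of cached keys and values needed for `context_tokens` tokens."""
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE  # one K + one V
    return per_token * context_tokens


def min_decode_step_seconds(context_tokens: int, bandwidth_bytes_per_s: float) -> float:
    """Lower bound on one decode step if all weights and cached K/V are read once."""
    return (WEIGHT_BYTES + kv_cache_bytes(context_tokens)) / bandwidth_bytes_per_s


if __name__ == "__main__":
    bandwidth = 8e12  # hypothetical 8 TB/s aggregate HBM bandwidth (placeholder)
    for ctx in (8_000, 128_000, 1_000_000):  # 1,000,000 is a hypothetical context length
        print(f"{ctx:>9,} tokens: KV cache {kv_cache_bytes(ctx) / GB:6.1f} GB, "
              f"decode step >= {min_decode_step_seconds(ctx, bandwidth) * 1e3:5.1f} ms")
```

The cache grows linearly with context: about 42 GB at 128,000 tokens and about 328 GB at a hypothetical 1,000,000 tokens. The latter exceeds the 288 GB provided by eight 36 GB HBM3E stacks before the roughly 140 GB of BF16 weights is even counted. It also stretches every decode step, since more bytes must be read per token.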

The post The Leading Five Essential Context Window Concepts In LLMs appeared first on ELE Times.