ELE Times

How to Build a Hacker-Proof Car: Insights from the Auto EV Tech Summit

Speaking at the Auto EV Tech Vision Summit 2025, Suresh D highlights the major cyber vulnerabilities and the corresponding technologies required to enable a safer and more resilient automotive ecosystem.

Recent studies suggest that the electronic content of passenger vehicles, including infotainment and ADAS along with the many sensors they bring in, is set to rise by 20–40 percent in the near future, turning automobiles into a new battleground for cybersecurity. Underlining this trend, Suresh D, Group CTO, Minda Corporation, CEO, Spark Minda Tech Centre & Board Member, Spark Minda Green Mobility, said, “A passenger vehicle is expected to see a 20–40 percent increase, nearly doubling in some cases, over the next two to three years, bringing in a large number of on-board electronic systems. This will significantly increase software content and complexity.” He was speaking at the Auto EV Tech Vision Summit 2025 held at KTPO, Bengaluru, on November 18–19, 2025.

He further goes on to add that the phenomenon will make Operating Systems and other software indispensable, escalating the security question in automobiles.

Critical Challenges on the way

He says that the new architecture of SDVs, in which a distributed architecture is being replaced by a centralised or zonal one, also poses security challenges. And because new vehicles remain constantly connected, through V2V or V2I links, their exposure to cyber risks escalates.

Further, he touches upon the critical threats to be tackled, including phishing, hacking, snooping, and malware. He goes on to cite some of the significant cyberattacks the automotive industry has seen in the recent past, ranging from CAN spoofing on a Jeep Cherokee in 2014 to the latest TESLAMATE attack on Tesla cars in 2025, underlining why cybersecurity is more relevant than ever.

Curious Case of SDVs & EVs

As EVs are on the rise across the world, Suresh D highlights how EV expansion and the need for robust charging systems also aggravate the risk. He explains that BMS parameters can be tampered with through several paths: a compromised supplier build server, a compromised internal bus, or a malicious charging station.

For SDVs, the attack scenarios he underlines range from unauthorised root access and pivoting through fleet management backends to compromised third-party apps and poorly protected cryptographic keys.

How to Tackle this?

In the latter part of his talk, he touches upon the steps that can be taken to avoid these risks and create a safer, more reliable cyber ecosystem for automobiles. First among them is the System Architecture approach. He says, “It refers to developing a robust architecture, understanding the OEM’s architecture and aligning the product accordingly.” He sums it up as thinking way ahead of the OEMs. It also includes hardware-level encryption and decryption to ensure that no vulnerability remains open to exploitation.

He also outlines a distinct approach, Embedded Edge Solutions, which means solving the problem at the source. It includes several protections, including secure flashing and secure boot. These rely on the OEM's plant server, which generates a distinct private key for each unit for later authorisation.
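The per-unit key idea can be sketched roughly as follows. This is a minimal illustration and not the scheme described at the summit: production secure boot normally relies on asymmetric signatures checked against a key anchored in hardware, whereas this sketch swaps in a symmetric HMAC from Python's standard library. The names `PLANT_MASTER_SECRET`, `derive_unit_key`, and the serial-number format are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical master secret that would live only on the plant server (assumption).
PLANT_MASTER_SECRET = b"demo-only-master-secret"


def derive_unit_key(serial_number: str) -> bytes:
    """Derive a distinct key for one unit from its serial number."""
    return hmac.new(PLANT_MASTER_SECRET, serial_number.encode(), hashlib.sha256).digest()


def sign_firmware(unit_key: bytes, image: bytes) -> bytes:
    """Plant side: compute an authentication tag over the firmware image."""
    return hmac.new(unit_key, image, hashlib.sha256).digest()


def verify_before_boot(unit_key: bytes, image: bytes, tag: bytes) -> bool:
    """Device side: accept the image only if its tag matches this unit's key."""
    expected = hmac.new(unit_key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)  # constant-time comparison


key_a = derive_unit_key("ECU-0001")
key_b = derive_unit_key("ECU-0002")
firmware = b"FIRMWARE v1.2"
tag = sign_firmware(key_a, firmware)

print(verify_before_boot(key_a, firmware, tag))         # genuine image, right unit
print(verify_before_boot(key_a, firmware + b"!", tag))  # tampered image
print(verify_before_boot(key_b, firmware, tag))         # tag issued for another unit
```

Because each unit has its own key, a tag captured from one unit does not authorise an image on another.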

For SDVs, he highlights a telematics-based approach consisting of three layers: Layer 1, in-vehicle security; Layer 2, vehicle communication security; and Layer 3, the cloud infrastructure. When the Internet Protocol is used for communication, IP addresses can be whitelisted and traffic encrypted and decrypted through SSL, enabling a better and safer environment.
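A minimal sketch of the two communication-layer controls mentioned here, IP whitelisting and SSL/TLS, using only Python's standard library. The network ranges and function names are hypothetical, and a real telematics unit would enforce this in its network stack or gateway rather than in application code.

```python
import ipaddress
import ssl

# Hypothetical backend ranges a telematics unit is allowed to talk to (assumption).
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.20.0.0/16"),
    ipaddress.ip_network("192.0.2.10/32"),
]


def is_allowed(peer_ip: str) -> bool:
    """Return True only if the peer address falls inside an allowlisted network."""
    address = ipaddress.ip_address(peer_ip)
    return any(address in network for network in ALLOWED_NETWORKS)


def make_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that verifies certificates and hostnames."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    return context


print(is_allowed("10.20.5.7"))    # inside an allowlisted range
print(is_allowed("203.0.113.9"))  # outside every allowlisted range
```

The allowlist check decides who may connect at all, while the TLS context protects what is exchanged once a connection is permitted.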

High Frequency Options: Granting More Immunity

He also underlines how automobiles these days usually come with smart keys or keyless access. This technology, referred to as Low-Frequency Radio Frequency (LFRF), is vulnerable to relay attacks. The industry is therefore gradually moving towards safer and more reliable options like Bluetooth and Ultra-Wideband (UWB), whose high-frequency signalling makes decoding highly difficult.

He adds that even these technologies are prone to cyberattacks, either at the server level or the device level. In response, techniques such as Bluetooth-based channel sounding have been developed, which measure distance more precisely and help make authentication more secure. This offers a turnkey secure foundation, making automobiles reliable and secure.
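Why precise ranging helps against relay attacks can be illustrated with a simple time-of-flight check. This is only a sketch: real channel sounding combines phase-based ranging, round-trip timing, and cryptographic protection, and the function names, reply delay, and 2 m unlock threshold below are assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def estimate_distance_m(round_trip_s: float, reply_delay_s: float) -> float:
    """Estimate key-to-car distance from a measured round trip.

    The key's known processing delay is subtracted, and the remainder is
    halved because the signal travels to the key and back.
    """
    time_of_flight_s = (round_trip_s - reply_delay_s) / 2.0
    return time_of_flight_s * SPEED_OF_LIGHT_M_PER_S


def should_unlock(round_trip_s: float, reply_delay_s: float,
                  max_distance_m: float = 2.0) -> bool:
    """Unlock only if the key is measurably within the allowed distance."""
    distance_m = estimate_distance_m(round_trip_s, reply_delay_s)
    return 0.0 <= distance_m <= max_distance_m


reply_delay_s = 100e-6                                 # assumed fixed key turnaround
genuine = reply_delay_s + 2 * 1.5 / SPEED_OF_LIGHT_M_PER_S  # key 1.5 m from the car
relayed = genuine + 200e-9                             # relay hardware adds 200 ns

print(should_unlock(genuine, reply_delay_s))
print(should_unlock(relayed, reply_delay_s))
```

A relay can forward the radio signal, but it cannot remove the extra travel time it introduces, so the relayed exchange appears tens of metres away and is rejected.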

The post How to Build a Hacker-Proof Car: Insights from the Auto EV Tech Summit appeared first on ELE Times.

Palo Alto Networks Unifies Observability and Security for the AI Era through Chronosphere Acquisition

As enterprises increasingly rely on AI to run digital operations, protect assets, and drive growth, success depends on one critical factor: trusted, high-quality, real-time data. Palo Alto Networks, the global cybersecurity leader, announced the completion of its acquisition of Chronosphere, addressing a core challenge of the AI era: the inability to see and secure the massive data volumes running modern businesses.

Chronosphere, a Leader in the 2025 Gartner Magic Quadrant for Observability Platforms, was purpose-built to handle this scale. While legacy tools break down in cloud-native environments, Chronosphere gives customers deep visibility across their entire digital estate. With this acquisition, Palo Alto Networks is redefining how organisations run at the speed of AI—by enabling customers to gain deep, real-time visibility into their applications, infrastructure, and AI systems — while maintaining strict control over data cost and value.

The planned integration of Palo Alto Networks Cortex AgentiX with Chronosphere’s cloud-native observability platform will allow customers to apply AI agents that can now find and fix security and IT issues automatically—before they impact the customer or the bottom line. AI security without deep observability is blind; this acquisition delivers the essential context across models, prompts, users, and performance to move from manual guessing to autonomous remediation.

Nikesh Arora, Chairman and CEO, Palo Alto Networks:

“Enterprises today are looking for fewer vendors, deeper partnerships, and platforms they can rely on for mission-critical security and operations. Chronosphere accelerates our vision to be the indispensable platform for securing and operating the cloud and AI. We believe that great security starts with deep visibility into all your data, and Chronosphere provides that foundation for our customers.”

Martin Mao, Co-founder and CEO, Chronosphere, is joining Palo Alto Networks as SVP, GM Observability and comments:

“Chronosphere was built to help the world’s most complex digital organisations operate at scale with confidence. Joining Palo Alto Networks allows us to bring AI-era observability to a global audience. Together, we’re delivering a new standard — where observability, security, and AI come together to give organisations control over their most valuable asset: data.”

The Chronosphere Telemetry Pipeline remains available as a standalone solution, enabling organisations to eliminate the ‘data tax’ associated with modern security operations. By acting as an intelligent control layer, the pipeline filters low-value noise to reduce data volumes by 30% or more while requiring 20x less infrastructure than legacy alternatives. This is key to Palo Alto Networks’ Cortex XSIAM strategy, ensuring customers can scale their security posture, not their spending, as they transition to autonomous, AI-driven operations.

Spectral Engineering and Control Architectures Powering Human-Centric LED Lighting

As technology continues to pursue personalisation and customisation at every level, illumination too has evolved from a basic need into something customisable. The LED industry is moving in the same direction, building customised, occasion-specific solutions that take into account human behaviour and the way light changes across the day. Long seen as constant and uniform, illumination is now being reimagined as dynamic and customisable.

In the same pursuit, the industry has moved towards Human-Centric Lighting (HCL), where lighting is designed and engineered to emulate natural daylight, brightening as the day begins and dimming as the Sun goes down. Illumination is increasingly being designed around human biology, visual comfort, and cognitive performance rather than simple brightness or energy efficiency.

But what lies behind this marvel is hardcore engineering. The result is made possible by spectral engineering and control architectures: the former adjusts the light spectrum, while the latter provides the intelligence that directs when and how the lighting changes. Together, they bring human-centric lighting into real-life use and make it more customised and personalised. This ultimately helps support human circadian rhythms, enhancing well-being, mood, and performance.

To enable these engineered outcomes, embedded sensors, digital drivers, and networked control platforms are integrated into the modern-day LED lights, transforming illumination into a responsive, data-driven infrastructure layer. In combination, spectral engineering and intelligent control systems are reshaping the capabilities of LED lighting, transforming it from a passive utility into a dynamic, precision-engineered tool for enhancing human wellbeing, productivity, and performance.

How is Spectral Power Distribution engineered?

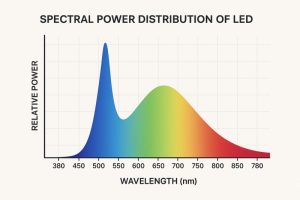

When we talk about LED lights, white light is the first thing that comes to mind, although that is not scientifically accurate. The LED chip in a typical white LED inherently emits blue light, not white. To turn the blue light into white, a phosphor coating is applied over the chip. The phosphor absorbs part of the blue light and re-emits it as green, yellow, and red light, and these colours mix with the remaining blue to appear white.

Spectral Power Distribution (SPD) is simply the profile of that white light, invisible to the naked eye, describing how much of each colour is present in it. The final light can be controlled by various means, such as the type of phosphor, how thick the phosphor layer is, or by adding extra coloured LEDs (like red or cyan).

Spectral Power Distribution is engineered by carefully mixing different colours of light inside an LED, even though it looks white, so that the light feels right for the human body and mind.

Engineering the Spectral Power Distribution of White LEDs

The very same white light can seem harsh at some times and soft at others, and the difference comes down to several variables. SPD, once a static characteristic, has become a tunable, controllable design variable. It is largely controlled by phosphor composition (which colours it emits), particle size and density, and layer thickness and distribution.

That’s why the same 4000K LEDs from different manufacturers can feel completely different: their SPDs are different, even if the Correlated Color Temperature (CCT) is the same. But as long as the final colour is fixed by choices made during manufacturing, the effect remains static. While spectral power distribution is essential, it is equally important to vary that behaviour according to the time of day.

Multi-Channel LED Configurations for Spectral Tunability

To make this spectral tunability possible in real time, engineers today use multiple LED channels, including:

- White + Red

- White + Cyan

- RGBW / RGBA

- Tunable white (warm white + cool white)

By precisely varying the current supplied to each LED channel, the spectral power distribution can be reshaped in real time, allowing the system to shift between blue-enriched and blue-reduced lighting modes as required. This level of control allows you to adjust the perceived colour temperature independently of the light’s biological impact, rather than having them locked together. As a result, SPD is no longer a fixed characteristic of the light source but becomes a dynamic, real-time controllable design parameter.
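For the simplest configuration, tunable white, the per-channel split can be sketched as follows. This is an approximation under stated assumptions, not a driver design from the article: it interpolates linearly in mired (reciprocal colour temperature), treats light output as proportional to current, and assumes both channels have equal efficacy. The 2700 K and 6500 K channel values and the 350 mA limit are hypothetical.

```python
def mired(cct_kelvin: float) -> float:
    """Convert a colour temperature in kelvin to mired (micro reciprocal degrees)."""
    return 1_000_000.0 / cct_kelvin


def tunable_white_mix(target_cct_k: float,
                      warm_cct_k: float = 2700.0,
                      cool_cct_k: float = 6500.0) -> tuple[float, float]:
    """Return (warm_fraction, cool_fraction) approximating the target CCT."""
    if not warm_cct_k <= target_cct_k <= cool_cct_k:
        raise ValueError("target CCT must lie between the two channel CCTs")
    cool_fraction = (mired(warm_cct_k) - mired(target_cct_k)) / (
        mired(warm_cct_k) - mired(cool_cct_k)
    )
    return 1.0 - cool_fraction, cool_fraction


def channel_currents_ma(target_cct_k: float, brightness: float,
                        max_current_ma: float = 350.0) -> tuple[float, float]:
    """Scale the mix by a 0..1 brightness level into per-channel currents."""
    warm_fraction, cool_fraction = tunable_white_mix(target_cct_k)
    return (warm_fraction * brightness * max_current_ma,
            cool_fraction * brightness * max_current_ma)


print(tunable_white_mix(4000.0))
print(channel_currents_ma(4000.0, brightness=0.5))
```

Adding a third channel such as cyan or red is what lets a system change melanopic content without moving the perceived colour temperature; two channels alone keep the two locked together.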

Melanopic Response, Circadian Metrics, and Spectral Weighting

When we talk about light, visibility and brightness used to be the primary concerns, but that has changed drastically with the emergence of Human-Centric Lighting (HCL). With HCL, photopic lux, the quantification of brightness, is no longer the go-to metric for judging lighting quality, because it captures only one side of the coin: visibility, and not how the light affects human biology.

Human-Centric Lighting, by contrast, focuses on how light affects the circadian system, alertness, sleep–wake cycles, mood, and hormonal regulation. This has brought up new metrics that tell us not only about brightness or visibility, but also about how the light acts biologically. One such metric is melanopic lux, which weights the spectrum based on melanopsin sensitivity. Melanopsin is a photopigment in our eyes that is most sensitive to blue-cyan light.

More melanopic stimulation leads to increased alertness and circadian activation, while less melanopic stimulation leads to relaxation and readiness for sleep. That brings us to the core of our subject: light-induced behaviour. The emergence of melanopic lux allows engineers to decouple visual brightness from biological effect, giving the right direction to Human-Centric Lighting.

While melanopic metrics define what kind of biological response light should produce, control architectures determine when and how that response is delivered. Translating circadian intent into real-world lighting behaviour requires intelligent control systems capable of dynamically adjusting spectrum, intensity, and timing throughout the day. This is where embedded sensors, digital LED drivers, and networked control platforms come into play, enabling lighting systems to modulate melanopic content in real time—boosting circadian stimulation during the day and reducing it in the evening—without compromising visual comfort or energy efficiency.
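The "when" part of such a control architecture can be as simple as a time-of-day schedule that the controller interpolates. The sketch below is illustrative only: the schedule points are hypothetical values chosen to show cooler light around midday and warmer light in the evening, not recommendations from the article or from any standard.

```python
# (hour of day, target CCT in kelvin): hypothetical schedule points (assumption).
SCHEDULE = [
    (0.0, 2700.0),
    (6.0, 2700.0),
    (9.0, 5000.0),
    (13.0, 6500.0),
    (18.0, 4000.0),
    (21.0, 2700.0),
    (24.0, 2700.0),
]


def target_cct(hour: float) -> float:
    """Linearly interpolate the scheduled CCT for a given hour (0 to 24)."""
    hour = hour % 24.0
    for (h0, cct0), (h1, cct1) in zip(SCHEDULE, SCHEDULE[1:]):
        if h0 <= hour <= h1:
            fraction = (hour - h0) / (h1 - h0)
            return cct0 + fraction * (cct1 - cct0)
    raise ValueError("schedule does not cover the requested hour")


for hour in (3.0, 7.5, 13.0, 19.5):
    print(hour, target_cct(hour))
```

In a real system, sensor inputs such as occupancy or ambient daylight would adjust or override this baseline curve.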

Other metrics, such as Melanopic Equivalent Daylight Illuminance (EDI) and Circadian Stimulus (CS), are used to quantify how effectively a light source supports circadian activation or melatonin suppression, beyond what photopic lux can describe.
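The spectral weighting behind these metrics can be sketched numerically. Everything in the block below is a toy model: the two sensitivity curves are crude Gaussian stand-ins rather than the tabulated CIE functions, the spectra are invented, and the resulting ratio is only meant to show the direction of the effect, not to produce real melanopic lux or EDI values.

```python
import math

WAVELENGTHS_NM = list(range(380, 781, 5))
STEP_NM = 5.0


def gaussian(wavelength_nm: float, peak_nm: float, width_nm: float) -> float:
    return math.exp(-0.5 * ((wavelength_nm - peak_nm) / width_nm) ** 2)


# Crude stand-ins for the sensitivity curves (assumption, NOT the CIE tables).
def photopic_weight(wavelength_nm: float) -> float:
    return gaussian(wavelength_nm, 555.0, 45.0)


def melanopic_weight(wavelength_nm: float) -> float:
    return gaussian(wavelength_nm, 490.0, 40.0)


def weighted_sum(spd: list[float], weight) -> float:
    """Integrate SPD times a sensitivity curve over wavelength (rectangle rule)."""
    return sum(p * weight(wl) for wl, p in zip(WAVELENGTHS_NM, spd)) * STEP_NM


def melanopic_to_photopic_ratio(spd: list[float]) -> float:
    return weighted_sum(spd, melanopic_weight) / weighted_sum(spd, photopic_weight)


def toy_white_spd(blue_share: float) -> list[float]:
    """Invented white spectrum: a narrow blue peak plus a broad phosphor hump."""
    return [
        blue_share * gaussian(wl, 450.0, 12.0)
        + (1.0 - blue_share) * gaussian(wl, 600.0, 60.0)
        for wl in WAVELENGTHS_NM
    ]


warm_ratio = melanopic_to_photopic_ratio(toy_white_spd(blue_share=0.1))
cool_ratio = melanopic_to_photopic_ratio(toy_white_spd(blue_share=0.5))
print(warm_ratio, cool_ratio)
```

In this toy model, the blue-enriched spectrum yields the higher melanopic-to-photopic ratio, meaning more biological stimulus for the same visual brightness.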

LED Drivers and Power Electronics for Dynamic Spectral Control

In human-centric lighting systems, LED drivers are no longer simple power supplies but precision control elements that translate circadian intent into real-world illumination. Because LEDs are current-driven devices, accurate current regulation is essential to maintain stable brightness and spectral output, especially as temperature and operating conditions change.

Dynamic spectral tuning typically relies on multi-channel LED architectures, making channel balancing a critical requirement. Each LED colour behaves differently electrically and thermally, and without independent, well-balanced current control, the intended spectral profile can drift over time, affecting both visual quality and biological impact.

Equally important is dimming accuracy. Human-centric lighting demands smooth, flicker-free dimming that preserves spectral integrity at all brightness levels, particularly during low-light, evening scenarios. Advanced driver designs enable fine-grained dimming and seamless transitions, allowing lighting systems to dynamically adjust spectrum and intensity throughout the day while maintaining visual comfort and circadian alignment.
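One common way to get smooth low-end dimming is to map the requested perceived brightness to light output through the CIE 1976 lightness relation before converting it to a PWM count. The sketch below assumes a hypothetical 12-bit PWM driver and is not a design described in the article; flicker performance also depends on PWM frequency and driver hardware, which it does not model.

```python
def perceived_to_duty(level_percent: float, resolution_bits: int = 12) -> int:
    """Map a perceived brightness level (0 to 100) to a PWM compare value.

    Uses the inverse of the CIE 1976 lightness (L*) function so that equal
    steps in the requested level look like roughly equal steps in brightness.
    """
    if not 0.0 <= level_percent <= 100.0:
        raise ValueError("level must be between 0 and 100")
    if level_percent <= 8.0:
        relative_luminance = level_percent / 903.3
    else:
        relative_luminance = ((level_percent + 16.0) / 116.0) ** 3
    max_count = (1 << resolution_bits) - 1
    return round(relative_luminance * max_count)


for level in (0, 1, 5, 25, 50, 100):
    print(level, perceived_to_duty(level))
```

Note how few counts are available at the low end: this is why evening scenes benefit from higher PWM resolution or from combining PWM with analogue current reduction.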

System Integration Challenges and Design Trade-Offs

While human-centric lighting promises precise control over both visual and biological responses, delivering this in real-world systems involves significant integration challenges and design trade-offs. Spectral accuracy, electrical efficiency, thermal management, and system cost must all be balanced within tight form-factor and reliability constraints. Multi-channel LED engines increase optical and control complexity, while higher channel counts demand more sophisticated drivers, sensing, and calibration strategies.

Thermal effects further complicate integration, as LED junction temperature directly influences efficiency, colour stability, and lifetime. Without careful thermal design and feedback control, even well-engineered spectral profiles can drift over time. At the same time, adding sensors, networking, and intelligence introduces latency, interoperability, and cybersecurity considerations that must be addressed at the system level.

Ultimately, successful human-centric lighting solutions are defined not by any single component, but by holistic co-design—where optics, power electronics, controls, and circadian metrics are engineered together. The trade-offs made at each layer determine whether a system merely adjusts colour temperature or truly delivers biologically meaningful, reliable, and scalable lighting performance.

Dell Technologies Enables NxtGen to Build India’s Largest AI Factory

Story Highlights

Dell AI Factory with NVIDIA to provide scalable and secure infrastructure for NxtGen’s AI platform, India’s first and largest AI factory, enabling national-scale AI development.

This milestone deployment accelerates India’s AI mission, enabling large‑scale generative, agentic, and physical AI while expanding NxtGen’s high‑performance AI services nationwide.

Dell Technologies today announced that NxtGen AI Pvt Ltd, one of India’s foremost sovereign cloud and AI infrastructure providers, has selected Dell AI Factory with NVIDIA solutions for building India’s first and largest dedicated AI factory. This milestone deployment will significantly expand India’s national AI capability, enabling large-scale generative AI, agentic AI, physical AI, and high-performance computing across enterprises, start-ups, and government programs.

Dell will provide the core infrastructure, including Vertiv liquid-cooled Dell PowerEdge XE9685L servers, delivered through Dell Integrated Rack Scalable Systems, for NxtGen’s new AI cluster, empowering the company to meet the growing demand for AI as a Service and large-scale GPU capacity.

Why it matters

This accelerated computing infrastructure is vital for advancing India’s AI mission, significantly expanding NxtGen’s AI cloud services for a diverse range of clients, from start-ups to academia and government. By empowering NxtGen with this advanced foundation, Dell is accelerating India’s next wave of AI development and innovation, ensuring critical access to high-performance AI capabilities across the region.

Powering the future of AI with advanced Dell AI infrastructure

The Dell AI Factory with NVIDIA combines AI infrastructure, software, and services in an advanced, full-stack platform designed to meet the most demanding AI workloads and deliver scalable, reliable performance for training and inference. Leveraging the Dell AI Factory with NVIDIA, NxtGen will deploy Vertiv liquid-cooled, fully integrated Dell IR5000 racks featuring Dell PowerEdge XE9685L servers with the NVIDIA accelerated computing platform to build a cluster with over 4,000 NVIDIA Blackwell GPUs, NVIDIA BlueField-3 DPUs, and NVIDIA Spectrum-X Ethernet networking, all purpose-built for AI. These will be complemented by Dell PowerEdge R670 servers and Dell PowerScale F710 storage.

Dell AI Factory with NVIDIA: Empowering AI for Human Progress

The Dell AI Factory with NVIDIA offers a full stack of AI solutions from data center to edge, enabling organizations to rapidly adopt and scale AI deployments. The integration of Dell’s AI capabilities with NVIDIA’s accelerated computing, networking, and software technologies provides customers with an extensive AI portfolio and an open ecosystem of technology partners. With more than 3,000 customers globally, the Dell AI Factory with NVIDIA reflects Dell’s leadership in enabling enterprises with scalable, secure and high-performance AI infrastructure.

The comprehensive Dell AI Factory with NVIDIA portfolio provides a simplified and reliable foundation for NxtGen to deliver advanced AI capabilities at speed and scale. This allows NxtGen to deliver on its core mission of providing sovereign, cost-effective and powerful AI services that help businesses grow and innovate, while at the same time reinforcing Dell’s commitment to providing the technology that drives human progress.

By equipping organizations like NxtGen with cutting-edge AI infrastructure and services, Dell is helping to unlock new possibilities and create a future where technology empowers everyone to achieve more.

Perspectives

“India’s rapid AI growth demands strong, reliable, and future-ready infrastructure,” said Manish Gupta, president and managing director, India, Dell Technologies. “Dell Technologies is addressing this need through the Dell AI Factory with NVIDIA, designed to simplify and scale AI deployments across industries. As the top AI infrastructure provider, we are enabling this shift by combining storage, compute, networking and software to accelerate AI adoption. Our collaboration with NxtGen brings these capabilities closer to Indian enterprises, helping them deploy AI efficiently and cost-effectively. This marks another step in our commitment to empowering India’s digital future through secure, scalable, and sovereign AI infrastructure.”

“NxtGen is committed to building India’s AI backbone,” said A. S. Rajgopal, managing director and chief executive officer, NxtGen. “This deployment marks a significant milestone for the country: India’s largest AI model-training cluster, built and operated entirely within India’s sovereign cloud framework. Dell Technologies has been critical in enabling this scale, performance, and reliability. Together, we are unlocking the infrastructure that will power the next generation of Indian AI models and applications.”

“India’s ambitious AI mission requires a foundation of secure, high-performance accelerated computing infrastructure to enable model and AI application development,” said Vishal Dhupar, managing director, Asia South, NVIDIA. “Dell’s integration of NVIDIA AI software and infrastructure, including NVIDIA Blackwell GPUs and NVIDIA Spectrum-X networking, provides the AI factory resources to help NxtGen accelerate this critical national capability.”

Quest Global Appoints Richard Bergman as Global Business Head of its Semiconductor Division

Bengaluru, India, January 28th, 2026 – Quest Global, the world’s largest independent pure-play engineering services company, today announced the appointment of Richard (Rick) Bergman as President & Global Business Head of its Semiconductor vertical.

As the Global Business Head, Rick will focus on shaping the division’s long-term strategy, accelerating revenue growth, and deepening relationships with global customers. His responsibilities include defining a multi-year growth roadmap, supporting clients’ success through high-impact and transformational solutions, especially in AI, automotive, and industrial sectors, and fostering a culture of innovation and operational excellence to meet next-generation engineering demands.

“The semiconductor industry is at a turning point, fueled by AI, system innovation, and shifting supply chains,” says Ajit Prabhu, Co-Founder and CEO, Quest Global. “Rick is a fantastic addition to our team. He brings incredible leadership across semiconductors and computing, plus a real talent for scaling organizations and building genuine, long-term relationships with customers. Bringing him on board is a clear sign of our commitment to growing this vertical and making sure Quest Global remains a humble, trusted partner for engineering and transformation in this space.”

“Semiconductors are the foundational enablers of innovation across AI, high-performance computing, automotive, communications, and industrial systems,” said Rick Bergman, President & Global Business Head – Semiconductor, Quest Global. “What attracted me to Quest Global is the company’s unique combination of deep engineering DNA, global scale, and a long-term partnership mindset with customers. As the industry navigates increasing complexity, my focus will be on helping customers solve their most critical engineering challenges while building a scalable, high-impact business.”

Rick brings more than two decades of leadership experience across semiconductors, computing, graphics, and advanced technology platforms. Most recently, he served as President and CEO of Kymeta Corporation. Previously, he held senior leadership roles at AMD, Synaptics, and ATI Technologies. Throughout his career, Rick has led multi-billion-dollar businesses, overseen major acquisitions, and built high-performing global teams.

This appointment underscores Quest Global’s commitment to building category-leading leadership and scaling its Semiconductor business, aligned with evolving customer needs.

About Quest Global

At Quest Global, it’s not just what we do but how and why we do it that makes us different. We’re in the business of engineering, but what we’re really creating is a brighter future. For over 25 years, we’ve been solving the world’s most complex engineering problems. Operating in over 18 countries, with over 93 global delivery centers, our 21,500+ curious minds embrace the power of doing things differently to make the impossible possible. Using a multi-dimensional approach, combining technology, industry expertise, and diverse talents, we tackle critical challenges faster and more effectively. And we do it across the Aerospace & Defense, Automotive, Energy, Hi-Tech, MedTech & Healthcare, Rail and Semiconductor industries. For world-class end-to-end engineering solutions, we are your trusted partner.

Def-Tech CON 2026: India’s Biggest Conference on Advanced Aerospace, Defence and Space Technologies to Take Place in Bengaluru

The two-day international technology conference is focused on promoting innovation in the Aerospace, Defence, and Space sectors, in conjunction with DEF-TECH Bharat 2026, held in Bengaluru.

With a strong India-centric focus, Def-Tech CON 2026 features high-impact keynote sessions, expert panels, technology showcases, and interactive Q&A sessions, covering areas such as AI & autonomous systems, cyber defence, unmanned systems, advanced materials, space tech, next-generation battlefield solutions, advanced sensors, secure communication networks, AI-driven command and control, electronic warfare systems, autonomous platforms, space-based surveillance, next-generation missile defence, and more. These technologies enable faster decision-making, enhanced interoperability, and greater operational dominance across land, air, sea, cyber, and space.

Designed as a venue for engineers, researchers, defence laboratories, industry leaders, startups, and system integrators, the conference unites India’s most brilliant minds to investigate emerging trends, groundbreaking solutions, and essential capabilities that are influencing the strategic future of the nation.

India-EU FTA to Empower India’s Domestic Electronics Manufacturing Industry to Reach USD 100 Billion in the Following Decade

The India–European Union Free Trade Agreement (FTA) is poised to significantly reshape India’s electronics landscape, with industry estimates indicating it could scale exports to nearly $50 billion by 2031 across mobile phones, IT hardware, consumer electronics, and emerging technology segments—up from the current bilateral electronics trade of about $18 billion.

A Global Supplier

“The agreement aligns directly with India’s shift from scale-led domestic manufacturing to export-oriented integration with global value chains, while promoting inclusive growth across regions and skill levels,” says Pankaj Mohindroo, Chairman, ICEA.

Emphasising the significance of the FTA, he adds that in electronics, it creates a credible pathway to build exports of nearly USD 50 billion by 2031 across electronic goods, including mobile phones, consumer electronics, and IT hardware. He further adds that the FTA carries the potential to exceed USD 100 billion in the following decade, anchored in manufacturing depth, job creation, innovation, and India’s emergence as a trusted global supplier.

Capitalising on a Standards-Driven Market

At a time when global trade and supply chains are being reshaped by uncertainty and fragmentation, the India–EU FTA underscores a shared commitment to stability, predictability, and a trusted economic partnership. As the world’s fourth- and second-largest economies respectively, India and the European Union together account for nearly 25 percent of global GDP and close to one-third of global trade.

For India, the agreement goes beyond expanding trade volumes; it represents deeper engagement with one of the world’s most standards-driven markets, anchored in demonstrated capability, regulatory maturity, and institutional strength.

Preferential Access

The agreement gains added significance as global value chains increasingly prioritise resilience, diversification, and trusted partnerships. Under the FTA, over 99 percent of Indian exports by value are expected to receive preferential access to the EU market, sharply improving export competitiveness. With its scale, policy predictability, and expanding industrial base, India is well-positioned as a credible manufacturing partner for European lead firms seeking long-term stability beyond traditional supply centres.

Entry of Swedish Company ‘KonveGas’ into India

Amidst this positive environment, KonveGas, a Swedish company specializing in gas storage technology, has officially announced its entry into the Indian market. The fact that European Small and Medium Enterprises (SMEs) are now directly engaging with Indian industries is seen as a direct impact of the new trade policy.

The company has selected Delhi, Pune, and Gujarat for its initial phase of operations. These regions are India’s primary automotive and industrial hubs. Following the FTA, business opportunities in these sectors are expected to grow. The company aims to begin direct operations within the next six months.

Anritsu Launches TestDeck Web Solution to enhance Test & Measurement

ANRITSU CORPORATION has launched TestDeck, a web-based solution designed to promote digital transformation (DX) of mobile device testing. TestDeck integrates test planning, configuration, execution, and results management by connecting multiple communication test and measurement systems to a web server and aggregating test data. This centralized approach streamlines test operations and supports new perspectives in test analysis.

The TestDeck web-based solution enhances the efficiency of test operations for communication test and measurement systems. TestDeck users can centrally manage test results and progress to rapidly identify performance trends and issues by device version using collected historical data. Furthermore, by visualizing and sharing centralized communication test and measurement systems, TestDeck optimizes testing across multiple domestic and international sites, helping cut test costs and shorten mobile device development cycles.

Anritsu is continuing to expand TestDeck functions to further advance test operations in the Beyond 5G and 6G eras.

Development Background

The number of required mobile device test items continues to grow as communication standards and device functions evolve, increasing the test burden for vendors. Additionally, fragmented test data from different global test environments makes cross-functional analysis and results sharing difficult. TestDeck addresses these challenges by aggregating and visualizing equipment and test data for efficient testing.

Product Overview

The TestDeck web solution promotes the digital transformation of testing. It supports efficient use of communication test and measurement systems, streamlines workflows, and optimizes testing on a global scale, improving efficiency and opening new analytical perspectives.

Key Features:

• Test Vision: Centralized management of test results for failure cause and device trend analyses

• Test Hub: Aggregated management of test environments, plans, reservations, execution, and results

• Test Utilization: Centralized management of test equipment and licenses

• Comprehensive Test Automation (for PCT*1/RFCT*2): Automated GCF/PTCRB-based test planning for efficient measurement system operation

Supported Products:

• 5G NR Mobile Device Test Platform ME7834NR

• New Radio RF Conformance Test System ME7873NR

• Rapid Test Designer Platform (RTD) MX800050A

• SmartStudio NR MX800070A

Contact Anritsu to learn more about TestDeck MX710000A

Technical Terms

*1 PCT

Abbreviation for Protocol Conformance Test, a key ME7834NR function for evaluating whether a device adheres to the various 3GPP communication protocol procedures in line with GCF/PTCRB certification requirements

*2 RFCT

Abbreviation for RF Conformance Test, a key ME7873NR function for evaluating whether a device's TRx characteristics meet 3GPP radio parameter specifications in line with GCF/PTCRB certification requirements


STMicroelectronics recognised as a Top 100 Global Innovator 2026

- Clarivate’s list ranks the organisations leading the way in innovation worldwide

- ST earns the distinction for the eighth time overall, including five consecutive years since 2022

STMicroelectronics (NYSE: STM), a global semiconductor leader serving customers across the spectrum of electronics applications, has been named in the Top 100 Global Innovators 2026. In its 15th edition, the annual benchmark from Clarivate, a leading global provider of transformative intelligence, identifies and ranks organisations that consistently deliver high-impact inventions, shaping the future of innovation across industries. The Top 100 Global Innovators navigate complexity with clarity and set the pace for invention quality, originality and global reach.

“We are honoured to be recognised as a Top 100 Innovator by Clarivate for 2026, marking our fifth consecutive year and eighth time overall receiving this distinction. This achievement underscores STMicroelectronics’ unwavering commitment to sustained, large-scale innovation in products and technologies, driven by the creativity and dedication of our global teams,” said Alessandro Cremonesi, Executive Vice President, Chief Innovation Officer, and General Manager, System Research and Applications. “As the pace of technological change accelerates, we work in open collaboration with customers and partners to develop disruptive semiconductor technologies and solutions in sensing, power and energy, connectivity, data communications, compute and edge AI, helping them turn ambitious ideas into market-defining solutions.”

ST invests significantly in R&D, and about 20% of company employees work on product design, development and technology in extensive collaboration with leading research labs and corporate partners around the world. The company’s Innovation Office focuses on connecting emerging market trends with internal technology expertise to identify opportunities, stay ahead of the competition, and lead in new or existing technology domains. ST is recognised as a leading semiconductor technology innovator in several areas, including smart power technologies, wide bandgap semiconductors, edge AI solutions, MEMS sensors and actuators, optical sensing, digital and mixed-signal technologies, and silicon photonics.

Maroun S. Mourad, President, Intellectual Property, Clarivate, said: “Recognition as a Top 100 Global Innovator is a remarkable achievement given the pace of change. Multi-year winners and new entrants are investing in AI innovation as it redefines the boundaries between research, engineering and commercial execution. The leaders we celebrate today are not just responding to this shift, they are designing for it.”

The Top 100 Global Innovators analysis is underpinned by the Clarivate Centre for IP and Innovation Research. Their analyses are founded in rigorous research leveraging the proprietary Derwent Strength Index, derived from the Derwent World Patents Index (DWPI) and its global invention data to measure the influence of ideas, their success and rarity, and the investment in inventions.

Detailed Methodology

The Top 100 Global Innovators uses a complete comparative analysis of global invention data to assess the strength of every patented idea, using measures tied directly to their innovative power. To move from the individual strength of inventions to identifying the organisations that create them more consistently and frequently, Clarivate sets two threshold criteria that potential candidates must meet and then adds a measure of their internationally patented innovation output over the past five years.

About STMicroelectronics

At ST, we are 50,000 creators and makers of semiconductor technologies, mastering the semiconductor supply chain with state-of-the-art manufacturing facilities. An integrated device manufacturer, we work with more than 200,000 customers and thousands of partners to design and build products, solutions, and ecosystems that address their challenges and opportunities, and the need to support a more sustainable world. Our technologies enable smarter mobility, more efficient power and energy management, and the wide-scale deployment of cloud-connected autonomous things. We are on track to be carbon neutral in all direct and indirect emissions (scopes 1 and 2), product transportation, business travel, and employee commuting emissions (our scope 3 focus), and to achieve our 100% renewable electricity sourcing goal by the end of 2027.


Aimtron Electronics acquires US-based ESDM and ODM company to expand global footprint

- Acquisition adds USD 17 million current revenue base, with a target to scale to ~USD 25 million within three years; consolidation from Q4 FY26

- Strengthens engagement with global OEM customers in mission-critical segments

- Advancing full-stack, mission-critical electronics capabilities

Aimtron Electronics Limited, an India-based electronics system design and manufacturing (ESDM) company with operations in the United States, announced the acquisition of ICS Company, a US-based ESDM and ODM company headquartered in Decatur. Based on CY 2025 estimates, the acquired business is expected to generate approximately USD 17 million in revenue, with stable performance across the year. Aimtron plans to consolidate revenues from Q4 of FY26.

The acquisition strengthens Aimtron’s capabilities by adding experienced engineering teams, proprietary intellectual property, and long-standing customer relationships, enhancing its end-to-end offerings across product design, manufacturing, and lifecycle support. ICS serves leading global OEMs across industrial and mission-critical segments, including Caterpillar and John Deere. This deepens Aimtron’s presence in high-reliability electronics and strengthens its engagement with global OEM customers.

The acquired facility is currently operating at around 54 per cent capacity utilisation, offering strong headroom for growth. Aimtron intends to scale utilisation to approximately 90 per cent over the next three years through improved procurement, enhanced operating leverage, and targeted capacity expansion.
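To put the three-year targets in perspective, the short Python sketch below works out the compound annual growth rate each one implies. It uses only the figures quoted in the announcement (revenue from USD 17 million to about USD 25 million, utilisation from 54 to 90 per cent). The assumption of a constant yearly growth rate and the function name `implied_annual_growth` are illustrative and do not come from Aimtron.

```python
def implied_annual_growth(start: float, target: float, years: int) -> float:
    """Constant yearly growth rate that takes `start` to `target` in `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (target / start) ** (1.0 / years) - 1.0


# Figures quoted in the announcement; the constant-rate assumption is illustrative.
revenue_growth = implied_annual_growth(17.0, 25.0, 3)      # USD million
utilisation_growth = implied_annual_growth(54.0, 90.0, 3)  # per cent of capacity

print(f"Implied revenue growth: {revenue_growth:.1%} per year")
print(f"Implied utilisation growth: {utilisation_growth:.1%} per year")
```

Under that assumption, the revenue target works out to roughly 14 per cent growth a year and the utilisation target to roughly 19 per cent a year.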

The transaction has been evaluated on both trailing and forward financials and is expected to be margin- and EPS-supportive from year one, while remaining aligned to Aimtron’s ROCE and ROE benchmarks.

Mukesh Jeram Vasani, Founder, Aimtron Electronics Limited, said, “This acquisition is a decisive step in Aimtron’s global journey. It significantly expands our footprint in North America, deepens our engineering strength, and positions us closer to customers building mission-critical products. With scalable infrastructure, strong regional opportunity, and a highly capable team, this integration accelerates our move toward a truly full-stack ESDM platform and reinforces our ambition to build a global electronics powerhouse.”

The acquired company operates a 58,000 sq. ft. manufacturing facility located on approximately 3.9 acres in Decatur. Strategically positioned within an established industrial and OEM ecosystem, the facility serves customers across agrotech, oil and gas, mining, hardened electronics, heavy engineering, and medical technology.

Dennis Espinoza, Founder & CEO of ICS Company, said, “Joining the Aimtron family marks an exciting new chapter for ICS. By aligning our deep-rooted Midwest engineering focus with Aimtron’s global resources and manufacturing scale, we can now offer our long-standing customers even more comprehensive end-to-end solutions. This partnership empowers us to accelerate innovation and better serve the rising demand for rugged, high-reliability electronics in critical industries like agrotech and heavy engineering.”

Nirmal Vasani, Chief Operating Officer, Aimtron Electronics, said, “The acquisition of ICS is a cornerstone of our ‘Glocal’ strategy, combining the engineering precision of the US with the immense scale of our Indian manufacturing ecosystem. This is a significant leap toward our vision of becoming a ₹1,000 crore global ESDM powerhouse.”

Chris Espinoza, Vice President of ICS Company, said, “Under the Aimtron umbrella, we now have the scale, resources, and global reach to accelerate growth while continuing to serve our customers with the same engineering focus and operational discipline. Our Midwest location—at the heart of North America’s most productive farming regions—also positions us well to support the rising demand for advanced agrotech and rugged electronics solutions.”

Recently, Aimtron incorporated Aimtron Mechatronics, a wholly owned subsidiary in Gujarat, and raised ₹100 crore through preferential convertible warrants. Together with this US acquisition, these initiatives are expected to play a key role in fulfilling Aimtron’s mission of achieving ₹1,000 crore in revenue over the next three years.


Microchip Introduces 600V Gate Driver Family for High-Voltage Power Management Applications

The post Microchip Introduces 600V Gate Driver Family for High-Voltage Power Management Applications appeared first on ELE Times.

From Power Grids to EV Motors: Industry Flags Key Budget 2026 Priorities for India’s Next Growth Phase

As India approaches Union Budget 2026–27, multiple industrial sectors—from power and automation to digital infrastructure and electric mobility—find themselves at a critical inflexion point. With the country balancing rapid industrialisation alongside sustainability and energy-transition goals, industry leaders are calling for continued capital expenditure, targeted incentives, and policy stability to strengthen infrastructure depth and global competitiveness.

At the core of these recommendations is the need to reinforce India’s power and grid ecosystem. According to Meenu Singhal, Regional Managing Director, Socomec Group, Greater India, sustained capex allocation, grid modernisation, and deeper indigenisation of critical power equipment will be essential to support rising industrial and digital demand. Industry stakeholders are urging the government to prioritise scalable manufacturing clusters, digitally enabled grid infrastructure, and structural reforms that improve reliability and execution efficiency.

Strategic schemes such as capex support mechanisms, fiscal incentives for local manufacturing, and policies favouring large-scale infrastructure implementation are seen as vital to closing capability gaps across transmission and distribution networks. Equally important, experts stress, is policy consistency and an enabling tax framework that continues to attract both domestic and global capital into the power sector, reinforcing India’s long-term vision of energy security and sustainable growth.

Automation as a Manufacturing Multiplier

Beyond core infrastructure, industrial automation has emerged as a key lever for enhancing India’s manufacturing competitiveness as the economy advances towards the $5-trillion milestone. Sanjeev Srivastava, Business Head – Industrial Automation SBP at Delta Electronics India, highlights that smart factories, AI-driven automation, and closer human–machine collaboration will define the next phase of industrial transformation.

Industry players believe that stronger Budget support in the form of smart manufacturing incentives, R&D-linked tax benefits, and skill-development programmes can significantly accelerate the adoption of next-generation automation technologies. Such measures would help manufacturers improve productivity, reduce operating costs, and strengthen India’s position on the global manufacturing and automation curve.

Also read industry’s recommendations on the Union Budget 2026 at: PCB Duty Cuts to Manufacturing Zones: Top Industry Recommendations for Budget 2026

Digital Infrastructure and Data Centres

As India moves deeper into the 5G, cloud, and AI era, mission-critical digital infrastructure is increasingly being viewed as the backbone of every industry. Pankaj Singh, Head – Data Centre & Telecom Business Solutions at Delta Electronics India, notes that the upcoming Budget presents an opportunity to prioritise energy-efficient and resilient data-centre ecosystems.

Industry recommendations include stronger incentives for modular and containerised data-centre deployments to enable faster rollout of scalable core and edge facilities. There is also a growing emphasis on supporting advanced cooling technologies—such as liquid-to-liquid and liquid-to-air coolant distribution systems—to manage the high thermal loads associated with AI-driven workloads. When complemented with sustainability-linked benefits and Make-in-India incentives for locally manufactured power, cooling, and automation equipment, these measures could encourage OEMs to invest with greater confidence in building a future-ready, low-carbon digital backbone.

Strengthening the EV Manufacturing Base

Meanwhile, India’s electric mobility ecosystem is entering a decisive phase, where long-term resilience and supply-chain stability are becoming as critical as adoption numbers. Bhaktha Keshavachar, Co-Founder & CEO of Chara Technologies, points out that while policy efforts have successfully focused on vehicle adoption and battery localisation, recent global disruptions have exposed vulnerabilities stemming from India’s dependence on imported rare-earth magnet motors.

As Budget 2026 approaches, industry voices are calling for formal recognition and fiscal support for magnet-free motor technologies within existing incentive frameworks. These solutions offer predictable costs, reduced supply-chain risk, and the development of indigenous intellectual property—particularly for high-volume segments such as two-wheelers, three-wheelers, and commercial fleets.

Targeted incentives for rare-earth-free motor manufacturing, stakeholders argue, would not only de-risk India’s EV ambitions but also position the country as a global hub for affordable, resilient, and export-ready EV powertrain solutions.

The Road Ahead

Taken together, these pre-Budget recommendations underline a shared industry priority: building resilient, scalable, and future-ready industrial ecosystems through focused policy support. Whether in power infrastructure, automation, digital systems, or electric mobility, Budget 2026 is widely seen as a pivotal opportunity to reinforce India’s transition towards sustainable growth, technological leadership, and global manufacturing competitiveness.


India’s Next Big Concern in the AI Era: Cybersecurity for Budget 2026

Artificial Intelligence (AI), like any other technology, comes with its own set of boons and banes. According to the Stanford AI Index 2024, India ranks first globally in AI skill penetration with a score of 2.8, ahead of the US (2.2) and Germany (1.9). AI talent concentration in India has grown by 263% since 2016, positioning the country as a major AI hub. India also leads in AI Skill Penetration for Women, with a score of 1.7, surpassing the US (1.2) and Israel (0.9).

India in its AI Era

According to a PIB report, India is one of the top five fastest-growing AI talent hubs, alongside Singapore, Finland, Ireland, and Canada. The demand for AI professionals in India is projected to reach 1 million by 2026. With this progress in mind, all eyes will be on Budget 2026 to see what the government proposes to boost India’s AI landscape.

“India’s rapid advancement in the AI era places the spotlight on the upcoming Union Budget as a decisive moment for building a future-ready workforce. With over 40% of India’s IT and gig workforce already utilising AI tools, and India projected to account for the world’s AI talent by 2027, there is clear momentum; yet, significant gaps remain.

Although the employability of the workforce has improved, only a part of the young workforce possesses deep AI skills, and many companies complain about the difficulties in hiring the right people. The provision of more funds for AI workforce training programs, along with the National Education Policy’s emphasis on the incorporation of applied AI, data science, and digital technologies into the curriculum, is important. We anticipate policies that foster close cooperation between the industry and the universities, provide incentives for certifying the basic knowledge gained through practical training, and allow more students to have access to hands-on labs and internships. Not only will these measures lift the entry-level skills of the labour force, but they will also make it certain that the young population of India is capable of turning to the global market as the main supplier of leaders in the coming years,” says Tarun Anand, Founder & Chancellor, Universal AI University.

Shortcomings of AI: Cybersecurity Threats

While AI has great potential and offers advanced opportunities in various sectors, it comes with its own set of shortcomings. Cybersecurity issues have escalated significantly in the AI era. Consequently, it will be important to see what the new Budget has in store for cybersecurity, as AI will continue to dominate the Indian landscape.

“As India approaches the 2026 Union Budget, the cybersecurity sector does so with clarity: compliance is no longer optional, and policy must now accelerate infrastructure transitions that enterprises cannot manage alone. In 2025, India faced nearly 265 million cyberattacks, with AI-driven ransomware democratizing threats at an unprecedented scale.

First, cybersecurity data centre infrastructure must be formally recognised as a critical national asset. Expanding the PLI framework to include cybersecurity data centres would strengthen India’s cyber sovereignty and reduce reliance on offshore infrastructure.

Second, the Budget should enable public–private partnerships to bolster SME cyber resilience. Manufacturing and mid-market enterprises are increasingly targeted by ransomware-as-a-service, yet lack access to enterprise-grade security. Government-backed subsidies routed through certified MSP networks would protect the Make in India ecosystem while democratizing DPDP compliance at scale.

Third, India must invest decisively in cybersecurity talent infrastructure. With a shortage of over 80,000 professionals, Budget 2026 should fund structured partnerships between government, academic institutions, and industry certifiers. This would create a domestic talent pipeline comparable to Singapore’s model. While we currently train over 2,000 professionals annually, government backing could scale this to more than 10,000 within three years.

The DPDP execution phase, starting in November 2026, will ultimately determine whether cyber resilience scales equitably across the country or remains concentrated in metro markets. Through targeted investments in infrastructure, partnerships, and education, the 2026 Budget has the opportunity to shape that outcome decisively,” says Rajesh Chhabra, General Manager, India and South East Asia, Acronis.

By: Shreya Bansal, Sub-Editor


Anritsu Unveils Visionary 6G Solutions at MWC 2026

ANRITSU CORPORATION is showcasing next-generation wireless solutions at MWC 2026 in Barcelona (Hall 5 Stand D41). The company’s portfolio includes software-centric tools for early 6G standardisation, a pioneering 6G measurement and AI-powered test solution, a unified RF multiband and NTN validation platform, Field Simulation Test for digital twin development, cloud-based automotive validation for ADAS and SDV, sustainable IoT power consumption evaluation, and intelligent assurance for mobile, fixed, and private infrastructures. Together, these innovations confirm Anritsu’s vision to deliver trustworthy, sustainable, and high-performance connectivity, helping operators, manufacturers, and industry verticals unlock new value as networks evolve beyond intelligence.

As a global leader in communications test and measurement, Anritsu continues to empower innovators with tools that support the evolving demands of connectivity, automation, and network intelligence.

6G Test Platform for Early 6G Standardisation and Validation

Anritsu’s Virtual Signalling Tester is a software-based signalling tester for advanced 6G validation. The Virtual ST-based solution supports L1/physical-layer, protocol, and application tests, and can test at the MAC layer, DIQ, and RF interface (with SDR). It is equipped with an arbitrary waveform output function, a capability being considered for 6G, making it highly useful for early validation during the 6G standardisation phase.

Pioneering 6G Measurement and AI-Driven Test Solution

Anritsu demonstrates a groundbreaking 6G measurement solution, designed to redefine wireless testing with AI at the core of the workflow. This next-generation solution harnesses advanced data management and AI-powered analytics to simplify complex test processes, reduce engineering workload, and accelerate development cycles. AI-assisted test sequence generation improves accuracy and optimises resource usage by learning from historical test patterns and real-world performance data. This intelligent approach ensures customers can meet the rapidly evolving demands of 6G technology, digital automation, and scalable network intelligence.

Unified 6G Multiband and NTN Validation Platform

The MT8000A Radio Communication Test Station now integrates support for the Upper Mid-Band (up to 16 GHz), enabling comprehensive RF front-end testing across FR1, FR2, and FR3 bands within a single modular platform. This unified architecture streamlines validation workflows for 6G devices, allowing simultaneous multi-band characterisation, inter-band handover testing, and advanced signal integrity analysis. In addition, the platform introduces next-generation NTN (Non-Terrestrial Network) measurement capabilities, including Direct-to-Cell and NR-NTN protocols delivered as a software upgrade. Engineers can leverage real-time emulation of satellite and aerial link conditions, protocol stack verification, and seamless integration with existing test automation environments. These enhancements empower engineers to efficiently validate NTN features, optimise RF performance, and maintain a competitive edge as wireless technologies evolve toward 6G.

Reproducing Real Networks: FST for Digital Twin Development

Anritsu demonstrates its unique Field Simulation Test (FST) solution, designed to capture real-world radio environments and accurately reproduce complex network propagation conditions in the laboratory. This innovative approach allows engineers to replicate issues observed in live networks and verify propagation scenarios. Moreover, the collection of propagation data supports the development of Digital Twin environments for research into next-generation wireless technologies, such as ISAC and CSI compression.

Future-Ready Automotive Testing: Cloud-Based Validation for Connected SDV Use Cases

Anritsu, in collaboration with Valeo, is demonstrating a Virtualised Automotive Testing Solution designed to accelerate software development and reduce testing costs for connected and autonomous vehicles. By integrating Anritsu’s virtual connectivity solution for 5G and C-V2X connectivity with Valeo’s virtualised hardware and ECU simulation platform, this joint solution enables comprehensive validation of Connected SDV functions, eliminating the need for physical vehicles or test tracks. The approach delivers faster time-to-market, improved safety, and global scalability through cloud integration, transforming automotive testing into a cost-effective, future-ready process.

Power Consumption Testing for Smarter, Sustainable IoT Devices

As industries accelerate toward smarter, more connected solutions, the demand for low-power, sustainable devices is reshaping the landscape of IoT technology. Anritsu introduces a cutting-edge power consumption test environment, empowering engineers to evaluate sensors, wearables, and smart home systems under real-world operating conditions. This real-world approach provides actionable insights into energy usage, battery life, and optimisation potential, enabling precise measurement and analysis that drive smarter design choices and longer-lasting products. Leveraging the advanced capabilities of the Anritsu MT8000A platform, Qoitech’s Otii power measurement suite, and SmartViser’s expertise in intelligent test automation and orchestration, this solution sets a new benchmark for energy efficiency in IoT device development.

From Complexity to Clarity to Confidence. Applied AI for Autonomous Networks

Anritsu’s Service Assurance platform is a unified solution that transforms network complexity into a competitive advantage. By embedding intelligence directly into the service assurance workflow, Anritsu allows operators to surface the signals that matter most. Data from across the network is correlated, converting fragmented insights into actionable guidance that engineers and operations teams can trust.

This continuous AI-driven understanding of service health accelerates decision-making and forms the foundation for autonomous network transformation. The result is a clear, unified operational picture that empowers teams to act with confidence and deliver superior customer experiences.

Across our AI-powered assurance portfolio, Anritsu’s purpose-built intelligence delivers measurable business outcomes: lower costs, higher satisfaction, and faster resolution.


PCB Duty Cuts to Manufacturing Zones: Top Industry Recommendations for Budget 2026

As the nation gears up for the Union Budget 2026, slated to be presented in Parliament on February 1, electronics industry associations are stepping up efforts to push India’s electronics manufacturing ecosystem to its next phase of growth.

Among the key recommendations, the Electronic Industries Association of India (ELCINA) has proposed the establishment of 10 world-class, product-specific Electronics Manufacturing Zones (EMCs). The association has urged the government to upgrade the existing EMC 1.0 and EMC 2.0 cluster models to globally accepted infrastructure standards. According to ELCINA, such an approach would help ensure regional balance, improve local facilitation, and enhance India’s competitiveness and export potential.

Strengthening the manufacturing value chain further, the India Cellular & Electronics Association (ICEA) has recommended a reduction in customs duty on microphone, receiver, and speaker assemblies for mobile phones from the current 15% to 10%. The association believes that this duty rationalisation would create cumulative cost advantages, improve global competitiveness, and encourage additional investments in domestic component manufacturing.

ICEA has also suggested reducing duties on Printed Circuit Board Assemblies (PCBAs) and Flexible PCB Assemblies (FPCAs) from 15% to 10%, a move aimed at supporting localisation and scale in electronics production.

Testing & Certification

For any industry, standards play a major role, whether for exports or inbound use; without certification, no product can see the light of day. Recognising this to be at the forefront of product development, ELCINA recommends introducing a Testing & Certification Support Scheme to provide financial support or reimbursement of testing and certification charges to MSMEs.

Also, to make these services accessible to small entities with minimal investment, the body recommends establishing regional accredited testing centres in collaboration with private labs, industry associations, and technical institutions, in line with BIS and other international standards. This would secure one of the essentials for strengthening the domestic manufacturing and R&D ecosystem.

Investment Fund for SMEs

As the Union Budget 2026 approaches, ELCINA has also highlighted the long-standing challenge of limited access to low-cost finance for SMEs in the electronics system design and manufacturing (ESDM) sector, noting that funding constraints continue to hamper their ability to scale and invest in advanced technologies. To address this, the association has recommended the creation of a dedicated Technology Acquisition Fund to support technology transfer and licensing, enabling Indian firms to move up the value chain and transition towards a product-led ecosystem.

ELCINA has also proposed a professionally managed, government-backed venture fund to support high-value-added manufacturing in electronic components, PCBs, and modules, along with targeted tax incentives, including investment- and dividend-stage exemptions for at least five years, to attract private equity and high-net-worth investors and help build globally competitive Indian champions.

Classification of Displays

In the same vein, the India Cellular and Electronics Association (ICEA) has raised concerns over the lack of clarity in the customs classification of display assemblies used across automobiles, medical devices, industrial electronics, and other applications. Although these displays are technologically identical to flat panel display modules used in mobile phones and televisions, they are often classified under different HSN codes based on end-use, resulting in inconsistent customs treatment and operational uncertainty across field formations.

To address this, ICEA has recommended uniform classification of all display assemblies under HSN 8524, regardless of application, a step it says would ensure global alignment, reduce classification disputes, and enable smoother integration of display manufacturing across product segments as domestic capacity scales up under the Electronics Component Manufacturing Scheme (ECMS).

In conclusion, the industry’s pre-Budget recommendations point to a clear priority: strengthening India’s electronics manufacturing depth through targeted policy, fiscal, and regulatory interventions. With focused action on infrastructure, duties, finance, and classification clarity, Budget 2026 has the opportunity to accelerate India’s shift from assembly-led growth to globally competitive electronics manufacturing.

The post PCB Duty Cuts to Manufacturing Zones: Top Industry Recommendations for Budget 2026 appeared first on ELE Times.

CEA-Leti Advances Silicon-Integrated Quantum Cascade Lasers for Mid-Infrared Photonics

CEA-Leti presented new research at SPIE Photonics West highlighting major progress in the integration of quantum cascade lasers (QCLs) with silicon photonic platforms for mid-infrared (MIR) applications.

The paper, titled “Advanced Architectures for Hybrid III-V/Silicon Quantum Cascade Lasers: Toward Integrated Mid-Infrared Photonic Platforms,” compares three complementary hybrid laser architectures that collectively advance the practicality, flexibility, and scalability of MIR photonics.

Toward ‘Smaller, More Robust, and More Manufacturable MIR Systems’

Mid-infrared light plays a critical role in technologies such as gas sensing, chemical spectroscopy, biomedical diagnostics, and security, because many molecules exhibit strong absorption signatures in this spectral region. Despite the technology’s importance, MIR photonic systems remain large, costly, and difficult to manufacture at scale. Integrating MIR light sources directly onto silicon photonic platforms offers a path toward smaller, more robust, and more manufacturable systems—bringing mid-infrared photonics closer to the level of integration in the near-infrared.

Three Architectures, Three Integration Strategies

In its Photonics West presentation, CEA-Leti demonstrated and compared three distinct hybrid III-V/silicon QCL architectures, each addressing a different integration challenge:

Hybrid Distributed Feedback QCL on Silicon-on-Nothing-on-Insulator with Adiabatic Coupling

- This approach enables robust single-mode emission around 4.3 µm with efficient optical power transfer from the III-V active region into silicon waveguides. High-index-contrast silicon photonics provides precise feedback and light routing, making this architecture well-suited for scalable photonic integrated circuits targeting spectroscopy and chemical sensing.

Hybrid QCL with an External Silicon Distributed Bragg Reflector Cavity

- In this configuration, optical gain and optical feedback are decoupled: the III-V material provides amplification, while wavelength selection and feedback are implemented in silicon using distributed Bragg reflector (DBR) cavities. This separation offers enhanced design flexibility and opens a clear path toward tunable and multifunctional MIR sources for advanced spectroscopic and sensing systems.

Ultra-Compact QCL Micro-Sources Based on Photonic Crystals & Micro-Rings

- Miniature light sources in these devices achieve footprints below 100 µm² by leveraging strong optical confinement and resonant effects. The resulting extreme miniaturization enables dense on-chip integration and supports new system architectures where size, power consumption, and integration density are critical.
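The grating-based feedback in the first two architectures above (distributed feedback and distributed Bragg reflector) rests on the standard Bragg condition, in which the grating period equals the grating order times the wavelength divided by twice the effective index. The sketch below only illustrates the length scale involved: the effective index of 3.0 is an assumed round number, not a value reported by CEA-Leti, and the function name is hypothetical.

```python
def bragg_period_um(wavelength_um, n_eff, order=1):
    """Grating period (in micrometres) that satisfies the Bragg condition.

    period = order * wavelength / (2 * n_eff)
    """
    if wavelength_um <= 0 or n_eff <= 0 or order < 1:
        raise ValueError("wavelength and n_eff must be positive, order >= 1")
    return order * wavelength_um / (2.0 * n_eff)


# 4.3 um is the emission wavelength quoted for the DFB device;
# n_eff = 3.0 is an ASSUMED effective index used purely for illustration.
period = bragg_period_um(4.3, n_eff=3.0)
print(f"first-order grating period: {period:.3f} um")  # 0.717 um
```

Under that assumption the grating period comes out below one micrometre, which gives a sense of the feature sizes that have to be patterned in the silicon layer.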

From Passive Platform to Active Host

Collectively, the results show that silicon photonics can play an active role in mid-infrared laser systems. By combining adiabatic optical coupling, silicon-based feedback and cavity engineering, and ultra-compact laser concepts, CEA-Leti establishes several viable integration pathways rather than a single, one-size-fits-all solution. The work highlights how different architectures trade off stability, flexibility, and footprint, providing designers with a practical toolkit for MIR photonic systems.

“By combining quantum cascade lasers with silicon photonics, we are bringing mid-infrared sources closer to the level of integration and scalability that silicon platforms have already achieved in the near-infrared,” said Alexis Hobl, presenter and lead author of the paper.

Looking Ahead

Future work will focus on further improving optical coupling efficiency, fabrication robustness, and thermal and electrical management, as well as integrating additional on-chip photonic functions such as filters, multiplexers, and interferometric circuits. Demonstrating wafer-scale reproducibility and packaging-ready designs will be key milestones on the path toward fully integrated mid-infrared photonic systems.

Acknowledgements: L’Institut des Nanotechnologies de Lyon (INL), III-V Lab, and the Fraunhofer Institute for Applied Solid State Physics IAF contributed to this project.

The post CEA-Leti Advances Silicon-Integrated Quantum Cascade Lasers for Mid-Infrared Photonics appeared first on ELE Times.

IOS-MCN develops India’s Open Source Platform to Build Private 5G network led by IISc Bengaluru, IIT Delhi, CDAC, MeitY

The Indian Open Source for Mobile Communication Networks (IOS-MCN) Consortium has publicly released a new open-source software platform that allows organisations to build and run their own Private 5G networks. Designed for factories, campuses, research institutions and startups, the platform lets users deploy Private 5G networks that promise faster, more reliable and more secure connectivity than Wi-Fi or public mobile networks, at a lower cost.

The release, named Agartala 0.4.0, continues IOS-MCN’s convention of using Indian city names to reflect its national, open-source character. It is the fourth open-source milestone of India’s homegrown Private 5G platform and is mature enough for pilot deployments. Validation tests show end-to-end latency of under 10 milliseconds and downlink throughput of up to 600 Mbps per gNB, making it suitable for early pilots and enterprise trials.

The IOS-MCN is being developed by a consortium led by IISc Bengaluru, IIT Delhi, and C-DAC, with funding from the Ministry of Electronics & Information Technology (MeitY), Government of India. Agartala 0.4.0 marks a decisive step towards industry-grade, deployable Private 5G networks built on an open-source platform.

What’s New in Agartala 0.4.0

The release brings all key parts of a private 5G network into one integrated, easy-to-deploy software platform:

- Advanced Mobility: Xn, F1, and N2 handovers, cell reselection, and robust RRC idle mode handling

- Voice & Multimedia: VoNR and ViNR with full IMS integration

- ORAN-Compliant Disaggregated RAN with unified RAN support

- Network Slicing for enterprise and mission-critical use cases

- Service Management & Orchestration (SMO) with a unified dashboard

- RIC Framework: E2 interface implementation with near-RT and non-RT RIC support

- Precision Timing: PTP LLS C1 and C3

- Operational Excellence: PM counters across RAN and Core, extensive crash and assert fixes, and expanded test coverage

Agartala 0.4.0 positions IOS-MCN for early pilot deployments led by ecosystem partners, with installations planned to commence in the second half of 2026 across multiple sectors.

Niral Networks, which builds indigenous telecom and networking solutions, has proposed an Intelligent Village pilot. It stated, “Agartala 0.4.0 provides the foundational technology that enables Private 5G deployments for rural and semi-urban environments. We will leverage the IOS-MCN stack to deliver real-world analytics and digital inclusion solutions through initiatives like the Intelligent Village Pilot and Smart Village Connectivity Program.”

Techphosis, which develops secure technology solutions for defence and strategic applications, has proposed a Network-in-a-Box pilot for defence applications. It said: “Agartala 0.4.0 demonstrates the readiness of open-source, ORAN-compliant platforms for defence use cases. The Network-in-a-Box pilot, planned for the second half of 2026, aims to validate rapid, secure, portable and reliable Private 5G deployments for defence communications.”

Together, these proposed pilots, spanning several sectors, underscore IOS-MCN Agartala 0.4.0’s readiness to transition from platform development to industry validation and deployment starting in 2026.

The post IOS-MCN develops India’s Open Source Platform to Build Private 5G network led by IISc Bengaluru, IIT Delhi, CDAC, MeitY appeared first on ELE Times.

How A Real-World Problem Turned Into Research Impact at IIIT-H

The idea for a low-cost UPS monitoring system at IIIT-H did not begin in a laboratory or a funding proposal. It began with a familiar frustration – raised by Prakash Nayak, a campus IT staffer who was tired of equipment failures with no clear explanation.

Power outages were happening. Servers were restarting. Despite the installation of UPS units everywhere, no one could say with certainty what the UPS systems were actually doing when the lights went out. That real-world problem became the starting point for a research project that has now resulted in a ₹2,000 IoT-based device capable of tracking UPS behaviour during outages with near-second precision.

The research was documented in a paper titled “Low-cost IoT-based Downtime Detection for UPS and Behaviour Analysis,” by authors Sannidhya Gupta, Prakash Nayak, and Prof. Sachin Chaudhari. It also received the Best Paper award at the 18th International Conference on COMmunication System and NETworkS (COMSNETS-2026) Workshop on AI of Things, recently held in Bangalore.

When monitoring costs more than the problem

“Frequent power outages in developing regions cause equipment damage, operational downtime, and data loss,” says Sannidhya Gupta, noting that while UPS systems are meant to provide protection, “affordable options for monitoring their performance remain limited.” Commercial UPS monitoring tools – typically SNMP cards that collect and organise information about managed devices over IP networks – were an option, but an impractical one. According to the paper, “Commercial solutions are expensive, manufacturer-specific, and reliant on network infrastructure.” With prices exceeding ₹20,000 per unit, the campus IT team simply could not justify deploying them at scale. Worse, these tools often failed at the moment they were most needed. “These systems are unable to record data when the UPS itself loses power,” the authors point out, making post-outage diagnosis nearly impossible.

A device that watches, not interferes

Responding directly to the IT team’s request for something affordable and reliable, the researchers designed a non-intrusive current-monitoring device. Instead of tapping into UPS internals, it clamps onto the input and output lines, observing how current flows before, during, and after outages. “UPS input and output currents are sensed non-intrusively to detect outages, switchovers, and recovery behaviour,” the researchers explain. Additionally, the device is battery-backed, allowing it to keep recording even when both mains power and internet connectivity are lost.

From theory to campus corridors

To test the system, the team deployed it across four UPS installations on campus, including one unit already suspected by IT staff to be malfunctioning. Over a month, the devices collected around 3.7 million data points, automatically detecting 61 outage events. The data confirmed what the IT team had suspected but could never prove. “One UPS repeatedly showed no clear charging behaviour after outages,” reports Prakash, indicating a system that could briefly support loads but failed to properly recharge its batteries.

Smart algorithms, simple assumptions

The backend analytics automatically labels each event into phases – normal operation, outage, stabilisation, and battery charging – without manual configuration. “All thresholds are expressed as fractions of a locally estimated baseline,” the authors note, adding that this allows the system to adapt to different installations automatically. The results were precise: no missed outages, no false alarms, and timing errors typically within three seconds.
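The idea of baseline-relative thresholds can be illustrated with a short sketch. This is not the authors' algorithm: the function name, the use of the median as the baseline, the threshold fractions, and the fixed settling window are all assumptions chosen to show how fractions of a locally estimated baseline can replace hard-coded ampere values.

```python
from statistics import median


def label_phases(input_current, outage_frac=0.2, charge_frac=1.1,
                 settle_samples=2):
    """Label each UPS input-current sample with a phase name.

    Every threshold is a fraction of a baseline estimated from the data
    itself (here the median, an assumed choice), so the same settings can
    be reused on installations with very different load levels.
    """
    baseline = median(input_current)
    labels = []
    since_outage = None  # samples seen since the last outage ended
    for amps in input_current:
        if amps < outage_frac * baseline:
            # Input current has collapsed: mains is down.
            labels.append("outage")
            since_outage = 0
            continue
        if since_outage is not None and since_outage < settle_samples:
            # Short settling window right after power returns.
            labels.append("stabilisation")
            since_outage += 1
        elif since_outage is not None and amps > charge_frac * baseline:
            # Draw above baseline after an outage: battery is recharging.
            labels.append("charging")
        else:
            labels.append("normal")
            since_outage = None  # back at baseline, recovery is over
    return labels


# Hypothetical trace in amperes: steady load, an outage, then recovery.
trace = [5.0, 5.0, 5.0, 0.1, 0.0, 6.5, 6.2, 6.0, 5.8, 5.0, 5.0]
print(label_phases(trace))
```

In this sketch, a UPS whose trace never produces a "charging" label after an outage would correspond to the kind of unit described earlier as showing no clear charging behaviour.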

Real-time monitoring, ten times cheaper

A web-based dashboard now gives IT staff something they never had before: visibility. Instead of guessing whether a UPS is healthy, administrators can now see it. Plus, they have access to historical analysis of UPS behaviour. Built using off-the-shelf components, the device costs about ₹2,000 – roughly one-tenth the price of commercial monitoring cards. “Its affordability, power independence, and portability make it a practical option for cost-constrained environments,” concludes Sannidhya.

Research grounded in reality

What sets this work apart is not just the technology, but its origin. This was research born out of a real operational pain point, brought directly by the people responsible for keeping systems running. “It is important to note that IT staff, Mr. Prakash, is part of the research paper we have published. He is also part of the patent we have recently filed on this. This highlights the value of treating campus operations teams as co-creators of research problems rather than mere end users – a mindset that leads to more relevant and impactful outcomes,” states Prof. Chaudhari. In a landscape where academic research is often criticised for being disconnected from reality, this project offers a counterexample: researchers took note when a problem was identified on the ground and built something that changes how systems are understood and managed.

The post How A Real-World Problem Turned Into Research Impact at IIIT-H appeared first on ELE Times.