ELE Times

NXP and NVIDIA Collaborate to Deliver New Innovations for Advanced Physical AI

NXP Semiconductors N.V. announced innovative robotics solutions for reliable, secure, real-time data processing and transport and advanced networking, enabling sensor fusion, machine vision and precision motor control. First in a series of NXP’s foundational robotics solutions, these ready-to-deploy solutions were developed in collaboration with NVIDIA and implement NVIDIA Holoscan Sensor Bridge with NXP’s highly integrated SoCs. This reduces discrete components, significantly shrinking footprint, power and cost, while also simplifying the software complexity of robotic sensing and actuation, including humanoid form factors.

Physical AI is the next frontier of innovation, featuring systems that can sense, interpret, and interact with their surroundings with precision, reliability and safety. Humanoid robots are one of the most advanced embodiments of physical AI, requiring secure, reliable, low-latency data processing and transport throughout the robot body to enable synchronised motion, dense sensor fusion and advanced actuation.

NXP’s new integrated robot body solutions directly address this challenge, delivering powerful edge intelligence and low-latency networking to enable safe, secure, real-time communication. These solutions seamlessly integrate NVIDIA Holoscan Sensor Bridge into NXP’s software enablement, allowing developers to easily implement real-time processing and establish a direct transport route between the body and pre-specified regions of the robot brain, substantially reducing latency. This significantly simplifies the challenges of bringing AI into the physical world, where real-time decision-making is a critical requirement.

“Physical AI is redefining what machines can do in the real world, and humanoid robots represent the most complex expression of that revolution,” said Charles Dachs, Executive Vice President and General Manager, Secure Connected Edge, NXP Semiconductors. “By combining NXP’s deep expertise in edge processing, secure networking, functional safety and real-time control with NVIDIA robotics platforms, we are greatly simplifying physical AI development, enabling seamless connectivity between the physical AI edge and the central brain. This is just the beginning of what NXP will deliver to accelerate the ecosystem for physical AI.”

“The development of autonomous machines requires a high-performance computing architecture that can synchronise complex motor controls with real-time perception,” said Deepu Talla, vice president of robotics and edge AI at NVIDIA. “By integrating NVIDIA Holoscan Sensor Bridge into its edge portfolio, NXP is providing developers with a scalable foundation to accelerate the deployment of physical AI.”

The collaboration between NXP and NVIDIA helps define a unified architecture for full-body humanoid robotics. NXP’s edge processors, motor control MCUs, automotive-grade networking technology, high-throughput asymmetric data transport capability acquired through Aviva Links, and functional safety expertise built on decades of automotive experience, combined with NVIDIA AI infrastructure, create a flexible, energy-efficient system architecture for next-generation robots.

The first Holoscan Sensor Bridge-ready solutions in NXP’s robotic portfolio include a machine vision solution based on the i.MX 95 applications processor delivering high-bandwidth data to the robot brain. It also includes a motor control solution based on a kinematic chain of i.MX RT1180 crossover MCUs, aggregated by NXP’s S32J TSN switch, which connects directly to the brain. This motor control solution features integrated support for popular industrial protocols such as EtherCAT® and TSN. These flexible and software‑driven solutions are highly integrated to reduce footprint, power and cost, without sacrificing performance, safety or security, providing a complete, scalable foundation for full-body humanoid robot design.

The post NXP and NVIDIA Collaborate to Deliver New Innovations for Advanced Physical AI appeared first on ELE Times.

EDOM Showcases Physical AI & Robotics Applications at GTC 2026

EDOM Technology will participate in NVIDIA GTC for the third consecutive year under the theme “From AI to Action: Physical AI in Motion.” Together with ecosystem partners, EDOM will showcase its latest AI computing platforms, key components, and system integration capabilities. At Booth #242, EDOM will present physical AI and robotics solutions powered by NVIDIA Jetson hardware and software resources, demonstrating how AI technologies are being developed and deployed across diverse fields such as smart healthcare, vision recognition, and speech understanding. The showcase will highlight EDOM’s capability to connect the AI ecosystem and accelerate industry innovation through integrated solutions.

During the GTC exhibition, EDOM Technology plans to demonstrate several innovative applications that combine physical AI with edge computing. With the introduction of the NVIDIA Jetson Thor, AI inference and control architectures can now be integrated into a single system, providing a high-performance computing foundation for real-time closed-loop control and multimodal sensing, and accelerating the real-world deployment of multimodal intelligent robots.

In the robot interaction showcase, EDOM collaborates with Algoltek to present “Dexterous Hand AI.” Powered by 4D AI Vision technology and vision-action models, the system can recognise and predict audience hand gestures in real time and respond accordingly. This demonstration highlights low-latency AI inference and instant feedback, while also presenting a complete Sim-to-Real workflow, from virtual simulation to physical deployment. EDOM also partners with Nexuni to present “AI Workforce: Embodied Intelligence.” Built on the NVIDIA Thor platform, the system enables dual-arm robot manipulation through few-shot learning. The demonstration shows a service robot performing household tasks, including object recognition, grasping, and fabric folding. Because fabric is a highly deformable material, handling it requires complex visual perception, state estimation, and coordinated dual-arm control. By leveraging Jetson Thor’s edge AI inference and real-time dynamic path correction, the system continuously adjusts its movements during the folding process, improving task success rate and operational stability. This highlights the potential of physical AI in smart manufacturing, human-robot collaboration, and service robotics.

In the area of enterprise AI and smart biotech applications, EDOM collaborates with Avalanche Computing to showcase the “Secure Offline Generative AI” platform. Combining enterprise-grade private LLMs with real-time speech intelligence, the platform runs on the NVIDIA Jetson edge platform, enabling low-latency offline speech recognition and semantic analysis. This allows generative AI to deliver real-time interaction within highly secure enterprise environments. EDOM is also working with CyteSi to present the “Software-Defined High-Throughput Wet Lab,” an AI-driven laboratory automation platform. CyteSi’s EWOD (Electrowetting-on-Dielectric) technology digitally controls micro-droplets with precision, supporting workflows such as NGS sample preparation, drug discovery, and synthetic biology research. The system uses NVIDIA Jetson as its edge computing core, integrating biochips from Japan Display Inc. (JDI) with real-time image analysis capabilities to provide an intuitive user interface and highly efficient experimental workflow, advancing AI adoption in smart biotech research and automated laboratories.

In addition, EDOM will showcase the “NVIDIA Jetson Thor Peripheral Ecosystem,” integrating a range of EDOM-certified peripheral components, including high-speed storage, Wi-Fi 6/6E and 5G communication modules, GMSL and MIPI cameras, 10G high-speed networking, sensors, camera modules, and high-speed I/O interfaces. This ecosystem helps developers rapidly build next-generation robotics and physical AI systems. Through comprehensive hardware integration and platform support, EDOM assists robotics developers in accelerating product design, deployment, and mass production, further expanding the physical AI and Jetson Thor ecosystem.

Jeffrey Yu, CEO of EDOM Technology, stated, “The key to physical AI lies not only in computing performance, but in comprehensive hardware–software integration and ecosystem collaboration. Through NVIDIA’s Three-Computer architecture and the Jetson Thor platform, we help customers build scalable, production-ready, end-to-end AI solutions—from model training and simulation validation to edge deployment.” He further noted that EDOM is not just a hardware supplier, but is committed to integrating peripheral modules, AI software frameworks, and partner resources to help enterprises accelerate the adoption of AI and robotics technologies.

The post EDOM Showcases Physical AI & Robotics Applications at GTC 2026 appeared first on ELE Times.

Deep Learning-Based Predictive Maintenance: The Backbone of Smart Manufacturing 4.0

Unplanned downtime remains one of the most persistent and costly challenges in modern manufacturing. Studies and industry assessments from organisations such as Siemens and the Aberdeen Group have consistently shown that unexpected equipment failures cost global manufacturers tens of billions of dollars every year, with large automotive plants, semiconductor fabs, and energy facilities losing millions of dollars per hour during major production disruptions.

In the current manufacturing landscape, where production systems operate with minimal margins and global supply chains are under continuous pressure, downtime has evolved from a technical inconvenience to a significant strategic liability.

With the advent of Industry 4.0, manufacturing facilities have transitioned from isolated mechanical environments to complex digital ecosystems comprising interconnected machines, industrial electronics, sensors, software platforms, and automation. In this context, traditional maintenance approaches, such as reactive repairs or fixed-schedule servicing, are increasingly misaligned with contemporary operational requirements.

Predictive maintenance (PdM), enabled by deep learning and industrial artificial intelligence, is fundamentally transforming approaches to reliability in manufacturing. Rather than reacting to failures, organisations can now anticipate them, plan interventions proactively, and maintain uninterrupted production. Predictive maintenance, once considered a support function, is increasingly recognised as a core strategic capability.

From Rules to Learning: How Deep Learning Predicts Failures

Earlier predictive maintenance systems relied on fixed thresholds and rule-based logic, triggering alerts when temperature, vibration, or current crossed predefined limits. While effective for detecting obvious faults, these approaches were inherently reactive and struggled to capture the complex, nonlinear behaviour of modern equipment operating under variable loads and conditions.

Deep learning signifies a fundamental transition from rule-based systems to data-driven intelligence. Instead of relying on manually encoded expert assumptions, deep learning models extract knowledge directly from historical and real-time data, identifying subtle, multi-parameter patterns that precede failures, often weeks in advance and prior to the activation of conventional alarms. These early indicators are typically undetectable when individual signals are analysed in isolation.

In addition to enhancing prediction accuracy, deep learning facilitates a strategic shift toward probabilistic and horizon-based maintenance planning. Maintenance decisions are guided by remaining useful life estimates and associated confidence levels, rather than binary fault alerts, enabling teams to prioritise interventions, manage operational risk, and align maintenance actions with production objectives. Several deep learning techniques are now widely applied in industrial environments.

Convolutional Neural Networks (CNNs)

CNNs are commonly used to analyse vibration spectrograms, thermal images, acoustic signatures, and visual inspection data. Subtle changes in these signals, often undetectable to human operators, can indicate early-stage bearing wear, imbalance, or surface degradation.

Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) Networks

Manufacturing equipment continuously generates time-series data from sensors embedded in motors, pumps, gearboxes, and actuators. LSTM models are particularly effective at learning long-term temporal dependencies, making them well-suited for predicting gradual wear, fatigue accumulation, and performance drift.

Autoencoders for Anomaly Detection

Autoencoders learn the normal operating behaviour of machines. When incoming data deviates from this learned baseline, the system flags anomalies that may signal emerging faults, even when labelled failure data is limited.
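The idea of learning a normal baseline and flagging deviations from it can be illustrated without a neural network. The sketch below is a deliberately simplified stand-in for an autoencoder: instead of a learned reconstruction, it fits a per-channel mean and standard deviation on healthy data and uses the largest z-score as the anomaly score. The sensor readings, the `fit_baseline` and `anomaly_score` helpers, and the threshold of 4.0 are illustrative assumptions, not values from any deployed system.

```python
from statistics import mean, stdev

def fit_baseline(healthy_rows):
    """Learn per-channel mean and standard deviation from healthy samples."""
    channels = list(zip(*healthy_rows))  # transpose rows into channels
    return [(mean(c), stdev(c)) for c in channels]

def anomaly_score(row, baseline):
    """Largest absolute z-score across channels (stand-in for reconstruction error)."""
    return max(abs(x - m) / s for x, (m, s) in zip(row, baseline))

# Hypothetical healthy readings: (vibration mm/s, temperature degC, current A)
healthy = [
    (2.0, 60.0, 10.0),
    (2.2, 61.0, 10.2),
    (1.8, 59.0, 9.8),
    (2.1, 60.5, 10.1),
    (1.9, 59.5, 9.9),
]
baseline = fit_baseline(healthy)

THRESHOLD = 4.0  # assumed alert level; in practice tuned per asset

normal_sample = (2.05, 60.2, 10.0)
drifting_sample = (3.5, 60.4, 10.1)  # vibration creeping up, other channels normal

for sample in (normal_sample, drifting_sample):
    score = anomaly_score(sample, baseline)
    print(sample, round(score, 2), "ANOMALY" if score > THRESHOLD else "ok")
```

Only healthy data is needed to fit the baseline, which mirrors why this family of methods remains usable when labelled failure examples are scarce. A real autoencoder would additionally capture nonlinear relationships between channels that independent z-scores cannot.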

Practically, these models serve as digital reliability engineers by continuously monitoring assets and providing early warnings well in advance of potential production disruptions.

However, the effectiveness of learned intelligence is fundamentally dependent on the quality of the physical systems responsible for sensing, capturing, and transmitting data from the factory floor.

The Electronics Foundation Behind Predictive Intelligence

Deep learning-based predictive maintenance does not exist in isolation. Its effectiveness depends critically on the quality, reliability, and consistency of data originating from industrial electronics and sensing infrastructure. The performance of an AI model is fundamentally bounded by the fidelity of the signals it receives, which determines how accurately physical degradation mechanisms, such as bearing wear, insulation breakdown, or mechanical imbalance, are reflected in the data domain.

High-precision MEMS vibration sensors, thermal imaging modules, acoustic sensors, pressure sensors, and current-monitoring ICs form the data backbone of predictive systems. If these sensors are poorly calibrated, noisy, or inconsistently sampled, even the most advanced deep learning models will learn misleading patterns.

At the edge, industrial gateways and AI-capable processors facilitate local, low-latency analytics, thereby reducing reliance on cloud connectivity. This capability is particularly critical in sectors such as semiconductor manufacturing, automotive robotics, and power generation, where even milliseconds of delay or brief connectivity interruptions can result in significant consequences. In this context, sensors, edge processors, and industrial communication networks are foundational enablers of predictive intelligence rather than merely supporting components.

Industry Adoption: From Pilots to Production

Across sectors, deep learning-driven predictive maintenance is moving steadily from pilot projects to full-scale deployment.

Automotive Manufacturing

Automotive manufacturers increasingly apply AI-driven analytics to robotic assembly lines, analysing torque, vibration, and process parameters. These systems reduce unplanned downtime, stabilise quality, and support a transition from fixed maintenance schedules to condition-based strategies.

Aerospace and Aviation

Rolls-Royce remains a reference point in this domain. Through its Engine Health Monitoring and Total Care programs, the company uses advanced analytics to anticipate component degradation, improve fleet availability, and enhance safety, demonstrating the long-term value of predictive intelligence in mission-critical systems.

Energy and Utilities

Power plants rely on deep learning models to detect early signs of turbine imbalance, transformer insulation ageing, and rotating equipment faults. Early detection reduces outage risk and supports more reliable grid operations.

Electronics and Semiconductor Manufacturing

In semiconductor fabs, where uptime and yield are paramount, AI-based diagnostics monitor temperature stability, vibration, and process consistency. Predictive maintenance plays a central role in maintaining the precision required for advanced chip fabrication.

Industry Perspective: Insights from the Field

According to Sanjeev Srivastava, an industry spokesperson with extensive experience in industrial automation and intelligent manufacturing systems, the evolution of predictive maintenance reflects a deeper transformation in how manufacturers approach reliability and operational efficiency.

He observes that the transition from rule-based monitoring to learning-driven intelligence enables organisations to detect early-stage stress and degradation patterns that would otherwise remain invisible until failure. In this view, predictive maintenance is no longer a standalone analytics initiative but an integral part of how modern factories manage uptime, energy efficiency, and long-term asset performance.

This perspective aligns with a broader industry consensus that deep learning–based predictive maintenance is increasingly influencing strategic decision-making at the factory level, moving beyond experimental deployments.

Practical Challenges That Still Matter

Despite its advantages, the implementation of deep learning–based predictive maintenance presents challenges that extend beyond algorithmic development. Frequently, organisational and data-related constraints are more formidable than the technological aspects.

Data Quality and Consistency

Deep learning models require large volumes of reliable data. Poor sensor calibration, noise, and inconsistent sampling can significantly degrade prediction accuracy.

Legacy Equipment Integration

Many factories operate a heterogeneous mix of new and ageing equipment that was never designed for continuous data sharing. Retrofitting sensors and integrating AI insights with existing PLCs, ERP, and MES systems requires careful engineering and cross-functional coordination.

Model Transparency and Trust

Maintenance engineers with decades of experience are unlikely to act on AI recommendations without appreciating their rationale. Explainable AI techniques are, therefore, essential for building trust and encouraging adoption on the factory floor.

Scalability Across Sites

Models trained in one plant may not transfer directly to another due to differences in equipment, operating conditions, and maintenance practices. Hybrid cloud–edge architectures and continuous retraining are essential for enterprise-wide deployment.

Cost constraints and return-on-investment timelines also significantly influence adoption, especially when predictive maintenance initiatives compete with other capital priorities within the plant.

The Road Ahead for Predictive Maintenance

Several trends are shaping the next phase of predictive maintenance:

- Edge AI for real-time, low-latency predictions

- Digital twins that simulate asset behaviour and support model training without disrupting production

- Federated learning to enable collaborative model improvement while preserving data privacy

- AI-driven maintenance orchestration linking predictions with scheduling and spare-part logistics

- Greater alignment with industrial standards such as IEC 62443 for cybersecurity and ISO 55000 for asset management

Digital twins provide substantial advantages; however, their effectiveness is contingent upon model fidelity and close synchronisation with real operational data. When anchored in live data rather than functioning as standalone simulations, digital twins serve as powerful tools for model training and workforce preparation without incurring downtime risks.

Edge AI, meanwhile, brings intelligence closer to the machine, enabling resilient, real-time decision-making even in connectivity-constrained environments. Together, these technologies are shaping more autonomous, responsive, and scalable maintenance systems.

Importantly, the future of predictive maintenance does not entail replacing engineers. Rather, artificial intelligence augments human expertise by managing continuous monitoring, anomaly detection, and early warning tasks at a scale unattainable by human teams alone.

Conclusion: A Strategic Shift, Not a Technology Trend

Deep learning–based predictive maintenance is transforming the management of reliability, efficiency, and risk in the context of Smart Manufacturing 4.0. Through the integration of advanced algorithms, robust industrial electronics, and edge computing, organisations are able to anticipate failures, minimise downtime, extend asset lifespans, and enhance safety.

While challenges remain, momentum across industries is unmistakable. Advances in explainable AI, digital twins, and edge intelligence are accelerating adoption and lowering practical barriers.

Engineers continue to play a critical role in interpreting predictions, balancing safety with production priorities, and making high-impact decisions. It is in this collaboration between human judgment and machine intelligence that predictive maintenance is realised. Within this context, deep learning–based predictive maintenance should be regarded not as an isolated artificial intelligence initiative, but as the foundational reliability backbone of Smart Manufacturing 4.0.

The post Deep Learning-Based Predictive Maintenance: The Backbone of Smart Manufacturing 4.0 appeared first on ELE Times.

How Ethernet accelerates the move to software-defined vehicles

By: Madison Ecklund, systems manager, Body Electronics & Lighting

A zone architecture and Ethernet represent the future of networking in vehicles. New features in vehicles, as well as the shift to aggregating sensors and actuators into zonal control modules, require a high-bandwidth and low-latency in-vehicle communication network. A zone architecture implementing Ethernet enables the growing trend of the software-defined vehicle.

Most vehicles today are built using a type of wiring and electronic control unit (ECU) architecture called a domain architecture. A domain architecture categorises ECUs into domains based on specific functions, regardless of their physical location in the vehicle.

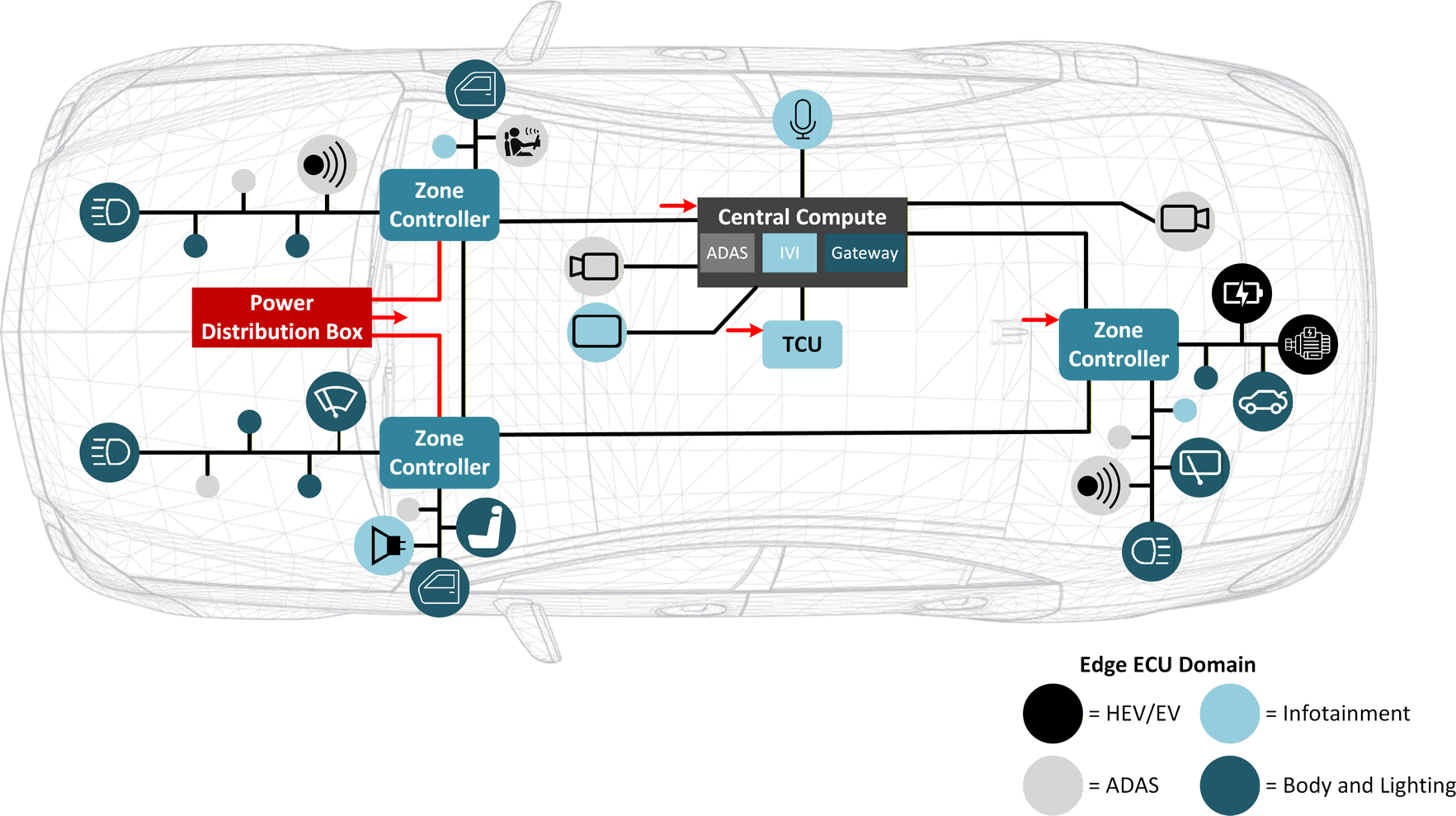

A zone architecture, in contrast to a domain architecture, organises communication, power distribution and load control by location rather than by function, as shown in Figure 1. A zonal control module behaves as a network data bridge between the vehicle’s computing system and local edge nodes such as smart sensors and ECUs. To reduce cabling in the vehicle, a zonal control module will also distribute power to different edge nodes (by implementing semiconductor smart fuse capabilities), handle low-level computing, and drive local loads such as motors and lighting.

Figure 1. Example of a zone architecture


Zonal control modules transfer data from various sensors and ECUs through an edge-node communication network and forward the combined sensor data to the central computing system through backbone communication. In the other direction, the zonal control modules receive data from the central computing system over the backbone and pass it on to various actuators through the edge-node communication network. This two-way communication between the central computing system and the zonal control modules requires a high-bandwidth and low-latency communication backbone in order to handle the large amount of data generated by functions such as multiple advanced driver assistance system (ADAS) sensors, vehicle motion control and adaptive driving beams.

Bandwidth requirements in a zone architecture

To understand the value in using Ethernet in vehicles, let’s break down Ethernet use by application. The newly defined Single-Pair Ethernet supports speeds from 10Mbps to 10Gbps, defined through Institute of Electrical and Electronics Engineers (IEEE) 802.3cg (10Mbps), IEEE 802.3bw (100Mbps), IEEE 802.3bp (1Gbps) and IEEE 802.3ch (10Gbps). All of these new Ethernet technologies work over a single-pair cable and can communicate at distances as far as 15m, which is long enough to cover the longest link in a vehicle. Ethernet can also enable the time synchronisation of sensor data using IEEE 802.1AS timestamping to achieve low latency.
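As a rough illustration of how these rate tiers might be applied, the sketch below picks the slowest Single-Pair Ethernet link that still covers a required data rate. The `pick_link` helper and the 50% headroom margin are assumptions made for the example, not part of any IEEE standard or TI guidance.

```python
# Single-Pair Ethernet line rates in Mbps with their IEEE 802.3 amendments.
SPE_LINKS = [
    (10, "IEEE 802.3cg"),
    (100, "IEEE 802.3bw"),
    (1_000, "IEEE 802.3bp"),
    (10_000, "IEEE 802.3ch"),
]

def pick_link(required_mbps, headroom=0.5):
    """Return the slowest link whose usable share covers the required rate.

    headroom is an assumed design margin: 0.5 means the design plans to
    use at most half of the nominal line rate.
    """
    for rate, standard in SPE_LINKS:
        if required_mbps <= rate * headroom:
            return rate, standard
    raise ValueError(f"{required_mbps} Mbps exceeds the fastest link listed")

print(pick_link(2))      # low-rate body traffic, e.g. a door module
print(pick_link(30))     # a few tens of Mbps, e.g. aggregated audio
print(pick_link(3_000))  # multi-gigabit sensor aggregation
```

Choosing the slowest adequate link reflects the cost and power trade-off in a zone architecture: higher-rate PHYs are reserved for links that actually carry aggregated sensor traffic.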

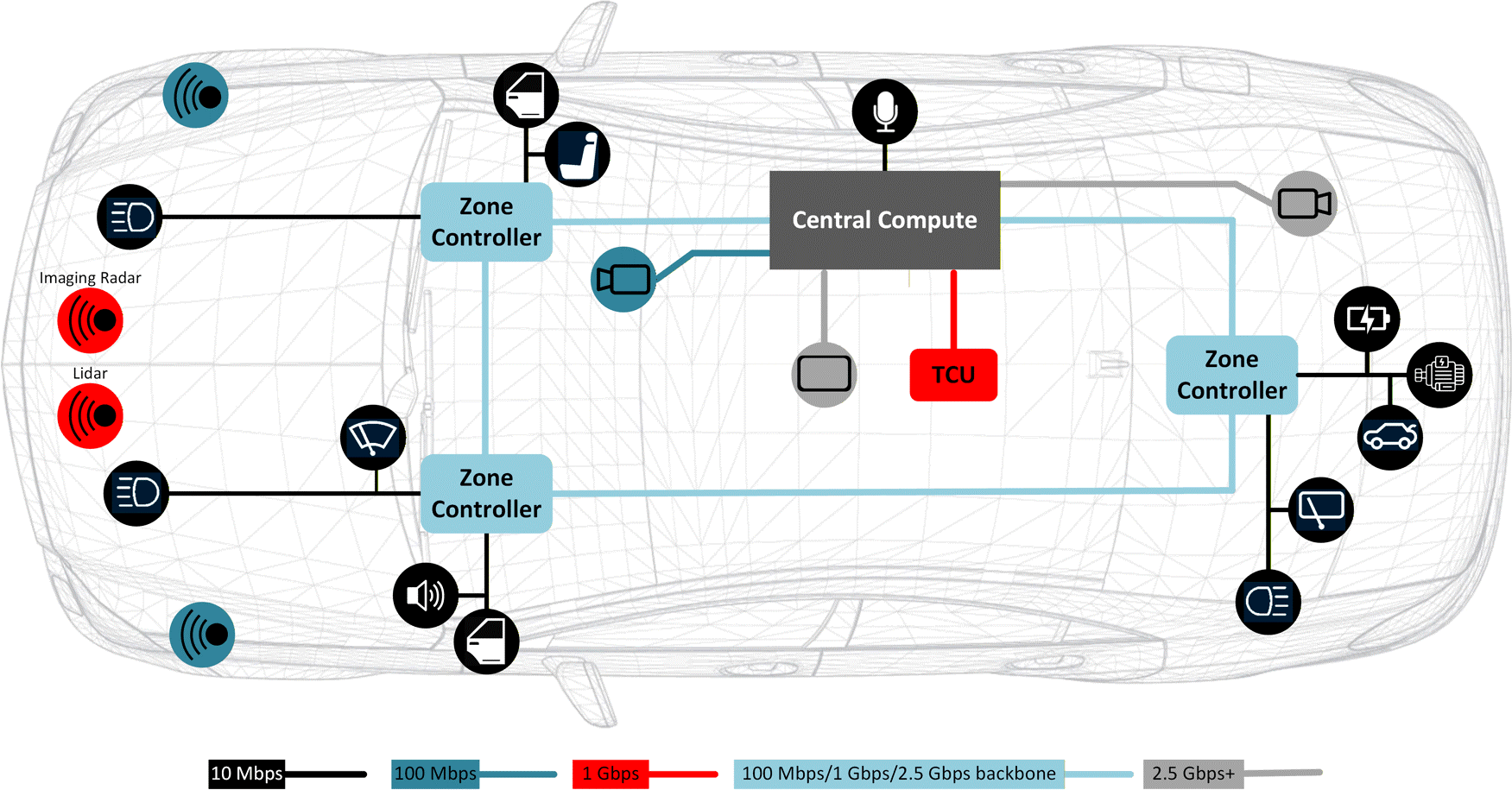

While Ethernet is capable of extremely fast speeds, these speeds are not necessary in every context. For example, communicating with the door control module or the heating, ventilation and air-conditioning system does not require a 100Mbps data rate. A 10Mbps Ethernet PHY, such as the DP83TD555J-Q1, or an alternative network protocol, such as Controller Area Network (CAN), is better for lower-speed and less bandwidth-intensive use cases, while reserving higher speeds for sending aggregated camera and autonomous driving sensor data from the zonal control modules to the central computing system. Figure 2 shows where to use different speeds of Ethernet in a zone architecture.

Figure 2. Ethernet in a zone architecture


Using Figure 2, let’s take a closer look at the communication speeds used for radar, lidar, camera and body applications. When the radar or lidar system-on-a-chip (SoC) processes the data, typically CAN, 10Mbps Ethernet or 100Mbps Ethernet communicates lidar or radar data to the zonal control module. When only first- or second-level data processing occurs, 100Mbps to 1Gbps Ethernet communicates radar and lidar data to the zonal module or central computer. Sending raw lidar or radar data to the central computing system for processing allows more information to be extracted through fusion of data from various sensors. The transmission of such a large amount of raw data requires a higher bandwidth, typically a serializer/deserializer (SerDes) protocol or Ethernet at 2.5Gbps or faster.
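The mapping from processing level to link type for radar and lidar can be written down as a small lookup. The sketch below only restates the options from the paragraph above; the `Processing` enum and the `link_options` helper are hypothetical names introduced for illustration.

```python
from enum import Enum

class Processing(Enum):
    """How much processing happens on the radar or lidar sensor itself."""
    FULL = "data processed on the sensor SoC"
    PARTIAL = "first- or second-level processing only"
    RAW = "raw data sent for central sensor fusion"

# Typical link choices per processing level, as described in the text.
LINK_OPTIONS = {
    Processing.FULL: ["CAN", "10Mbps Ethernet", "100Mbps Ethernet"],
    Processing.PARTIAL: ["100Mbps Ethernet", "1Gbps Ethernet"],
    Processing.RAW: ["SerDes", "2.5Gbps or faster Ethernet"],
}

def link_options(level):
    """Return the candidate links for a given on-sensor processing level."""
    return LINK_OPTIONS[level]

for level in Processing:
    print(f"{level.name:8s} -> {', '.join(link_options(level))}")
```

The less processing the sensor does locally, the more bandwidth the link to the zonal module or central computer has to provide.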

For cameras, SerDes such as Flat Panel Display (FPD)-Link is the most appropriate protocol when a level of increased ADAS data requires all of the raw data from the front camera for post-processing.

If it is possible to compress the data from the front camera and you don’t need this increased level of ADAS data, 100Mbps Ethernet is an alternative.

Body domain modules such as door handle sensors, window-lift control modules and side mirror control modules traditionally use the CAN and Local Interconnect Network (LIN) protocols to communicate, as neither requires a high bandwidth. While designers will continue to use CAN and LIN, the increased use of Ethernet in vehicles also creates a place for 10Mbps 10BASE-T1S multidrop Ethernet. Ethernet is traditionally a point-to-point topology, but 10BASE-T1S Ethernet is the first Ethernet standard enabling functionality over a bus topology.

Multigigabit Ethernet in a zone architecture

What is the potential evolution of the zone architecture? It begins with aggregating body domain data, incorporating power distribution, and centralising computing. Over time, zone architectures will start aggregating data from other domains such as ADAS and infotainment. The end goal is to incorporate all domains into the zone architecture. Regardless of which domain the data belongs to, the zonal control module and central computing system will still use the same backbone communication network to transfer data. Audio is a prime target to move into zone control modules, since it’s possible to transmit audio data over Ethernet with Audio Video Bridging standards.

Body domain functions typically require 10Mbps or less. But as ADAS or in-vehicle infotainment functions such as radar, lidar, audio and cameras become incorporated into the zone architecture, the speed and bandwidth requirements must increase and/or the Ethernet backbone topology may change from star to ring to accommodate the amount of safety-critical and time-sensitive sensor data.

Audio generates about 1.5Mbps per channel; a radar sensor typically generates 0.1Mbps to 15Mbps. Lidar generates 20Mbps to 100Mbps. Cameras generate the most: 500Mbps to 3.5Gbps. Today’s vehicles typically have four to six radar sensors, one to five lidar sensors, 12 to 20 audio speakers, 12 to 16 audio microphones and six to 12 cameras. Table 1 shows the range of data generated by each type.

Table 1. Data generated in a zone architecture

| Type | Data generated per sensor | Quantity of sensors | Low | Mid | High |
| --- | --- | --- | --- | --- | --- |
| Audio speaker | 1.5Mbps | 12 to 20 | 3.2Mbps | 24Mbps | 30Mbps |
| Audio microphone | 1.5Mbps | 12 to 16 | 3.2Mbps | 21Mbps | 24Mbps |
| Radar | 0.1 – 15Mbps | 4 to 6 | 0.4Mbps | 35Mbps | 90Mbps |
| Lidar | 20 – 100Mbps | 1 to 5 | 20Mbps | 100Mbps | 500Mbps |
| Camera | 500Mbps – 3.5Gbps | 6 to 12 | 3Gbps | 9Gbps | 42Gbps |
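The aggregate figures can be approximated by multiplying per-sensor rates by sensor counts. The sketch below uses only the rates and counts quoted in the text, and assumes the low bound is the lowest rate times the lowest count and the high bound is the highest rate times the highest count. That assumption reproduces the High column and most Low entries; the Low entries for audio do not follow this product, so they presumably rest on a different assumption. The `SENSORS` structure and `bounds` helper are illustrative names.

```python
# Per-sensor data rates (Mbps) and sensor counts quoted in the text.
SENSORS = {
    "Audio speaker":    {"rate": (1.5, 1.5),      "count": (12, 20)},
    "Audio microphone": {"rate": (1.5, 1.5),      "count": (12, 16)},
    "Radar":            {"rate": (0.1, 15.0),     "count": (4, 6)},
    "Lidar":            {"rate": (20.0, 100.0),   "count": (1, 5)},
    "Camera":           {"rate": (500.0, 3500.0), "count": (6, 12)},
}

def bounds(spec):
    """Lowest and highest aggregate rate in Mbps for one sensor type."""
    low = spec["rate"][0] * spec["count"][0]
    high = spec["rate"][1] * spec["count"][1]
    return low, high

total_low = total_high = 0.0
for name, spec in SENSORS.items():
    low, high = bounds(spec)
    total_low += low
    total_high += high
    print(f"{name:17s} {low:8.1f} to {high:8.1f} Mbps")

print(f"{'Total':17s} {total_low:8.1f} to {total_high:8.1f} Mbps")
```

Under these assumptions cameras dominate the total by roughly two orders of magnitude, which is consistent with the text's point that camera data drives the demand for multigigabit backbones.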

It is the total data being generated that is causing the push for 2.5Gbps, 5Gbps and 10Gbps Ethernet among original equipment manufacturers (OEMs). The zone architecture needs a backbone communication network capable of transmitting the enormous amount of data produced by ADAS sensors to the central computing system. Uncompressed camera data already goes beyond current Ethernet capabilities, and cameras continue increasing in resolution and pixel count. As vehicles continue toward autonomy, the number of sensors will increase. Thus, the bandwidth required to support increased camera resolution and sensors will grow correspondingly.

The Ethernet speeds that OEMs are requesting most likely differ because of the transition schedules for incorporating different functions into the zonal control module. Audio playback on interior speakers is one of the first cross-domain data types adopted for use on the Ethernet backbone. This is likely because audio generates comparatively little data: 20 audio speaker channels generate about 30Mbps. An existing 100Mbps or 1Gbps Ethernet backbone can easily accommodate the addition of audio playback data. Overall, the more high-level data functions in zonal control modules, the higher the bandwidth requirements.

Using Ethernet as the backbone for a zone architecture allows vehicles to transfer more data over the in-vehicle network when connecting to the internet or remote OEM servers. This enables subscription-based services and vehicle diagnostics through remotely performed firmware-over-the-air (FOTA) updates. FOTA updates allow for different hardware and software update cycles, which can be asynchronous as a result of the independence of sensors and actuators from the central computing node. A FOTA update can also push additional features and safety improvements, instead of waiting for a new model or having to bring the vehicle in to be worked on. Both the OEM and the customer benefit, as the OEM has control over updating the vehicle with additional features after launch, and the consumer is less inconvenienced by trips to a dealer to update firmware.

PHYs in a zone architecture

Ethernet requires the use of PHYs to transmit and receive high-speed data. Automotive Ethernet PHYs eliminate many of the concerns with Ethernet as the backbone of the wiring in vehicles, such as poor signal quality in such a volatile environment. Ethernet PHYs from Texas Instruments (TI) are capable of operating at a range of temperatures from –40°C to 125°C, in compliance with Automotive Electronics Council-Q100 Grade 1 standards.

Ethernet PHYs also have to pass Ethernet compliance standards, ensuring that they meet certain interoperability and reliability standards regarding electromagnetic compatibility and electromagnetic interference, as well as IEEE conformance as specified by Open Alliance TC1 and TC12 standards, to work in a vehicular environment. With advanced diagnostic features such as signal quality indication, time domain reflectometry and electrostatic discharge sensors, PHYs are capable of detecting when errors occur and can identify these faults and enable the host system to respond proactively. For example, in the event of electrostatic discharge (ESD), the PHY sends an interrupt signal to the SoC and Media Access Control to alert it of the event and then checks other parts in the system.

Ethernet PHYs can also wake up remote ECUs over the Single-Pair Ethernet cable using the Open Alliance TC10 specification’s wake and sleep technology, which eliminates the need for a separate wire to wake the ECUs from sleep. IEEE 802.1AE Media Access Control Security (MACsec) could also be an important technology to enable the authentication of networking ECUs and to encrypt/decrypt data to avoid cyberattacks, as cyberattacks represent the biggest threat to automotive networking.

Additional Ethernet PHYs include:

- TI’s DP83TC812-Q1, DP83TC815-Q1, and DP83TC814-Q1 100BASE-T1 PHYs have next-generation features suitable for luxury vehicles, while the smaller DP83TC813-Q1 100BASE-T1 PHY may be appealing in situations where printed circuit board space is at a premium. The DP83TG720-Q1 and DP83TG721-Q1 can connect zonal modules to data-intensive features such as the central computing system and telematics control unit, leaving headroom for the inclusion of additional features in later models without making intensive changes to the wiring harness. Combined, these PHYs open the door for more advanced and capable vehicles on the road.

- TI’s portfolio of Single-Pair Ethernet PHYs is designed to be footprint or pin-to-pin-compatible with both TI’s 100BASE-T1 and 1000BASE-T1. A single board design enables upgrades to the feature set or bandwidth in future developments with no hardware changes. This approach helps accelerate development cycles, meet the requirements of different OEMs, and reduce time to market, thus saving R&D costs.

- The DP83TD555J-Q1 10BASE-T1S Serial Peripheral Interface MAC PHY integrates seamlessly into existing Ethernet backbone networks, eliminating protocol conversion gateways and their associated latency and processing overhead when connecting traditional CAN/LIN edge nodes. Supporting Power over Data Line, the device delivers both power and 10Mbps data over a single twisted-pair cable, reducing cable weight and system cost. Built-in PHY Collision Avoidance provides deterministic scheduling with guaranteed transmit opportunities for each network node, ensuring predictable communication timing. The larger Ethernet frame payloads enable extraction of higher data volumes and more diverse data types from ECUs at the vehicle’s edge, facilitating advanced diagnostics and over-the-air updates while maintaining real-time performance.
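The deterministic scheduling described for the 10BASE-T1S device can be pictured as a round-robin cycle in which every node gets one transmit opportunity in turn. The Python sketch below is an illustration only: the function name `plca_cycle`, the node IDs and the queued frames are hypothetical, and real timing details such as beacons and timers are left out.

```python
from collections import deque

def plca_cycle(queues):
    """Run one round-robin cycle over nodes in ascending node-ID order.

    queues: dict mapping node ID -> deque of pending frames (hypothetical model).
    Returns the list of (node_id, frame) pairs sent during this cycle.
    """
    sent = []
    for node_id in sorted(queues):
        # Each node gets exactly one transmit opportunity per cycle.
        if queues[node_id]:
            sent.append((node_id, queues[node_id].popleft()))
        # A node with nothing queued simply yields its opportunity.
    return sent

# Hypothetical three-node segment; node 1 has nothing to send.
queues = {0: deque(["a0", "a1"]), 1: deque(), 2: deque(["c0"])}
first = plca_cycle(queues)
second = plca_cycle(queues)
print(first)
print(second)
```

Because a busy node can send only one frame per cycle in this model, node 2 is served in the first cycle even though node 0 still has traffic queued, which is the sense in which each node has a guaranteed opportunity.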

Advancements in automotive Ethernet technology will enable automakers to deliver more features and capabilities to new vehicles. A zone architecture implementing Ethernet will help accelerate the shift to software-defined vehicles by providing the data capacity that will support the next wave of autonomous features – leading to safer and smarter vehicles on the road.

The post How Ethernet accelerates the move to software-defined vehicles appeared first on ELE Times.

Intel Foundry Advances Chip Power Delivery with Next-Generation Capacitor Technology

Courtesy: Intel

Highlights

- Intel and Intel Foundry researchers demonstrated three promising metal-insulator-metal (MIM) materials delivering intrinsic capacitance density up to 98 femtofarads per square micrometre (fF/μm²), which is significantly higher than the 37 fF/μm² intrinsic capacitance density of the material option used in state-of-the-art technology for more efficient chip power delivery.

- Compatible with advanced integrated MIM structures, these technologies deliver leakage well below targets while maintaining stable performance for over 400,000 seconds at elevated temperature, enabling multi-generational improvements without increasing manufacturing complexity.

- These process technology advances aid in the delivery of high performance per watt for chips ranging from data centres to mobile devices, continuing manufacturing efficiency improvements without added fabrication complexity.

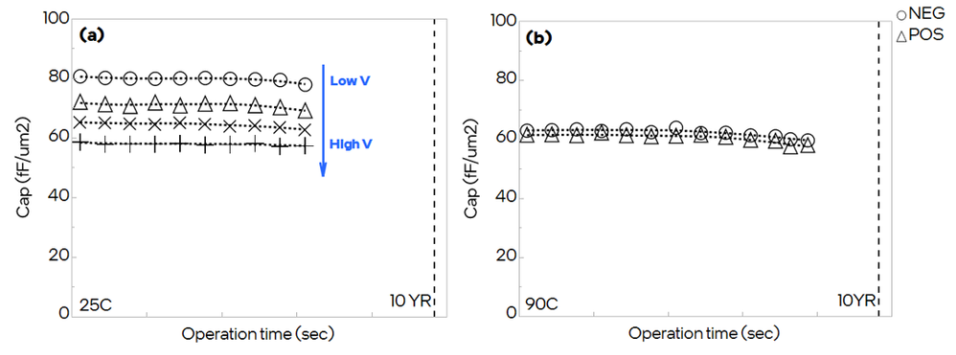

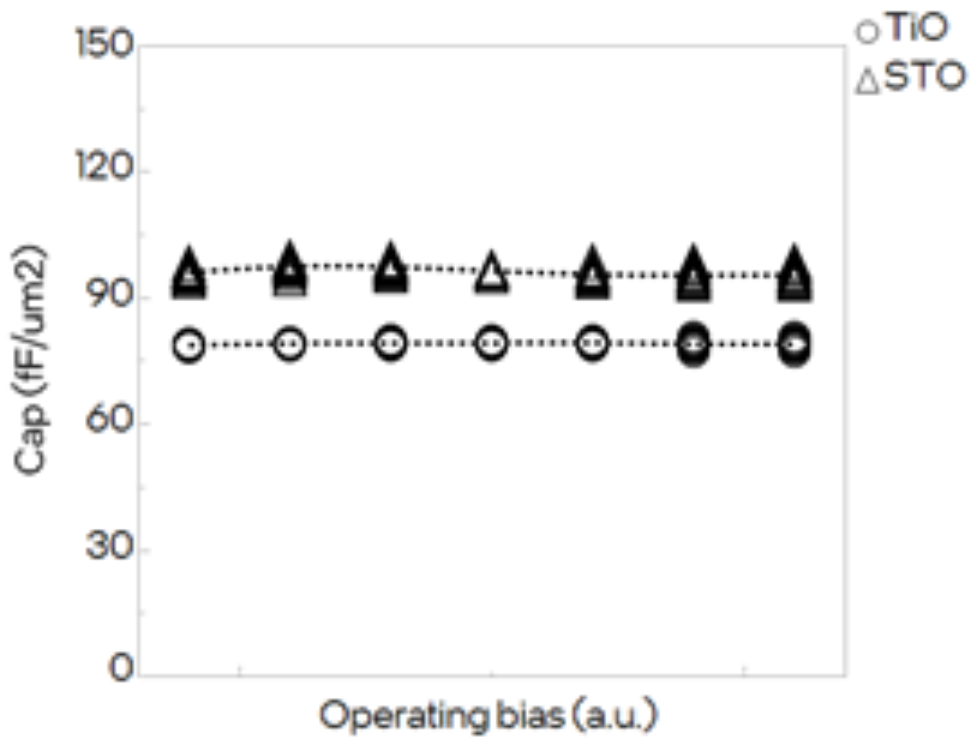

Researchers at Intel and Intel Foundry have demonstrated next-generation decoupling capacitor (DCAP) materials that deliver substantial performance improvements for power delivery in advanced computer chips. Presented at the 2025 IEEE International Electron Devices Meeting (IEDM), the breakthrough takes advantage of unique metal-insulator-metal material properties. Ferroelectric hafnium zirconium oxide (HZO) leverages field-dependent dielectric response to achieve 60 to 80 fF/μm², while titanium oxide (TiO) and strontium titanium oxide (STO) reach 80 and 98 fF/μm² through ultra-high dielectric constants — each offering exceptional reliability with minimal voltage dependency.¹ All three materials show negligible capacitance drift over 100,000 seconds, leakage currents much lower than requirements, and 10-year projected breakdown voltages exceeding specifications at 90 degrees Celsius.

These advances have immediate implications for the semiconductor industry and the broader technology sector. Data centres processing artificial intelligence (AI) workloads can maintain high performance per watt longer with higher MIM decap, completing workloads faster while reducing data centre energy consumption and operation costs. Mobile devices benefit from reliable high performance and faster transitions to lower power states, leading to better battery efficiency. High-performance computing (HPC) systems gain processing headroom from a stable supply voltage, enabling maximum frequency for longer durations.

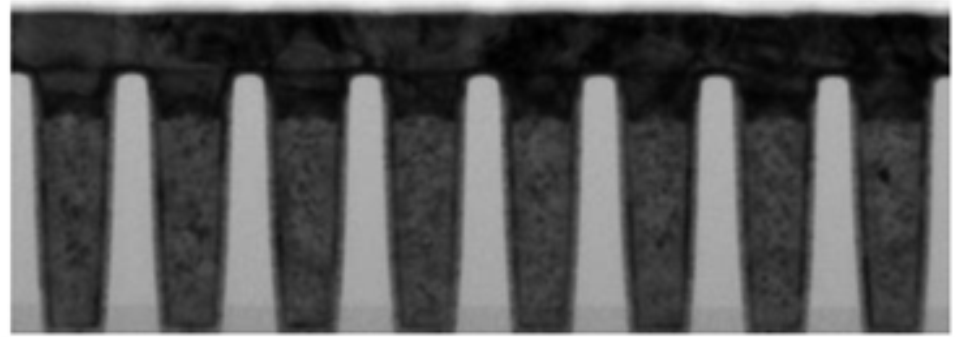

For chip manufacturers, these new capacitor materials offer a path to continue improving power delivery efficiency across multiple technology generations. The materials integrate seamlessly with existing backend manufacturing processes, enabling adoption without major retooling investments. Today’s advanced MIM capacitors often focus on architectural solutions similar to the state-of-the-art Omni MIM used in Intel 18A. Omni MIM has 397 fF/μm² capacitance due to its deep-trench and multi-plate structure (see top image). By developing novel oxide materials that integrate seamlessly with these types of structures, the industry can unlock capacitance densities that exceed today’s benchmarks. These technologies help maintain the economic viability of advancing semiconductor manufacturing while meeting the escalating power delivery demands of next-generation processors, accelerators, and systems of chips designs.

The Challenge of Power Delivery in Advanced Chips

As chips pack more transistors into smaller spaces, maintaining stable power delivery grows increasingly difficult. When billions of transistors switch simultaneously, voltage can drop momentarily — called voltage droop — causing processors to slow, make errors, or run at reduced speeds. Decoupling capacitors solve this issue by acting as electrical reservoirs that instantly supply current when needed and absorb excess when demand drops.
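The reservoir picture can be made concrete with the basic capacitor relation ΔV = I·Δt / C. The Python sketch below is a rough estimate under stated assumptions, not a power-delivery model: the capacitor area, load step and duration are hypothetical values chosen for illustration, and only the 37 and 98 fF/μm² densities come from the article.

```python
def decap_droop_mv(density_ff_per_um2, area_um2, current_a, duration_s):
    """Estimate droop in mV if the decap alone supplies a current step.

    Uses dV = I * dt / C with C = density * area.
    """
    capacitance_f = density_ff_per_um2 * 1e-15 * area_um2
    return current_a * duration_s / capacitance_f * 1e3

AREA_UM2 = 1e6      # hypothetical: 1 mm^2 of capacitor area
STEP_A = 1.0        # hypothetical: 1 A load step
DURATION_S = 1e-9   # hypothetical: 1 ns before the rest of the supply responds

for density in (37, 98):  # intrinsic densities quoted in the article, fF/um^2
    droop = decap_droop_mv(density, AREA_UM2, STEP_A, DURATION_S)
    print(f"{density} fF/um^2 -> {droop:.1f} mV")
```

With these assumed numbers the estimated droop falls from about 27 mV to about 10 mV, simply because the same area holds roughly 2.6 times more capacitance.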

Traditional approaches to increasing capacitance involve stacking multiple capacitor layers or etching deeper trenches to create more surface area. However, both significantly increase manufacturing complexity and cost. Material innovations offer an alternative by dramatically increasing the effective dielectric response, which is the material’s ability to store electrical charge. Finding materials with high effective dielectric constants that meet strict reliability requirements for years of operation at elevated temperatures represents a major materials science challenge.

Harnessing Ferroelectric Materials for Voltage-Responsive Capacitance

Ferroelectric hafnium zirconium oxide offers a unique property — the ability to store electrical charge changes with the applied electric field. Unlike conventional dielectrics, where this ability remains constant, ferroelectric materials contain microscopic regions called domains that orient themselves in response to electric fields, enabling different capacitance values at various operating voltages.

Figure 1. Transmission electron microscope image showing the deep-trench capacitor structure used to characterise the MIM stacks.

This type of testing requires careful attention to measurement methods. Under actual decoupling capacitor operation, where a constant bias voltage experiences small disturbances, the material shows remarkably stable capacitance independent of disturbance voltage, hold time, or number of pulses applied. The ferroelectric capacitors deliver 60 to 80 fF/μm² depending on operating voltage. Extensive reliability testing under various voltage levels, elevated temperatures of 90 degrees Celsius, and extended operation exceeding 400,000 seconds demonstrated negligible capacitance drift.

Figure 2. Capacitance measurements showing HZO’s stable, reliable performance under actual decoupling capacitor usage conditions.

Achieving Ultra-High Capacitance with Advanced Dielectric Materials

Titanium oxide and strontium titanium oxide provide even higher capacitance through extremely high dielectric constants with minimal voltage dependency. TiO achieves approximately 80 fF/μm², while STO reaches 98 fF/μm² — the highest demonstrated capacitance density.

Figure 3. Capacitance versus voltage measurements showing weak voltage dependency across the operating range of interest.

Achieving these performance levels requires precise control over material structure at the atomic scale. The team developed optimised processes, including templating layers that guide crystal growth, controlling film deposition, thermal annealing that promotes desired crystal structure, and interface engineering to minimise defects. X-ray diffraction confirmed the successful production of strong high-dielectric-constant phases in both materials.

For TiO, detailed analysis reveals leakage current follows the Poole-Frenkel mechanism, where electrons trapped at defects gain energy from the electric field and hop between trap sites. This explains why the material withstands high currents without breaking down, with stress-induced leakage behaviour providing self-limiting protection against premature failure.

Exceptional Reliability Enables Multi-Layer Integration

All three materials demonstrate reliability exceeding decoupling capacitor requirements. Leakage current remains below targets even at 90 degrees Celsius. This is achieved through improved dielectric properties rather than making insulators thinner, which would increase leakage.

Time-dependent dielectric breakdown testing stressed devices at higher voltages and elevated temperatures, using statistical models to extrapolate expected lifetime. For HZO, extrapolation predicts operation exceeding high-voltage target spec for 10 years at 90 degrees Celsius with nearly identical breakdown behaviour regardless of voltage polarity. TiO also shows an extrapolated 10-year operating voltage significantly exceeding high-voltage requirements.¹ STO passes reliability targets for lower-voltage applications, though with larger variation due to deposition tooling requiring further optimisation.

The symmetric breakdown behaviour of HZO enables cost-effective multi-layer stacking where multiple capacitor layers connect in series, multiplying total capacitance without requiring complex integration schemes.

Looking Forward: Enabling Next-Generation Computing

This research demonstrates a clear path for continuous decoupling capacitor improvements across multiple semiconductor generations without increasing integration complexity. By improving intrinsic material properties rather than relying on structural innovations like deeper trenches or more layers, these approaches maintain manufacturing feasibility while delivering substantial performance gains.

The three materials provide flexibility for different requirements. HZO offers a practical, near-term option with reliability and straightforward integration. TiO serves as a successor with higher capacitance and exceptional high-voltage capability. STO represents the ultimate capacitance density for applications prioritising maximum capacitance.

Future work will focus on optimising these materials for manufacturing integration, refining deposition processes to improve uniformity, and exploring multi-layer stacking strategies. As computing advances toward AI accelerators, high-performance processors, and energy-efficient data centres, these capacitor technologies will play a vital role in enabling stable power delivery for next-generation semiconductor devices.

The post Intel Foundry Advances Chip Power Delivery with Next-Generation Capacitor Technology appeared first on ELE Times.

Keysight Partners with 3dB Labs to Enable Interoperable Signal Monitoring and Analysis

Keysight Technologies announced the integration of its spectrum analysers and FieldFox handheld analysers with 3dB Labs’ Sceptre, expanding interoperability between signal monitoring hardware and analysis software. The collaboration enables teams to monitor, analyse, and record radio frequency (RF) activity across mixed receiver environments within a unified workflow.

Teams operating in dense and contested RF environments often rely on equipment from multiple vendors while needing a consistent way to observe and interpret spectrum activity. By enabling Keysight instruments to operate directly within the Sceptre software environment, the integration reduces friction between measurement hardware and signal analysis workflows, allowing organisations to maintain multi-vendor receiver architectures while gaining a common platform for signal monitoring and investigation.

Sceptre supports real-time and offline spectrum and temporal analysis and connects receivers deployed on stationary or mobile collection platforms. When paired with Keysight analysers, users can monitor and characterise signals as they appear, capture activity for later playback, and analyse unknown or interfering signals to determine their nature and behaviour. The platform also supports unattended and remote operation, making it suitable for long-duration monitoring, RF surveys, and post-event analysis where access may be constrained.

Together, Keysight and 3dB Labs aim to strengthen the broader signal analysis ecosystem by promoting interoperability between measurement hardware and software-defined receiver platforms. Beyond improving day-to-day operations, the collaboration supports the development of more adaptable signal workflows, encourages integration across traditionally siloed tools, and helps teams build expertise that evolves as signal environments and mission demands change.

Dave Evans, President, 3dB Labs, Inc., said: “We are excited to partner with Keysight and integrate with their FieldFox handheld analysers, bringing Sceptre’s powerful signal detection, identification, and analysis capabilities to the tactical edge. We designed Sceptre for maximum interoperability, enabling teams to move from signal data to decisive action without bottlenecks, and together, we’re delivering an integrated solution that turns advanced signal processing into immediate mission advantage.”

Eric Taylor, Vice President of Aerospace, Defence and Government Solutions, Keysight, said: “Operational environments demand interoperability. By integrating Keysight analysers into multi-vendor ecosystems, teams can spend less time managing tools and more time understanding the spectrum. This integration brings together two leaders in the industry, Keysight in RF measurement and 3dB Labs in signal intelligence, to deliver advanced signal monitoring and analysis capabilities for our customers.”

The post Keysight Partners with 3dB Labs to Enable Interoperable Signal Monitoring and Analysis appeared first on ELE Times.

India to Boost Local Chip-making with a 1 Trillion Rupees Funding

India is expected to unveil funding of more than a trillion rupees, nearly $10.8 billion, to fuel domestic chip-making. With 10 projects already approved, India aims to become a global manufacturing hub in the near future.

This new set of funds is expected to provide subsidies for chip designing projects, supply chain developments, and manufacturing equipment. Currently, the plan is under evaluation and may see the green light in a couple of months.

With the accelerated demand for chips driven by the rise of AI and electronics, the market is evolving at an exponential rate. Under the current leadership, India aims to position itself at the top to meet global demand.

With companies like Micron, TATA, and Foxconn already building India’s chip ecosystem, the country is expected to be close to industry leaders such as Taiwan and the US by 2032.

The post India to Boost Local Chip-making with a 1 Trillion Rupees Funding appeared first on ELE Times.

Qualitas Semiconductor Picks Anritsu’s Vector Network Analyzer for High-Speed Interconnect Signal Integrity Verification

Qualitas Semiconductor Co., Ltd., a leading developer specialising in PHY IP solutions for high-speed interconnects, has adopted Anritsu’s ShockLine 4-Port Performance Vector Network Analyzer (VNA) MS46524B to enhance signal integrity verification for its high-speed interface IP development. Qualitas has significantly improved the quality and reliability of its IP solutions by establishing a verification environment that enables highly accurate, repeatable signal-integrity evaluations across the entire system, including PHY IP.

Qualitas develops high-speed interface IP solutions, including SerDes PHY IP, PCI Express® PHY IP, UCIe interconnect solutions, and Ethernet PHY IP, and it collaborates with global customers across advanced semiconductor markets in fields such as AI, data centres, automotive, and mobile systems.

As semiconductor interface technologies continue to increase data transmission speeds, system-level verification that includes the characteristics of the entire interconnect channel, such as the PCB, package, and socket, has become increasingly important, rather than just the performance of the chips. In high-speed signal environments, factors such as transmission loss, reflection, and crosstalk affect signal integrity, making precise measurement-based verification environments essential.

To address these requirements, Qualitas has adopted Anritsu’s ShockLine MS46524B to analyse the characteristics of high-speed interconnect channels and quantitatively verify signal integrity, based on differential S-parameter analysis and time-domain reflectometry (TDR) measurements.

The ShockLine MS46524B provides high-frequency measurement stability, support for mixed probe and coaxial cable environments, and high-resolution TDR measurement capabilities, enabling precise analysis of subtle impedance variations occurring in the package and PCB structures. Through this approach, Qualitas has established a verification environment that is close to the conditions of real systems, enabling it to provide the reliability required in the PHY IP development process.

Anritsu highlights the importance of signal integrity verification solutions and measurement technologies that are required in next-generation interface technology environments, and it plans to support semiconductor and high-speed interface development companies in building more efficient verification environments.

The post Qualitas Semiconductor Picks Anritsu’s Vector Network Analyzer for High-Speed Interconnect Signal Integrity Verification appeared first on ELE Times.

The Tomorrow for AI and India’s edge advantage

Courtesy: Qualcomm

Artificial intelligence is entering its next chapter, one that reshapes not only how computing works, but how people experience technology in their daily lives. Intelligence is no longer just a feature, but is being built directly into devices and woven into systems and experiences so that it becomes ambient and always present.

In this next chapter, AI runs everywhere — across smartphones, PCs, wearables, cars, industrial machines, robots and connected infrastructure. These systems will understand context and the physical world around them and adjust in real time to our needs. Intelligence will operate quietly alongside us — working in the background, responding instantly, adapting continuously and ultimately expanding what’s possible in productivity, creativity and learning.

This marks a fundamental shift in how humans interact with technology. The interfaces we’ve relied on for decades — screens, apps, menus — will matter less as intelligence becomes more natural and intuitive. We won’t have to tell our devices what to do because they will understand our intent, anticipate what we want and act on our behalf. Some devices will increasingly see what we see, hear what we hear, understand what we read and write. In many cases, AI will feel less like a tool and more like a trusted assistant — always available, always learning and designed around us.

As agentic AI assistants become more common, they will become your personal companion in your home, the workplace and your car — everywhere you go. For example, in India, smart glasses are already being used to make digital payments using voice commands or by scanning a QR code. In your car, your AI assistant will not only help you find the fastest route but can also manage your errands, make recommendations or answer questions about places of interest.

In industries, edge AI boxes are being used to improve decision-making and operational efficiency, including monitoring and optimising production processes in a manufacturing facility or better managing inventory in a retail store.

Making these experiences real requires a new architecture — one where intelligence is distributed seamlessly across every computing device from cloud to edge. Training and deep reasoning will continue to scale in the cloud. At the same time, immediacy, perception and personalisation, as well as ambient and physical AI, will happen on devices — closer to people and things.

India’s size, diversity, economic growth and digital momentum make it one of the most important countries for AI’s next chapter. With hundreds of millions of connected users, a vibrant developer ecosystem, and deep expertise across engineering and software, India is not simply adopting AI — it is helping define how AI can work for the world.

In agriculture, AI can help enable precision farming and natural resource optimisation. Access to healthcare can be improved by on-device screening and diagnostics, which extend care into clinics, homes, and remote communities. AI will realise the vision of smart cities with intelligent traffic management, smart infrastructure, security, and more. And AI-enabled devices, such as PCs, smartphones, and wearables, will make education more personalised and support continuous, lifelong learning. These are not abstract ideas; they are practical pathways to broader participation in the AI economy.

To realise this future, democratizing access to AI is essential. That requires competitive and efficient data centre technology, powerful on-device intelligence, and advanced connectivity working together. It also requires an ecosystem approach that brings together industry, startups, academia, and policymakers to ensure innovation is trusted, accessible, and sustainable.

At Qualcomm, we’ve been building toward this future — advancing high-performance, power-efficient, and heterogeneous computing, AI, and wireless technologies that enable intelligence everywhere. But no single company can define AI’s next chapter alone. Progress will come through collaboration, from aligning technology with real-world needs, and from ensuring the benefits of AI extend beyond early adopters to entire societies.

With the right choices, India can help shape a future where intelligence empowers people, accelerates opportunity, and reaches every community — setting an example the world can follow.

The post The Tomorrow for AI and India’s edge advantage appeared first on ELE Times.

Posifa Technologies Introduces PVC4001-C MEMS Pirani Vacuum Transducer for Wide-Range Vacuum Measurement

Posifa Technologies has introduced its new PVC4001-C MEMS Pirani vacuum transducer, the latest device in the company’s PVC4000 series. Designed for cost-effective OEM integration, the transducer combines a MEMS thermal conduction sensor, measurement electronics, a microprocessor, and an onboard barometric pressure sensor in an ultra-compact PCB assembly with a connector-terminated wire harness.

Based on Posifa’s second-generation MEMS thermal conduction chip, the PVC4001-C operates on the principle that the thermal conductivity of gases is proportional to vacuum pressure. Its electronics and microprocessor amplify and digitise the sensor signal and provide output via an I²C interface. For applications requiring calibrated output, users can enter up to 10 pairs of calibration points, which are used by a built-in piecewise linearization algorithm.
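Piecewise linearization of this kind can be sketched as interpolation between stored calibration pairs. The Python example below is a generic illustration, not Posifa’s firmware: the function name `piecewise_linear`, the raw-count units and the three-point table are hypothetical, and the device’s actual algorithm is not described in the announcement.

```python
from bisect import bisect_right

def piecewise_linear(raw, points):
    """Map a raw reading to a calibrated value by linear interpolation.

    points: list of (raw, calibrated) pairs sorted by raw value, at least two.
    Readings outside the table are extrapolated from the nearest segment.
    """
    if len(points) < 2:
        raise ValueError("need at least two calibration points")
    xs = [p[0] for p in points]
    # Pick the segment whose left endpoint is at or below the reading,
    # clamped so that out-of-range readings reuse the first or last segment.
    i = min(max(bisect_right(xs, raw) - 1, 0), len(points) - 2)
    (x0, y0), (x1, y1) = points[i], points[i + 1]
    return y0 + (raw - x0) * (y1 - y0) / (x1 - x0)

# Hypothetical calibration table: (raw counts, pressure in Torr)
table = [(100, 0.001), (500, 0.1), (900, 10.0)]
print(piecewise_linear(300, table))
print(piecewise_linear(900, table))
```

More calibration pairs shorten each segment, so the straight-line approximation follows a curved sensor response more closely.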

The PVC4001-C is designed to deliver stable performance across changing operating conditions. A built-in temperature sensor supports a temperature compensation algorithm to offset changes in thermal conductivity caused by ambient temperature variation. In addition, a pulsed excitation scheme — in which the sensor is heated for about 100 ms and then turned off for one second — helps minimise drift due to self-heating in high vacuum, while also reducing power consumption for battery-powered instruments.

The device provides a measurement range from 0.001 Torr to 900 Torr (1.3 × 10⁻⁴ kPa to 120 kPa) with a response time of less than 200 ms. Because Pirani vacuum sensors typically lose resolution above 10 Torr, the PVC4001-C adds an onboard barometric pressure sensor that supports measurement from 10 Torr to 760 Torr with 5 % accuracy across that extended range. This combination makes the device especially well-suited for portable digital vacuum gauges and for leak detection in closed systems maintained under primary vacuum, including vacuum-insulated panels.
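The unit conversion and the two-sensor arrangement can be illustrated with a short Python sketch. The conversion factor follows from 760 Torr being one standard atmosphere (101.325 kPa); the hand-off function `select_reading` and its use of the Pirani reading to decide the crossover are assumptions made for illustration, since the announcement does not say how the device combines the two sensors.

```python
TORR_TO_KPA = 101.325 / 760  # 760 Torr is one standard atmosphere (101.325 kPa)

def torr_to_kpa(torr):
    """Convert a pressure from Torr to kilopascals."""
    return torr * TORR_TO_KPA

def select_reading(pirani_torr, baro_torr, crossover_torr=10.0):
    """Hypothetical hand-off: Pirani below the crossover, barometric at or above it."""
    return pirani_torr if pirani_torr < crossover_torr else baro_torr

print(f"{torr_to_kpa(0.001):.1e} kPa")  # low end of the stated range
print(f"{torr_to_kpa(900):.0f} kPa")    # high end of the stated range
print(select_reading(0.5, 3.0))         # Pirani region
print(select_reading(50.0, 48.0))       # barometric region
```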

Additional features of the PVC4001-C include low power consumption, resistance to contamination, and an operating temperature range of -25 °C to +85 °C.

The post Posifa Technologies Introduces PVC4001-C MEMS Pirani Vacuum Transducer for Wide-Range Vacuum Measurement appeared first on ELE Times.

STMicroelectronics to support AI infrastructure demand with high-volume production of its industry-leading silicon photonics platform

STMicroelectronics is now entering high-volume production for its state-of-the-art silicon photonics-based PIC100 platform used by hyperscalers for optical interconnect for data centres and AI clusters. The 800G and 1.6T PIC100 transceivers enable higher bandwidth, lower latency, and greater energy efficiency as AI workloads surge.

“Following the announcement of its new silicon photonics technology in February 2025, ST is now entering high-volume production for leading hyperscalers. The combination of our technology platform and the superior scale of our 300 mm manufacturing lines gives us a unique competitive advantage to support the AI infrastructure super-cycle,” said Fabio Gualandris, President, Quality, Manufacturing & Technology, STMicroelectronics. “Looking ahead, we are planning and executing on capacity expansions to enable more than quadrupling of production by 2027. This fast expansion is fully underpinned by customers’ long-term capacity reservation commitments.”

“The data centre pluggable optics market continues to expand strongly, reaching $15.5 billion in 2025. We expect the market to grow at a compound annual growth rate (CAGR) of 17% from 2025 through 2030, surpassing $34 billion by the end of the forecast period. In addition, co-packaged optics (CPO) will emerge as a rapidly growing segment, contributing more than $9 billion in revenue by 2030. Over the same period, the share of transceivers incorporating silicon photonics modulators is projected to increase from 43% in 2025 to 76% by 2030,” said Dr. Vladimir Kozlov, CEO and Chief Analyst at LightCounting. “ST’s leading silicon photonics platform, coupled with its aggressive capacity expansion plan, illustrates its capabilities to provide hyperscalers with secure, long-term supply, predictable quality, and manufacturing resilience.”
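The market figures in the quote can be cross-checked with the compound-growth formula, final = initial × (1 + rate)^years. The Python sketch below is a simple arithmetic check, assuming five annual compounding steps from 2025 to 2030; the function name `project` is illustrative.

```python
def project(value, cagr, years):
    """Compound a starting value at a fixed annual growth rate."""
    return value * (1 + cagr) ** years

# $15.5 billion in 2025 growing at 17% per year through 2030
print(round(project(15.5, 0.17, 5), 1))
```

The result is about $34 billion, in line with the quoted forecast once rounding of the growth rate is taken into account.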

Upcoming PIC100 TSV Platform Technology

AI infrastructure is experiencing unprecedented scaling, with cloud-optical interconnect performance becoming a critical bottleneck. Drawing on years of silicon photonics innovation, ST’s PIC100 platform provides state-of-the-art optical performance, including best-in-class silicon and silicon nitride waveguide losses (respectively as low as 0.4 and 0.5 dB/cm), advanced modulator and photodiode performance, as well as an innovative edge coupling technology.

In parallel with high-volume PIC100 production, ST is planning to introduce the next step in its silicon photonics technology roadmap: the PIC100 TSV, a new and unique platform that integrates through-silicon via (TSV) technology to further increase optical connectivity density, module integration, and system-level thermal efficiency. The PIC100 TSV platform is designed to support future generations of Near Packaged Optics (NPO) and co-packaged optics (CPO), aligning with hyperscalers’ long-term migration paths toward deeper optical–electronic integration for scale up.

The post STMicroelectronics to support AI infrastructure demand with high-volume production of its industry-leading silicon photonics platform appeared first on ELE Times.

Impact of AI on Computing and the Criticality of Testing

Courtesy: Teradyne

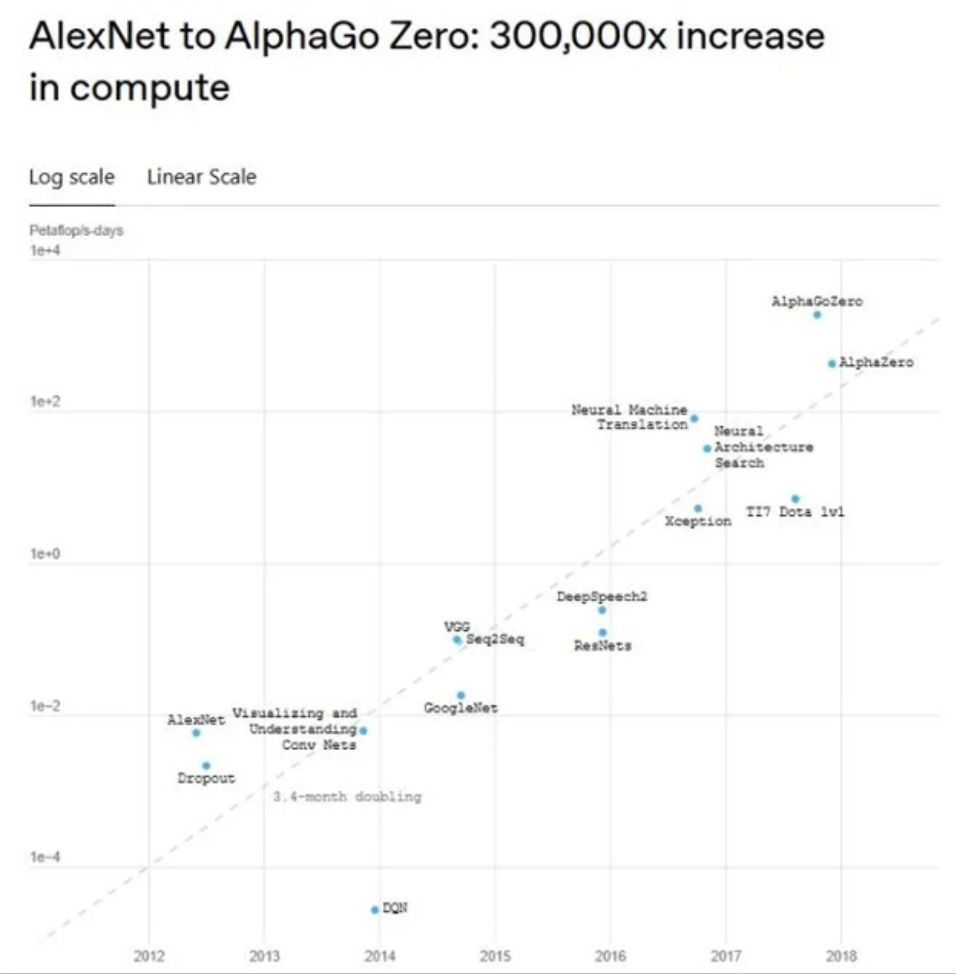

Artificial intelligence (AI) is transforming industries, enhancing our daily lives, and improving efficiency and decision-making, but its need for computing power is growing at an astonishing rate, doubling every three months (Figure 1). To maintain this pace, the semiconductor industry is moving beyond traditional chip development – it has entered the era of heterogeneous chiplets in advanced integrated packages.

(Figure 1: The Growth of Compute Requirements. Source: https://openai.com/index/ai-and-compute/)

The Rise of Chiplets

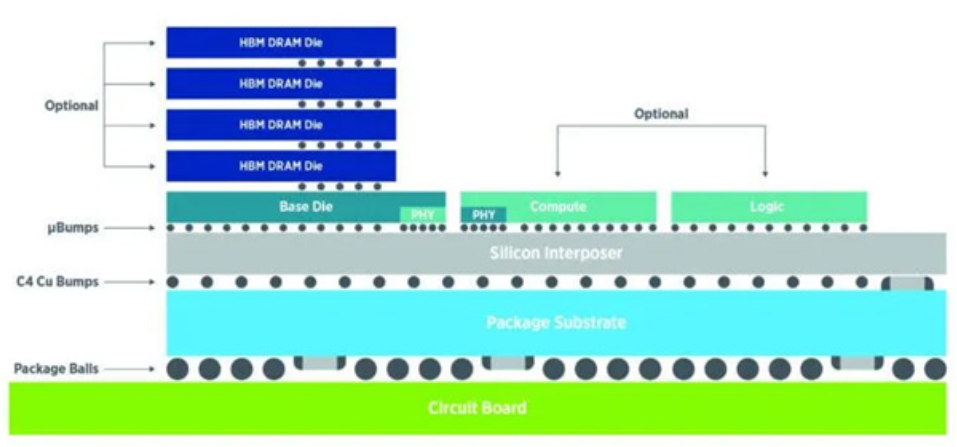

Chip companies like NVIDIA and AMD are rewriting the rules, designing architectures that combine multiple CPUs and GPUs in a single advanced package along with high bandwidth memory (HBM). AI workloads require rapid access to vast amounts of data, made possible by integrating HBMs. This approach, combining two, four, or more processing cores with HBM stacks, requires a complex, advanced packaging technique developed by TSMC called CoWoS® – Chip-on-Wafer-on-Substrate, typically referred to as 2.5D/3D packaging (Figure 2). These packages can exceed 100 mm x 100 mm in size and will require wafer interposer probers that can handle large CoW modules/stacks and also meet significantly larger thermal dissipation requirements, as discussed below.

(Figure 2: 2.5D/3D packaging architecture, Source: Teradyne)

To maintain peak performance, these heterogeneously integrated advanced packaging devices need proprietary high-speed interfaces to communicate efficiently. All these requirements contribute to an increasingly complex semiconductor landscape.

Testing Becomes More Complex in Step with Chip Advancements

As package complexity increases, so does the need for more deliberate test strategies. In the transition from monolithic dies to chiplets, long-established test methods are not always directly transferable because test IP is now distributed across multiple dies and, in some cases, across different design teams or companies. This fragmentation requires a clearer definition of what must be tested at each stage—die, bridge, interposer, substrate, and stack—and which standards or techniques apply to each scope.

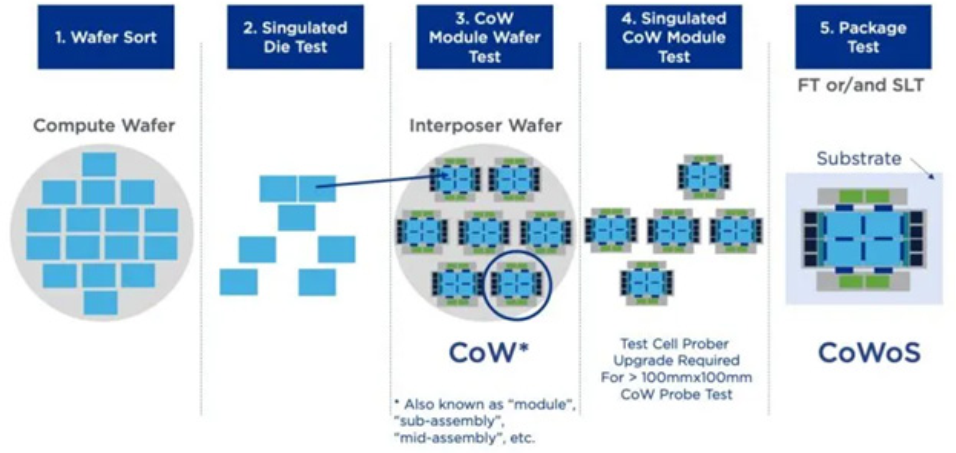

Packing multiple dies into a single chiplet-based system is a major advancement, but it raises a key challenge: verifying that every component functions correctly before final assembly. Multi-die packages require rigorous screening to avoid yield loss, and it is not enough to qualify only the dies. Interposers, substrates, bridges, and stacks also need to be validated, using test techniques appropriate to each layer. The industry is thus moving into “known-good-everything”, from known-good-die (KGD) to known-good-interposer (KGI), to known-good-CoW (KG-CoW), and so on. (Figure 3)

(Figure 3: Possible test insertions to ensure KGD and KG-CoW. Source: Teradyne)
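
The yield argument behind "known-good-everything" can be illustrated with a small sketch. It assumes that defects in each component are independent, so the package yield is the product of the component yields, and it models a test insertion as a screen that catches a fixed fraction of defective parts. The function names and all numeric values are hypothetical and chosen only for illustration; they are not taken from Teradyne or any real product.

```python
from math import prod
from typing import Sequence


def package_yield(component_yields: Sequence[float]) -> float:
    """Probability that every component in the package is good,
    assuming independent defects (a simplifying assumption)."""
    return prod(component_yields)


def screened_yield(raw_yield: float, test_coverage: float) -> float:
    """Fraction of good parts among those that pass a screen which
    catches `test_coverage` of the defective parts."""
    escaped_defects = (1.0 - raw_yield) * (1.0 - test_coverage)
    return raw_yield / (raw_yield + escaped_defects)


if __name__ == "__main__":
    # Hypothetical package: four dies at 95% yield, one interposer at 98%.
    raw = [0.95] * 4 + [0.98]
    print(f"unscreened package yield: {package_yield(raw):.3f}")

    # Same components after a screen with 99% defect coverage (hypothetical).
    screened = [screened_yield(y, 0.99) for y in raw]
    print(f"screened package yield:   {package_yield(screened):.3f}")
```

Because the yields multiply, a single unscreened layer such as the interposer can cap the yield of the whole package, which is why each layer gets its own test insertion.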

High-speed communication between chiplets introduces an additional layer of complexity. Dies must exchange data at extreme speeds – such as during GPU-to-HBM transfers – yet their physical and electrical interfaces vary by manufacturer. Open standards like Universal Chiplet Interconnect Express (UCIe) continue to evolve, but chiplet interfaces still differ widely. To support this diversity, test solutions increasingly need interface IP that behaves like the device’s native protocol to avoid electrical overstress or probe-related damage. Some suppliers now offer UCIe-compliant PHY and controller IP that device makers can integrate, enabling automated test equipment (ATE) platforms to test high-speed links safely and consistently.

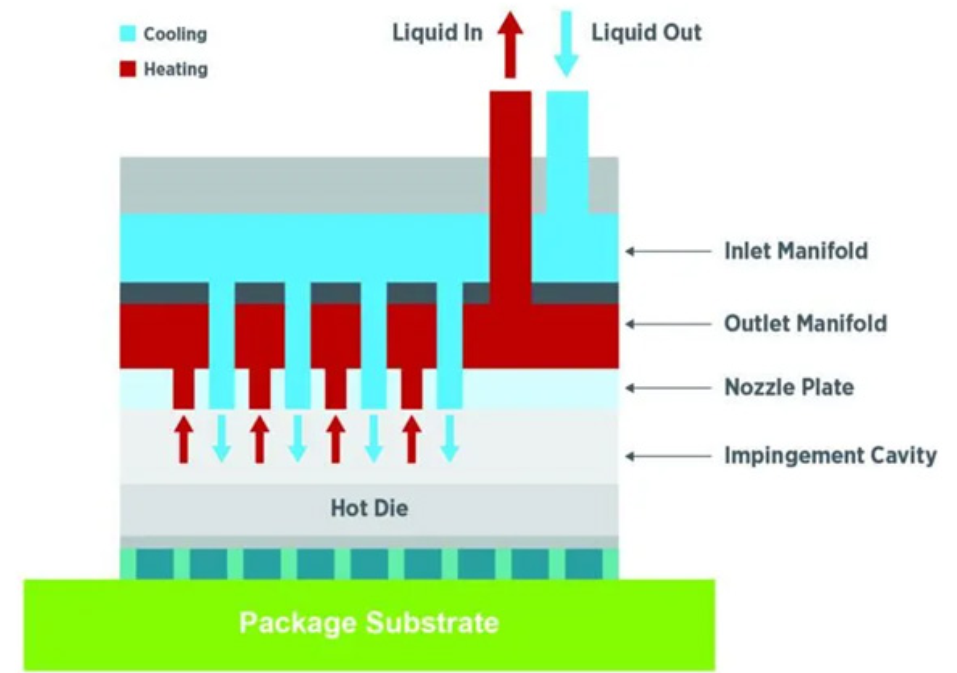

(Figure 4: Chip-level bare cooling, Source: Teradyne)

Manufacturers and test operators must also pay close attention to thermal management. More processing power means more heat to dissipate, requiring advanced cooling methods – perhaps even liquid cooling inside the package itself (Figure 4). More dies in the package mean more connections, and thus more tester resources. More transistors mean higher power supply current requirements, more power supply instruments, and a larger set of thermal challenges that demand innovative cooling solutions and advanced adaptive thermal control (ATC) strategies.

Lastly, manufacturing test operations must consider the interposer, a physical interface layer that electrically connects a chip to a substrate or other active component. For example, a multilayer or 2.5D package includes multiple dies on an interposer, which is itself assembled on top of a substrate. The interposer functions as a miniature silicon circuit board, routing signals between the dies mounted on it and the layers beneath. To ensure adequate yields for advanced packages, the interposer must be screened as rigorously as the dies, so that it qualifies as a known-good interposer (KGI).

The Future of AI and Semiconductor Testing

The industry increasingly acknowledges that semiconductor testing is an integral part of today’s chiplet and advanced packaging trend. As AI computing continues its unprecedented pace of evolution, it will rely on semiconductor testing to ensure that quality devices reach the market within the shortened timelines customers now demand. Semiconductor test will remain the unsung hero of AI-driven computing, steadily enabling the next wave of technological breakthroughs.

The post Impact of AI on Computing and the Criticality of Testing appeared first on ELE Times.

Advanced Aerospace Materials: Driving Hypersonic, Stealth and High-Efficiency Defence Systems

By Shreya Bansal, Sub-Editor

The aerospace and defence sector is undergoing a materials revolution driven by escalating performance demands that conventional alloys and composites can no longer meet. As military and commercial aircraft push toward hypersonic speeds, extended operational ranges, and stealth capabilities, traditional materials face fundamental limitations in thermal tolerance, weight-to-strength ratios, and electromagnetic properties. This article examines the current landscape of advanced materials, including ceramic matrix composites, high-entropy alloys, graphene-enhanced structures, and metamaterials that are displacing legacy materials in critical applications.

The transition isn’t merely about incremental improvement; it represents a paradigm shift in how aerospace components are designed and manufactured. Engineers are abandoning materials that have dominated the industry for decades in favour of solutions that enable previously impossible capabilities: jet engines operating at temperatures that would melt nickel superalloys, airframe structures that self-repair micro-damage, and radar-absorbing surfaces engineered at the molecular level. Through expert interviews with materials scientists and aerospace engineers, this article explores why this transition is happening now, what technical and economic factors are driving adoption, and which materials are positioned to define the next generation of aerospace and defence systems.

The Materials Revolution Redefining Aerospace and Defence

The aerospace and defence sector is undergoing a profound materials transformation. As aircraft and defence systems push toward hypersonic velocities, extended mission endurance, lower radar signatures, and improved fuel efficiency, conventional materials such as aluminium alloys, titanium, and nickel-based superalloys are reaching their performance ceilings.

The next generation of aerospace capability is no longer driven solely by aerodynamics or propulsion; it is increasingly defined by materials science. From ceramic matrix composites (CMCs) that withstand temperatures exceeding traditional alloy limits to high-entropy alloys (HEAs) engineered at the atomic scale, advanced materials are enabling systems once considered technologically unattainable.

Vern Benson, Northrop Grumman Technical Fellow (2026):

“Previous generations of aircraft were mostly made of metal, while newer versions are approximately 50% composite materials, with an even higher percentage for military aircraft… In order to compete with metals, we have to be highly automated in our manufacturing process.”

For decades, aerospace engineering relied on aluminium alloys, titanium, and nickel-based superalloys as the backbone of structural and propulsion systems. These materials delivered an optimal balance of strength, weight, corrosion resistance, and manufacturability for subsonic and supersonic flight regimes. However, modern mission profiles, ranging from hypersonic vehicles and advanced fighter aircraft to long-endurance unmanned systems, have exposed the inherent limitations of these conventional materials. Aluminium alloys lose strength rapidly at elevated temperatures, titanium offers strength but carries weight and cost penalties that become prohibitive at scale, and nickel superalloys are approaching their thermal operating thresholds in next-generation turbine engines. As propulsion systems push for higher combustion temperatures to improve efficiency, and as airframes demand lighter structures to extend range and payload capacity, traditional materials no longer provide sufficient performance margins. These realities have shifted material selection from being a secondary design consideration to a primary performance enabler in aerospace and defence programs. Conventional materials, while proven and reliable, were engineered for a different era of performance requirements.

Baba Kalyani, Chairman & MD, Bharat Forge (2025):

“At a time of heightened geopolitical and supply-chain uncertainty… the emphasis is firmly on upgrading platforms, systems and technologies… we must emerge as an ‘Innovation driven Economy’ that manufactures and exports best-in-class products.”

Hypersonic systems and next-generation combat aircraft demand materials that can endure sustained extreme heat while maintaining structural integrity. The tipping point for abandoning traditional materials often occurs during thermal modelling or fatigue simulations, where safety margins erode under mission stress scenarios.

Engineers are increasingly identifying material limitations during early-stage digital twin simulations, triggering a search for advanced alternatives before physical prototyping even begins.

Ceramic Matrix Composites and High-Entropy Alloys: From Research to Reality

Advanced materials such as ceramic matrix composites (CMCs) and high-entropy alloys (HEAs) are redefining what aerospace components can endure. CMCs, for example, can withstand temperatures hundreds of degrees higher than conventional superalloys while weighing significantly less. Their integration into turbine shrouds and combustor liners has enabled engines developed by companies such as GE Aerospace to operate at higher efficiencies while reducing cooling requirements. Similarly, HEAs, engineered with multiple principal elements rather than one dominant base metal, exhibit exceptional strength, oxidation resistance, and thermal stability. These materials are transitioning from laboratory experimentation to production-grade deployment, driven by advances in additive manufacturing and precision powder metallurgy. What was once considered experimental is now becoming operational, marking a fundamental shift in aerospace material qualification and deployment timelines.

Five years ago, advanced materials comprised a niche percentage of aerospace structural systems. Today, in next-generation engines and airframes, they are becoming integral rather than experimental.

Lifecycle Economics: Justifying Advanced Material Investment

While advanced materials often command higher upfront costs, their value becomes evident when evaluated over the full lifecycle of an aerospace platform. Lighter airframes reduce fuel consumption, directly lowering operational expenditures and emissions. Higher-temperature engine materials increase thermodynamic efficiency and reduce maintenance frequency. Extended component durability minimises downtime and replacement cycles, factors critical for both commercial airlines and military operators. Leaders at organisations such as RTX Corporation have emphasised that procurement decisions are increasingly based on total ownership cost rather than acquisition price alone. Moreover, as production volumes scale and supply chains mature, the cost gap between conventional and advanced materials continues to narrow. When assessed across decades of operational service, advanced materials frequently offer compelling economic advantages despite their initial premium.

Fuel savings, extended maintenance intervals, and enhanced durability can offset initial procurement premiums.
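
The total-ownership-cost reasoning above can be sketched as a simple break-even calculation. The class and function names below are illustrative, the cost figures are hypothetical placeholders in arbitrary units, and the model ignores discounting and financing, so it should be read as a rough illustration rather than a procurement method.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MaterialOption:
    name: str
    acquisition_cost: float       # upfront procurement cost
    annual_operating_cost: float  # fuel plus maintenance per year

    def total_ownership_cost(self, years: int) -> float:
        """Undiscounted cost of buying and operating for `years`."""
        return self.acquisition_cost + self.annual_operating_cost * years


def break_even_years(conventional: MaterialOption,
                     advanced: MaterialOption) -> Optional[float]:
    """Years until the advanced option's savings repay its premium.
    Returns None if the advanced option never saves money per year."""
    premium = advanced.acquisition_cost - conventional.acquisition_cost
    annual_saving = (conventional.annual_operating_cost
                     - advanced.annual_operating_cost)
    if annual_saving <= 0:
        return None
    return max(premium, 0.0) / annual_saving


if __name__ == "__main__":
    # Hypothetical values in arbitrary cost units.
    conventional = MaterialOption("conventional alloy", 100.0, 20.0)
    advanced = MaterialOption("advanced composite", 160.0, 14.0)
    print("break-even (years):", break_even_years(conventional, advanced))
    for option in (conventional, advanced):
        print(option.name, "25-year cost:", option.total_ownership_cost(25))
```

With these placeholder inputs the premium is recovered well within a multi-decade service life, which is the pattern the lifecycle argument relies on; real programmes would also need to account for discount rates and supply risk.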

Yet supply chain maturity remains a gating factor. Dependency on rare earth elements, specialised ceramic fibres, or advanced powder metallurgy inputs introduces geopolitical and availability risks.

Government R&D partnerships, particularly in the U.S., Europe, and Asia, have played a critical role in de-risking early-stage adoption by subsidising demonstration programs and pilot manufacturing lines.

Manufacturing Evolution: Scaling Advanced Materials Safely

The adoption of advanced materials requires a parallel transformation in manufacturing methodologies and quality assurance frameworks. Unlike traditional metal alloys that rely on well-established forging and casting processes, materials such as CMCs and ultra-high-temperature ceramics demand specialised fabrication techniques, including fibre weaving, matrix infiltration, and controlled sintering. Additive manufacturing has further expanded possibilities, enabling complex geometries that were previously impossible to machine. However, these innovations introduce new challenges in inspection, certification, and scalability. Companies like Boeing are investing heavily in digital twins, advanced non-destructive evaluation methods, and automated production systems to ensure reliability and repeatability. Successfully integrating advanced materials into flight-ready systems requires not only material innovation but also synchronised advances in manufacturing science, workforce training, and regulatory certification standards.

Dr. G. Satheesh Reddy, Former Chairman, DRDO (2025):

“While precision manufacturing of the stealth airframe is critical, the speciality materials, including RAM (Radar Absorbent Material), have already been developed… Manufacturing the aircraft itself won’t be a challenge if we work entirely from the provided designs and material lists with disciplined scaling.”

CMC production, for instance, requires precision fibre weaving and matrix infiltration processes not used in conventional metallurgy. Quality assurance shifts from visual inspection and ultrasonic testing to advanced microstructural evaluation.

Organisations must also retrain engineers and technicians in new fabrication techniques, creating workforce transition challenges alongside technological ones.

Strategic and Geopolitical Implications of Materials Leadership

In aerospace and defence, materials innovation is no longer purely a technical matter; it is a strategic asset. Advanced composites enable stealth characteristics through radar absorption and electromagnetic manipulation. Ultra-high-temperature ceramics support hypersonic weapon systems capable of withstanding extreme aerodynamic heating. Lightweight structures extend operational reach and payload flexibility, enhancing mission effectiveness. As global competition intensifies, control over critical materials, precursor chemicals, and advanced manufacturing capabilities has become a national security priority. Organisations such as Airbus and major U.S. defence contractors increasingly view materials science as a pillar of competitive differentiation. Nations investing heavily in advanced material research are positioning themselves to define the performance boundaries of next-generation aircraft, spacecraft, and defence platforms.