ELE Times

Anritsu Unveils Visionary 6G Solutions at MWC 2026

ANRITSU CORPORATION showcases next-generation wireless solutions at MWC 2026 in Barcelona (Hall 5 Stand D41). The company’s portfolio includes software-centric tools for early 6G standardisation, pioneering 6G measurement and AI-powered test solution, unified RF Multiband and NTN Validation Platform, Field Simulation Test for digital twin development, cloud-based automotive validation for ADAS and SDV, sustainable IoT power consumption evaluation, and intelligent assurance for mobile, fixed, and private infrastructures. Together, these innovations confirm Anritsu’s vision to deliver trustworthy, sustainable, and high-performance connectivity, helping operators, manufacturers, and industry verticals unlock new value as networks evolve beyond intelligence.

As a global leader in communications test and measurement, Anritsu continues to empower innovators with tools that support the evolving demands of connectivity, automation, and network intelligence.

6G Test Platform for Early 6G Standardisation and Validation

Anritsu’s Virtual Signalling Tester is a software-based signalling tester for advanced 6G validation. The Virtual ST-based solution supports L1/physical-layer, protocol, and application tests, and can test at the MAC layer and over the DIQ and RF interfaces (with SDR). It is equipped with an arbitrary waveform output function, which is being considered for 6G, making it highly useful for early validation during the 6G standardisation phase.

Pioneering 6G Measurement and AI-Driven Test Solution

Anritsu demonstrates a groundbreaking 6G measurement solution, designed to redefine wireless testing with AI at the core of the workflow. This next-generation solution harnesses advanced data management and AI-powered analytics to simplify complex test processes, reduce engineering workload, and accelerate development cycles. AI-assisted test sequence generation improves accuracy and optimises resource usage by learning from historical test patterns and real-world performance data. This intelligent approach ensures customers can meet the rapidly evolving demands of 6G technology, digital automation, and scalable network intelligence.

Unified 6G Multiband and NTN Validation Platform

The MT8000A Radio Communication Test Station now integrates support for the Upper Mid-Band (up to 16 GHz), enabling comprehensive RF front-end testing across FR1, FR2, and FR3 bands within a single modular platform. This unified architecture streamlines validation workflows for 6G devices, allowing simultaneous multi-band characterisation, inter-band handover testing, and advanced signal integrity analysis. In addition, the platform introduces next-generation NTN (Non-Terrestrial Network) measurement capabilities, including Direct-to-Cell and NR-NTN protocols delivered as a software upgrade. Engineers can leverage real-time emulation of satellite and aerial link conditions, protocol stack verification, and seamless integration with existing test automation environments. These enhancements empower engineers to efficiently validate NTN features, optimise RF performance, and maintain a competitive edge as wireless technologies evolve toward 6G.

Reproducing Real Networks: FST for Digital Twin Development

Anritsu demonstrates its unique Field Simulation Test (FST) solution, designed to capture real-world radio environments and accurately reproduce complex network propagation conditions in the laboratory. This innovative approach allows engineers to replicate issues observed in live networks and verify propagation scenarios. Moreover, the collection of propagation data supports the development of Digital Twin environments for research into next-generation wireless technologies, such as ISAC and CSI compression.

Future-Ready Automotive Testing: Cloud-Based Validation for Connected SDV Use Cases

Anritsu, in collaboration with Valeo, is demonstrating a Virtualised Automotive Testing Solution designed to accelerate software development and reduce testing costs for connected and autonomous vehicles. By integrating Anritsu’s virtual connectivity solution for 5G and C-V2X connectivity with Valeo’s virtualised hardware and ECU simulation platform, this joint solution enables comprehensive validation of Connected SDV functions, eliminating the need for physical vehicles or test tracks. The approach delivers faster time-to-market, improved safety, and global scalability through cloud integration, transforming automotive testing into a cost-effective, future-ready process.

Power Consumption Testing for Smarter, Sustainable IoT Devices

As industries accelerate toward smarter, more connected solutions, the demand for low-power, sustainable devices is reshaping the landscape of IoT technology. Anritsu introduces a cutting-edge power consumption test environment, empowering engineers to evaluate sensors, wearables, and smart home systems under real-world operating conditions. This real-world approach provides actionable insights into energy usage, battery life, and optimisation potential, enabling precise measurement and analysis that drive smarter design choices and longer-lasting products. Leveraging the advanced capabilities of the Anritsu MT8000A platform, Qoitech’s Otii power measurement suite, and SmartViser’s expertise in intelligent test automation and orchestration, this solution sets a new benchmark for energy efficiency in IoT device development.

From Complexity to Clarity to Confidence. Applied AI for Autonomous Networks

Anritsu’s Service Assurance platform is a unified solution that transforms network complexity into a competitive advantage. By embedding intelligence directly into the service assurance workflow, Anritsu allows operators to surface the signals that matter most. Data from across the network is correlated, converting fragmented insights into actionable guidance that engineers and operations teams can trust.

This continuous AI-driven understanding of service health accelerates decision-making and forms the foundation for autonomous network transformation. The result is a clear, unified operational picture that empowers teams to act with confidence and deliver superior customer experiences.

Across our AI-powered assurance portfolio, Anritsu’s purpose-built intelligence delivers measurable business outcomes: lower costs, higher satisfaction, and faster resolution.

The post Anritsu Unveils Visionary 6G Solutions at MWC 2026 appeared first on ELE Times.

PCB Duty Cuts to Manufacturing Zones: Top Industry Recommendations for Budget 2026

As the nation gears up for the Union Budget 2026, slated to be presented in Parliament on February 1, electronics industry associations are stepping up efforts to push India’s electronics manufacturing ecosystem to its next phase of growth.

Among the key recommendations, the Electronic Industries Association of India (ELCINA) has proposed the establishment of 10 world-class, product-specific Electronics Manufacturing Zones (EMCs). The association has urged the government to upgrade the existing EMC 1.0 and EMC 2.0 cluster models to globally accepted infrastructure standards. According to ELCINA, such an approach would help ensure regional balance, improve local facilitation, and enhance India’s competitiveness and export potential.

Strengthening the manufacturing value chain further, the India Cellular & Electronics Association (ICEA) has recommended a reduction in customs duty on microphone, receiver, and speaker assemblies for mobile phones from the current 15% to 10%. The association believes that this duty rationalisation would create cumulative cost advantages, improve global competitiveness, and encourage additional investments in domestic component manufacturing.

ICEA has also suggested reducing duties on Printed Circuit Board Assemblies (PCBAs) and Flexible PCB Assemblies (FPCAs) from 15% to 10%, a move aimed at supporting localisation and scale in electronics production.

Testing & Certification

Standards play a major role in any industry, whether for exports or inbound use; without certification, no product can reach the market. Recognising that certification is central to product development, ELCINA recommends introducing a Testing & Certification Support Scheme to provide financial support or reimbursement of testing and certification charges to MSMEs.

To make these services accessible to small entities with minimal investment, the body also recommends establishing regional accredited testing centres in collaboration with private labs, industry associations, and technical institutions, in line with BIS and other international standards. This would address one of the essentials for strengthening the domestic manufacturing and R&D ecosystem.

Investment Fund for SMEs

As the Union Budget 2026 approaches, ELCINA has also highlighted the long-standing challenge of limited access to low-cost finance for SMEs in the electronics system design and manufacturing (ESDM) sector, noting that funding constraints continue to hamper their ability to scale and invest in advanced technologies. To address this, the association has recommended the creation of a dedicated Technology Acquisition Fund to support technology transfer and licensing, enabling Indian firms to move up the value chain and transition towards a product-led ecosystem.

ELCINA has also proposed a professionally managed, government-backed venture fund to support high-value-added manufacturing in electronic components, PCBs, and modules, along with targeted tax incentives, including investment- and dividend-stage exemptions for at least five years, to attract private equity and high-net-worth investors and help build globally competitive Indian champions.

Classification of Displays

In the same vein, the India Cellular and Electronics Association (ICEA) has raised concerns over the lack of clarity in the customs classification of display assemblies used across automobiles, medical devices, industrial electronics, and other applications. Although these displays are technologically identical to flat panel display modules used in mobile phones and televisions, they are often classified under different HSN codes based on end-use, resulting in inconsistent customs treatment and operational uncertainty across field formations.

To address this, ICEA has recommended uniform classification of all display assemblies under HSN 8524, regardless of application, a step it says would ensure global alignment, reduce classification disputes, and enable smoother integration of display manufacturing across product segments as domestic capacity scales up under the Electronics Components and Manufacturing Scheme (ECMS).

In conclusion, the industry’s pre-Budget recommendations point to a clear priority: strengthening India’s electronics manufacturing depth through targeted policy, fiscal, and regulatory interventions. With focused action on infrastructure, duties, finance, and classification clarity, Budget 2026 has the opportunity to accelerate India’s shift from assembly-led growth to globally competitive electronics manufacturing.


CEA-Leti Advances Silicon-Integrated Quantum Cascade Lasers for Mid-Infrared Photonics

CEA-Leti presented new research at SPIE Photonics West highlighting major progress in the integration of quantum cascade lasers (QCLs) with silicon photonic platforms for mid-infrared (MIR) applications.

The paper, titled “Advanced Architectures for Hybrid III-V/Silicon Quantum Cascade Lasers: Toward Integrated Mid-Infrared Photonic Platforms,” compares three complementary hybrid laser architectures that collectively advance the practicality, flexibility, and scalability of MIR photonics.

Toward ‘Smaller, More Robust, and More Manufacturable MIR Systems’

Mid-infrared light plays a critical role in technologies such as gas sensing, chemical spectroscopy, biomedical diagnostics, and security, because many molecules exhibit strong absorption signatures in this spectral region. Despite the technology’s importance, MIR photonic systems remain large, costly, and difficult to manufacture at scale. Integrating MIR light sources directly onto silicon photonic platforms offers a path toward smaller, more robust, and more manufacturable systems—bringing mid-infrared photonics closer to the level of integration in the near-infrared.

Three Architectures, Three Integration Strategies

In its Photonics West presentation, CEA-Leti demonstrated and compared three distinct hybrid III-V/silicon QCL architectures, each addressing a different integration challenge:

Hybrid Distributed Feedback QCL on Silicon-on-Nothing-on-Insulator with Adiabatic Coupling

- This approach enables robust single-mode emission around 4.3 µm with efficient optical power transfer from the III-V active region into silicon waveguides. High-index-contrast silicon photonics provides precise feedback and light routing, making this architecture well-suited for scalable photonic integrated circuits targeting spectroscopy and chemical sensing.

Hybrid QCL with an External Silicon Distributed Bragg Reflector Cavity

- In this configuration, optical gain and optical feedback are decoupled: the III-V material provides amplification, while wavelength selection and feedback are implemented in silicon using distributed Bragg reflector (DBR) cavities. This separation offers enhanced design flexibility and opens a clear path toward tunable and multifunctional MIR sources for advanced spectroscopic and sensing systems.

Ultra-Compact QCL Micro-Sources Based on Photonic Crystals & Micro-Rings

- Miniature light sources in these devices achieve footprints below 100 µm² by leveraging strong optical confinement and resonant effects. The resulting extreme miniaturization enables dense on-chip integration and supports new system architectures where size, power consumption, and integration density are critical.
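For the grating-based architectures above (the distributed feedback and distributed Bragg reflector designs), wavelength selection follows the standard Bragg condition, m·λ = 2·n_eff·Λ, where Λ is the grating period. The short sketch below only illustrates that relation for the 4.3 µm emission wavelength mentioned in the article. The effective index of 3.0 and the function name are hypothetical values chosen for illustration, not figures from CEA-Leti's paper.

```python
def bragg_period_um(wavelength_um, n_eff, order=1):
    """Return the grating period (in µm) that satisfies the Bragg
    condition order * wavelength = 2 * n_eff * period."""
    return order * wavelength_um / (2.0 * n_eff)


# Assumed values: 4.3 µm target wavelength (from the article) and a
# hypothetical effective index of 3.0 for the silicon waveguide mode.
period = bragg_period_um(4.3, n_eff=3.0)
print(f"First-order grating period: {period:.3f} µm")
```

With these assumed numbers the first-order period comes out a little above 0.7 µm; a real design would use the simulated effective index of the actual waveguide.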

From Passive Platform to Active Host

Collectively, the results show that silicon photonics can play an active role in mid-infrared laser systems. By combining adiabatic optical coupling, silicon-based feedback and cavity engineering, and ultra-compact laser concepts, CEA-Leti establishes several viable integration pathways rather than a single, one-size-fits-all solution. The work highlights how different architectures trade off stability, flexibility, and footprint, providing designers with a practical toolkit for MIR photonic systems.

“By combining quantum cascade lasers with silicon photonics, we are bringing mid-infrared sources closer to the level of integration and scalability that silicon platforms have already achieved in the near-infrared,” said Alexis Hobl, presenter and lead author of the paper.

Looking Ahead

Future work will focus on further improving optical coupling efficiency, fabrication robustness, and thermal and electrical management, as well as integrating additional on-chip photonic functions such as filters, multiplexers, and interferometric circuits. Demonstrating wafer-scale reproducibility and packaging-ready designs will be key milestones on the path toward fully integrated mid-infrared photonic systems.

Acknowledgements: L’Institut des Nanotechnologies de Lyon (INL), III-V Lab, and Fraunhofer Applied Solid State Physics IAF contributed to this project.


IOS-MCN develops India’s Open Source Platform to Build Private 5G network led by IISc Bengaluru, IIT Delhi, CDAC, MeitY

The Indian Open Source for Mobile Communication Networks (IOS-MCN) Consortium has publicly released a new open-source software platform that allows organisations to build and run their own Private 5G networks. Designed for factories, campuses, research institutions and startups, the platform would allow users to deploy Private 5G networks that promise faster, more reliable and secure connectivity than Wi-Fi or public mobile networks at a lower cost.

The release, named Agartala 0.4.0, continues IOS-MCN’s convention of using Indian city names to reflect its national, open-source character. It is the fourth open-source milestone of India’s homegrown Private 5G platform and is mature enough for pilot deployments. Validation tests show end-to-end latency of under 10 milliseconds and downlink throughput of up to 600 Mbps per gNB, making it suitable for early pilots and enterprise trials.

The IOS-MCN is being developed by a consortium led by IISc Bengaluru, IIT Delhi, and C-DAC, with funding from the Ministry of Electronics & Information Technology (MeitY), Government of India. Agartala 0.4.0 marks a decisive step towards industry-grade, deployable Private 5G networks built on an open-source platform.

What’s New in Agartala 0.4.0

The release brings all key parts of a private 5G network into one integrated, easy-to-deploy software platform:

- Advanced Mobility: Xn, F1, and N2 handovers, cell reselection, and robust RRC idle mode handling

- Voice & Multimedia: VoNR and ViNR with full IMS integration

- ORAN-Compliant Disaggregated RAN with unified RAN support

- Network Slicing for enterprise and mission-critical use cases

- Service Management & Orchestration (SMO) with a unified dashboard

- RIC Framework: E2 interface implementation with near-RT and non-RT RIC support

- Precision Timing: PTP LLS C1 and C3

- Operational Excellence: PM counters across RAN and Core, extensive crash and assert fixes, and expanded test coverage

Agartala 0.4.0 positions IOS-MCN for early pilot deployments led by ecosystem partners, with installations planned to commence in the second half of 2026 across multiple sectors.

Niral Networks, which builds indigenous telecom and networking solutions, has proposed an Intelligent Village pilot. It stated, “Agartala 0.4.0 provides the foundational technology that enables Private 5G deployments for rural and semi-urban environments. We will leverage the IOS-MCN stack to deliver real-world analytics and digital inclusion solutions through initiatives like the Intelligent Village Pilot and Smart Village Connectivity Program.”

Techphosis, which develops secure technology solutions for defence and strategic applications, has proposed a Network-in-a-Box pilot for defence applications. It said: “Agartala 0.4.0 demonstrates the readiness of open-source, ORAN-compliant platforms for defence use cases. The Network-in-a-Box pilot, planned for the second half of 2026, aims to validate rapid, secure, portable and reliable Private 5G deployments for defence communications.”

Together, these proposed pilots, spanning several sectors, underscore IOS-MCN Agartala 0.4.0’s readiness to transition from platform development to industry validation and deployment starting in 2026.


How A Real-World Problem Turned Into Research Impact at IIIT-H

The idea for a low-cost UPS monitoring system at IIIT-H did not begin in a laboratory or a funding proposal. It began with a familiar frustration – raised by Prakash Nayak, a campus IT staffer who was tired of equipment failures with no clear explanation.

Power outages were happening. Servers were restarting. Despite the installation of UPS units everywhere, no one could say with certainty what the UPS systems were actually doing when the lights went out. That real-world problem became the starting point for a research project that has now resulted in a ₹2,000 IoT-based device capable of tracking UPS behaviour during outages with near-second precision.

The research was documented in a paper titled “Low-cost IoT-based Downtime Detection for UPS and Behaviour Analysis,” by authors Sannidhya Gupta, Prakash Nayak, and Prof. Sachin Chaudhari. It also received the Best Paper award at the 18th International Conference on COMmunication System and NETworkS (COMSNETS-2026) Workshop on AI of Things, recently held in Bangalore.

When monitoring costs more than the problem

“Frequent power outages in developing regions cause equipment damage, operational downtime, and data loss,” says Sannidhya Gupta, noting that while UPS systems are meant to provide protection, “affordable options for monitoring their performance remain limited.” Commercial UPS monitoring tools – typically SNMP cards that collect and organise information about managed devices over IP networks – were an option, but an impractical one. According to the paper, “Commercial solutions are expensive, manufacturer-specific, and reliant on network infrastructure”. With prices exceeding ₹20,000 per unit, the campus IT team simply could not justify deploying them at scale. Worse, these tools often failed at the moment they were most needed. “These systems are unable to record data when the UPS itself loses power,” the authors point out, making post-outage diagnosis nearly impossible.

A device that watches, not interferes

Responding directly to the IT team’s request for something affordable and reliable, the team designed a non-intrusive current-monitoring device. Instead of tapping into UPS internals, it clamps onto the input and output lines, observing how current flows before, during, and after outages. “UPS input and output currents are sensed non-intrusively to detect outages, switchovers, and recovery behaviour,” the researchers explain. Additionally, the device is battery-backed, allowing it to keep recording even when both mains power and internet connectivity are lost.

From theory to campus corridors

To test the system, the team deployed it across four UPS installations on campus, including one unit already suspected by IT staff to be malfunctioning. Over a month, the devices collected around 3.7 million data points, automatically detecting 61 outage events. The data confirmed what the IT team had suspected but could never prove. “One UPS repeatedly showed no clear charging behaviour after outages,” reports Prakash, indicating a system that could briefly support loads but failed to properly recharge its batteries.

Smart algorithms, Simple assumptions

The backend analytics automatically labels each event into phases – normal operation, outage, stabilisation, and battery charging – without manual configuration. “All thresholds are expressed as fractions of a locally estimated baseline,” the authors note, adding that this allows the system to adapt to different installations automatically. The results were precise: no missed outages, no false alarms, and timing errors typically within three seconds.
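The idea of thresholds expressed as fractions of a locally estimated baseline can be illustrated with a minimal sketch. This is not the authors' algorithm: the function name, the use of the median as the baseline, the specific fractions (0.2 and 1.1), and the fixed two-sample stabilisation window are all assumptions made here for illustration.

```python
from statistics import median


def label_phases(input_current, outage_frac=0.2, charge_frac=1.1,
                 settle_samples=2):
    """Label each UPS input-current sample with a phase name.

    All thresholds are fractions of a baseline estimated from the data
    itself, so no per-installation tuning is needed (assumed scheme).
    """
    baseline = median(input_current)  # assumed local baseline estimate
    labels = []
    since_outage = None  # samples seen since the last outage ended
    for amps in input_current:
        if amps < outage_frac * baseline:
            # Input current has collapsed: mains power is lost.
            labels.append("outage")
            since_outage = 0
        elif since_outage is not None and since_outage < settle_samples:
            # Short window right after power returns.
            labels.append("stabilisation")
            since_outage += 1
        elif since_outage is not None and amps > charge_frac * baseline:
            # Elevated draw after an outage: batteries are recharging.
            labels.append("charging")
        else:
            labels.append("normal")
            since_outage = None
    return labels


# Hypothetical input-current trace in amperes (not measured data).
samples = [5.0, 5.0, 5.0, 5.0, 0.0, 0.0, 7.0, 6.5, 6.0, 6.0, 5.0, 5.0, 5.0]
for amps, phase in zip(samples, label_phases(samples)):
    print(f"{amps:4.1f} A  {phase}")
```

Under this scheme, a trace that moves from "stabilisation" straight back to "normal" with no "charging" samples would resemble the faulty unit described earlier, which showed no clear charging behaviour after outages.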

Real-time monitoring, Ten times cheaper

A web-based dashboard now gives IT staff something they never had before: visibility. Instead of guessing whether a UPS is healthy, administrators can now see it. Plus, they have access to historical analysis of UPS behaviour. Built using off-the-shelf components, the device costs about ₹2,000 – roughly one-tenth the price of commercial monitoring cards. “Its affordability, power independence, and portability make it a practical option for cost-constrained environments,” concludes Sannidhya.

Research grounded in reality

What sets this work apart is not just the technology, but its origin. This was research born out of a real operational pain point, brought directly by the people responsible for keeping systems running. “It is important to note that IT staff, Mr. Prakash, is part of the research paper we have published. He is also part of the patent we have recently filed on this. This highlights the value of treating campus operations teams as co-creators of research problems rather than mere end users – a mindset that leads to more relevant and impactful outcomes,” states Prof. Chaudhari. In a landscape where academic research is often criticised for being disconnected from reality, this project offers a counterexample: researchers took note when a problem was identified and built something that changes how systems are understood and managed.


Microchip Expands PolarFire FPGA Smart Embedded Video Ecosystem providing enhanced video connectivity

The post Microchip Expands PolarFire FPGA Smart Embedded Video Ecosystem providing enhanced video connectivity appeared first on ELE Times.

element14 and Fulham announce global distribution partnership

element14 has formed a new global distribution partnership with Fulham, expanding access to advanced LED drivers, emergency lighting and intelligent control solutions for customers across EMEA & APAC. The agreement strengthens element14’s lighting portfolio in the region, supporting engineers and buyers across commercial, industrial and architectural lighting applications.

Fulham brings more than 30 years of expertise in LED drivers, modules, emergency lighting and intelligent control systems. Headquartered in the United States, the company operates globally, with manufacturing in India, supply channels in India and China, and a strong presence across Europe. Its portfolio includes indoor and outdoor LED drivers, emergency lighting systems, UV ballasts, and smart control technologies, all designed to meet key international standards, including CE, ENEC, DALI-2, and UL.

Through this partnership, element14 will distribute Fulham’s lighting solutions globally, improving availability and access to future-ready technologies for engineers and buyers worldwide.

The agreement includes Fulham’s core lighting portfolio, including emergency lighting systems, indoor LED drivers, and constant-voltage driver platforms, with key ranges including the HotSpot Series, WorkHorse DALI-2 constant-current drivers, and the ThoroLED Series for architectural lighting, signage, and LED strip applications.

Customer benefits include:

- Broader access to certified, future-ready lighting technologies.

- Global availability through element14’s established distribution network.

- Support for a wide range of lighting applications and form factors.

- Access to Fulham’s deep technical expertise and proven product platforms.

Jose Lok, Global Product Category Director – Onboard Components & SBC, element14, said: “element14 has a strong commitment to adding value for our customers, and this partnership expands both choice and access to innovative lighting technologies. By working with Fulham, we are enabling customers worldwide to source advanced LED drivers, emergency lighting and control solutions through a trusted global distribution partner.”

Antony Corrie, CEO, Fulham, added: “Fulham is extremely excited to embark on this new relationship with element14. The partnership brings together shared values, strong heritage and a commitment to global innovation. element14 in APAC will be selling Fulham’s LED drivers, emergency battery backup solutions, exit signs and UV-C power systems across their global customer base.”


India’s PLI Scheme Brings a Surge of 146% in Electronics Production

Despite geopolitical tensions, manufacturing in India has performed exceptionally well, with smartphones leading the way. According to data shared by CareEdge Ratings, India’s electronics production has surged by 146% since 2021. The Production Linked Incentive (PLI) scheme played a significant role in boosting electronics manufacturing from Rs 2.13 lakh crore in the Financial Year 2021 to Rs 5.45 lakh crore in the Financial Year 2025.

Additionally, the boost in production was aided by USD 4 billion in FDI, of which 70% went to PLI beneficiaries. Apart from economic benefits, the accelerated production has triggered a massive socio-economic multiplier effect. The electronics sector has been a dominant contributor to the 9.5 lakh jobs generated across all PLI schemes, providing significant direct and indirect employment. Simultaneously, electronics have climbed to become one of India’s top export categories. By shifting from an importer to a “net exporter” of mobile phones, India is successfully narrowing its trade deficit and reducing its long-term dependency on imports from neighbouring manufacturing hubs.

While the 146% jump is a historic achievement, the roadmap ahead focuses on “Deep Localisation.” The government and industry leaders are now pivoting toward high-value components, including semiconductor packaging and display manufacturing. As of January 2026, this momentum positions India to reach its goal of a $300 billion electronics production ecosystem, solidifying its role as a critical alternative in the global “China Plus One” supply chain strategy.


How AI and ML Became Core to Enterprise Architecture and Decision-Making

by Saket Newaskar, Head of AI Transformation, Expleo

Enterprise architecture is no longer a behind-the-scenes discipline focused on stability and control. It is fast becoming the backbone of how organizations think, decide, and compete. As data volumes explode and customer expectations move toward instant, intelligent responses, legacy architectures built for static reporting and batch processing are proving inadequate. This shift is not incremental; it is structural. In recent times, enterprise architecture has been viewed as an essential business enabler.

The global enterprise architecture tools market will grow to USD 1.60 billion by 2030, driven by organizations aligning technology more closely with business outcomes. At the same time, the increasing reliance on real-time insights, automation, and predictive intelligence is pushing organizations to redesign their foundations. Artificial intelligence (AI) and machine learning (ML) are no longer optional enhancements. They have become essential architectural components that determine how effectively an enterprise can adapt, scale, and create long-term value in a data-driven economy.

Why Modernisation Has Become Inevitable

Traditional enterprise systems were built for reliability and periodic reporting, not for real-time intelligence. As organisations generate data across digital channels, connected devices, and platforms, batch-based architectures create latency that limits decision-making. This challenge is intensifying as enterprises move closer to real-time operations. According to IDC, 75 per cent of enterprise-generated data is predicted to be processed at the edge by 2025, which highlights how rapidly data environments are decentralising. Legacy systems, designed for centralised control, struggle to operate in this dynamic landscape, making architectural modernisation unavoidable.

AI and ML as Architectural Building Blocks

AI and ML have moved from experimental initiatives to core decision engines within enterprise architecture. Modern architectures must support continuous data pipelines, model training and deployment, automation frameworks, and feedback loops as standard capabilities. This integration allows organisations to move beyond descriptive reporting toward predictive and prescriptive intelligence that anticipates outcomes and guides action.

In regulated sectors such as financial services, this architectural shift has enabled faster loan decisions. Moreover, it has improved credit risk assessment and real-time fraud detection via automated data analysis. AI-driven automation has also delivered tangible efficiency gains, with institutions reporting cost reductions of 30–50 per cent by streamlining repetitive workflows and operational processes. These results are not merely the outcomes of standalone tools. Instead, they are outcomes of architectures designed to embed intelligence into core operations.

Customer Experience as an Architectural Driver

Customer expectations are now a primary driver of enterprise architecture. Capabilities such as instant payments, seamless onboarding, and self-service have become standard. In addition, front-end innovations like chatbots and virtual assistants depend on robust, cloud-native, and API-led back-end systems that deliver real-time, contextual data at scale. As automation increases, architectures must embed security and compliance by design. Reflecting this shift, the global market for zero-trust security frameworks is projected to exceed USD 60 billion annually by 2027, reinforcing security as a core architectural principle.

Data Governance and Enterprise Knowledge

As AI adoption accelerates across organisations, governance has become inseparable from architecture design. Data privacy, regulatory compliance, and security controls must be built into systems from the outset, especially as automation and cloud adoption expand. Meanwhile, enterprise knowledge, proprietary data, internal processes, and contextual understanding have emerged as critical differentiators.

Grounding AI models in trusted enterprise knowledge improves accuracy, explainability, and trust, particularly in high-stakes decision environments. This alignment further ensures that AI systems will support real business outcomes rather than producing generic or unreliable insights.

Human Readiness and Responsible Intelligence

Despite rapid technological progress, architecture-led transformation ultimately depends on people. Cross-functional alignment, cultural readiness, and shared understanding of AI initiatives are imperative for sustained adoption. Enterprise architects today increasingly act as translators between business strategy and intelligent systems. Additionally, they ensure that innovation progresses without compromising control.

Looking ahead, speed and accuracy will remain essential aspects of enterprise architecture. However, responsible AI will define long-term success. Ethical use, transparency, accountability, and data protection are becoming central architectural concerns. Enterprises will continue redesigning their architectures to be scalable, intelligent, and responsible for the years to come. Those that fail to modernise or embed AI-driven decision-making risk losing relevance in an economy where data, intelligence, and trust increasingly shape competitiveness.

The post How AI and ML Became Core to Enterprise Architecture and Decision-Making appeared first on ELE Times.

Nuvoton Releases High-Power Ultraviolet Laser Diode (379 nm, 1.0 W)

Nuvoton Technology announced the start of mass production of its high-power ultraviolet semiconductor laser (379 nm, 1.0 W) [1], which delivers industry-leading optical output in a 9.0 mm diameter CAN package (TO-9) [2]. This product achieves short wavelength, high output power, and long lifetime—three elements previously considered difficult for ultraviolet semiconductor lasers—through our proprietary device structure and advanced high-heat-dissipation packaging technology. As a result, it contributes to fine patterning and improved production throughput in maskless lithography [3] for advanced semiconductor packaging [4].

Achievements:

- Achieves 1.0 W-class optical output at 379 nm, contributing to fine patterning and improved production throughput in maskless lithography for advanced semiconductor packaging.

- Improves heat dissipation of ultraviolet semiconductor lasers through our proprietary device structure and packaging technology, suppressing device degradation from self-heat generation and ultraviolet light and contributing to the extended lifetime of optical equipment.

- Expands the lineup of mercury lamp replacement solutions [5], enhancing flexibility in product selection to suit different applications.

Features of New Product:

- Achieves 1.0 W-class optical output at 379 nm, contributing to fine patterning and improved production throughput in maskless lithography for advanced semiconductor packaging.

As the evolution of artificial intelligence (AI) drives demand for information processing capability, semiconductors are required to deliver higher performance than ever before. At the same time, as the miniaturisation of transistors approaches its physical and economic limits, attention has turned to semiconductor back-end package technologies and advanced semiconductor packaging, which integrate multiple semiconductor chips by arranging them side by side or stacking them vertically.

In advanced semiconductor packaging, the mainstream method for forming wiring connections between multiple semiconductor chips has been exposure technology using the i-line (365 nm) of the mercury spectrum and photomasks (master masks of circuits). In recent years, however, interest has grown in maskless lithography technology, which directly exposes (draws) wiring patterns from design data without using photomasks.

This technology is expected to reduce the time and cost associated with designing and producing photomasks. Furthermore, because wiring patterns can be drawn directly to match the surface shape of the target, alignment and correction are easier, and application to advanced semiconductor packages is currently under consideration.

As one of the key light sources in maskless lithography, semiconductor lasers have faced increasing demands for shorter wavelengths closer to the i-line (365 nm) and higher output, to enable finer wiring and improve equipment throughput. To meet these requirements, we have leveraged over 40 years of experience in laser design and manufacturing to develop and commercialise an ultraviolet semiconductor laser with a wavelength of 379 nm and an output of 1.0 W.

- Improves heat dissipation of ultraviolet semiconductor lasers through our proprietary device structure and packaging technology, suppressing device degradation from self-heat generation and ultraviolet light and contributing to the extended lifetime of optical equipment.

Ultraviolet semiconductor lasers generally suffer from significant heat generation caused by low wall-plug efficiency (WPE) [6], and a tendency for device degradation caused by ultraviolet light, making stable operation at high output levels above 1.0 W difficult to achieve. To address this, we took a dual approach by focusing on both a “device structure that enhances wall-plug efficiency (WPE)” and a “high thermal conduction package technology that effectively dissipates heat,” enabling us to develop a product that successfully combines short wavelength, high output, and long lifetime: a 1.0 W ultraviolet (379 nm) device. As a result, we are contributing to extending the lifetime of optical devices that utilise ultraviolet light.

・Device structure to enhance wall-plug efficiency

In addition to optimising the emission layer and optical guide layer, we have adopted a proprietary structure that precisely controls the doping profile. By reducing light absorption loss and operating voltage, this allows electrical energy to be converted into light more efficiently.

・High thermal conduction package technology that efficiently dissipates heat

In addition to adopting a submount made of high thermal conductivity materials, the package materials have been revised to reduce thermal resistance. As a result, rises in device temperature are suppressed, allowing for stable operation at high output.

- Expands the lineup of mercury lamp replacement solutions, enhancing flexibility in product selection to suit different applications.

This product has been newly added to our lineup of “semiconductor laser-based alternatives to mercury lamps,” providing customers with a new choice. With this addition, customers can flexibly select products according to application, installation environment, and required performance, thereby increasing the freedom of system design.

Details of this new product will be showcased at our booth at SPIE Photonics West 2026 in San Francisco, USA, and at OPIE’26 in Yokohama, Japan. We sincerely look forward to welcoming you.

Applications:

- Maskless lithography

- Resin curing

- Marking

- 3D printing

- Biomedical

- Alternative light source for mercury lamps, etc.

Definitions:

[1] Ultraviolet semiconductor laser:

Our term for a semiconductor laser that emits laser light with a peak wavelength of approximately 380 nm or less.

[2] TO-9 CAN:

CAN-type package with a diameter of 9.0 mm.

[3] Maskless lithography:

A technology that directly exposes photosensitive materials (resist) on a substrate based on design data without using a photomask and forms fine patterns. When using laser light, it is also referred to as Laser Direct Imaging (LDI).

[4] Advanced semiconductor packaging:

An implementation technology in which multiple semiconductor chips are densely integrated to optimise performance and power efficiency.

[5] Mercury lamp replacement solutions:

Nuvoton Technology’s lineup of semiconductor lasers is designed to replace the emission lines of mercury lamps: i-line (365 nm), h-line (405 nm), and g-line (436 nm). We propose the combined use of ultraviolet semiconductor lasers (379 nm), violet semiconductor lasers (402 nm), and indigo semiconductor lasers (420 nm).

[6] Wall-Plug Efficiency (WPE):

An indicator of the efficiency of converting electrical input into optical output. It is generally used to express the emission efficiency of semiconductor lasers.
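As a rough illustration of this definition, wall-plug efficiency is commonly computed as optical output power divided by electrical input power (drive current times forward voltage), and whatever is not emitted as light is dissipated as heat. The sketch below assumes that standard relation; the operating point (0.8 A, 5.0 V) is a hypothetical value chosen only to make the arithmetic easy to follow and is not taken from any Nuvoton datasheet.

```python
def wall_plug_efficiency(optical_power_w: float, current_a: float, voltage_v: float) -> float:
    """Return optical output power divided by electrical input power."""
    electrical_power_w = current_a * voltage_v
    if electrical_power_w <= 0:
        raise ValueError("electrical input power must be positive")
    return optical_power_w / electrical_power_w


def waste_heat_w(optical_power_w: float, current_a: float, voltage_v: float) -> float:
    """Return the electrical power that is not converted to light (dissipated as heat)."""
    return current_a * voltage_v - optical_power_w


# Hypothetical operating point for a 1.0 W laser diode (illustrative only).
optical_w = 1.0   # optical output, as in the 1.0 W class device
current_a = 0.8   # assumed drive current
voltage_v = 5.0   # assumed forward voltage

wpe = wall_plug_efficiency(optical_w, current_a, voltage_v)
heat = waste_heat_w(optical_w, current_a, voltage_v)
print(f"WPE = {wpe:.0%}, heat to dissipate = {heat:.1f} W")
```

Under these assumed numbers, three quarters of the input power becomes heat, which is why improvements to WPE and to package thermal resistance are treated together in the article.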

The post Nuvoton Releases High-Power Ultraviolet Laser Diode (379 nm, 1.0 W) appeared first on ELE Times.

SST & UMC Release 28nm SuperFlash Gen 4 for Next-Gen Automotive Controllers

- Automotive Electronics Council AEC-Q100 Grade 1 qualified for operating temperatures of -40°C to +150°C (Tj)

- Read access time < 12.5ns

- 100K+ endurance cycles

- Data retention of > 10 years @ 125°C

- Only 1-bit ECC required

- Qualification of 32Mb macro at auto grade 1 conditions:

  - Zero bit failures (no ECC applied)

  - Peak yield reached 100%

The post SST & UMC Release 28nm SuperFlash Gen 4 for Next-Gen Automotive Controllers appeared first on ELE Times.

“The role of distributors is rapidly evolving as India’s electronics manufacturing sector expands,” says Dr. Raphael Salmi of Arrow Electronics

| Dr. Raphael Salmi is the president of Arrow Electronics’ South Asia, Korea, and Japan components business. He is responsible for overseeing the teams supporting Indonesia, Malaysia, the Philippines, Singapore, Thailand, Vietnam, India, Korea, Japan, Australia, and New Zealand.

With over 19 years at Arrow, Raphael brings extensive experience overseeing various businesses in the Americas and EMEA, focused on driving and achieving growth, innovation, and performance on a global scale. |

As the electronics industry moves past 2025, electronic design continues to advance in power efficiency, thermal management, and materials. For India, 2025 remained a high-growth year in electronics, with exports having grown sixfold from ₹1.9 lakh crore to ₹11.3 lakh crore in a decade. But this growth can be sustained and extended only with strong R&D capabilities and exceptional engineering talent and resources. In an exclusive conversation with ELE Times, Dr. Raphael Salmi, President – South Asia, Korea, and Japan Components, Arrow Electronics, and Bhartendu Mishra, Managing Director – India, Arrow Electronics, discuss these issues in some detail.

Dr. Raphael Salmi notes, “The role of distributors is rapidly evolving as technology accelerates and India’s electronics manufacturing sector expands,” underlining the role of component distributors in India’s dynamic electronics landscape.

Challenges faced by Design Engineers in India

Beyond the core challenges of supply chain and reliable sourcing that the electronics design industry evidently faces, Dr. Salmi points to a growing trend in the Indian landscape. He says, “There is an increasing demand for end-to-end engineering capabilities to accelerate innovation and achieve faster time-to-market.”

He adds that his company addresses these challenges by leveraging its extensive technology portfolio, deep engineering expertise, and comprehensive supply chain services coupled with a robust trade compliance program, aiming to empower innovation & resilience in India’s rapidly growing market.

Distributors’ Role – As India Chases the Electronics Dream

As India grows its electronics footprint, the role of electronics distributors is bound to transform, as it sits at the core of research and development in the sector. On this, Dr. Salmi says, “The role of distributors is rapidly evolving as technology accelerates and India’s electronics manufacturing sector expands.”

Arrow does this by allowing its customers to outsource portions of their supply chain or specific elements of their bill of materials to it, so that they can focus on research, development, and go-to-market strategies. This improves efficiency and flexibility across the landscape. On Arrow’s role in India’s dynamically growing electronics landscape, Dr. Salmi says, “For Arrow, India serves as a key hub for engineering, integration, and supply chain services. We are committed to serving the Indian market by leveraging our extensive technology portfolio, engineering expertise, and supply chain capabilities to help customers innovate faster and scale efficiently, and capture these burgeoning opportunities.”

Design Centre in India

Arrow’s recently inaugurated Arrow Engineering Solutions Center in Bangalore is a testament to its commitment to serving Indian design engineering talent and bringing it into the sector’s latest innovations. Bhartendu says, “The center helps bridge gaps in local engineering capabilities and accelerates adoption of next-generation technologies, such as AIoT, edge computing, and intelligent sensing, reinforcing Arrow’s commitment to innovation and customer success.”

It enables world-class engineering support and advanced technology resources for sectors such as industrial, automotive, transportation, energy management, aerospace, and defense, he adds.

Arrow’s Beyond Components Approach

Arrow’s approach beyond components says a lot about its efforts to support and empower the design engineers. Beyond distribution, it enables supply chain management, engineering design, and integration services, allowing it to act as an extension of OEMs and suppliers’ product development teams.

Bhartendu Mishra, Managing Director – India, Arrow Electronics, says, “Our robust compliance framework, global reach, and ability to deliver innovative solutions make Arrow a trusted partner for design engineers seeking reliability, expertise, and comprehensive support.”

Looking Ahead

Concluding the discussion, Dr. Salmi highlights Arrow Electronics’ commitment to empowering customers and suppliers across India and Asia-Pacific through its platform-based, data-driven model. The company is expanding value-added services in design engineering, integration, and supply chain management to help customers innovate faster and reach the market sooner. Continued investments in digital platforms will improve real-time access to inventory, pricing, and design resources, while a strong vertical focus on energy management, robotics, AIoT, and e-mobility will anchor Arrow’s growth strategy in the region.

| India’s semiconductor ambitions are backed by initiatives like the ₹76,000 crore ISM and the ₹1,000 crore DLI scheme, which focuses on fostering a strong design ecosystem. A critical part of this effort is ensuring design engineers get timely access to quality components.

To highlight how distributors are enabling this, we present our exclusive series — “Powering the Chip Chain” — featuring conversations with key industry players. |

The post “The role of distributors is rapidly evolving as India’s electronics manufacturing sector expands,” says Dr. Raphael Salmi of Arrow Electronics appeared first on ELE Times.

Global AI Spending to Reach $2.5 Trillion in 2026, Predicts Gartner

Gartner, a business and technology insights company, forecasts that global AI spending will rise to $2.52 trillion this year, a 44% increase year-over-year.

“AI adoption is fundamentally shaped by the readiness of both human capital and organisational processes, not merely by financial investment,” said John-David Lovelock, Distinguished VP Analyst at Gartner. Organisations with greater experiential maturity and self-awareness are increasingly prioritising proven outcomes over speculative potential.

“Because AI is in the Trough of Disillusionment throughout 2026, it will most often be sold to enterprises by their incumbent software provider rather than bought as part of a new moonshot project,” said Lovelock. “The improved predictability of ROI must occur before AI can truly be scaled up by the enterprise.”

Building AI foundations alone will drive a 49% increase in spending on AI-optimised servers, representing 17% of total AI spending (see Table 1). AI infrastructure will also add $401 billion in spending as a result of technology providers building out AI foundations.

Table 1: Worldwide AI Spending by Market, 2025-2027 (Millions of U.S. Dollars)

| Market | 2025 | 2026 | 2027 |
| --- | --- | --- | --- |
| AI Services | 439,438 | 588,645 | 761,042 |
| AI Cybersecurity | 25,920 | 51,347 | 85,997 |
| AI Software | 283,136 | 452,458 | 636,146 |
| AI Models | 14,416 | 26,380 | 43,449 |
| AI Platforms for Data Science and Machine Learning | 21,868 | 31,120 | 44,482 |
| AI Application Development Platforms | 6,587 | 8,416 | 10,922 |
| AI Data | 827 | 3,119 | 6,440 |
| AI Infrastructure | 964,960 | 1,366,360 | 1,748,212 |
| Total AI Spending | 1,757,152 | 2,527,845 | 3,336,690 |

Source: Gartner (January 2026)
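The headline figures can be cross-checked against Table 1 with a few lines of arithmetic. The sketch below copies the table values as given (millions of U.S. dollars), confirms that the eight market rows sum to the reported totals, and recomputes the 2026 year-over-year growth and the added AI infrastructure spending. The variable names are illustrative only.

```python
# Table 1 values in millions of U.S. dollars: (2025, 2026, 2027).
spending = {
    "AI Services": (439_438, 588_645, 761_042),
    "AI Cybersecurity": (25_920, 51_347, 85_997),
    "AI Software": (283_136, 452_458, 636_146),
    "AI Models": (14_416, 26_380, 43_449),
    "AI Platforms for Data Science and Machine Learning": (21_868, 31_120, 44_482),
    "AI Application Development Platforms": (6_587, 8_416, 10_922),
    "AI Data": (827, 3_119, 6_440),
    "AI Infrastructure": (964_960, 1_366_360, 1_748_212),
}
reported_total = (1_757_152, 2_527_845, 3_336_690)

# Sum each year's column and compare with the reported "Total AI Spending" row.
computed_total = tuple(sum(row[i] for row in spending.values()) for i in range(3))

# Year-over-year growth of total spending from 2025 to 2026.
yoy_2026 = (reported_total[1] - reported_total[0]) / reported_total[0]

# Additional AI infrastructure spending in 2026 versus 2025.
infra_delta = spending["AI Infrastructure"][1] - spending["AI Infrastructure"][0]

print(computed_total == reported_total)
print(f"2026 growth: {yoy_2026:.1%}")
print(f"Infrastructure increase: ${infra_delta / 1000:.1f} billion")
```

The rows sum exactly to the reported totals, the 2026 growth rounds to the 44% quoted in the article, and the infrastructure increase matches the $401 billion figure.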

The post Global AI Spending to Reach $2.5 Trillion in 2026, Predicts Gartner appeared first on ELE Times.

Industry 5.0 in Practice: Collaborative, Connected, and Conscious Manufacturing

As the world transitions towards Industry 5.0, the notion of smart factories is no longer science fiction. The concept of smart manufacturing revolves around collecting, analysing, and using data from multiple sources to automate and optimise processes. India’s Smart factory market is projected to grow at a 14.3% CAGR (2025-30) to reach USD 15.6 billion by 2026. Among the various industries, the electronics and semiconductors sector is expected to be the fastest-growing in the adoption of Industry 5.0. The concept of Industry 5.0 rests on three basic pillars: human-centricity and collaboration, sustainability, and resilience.

Smart manufacturing is all about the use of data. Whoever has the most holistic variety of data is best placed to excel in adopting and implementing smart factories. Whether through advanced automation, AI, up-to-date IoT technology, or big data analytics, the real value in manufacturing lies in how well data is used. Beyond these, one of the biggest innovations in Industry 5.0 is the use of ‘cobots’, or collaborative robots. Among the various technological advancements, the ‘Human-Machine Interface’ (HMI) is projected to be the fastest-growing technology segment in the Indian Smart Factory market.

Shifting from Industry 4.0

Industry 4.0 focused largely on technological advancement to boost the pace of production and its efficiency, and it was often said that this advancement of technology would eventually replace the human workforce. However, the trend changes with the adoption of Industry 5.0.

So, the idea now is that digitalisation will not make humans in industrial manufacturing obsolete; instead, it will integrate smart automation, systems, and devices in the workplace to promote cooperation and collaboration between humans and machines.

Technology in Smart Manufacturing

Core technologies in the smart manufacturing set-up of Industry 5.0 include edge computing, cloud computing, cybersecurity measures, Artificial Intelligence and Machine Learning, and big data analytics.

Edge and cloud computing play complementary roles in Industry 5.0 smart manufacturing. The edge provides speed and reliability for immediate action, giving real-time responsiveness, while the cloud handles heavy storage and complex AI. Together they offer enhanced human-cobot collaboration, greater flexibility for mass customization, improved security and privacy, reduced costs and bandwidth, and powerful predictive analytics, enabling personalized, sustainable, and efficient production.

Man + Machine Middleware

The contemporary concept of smart factories is no longer just automation through machines. Today, it is all about the ‘coexistence’ of humans and machines, and that is exactly what everyone is focused on!

While there is a buzz about AI taking over human jobs in the future, the reality is that humans remain central to manufacturing; the role of the human workforce will, however, shift from handling repetitive and laborious tasks to engaging in customisation and creativity.

“The integration of AI and machine learning enables better human-machine collaboration where technology adapts to human needs on a real-time basis. IoT turns equipment into context-aware responsive assets. Digital twins enable engineers and operators to experiment in the virtual world, which is less risky and less cognitively demanding than the shop floor. Complex data is converted into easy-to-use visual and tactile interfaces with advanced HMIs, which can be used to make decisions faster and more safely,” reflects Prasad Kulkarni, Lead–Digital Transformation, Factory Automation Systems, Mitsubishi Electric India.

“Co” in Cobot

Imagine a workspace where a technician is hand-soldering a custom prototype while, just inches away, a robotic arm is flawlessly placing components the size of a grain of salt with 0.01mm precision. Meet your new favourite bench-mate. It doesn’t take coffee breaks, it never gets a “Monday morning” headache, and it can hold a PCB steady for hours without a single tremor. Cobots are the literal “Helping Hands” of Industry 5.0, filling the gap between rigid automation and the high-touch craftsmanship that modern electronics manufacturing requires.

“Cobots are designed with safety and human collaboration in mind. They include sensors and control systems that detect human proximity and can instantly slow or stop motion to prevent accidents. Their flexibility and ease of programming make them suitable for varied tasks such as assembly, material handling, and packaging. These features help reduce worker fatigue and ergonomic risk while maintaining process consistency. When used properly, cobots enhance productivity and workplace safety, enabling humans to focus on tasks that require judgment and creativity,” underscores Dr Sanjeev Srivastava, Business Head–Industrial Automation SBP, Delta Electronics India.

Throwing light on the working mechanism of cobots, Prasad Kulkarni, Lead–Digital Transformation, Factory Automation Systems, Mitsubishi Electric India, detailed, “Cobots are designed with safety, flexibility, and ergonomics as core principles. They are specifically engineered to work safely alongside human operators. This is achieved through built-in safety mechanisms such as force and torque limitation, advanced vision systems, proximity sensing, and real-time feedback, which enable immediate response to human presence. From an efficiency standpoint, cobots deliver high consistency and precision in tasks such as calibration, complex assembly, inspection, sealant application, etc.”
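The proximity-based slow-down and stop behaviour described in both quotes can be pictured as a simple speed-scaling rule. The following is a minimal sketch under stated assumptions, not how any particular cobot controller is implemented: the function name `speed_scale` and the two distance thresholds are hypothetical, and real systems derive such limits from a safety risk assessment rather than fixed constants.

```python
def speed_scale(distance_m: float, stop_dist_m: float = 0.3, slow_dist_m: float = 1.0) -> float:
    """Return a speed multiplier in [0, 1] from the measured human-robot distance.

    Thresholds are illustrative assumptions, not values from a safety standard.
    """
    if distance_m <= stop_dist_m:
        return 0.0  # human too close: stop motion
    if distance_m >= slow_dist_m:
        return 1.0  # human far away: full programmed speed
    # In between: ramp speed linearly from 0 to 1 as the human moves away.
    return (distance_m - stop_dist_m) / (slow_dist_m - stop_dist_m)


# Example readings from a hypothetical proximity sensor, in metres.
for d in (0.2, 0.65, 1.5):
    print(f"distance {d:.2f} m -> speed factor {speed_scale(d):.2f}")
```

A linear ramp is chosen here only for readability; the point is that the robot's speed becomes a function of sensed human presence rather than a fixed programmed value.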

Adoption and Challenges

The complete adoption of smart manufacturing under Industry 5.0 is still a work in progress. “Adoption of Industry 5.0–aligned technologies is steadily increasing, particularly among organisations seeking flexibility, scalability, and workforce sustainability. While early adoption has been led by larger enterprises, interest from mid-sized manufacturers is also growing. In the long term, this trend will result in more resilient supply chains, faster response to market changes, and a workforce that is better supported by intelligent automation,” confirms Prateek Jain, COO and Co-founder of Addverb.

The International Federation of Robotics predicts that 35% of worldwide manufacturers will implement Industry 5.0 technologies (such as cobots) over the next decade to unite automated systems with human capabilities.

The shift is not expected to take too long; however, there are certain challenges that one may face in the course of time. “One challenge is integrating advanced automation with existing equipment and processes without overwhelming operators. Smart solutions must be powerful yet intuitive. Connectivity and cybersecurity are also critical as more systems communicate across networks. Additionally, helping the workforce upskill and get comfortable with new tools is essential. While intelligent automation can improve efficiency and safety, it requires thoughtful design and training support so technology feels empowering rather than disruptive in everyday operations,” explains Dr Sanjeev Srivastava, Business Head–Industrial Automation SBP, Delta Electronics India.

In Near Future

Industry 5.0 technologies are already in use across several industries, from automotive to electronics manufacturing. AI-based vision systems are supporting human-led quality inspection in the semiconductor and FMCG sectors for early detection of defects while allowing humans to take the final decisions. Simultaneously, “digital twins are widely used to simulate new production lines and train operators before commissioning, particularly in automotive and heavy industry, reducing ramp-up time and risk. At the same time, manufacturers in energy-intensive sectors such as automotive and metals are using data-driven energy optimisation to lower power consumption and emissions. Together, these examples show how factory automation is evolving to be more human-centric, sustainable, and resilient – not just more automated,” says Prasad Kulkarni, Lead–Digital Transformation, Factory Automation Systems, Mitsubishi Electric India.

Ultimately, Industry 5.0 marks a transition from “how fast can we build?” to “how well can we collaborate?” For the electronics B2B sector, this isn’t just a technical upgrade; it’s a cultural renaissance. By weaving the irreplaceable intuition of human craftsmanship into the tireless precision of smart manufacturing, we are moving beyond cold, rigid automation. The future of silicon and circuitry lies in this hybrid harmony where cobots and creators coexist to drive innovation that is as personal as it is powerful. In this new era, the most sophisticated component on the factory floor will always be the human touch.

By: Shreya Bansal, Sub-Editor

The post Industry 5.0 in Practice: Collaborative, Connected, and Conscious Manufacturing appeared first on ELE Times.

AI-Enabled Autonomous Testing for Mission-Critical Electronics

The rise of Artificial Intelligence (AI) and Machine Learning (ML) is transforming the landscape of electronics testing. Across the globe, electronics companies are under pressure to launch ever more sophisticated products at breakneck speed, all while upholding strict quality standards. Today’s system-on-chip (SoC) designs can pack in billions of transistors and a tangle of complex firmware, making comprehensive testing a daunting challenge. Traditional approaches—relying on preset test vectors and deterministic algorithms—are often outpaced by the sheer scale and complexity. Now, AI and ML are stepping into the spotlight, promising to revolutionise autonomous testing. By applying probabilistic reasoning, pattern recognition, and adaptive algorithms, these technologies are saving weeks of development cycles and boosting defect detection rates by as much as 25-40 percent, according to industry insiders.

AI Efficiency vs. Manual Testing

The story of testing in electronics has a quirky beginning: in 1947, engineers traced a malfunction to a real moth inside a computer relay. Fast-forward a decade, and testing had become a specialised discipline. Yet, even as methods grew more sophisticated, manual testing remained laborious, vulnerable to human oversight, and often unable to keep pace with the demands of modern design. Enter a new era, where technology, not tradition, leads the charge, and the boundaries between human and machine blur in pursuit of precision.

Challenges in AI/ML for Testing

While there is no doubt that integrating AI and ML speeds up the testing procedure, the real problem is determining how much data is needed to train the AI for thorough and accurate testing, particularly for start-ups and small teams.

“One of the primary challenges in developing AI-driven test systems is ensuring high-quality, unbiased data, as AI models depend heavily on reliable inputs. Maintaining transparency in AI-driven decisions and balancing automation with human expertise are also critical, particularly in regulated and safety-sensitive industries. NI addresses these challenges through its open, software-defined test architecture and engineer-led AI integration. By standardising data acquisition and validation at the source, NI ensures data consistency, traceability, and transparency before AI is applied. This same foundation enables efficient integration with emerging IoT technologies, as modular hardware and open software can easily connect with diverse sensors, devices, and communication protocols. Edge computing capabilities further support real-time data processing closer to IoT endpoints, reducing latency and improving responsiveness as IoT systems continue to evolve,” says Shitendra Bhattacharya, Country Head & Director, NI India, Emerson.

The “Black Box” issue is considered one of the core challenges in incorporating AI into autonomous testing. It refers to the difficulty of understanding how complex models, especially deep learning systems, reach their decisions: they behave like opaque boxes with only inputs and outputs visible. Because their intricate, multi-layered processes are hard to interpret and can hide bias or errors, this opacity creates problems for trust, fairness, accountability, and debugging.

“Each step of the way, decisions need to be made on parameters to optimise. The goal is to test as much as possible with as few test patterns as possible to manage costs,” explained Fadi Maamari, vice president of engineering for hardware analytics and test, Synopsys.

Benefits of AI in Testing Solutions

“AI is already making a big impact in adaptive test optimisation and anomaly detection,” said Eduardo Estima de Castro, senior manager of R&D engineering at Synopsys. “Machine learning helps prioritise high-value test patterns, cut test time, and identify systemic yield issues. It also enables real-time adjustments to test limits, improving outgoing quality. These capabilities bring significant efficiency gains in high-volume production.”

AI has the capability to generate an extensive range of real-world scenarios for testing. The extensive range helps reduce the possibility of error and gives engineers a wider scope for creativity.

NLP-driven technologies analyse requirements and user stories to produce test cases that meet defined criteria. This approach helps ensure test coverage while reducing the manual labour required to create test cases. Additionally, AI and machine learning algorithms can prioritise test cases based on risk, criticality, and defect history. This prioritisation means that the most critical tests are run first, optimising testing efforts and increasing coverage. AI-powered technologies can also perform root cause analysis, identifying the underlying causes of faults and making meaningful recommendations for remediation. AI and ML technologies automate visual testing by comparing application aspects (e.g., UI components) to expected results. Their most valuable feature is the ability to continuously learn from fresh data and adapt to changing requirements. This continual learning increases the accuracy and efficacy of test automation technologies, resulting in continued improvements to software testing.
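To make the prioritisation idea concrete, the sketch below ranks test cases by a weighted score built from risk, criticality, and historical defect rate. It is a deliberately simple stand-in: a hand-written weighted sum takes the place of a trained ML model, and the `CaseRecord` fields, the weights, and the sample test names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CaseRecord:
    name: str
    risk: float          # estimated risk of the feature under test, 0..1
    criticality: float   # business or safety criticality, 0..1
    past_defects: int    # runs in which this test previously exposed a defect
    past_runs: int       # total historical runs of this test


def priority(tc: CaseRecord, w_risk: float = 0.4, w_crit: float = 0.4, w_hist: float = 0.2) -> float:
    """Weighted score; the weights are illustrative assumptions, not tuned values."""
    defect_rate = tc.past_defects / tc.past_runs if tc.past_runs else 0.0
    return w_risk * tc.risk + w_crit * tc.criticality + w_hist * defect_rate


def prioritise(cases: list[CaseRecord]) -> list[CaseRecord]:
    """Return the cases ordered so that the highest-priority tests run first."""
    return sorted(cases, key=priority, reverse=True)


cases = [
    CaseRecord("led_blink", risk=0.1, criticality=0.2, past_defects=0, past_runs=10),
    CaseRecord("boot_sequence", risk=0.9, criticality=0.9, past_defects=3, past_runs=10),
    CaseRecord("can_bus_timeout", risk=0.6, criticality=0.8, past_defects=5, past_runs=10),
]

for tc in prioritise(cases):
    print(f"{tc.name}: {priority(tc):.2f}")
```

In a learning-based system the fixed weights would be replaced by a model fitted to defect history, but the output is the same kind of ordered run list.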

Core Technology Involved

While AI models differ in design, Shitendra Bhattacharya, Country Head & Director, NI India, Emerson, explained the core architecture behind the concept: “The foundation of this testing software is a software-defined, modular test architecture combined with AI-driven data intelligence. This design enables autonomous test systems that can adapt to changing requirements, learn from data, and improve performance over time. Open test software platforms such as NI LabVIEW, TestStand, SystemLink, and FlexLogger allow engineers to design, customise, and reuse test logic across products and industries while integrating seamlessly with third-party tools, cloud platforms, and emerging technologies. This is complemented by modular, software-connected hardware, including PXI, CompactDAQ, CompactRIO, and USRP, which decouples hardware from software logic, allowing systems to be upgraded or repurposed through software alone. An AI-ready data architecture further embeds analytics directly into the testing workflow, enabling real-time data collection, automated structuring, and intelligent analysis to detect patterns, predict failures, and flag anomalies early.”

Adoption Rate and Use Cases

AI is widely used to test software embedded in electrical devices, which falls under the wider umbrella of AI in software testing. The key areas of adoption include:

Self-healing tests: AI systems detect changes in the application’s UI or code and automatically update test scripts, reducing the need for manual maintenance.

Test case and data generation: AI can create broad and realistic test data and scenarios based on user stories and historical data, including edge cases that human testers may overlook.

Predictive analytics: AI analyses past data to anticipate where problems are most likely to arise, allowing QA teams to focus testing on high-risk areas.

“Adoption of AI-enabled and autonomous testing is already accelerating, particularly in industries experiencing rapid growth in electronic complexity, including semiconductors, automotive and EVs, aerospace and defence, and advanced manufacturing. Today, adoption is most visible in R&D and validation environments, where AI helps analyse large test datasets, and in high-volume production testing, where automation improves speed, consistency, and yield. Looking ahead, AI-driven testing is expected to become the norm as systems become too complex for manual approaches. This shift will enable faster time-to-market, improve product reliability, and allow engineers to focus more on innovation and system-level problem-solving rather than repetitive test tasks,” explains Shitendra Bhattacharya, Country Head & Director, NI India, Emerson.

Manufacturers use AI-powered computer vision systems to inspect electronic components like PCBs for defects such as misaligned components, micro-cracks, and solder joint faults. These systems can quickly and accurately identify anomalies that are difficult for human inspectors or traditional camera systems to catch, supporting zero-defect manufacturing goals.

Agilent used AI vision tools to reduce defect rates by 49% in four months in one application. Companies like Jidoka and Averroes provide specific AI tools for PCB inspection that integrate with existing manufacturing lines and learn new defect types from minimal sample images.

What Does the Future Hold?

The future of AI and ML in test automation is bright, with continued advancements set to further revolutionise testing in the electronics industry. Here are some emerging trends to watch:

- Autonomous Testing: The ultimate goal of AI and ML in test automation is to achieve autonomous testing, where the entire testing process, from test case generation to execution and analysis, is fully automated with minimal human intervention. While this level of autonomy is still developing, ongoing advancements are bringing it closer to reality.

- Enhanced Collaboration: AI and ML are bound to drive better collaboration between development, QA, and operations teams by providing actionable insights and predictive analytics. These insights will allow teams to make informed decisions, refine testing strategies, and enhance overall software quality.

- Integration with Emerging Technologies: AI and ML will increasingly integrate with other emerging technologies such as the Internet of Things (IoT), blockchain, and edge computing. This integration will enable holistic testing of complex, interconnected systems, ensuring their reliability and performance in real-world scenarios.

- Continuous Learning and Improvement: AI and ML models will continue to evolve, learning from new data and adapting to changing requirements. This continuous learning will enhance the accuracy and effectiveness of test automation tools, driving ongoing improvements in software testing.

By: Shreya Bansal, Sub-Editor

The post AI-Enabled Autonomous Testing for Mission-Critical Electronics appeared first on ELE Times.

Vishay Intertechnology Extends Miniature Snap-in Power Aluminium Electrolytic Capacitors with New Voltage Ratings

Vishay Intertechnology has extended its 193 PUR-SI series of miniature snap-in power aluminium electrolytic capacitors with new voltage ratings of 550 V and 600 V. Traditionally, designers have used three 400 V to 450 V capacitors in series, with voltage balancing resistors across each device, to handle DC bus voltages up to 1100 V — an approach that adds design complexity and potential failure points. But with Vishay’s higher voltage ratings up to 600 V, the enhanced 193 PUR-SI series devices released allow designers to achieve this voltage range with fewer components, while eliminating the need for voltage balancing circuits — saving PCB space and reducing BOM costs. In addition, the added voltage headroom extends capacitor lifetimes and enhances overall system reliability.
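The component-count argument above comes down to simple arithmetic, sketched here in Python. The sketch assumes ideal voltage sharing and no derating margin, and the 470 µF value is a hypothetical example rather than a specific catalogue part; a real design would apply the derating rules in the manufacturer's datasheet.

```python
import math


def series_count(bus_voltage, rated_voltage):
    """Minimum number of identical capacitors in series whose rated voltages sum to at least the bus voltage."""
    return math.ceil(bus_voltage / rated_voltage)


def series_capacitance(capacitance_uf, count):
    """Effective capacitance of `count` identical capacitors connected in series."""
    return capacitance_uf / count


BUS_V = 1100          # DC bus voltage from the text
EXAMPLE_C_UF = 470    # hypothetical per-device capacitance

for rated in (450, 600):
    n = series_count(BUS_V, rated)
    c = series_capacitance(EXAMPLE_C_UF, n)
    print(f"{rated} V parts: {n} in series, about {c:.1f} uF effective")
```

Under these assumptions, three 450 V parts are needed where two 600 V parts suffice, and the two-device string also retains more of the per-device capacitance.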

Designed to withstand the demanding switching currents of today’s high power converters, the 193 PUR-SI series devices handle ripple currents up to 3.27 A. Their long useful life of 5000 hours at +105 °C permits use in demanding applications requiring more than 25 years of operation from capacitors in ambient temperatures up to +60 °C. Featuring a cylindrical aluminium case insulated with a blue sleeve, the devices offer capacitance from 47 µF to 820 µF in 25 compact case sizes ranging from 22 mm x 25 mm to 35 mm x 60 mm. The RoHS-compliant capacitors are also available with 3-pin keyed polarity snap-in terminals for more stable PCB mounting.

As polarised aluminium electrolytic capacitors with a non-solid electrolyte, the devices are ideally suited for smoothing, buffering, and DC-Link filtering in switch mode power supplies; chargers, battery management systems (BMS), and motor controls in electric (EV) and hybrid electric (HEV) vehicles; industrial motor drives and air conditioners; solar inverters; medical equipment; UPS; and welding equipment.

Device Specification Table:

| Parameter | Value | |
| --- | --- | --- |
| Case size (D x L in mm) | 22 x 25 to 35 x 60 | |
| Capacitance range | 47 µF to 820 µF | |
| Tolerance | ± 20 % | |
| Rated voltage | 400 V to 450 V | 500 V to 600 V |
| Category temperature range | -40 °C to +105 °C | |
| Useful life at +105 °C | 5000 h | |
| Max. ESR at 100 Hz | 120 mΩ to 3365 mΩ | |
| Max. impedance at 10 kHz | 80 mΩ to 2700 mΩ | |
| Sectional specification | IEC 60384-4 / EN130300 | |
| Climatic category IEC 60068 | 40 / 105 / 56 | 25 / 105 / 56 |

Samples of the extended 193 PUR-SI series capacitors are available from catalogue houses in small quantities. Production quantities are available now, with lead times of 18 weeks.

The post Vishay Intertechnology Extends Miniature Snap-in Power Aluminium Electrolytic Capacitors with New Voltage Ratings appeared first on ELE Times.

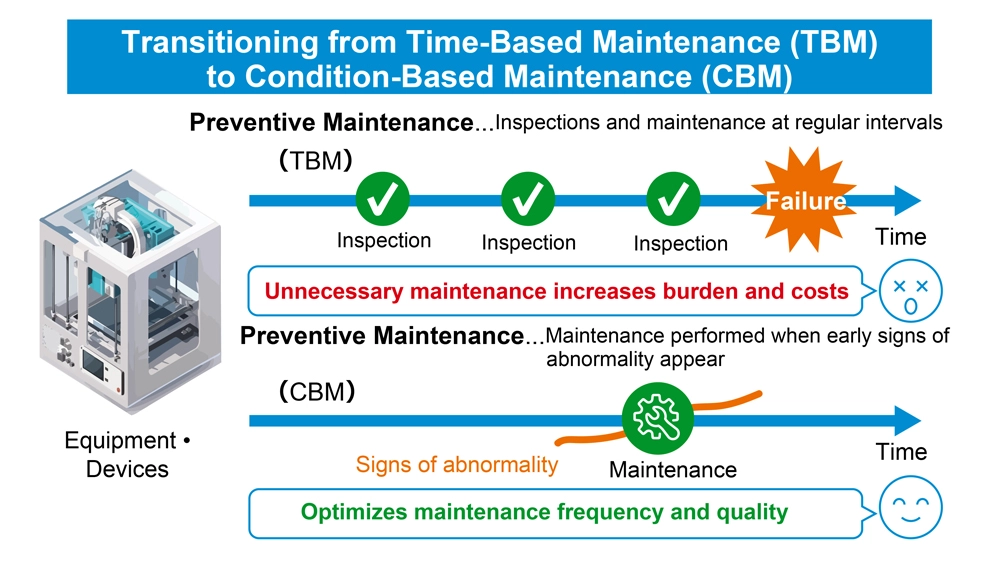

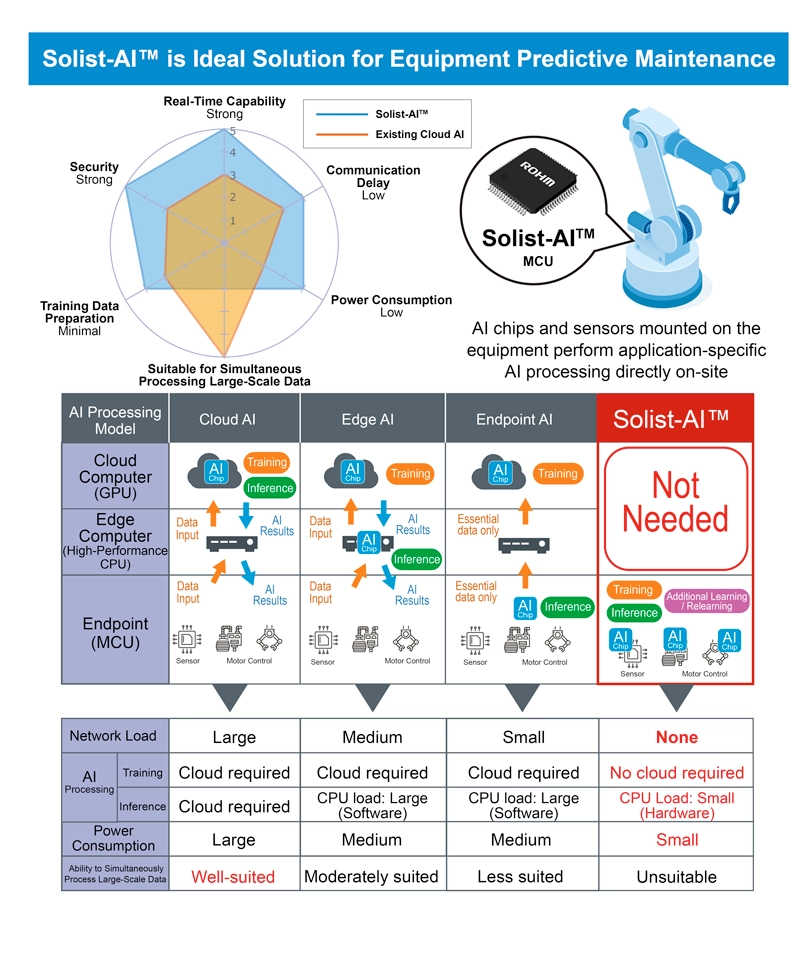

Shifting from preventive maintenance to predictive maintenance

Courtesy: ROHM

In the manufacturing industry, equipment maintenance has traditionally relied on preventive maintenance (TBM: Time-Based Maintenance), where inspections and part replacements are carried out at predetermined intervals. While effective to a degree, this approach often requires shutting down equipment that is still operating normally, resulting in unnecessary downtime, higher operating costs, and increased workload on production sites. In some cases, excessive inspection tasks can even introduce the problems they are intended to prevent—such as mistakes and human error.

Consequently, many manufacturers are now transitioning to predictive maintenance (CBM: Condition-Based Maintenance), which monitors equipment conditions in real time and performs maintenance only when early signs of abnormality are detected.

This white paper examines Solist-AI, ROHM’s AI-driven solution that enhances the efficiency and reliability of predictive maintenance.

- Transitioning from Time-Based Maintenance (TBM) to Condition-Based Maintenance (CBM)

The primary difference between preventive maintenance (TBM) and predictive maintenance (CBM) lies in how maintenance timing is determined. TBM schedules inspections and part replacements at fixed time intervals, independent of the equipment’s actual condition. In contrast, CBM performs maintenance only when truly necessary. AI plays a critical role in CBM by continuously monitoring parameters such as temperature, vibration, and current through sensors, processing large volumes of time-series data, and detecting early signs of abnormality from subtle pattern deviations.
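The condition-based decision described above can be sketched in a few lines of Python. This is a minimal statistical illustration and not ROHM's method: it learns a baseline from readings assumed to be healthy and flags values that deviate by more than `k` standard deviations. The vibration values and the threshold `k=3.0` are hypothetical.

```python
import statistics


def learn_baseline(healthy_readings):
    """Summarise normal behaviour as (mean, sample standard deviation)."""
    return statistics.mean(healthy_readings), statistics.stdev(healthy_readings)


def is_abnormal(reading, baseline, k=3.0):
    """Flag a reading that lies more than k standard deviations from the baseline mean."""
    mean, std = baseline
    return abs(reading - mean) > k * std


# Hypothetical vibration amplitudes recorded while the machine was known to be healthy
healthy_vibration = [1.00, 1.02, 0.98, 1.01, 0.99, 1.03, 0.97, 1.00]
baseline = learn_baseline(healthy_vibration)

# Hypothetical live readings; maintenance is triggered only when a flag is raised
live = [1.01, 0.99, 1.04, 1.20, 1.35]
flags = [is_abnormal(x, baseline) for x in live]
print(flags)  # [False, False, False, True, True]
```

Under TBM the machine would be stopped on a calendar schedule regardless of these readings; here the first three readings leave it running and only the last two would prompt an inspection.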

By adopting predictive maintenance, manufacturers can avoid unnecessary shutdowns of equipment that is still operating normally. AI-driven optimisation of maintenance timing reduces operational costs and workload, while also preventing excessive inspections that may introduce procedural or human errors. Furthermore, because CBM ensures consistent maintenance quality without relying on technician intuition or experience, it is gaining attention as an effective solution to on-site challenges such as persistent labour shortages, particularly of skilled workers.

- Shifting from Cloud-Based to Endpoint-Based Architecture

Conventional cloud-based AI systems face several challenges, including communication latency, the complexity of building and maintaining network infrastructure, and the added burden of implementing security measures to prevent data leaks during transmission.

These systems also require large volumes of training data to be collected and prepared in advance, making it difficult to account for variations in operating environments and equipment-specific differences at each site. High-precision AI processing also demands significant computational resources such as CPUs and GPUs, which inevitably increases power consumption.

This has led to the growing adoption of endpoint AI as a more practical alternative. By performing both learning and inference directly on devices equipped with sensors and MCUs, endpoint AI can adapt to the characteristics of individual machines, perform real-time processing, and maintain exceptionally low power consumption. In addition, because no data is transmitted to the cloud, it ensures a high level of security.

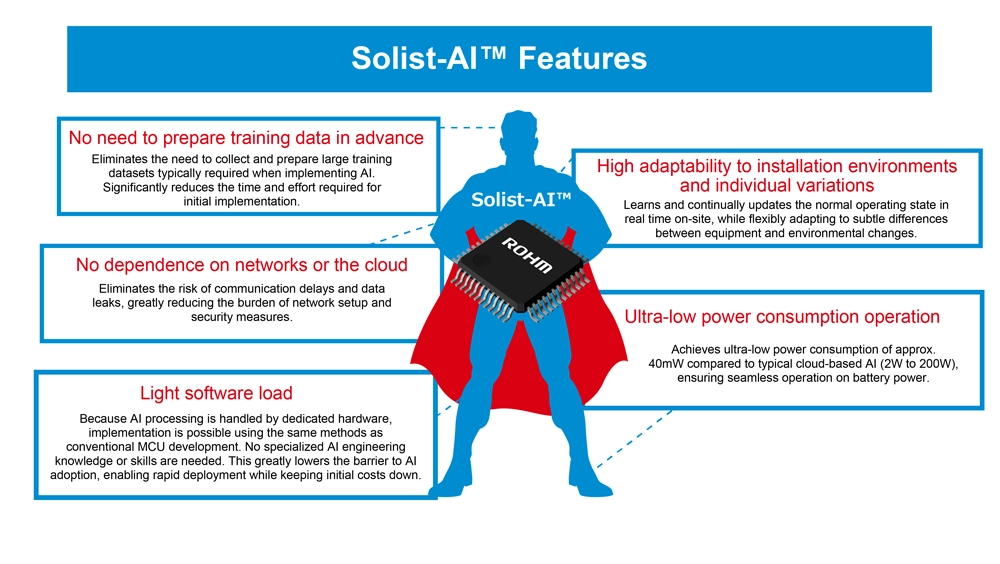

To support this paradigm, ROHM developed Solist-AI, an on-device AI solution optimised for edge computing, which is the core technology behind predictive maintenance. The name is derived from ‘Solution with On-device Learning IC for Standalone AI.’ Just like a ‘solist’ in music who performs independently, Solist-AI completes the entire AI workflow, from learning to inference, locally on the device without relying on the cloud or external networks.

While Solist-AI delivers significant advantages for on-device AI, it is not designed for the large-scale data processing or image-based workloads that conventional AI architectures (cloud, edge, or endpoint types) can handle. For advanced analytics, such as detailed image diagnostics, cloud-based AI remains the preferred solution.

For applications that do not require advanced analysis, Solist-AI offers a major benefit: substantial cost savings by eliminating expenses associated with network infrastructure and cloud service fees.

And with ongoing learning capabilities, Solist-AI can continuously update its understanding of equipment deterioration over time. This greatly improves responsiveness to both normal and abnormal conditions as machines age, making it a compelling alternative to traditional AI solutions.

- Solist-AI: The Optimal Solution for Predictive Maintenance

Unlike general-purpose AI processors, Solist-AI is purpose-built for targeted use cases such as failure prediction and anomaly detection. The ability to train and fine-tune models directly on-site based on the actual operating environment ensures flexible adaptation to equipment variations and changing ambient conditions.

At the heart of the solution is the Solist-AI MCU, which integrates ROHM’s proprietary AI accelerator AxlCORE-ODL (On-Device Learning). This accelerator executes a three-layer neural network and FFT (Fast Fourier Transform) processing in dedicated hardware, enabling real-time detection and notification of various anomalies and operational changes in the field.
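To show why frequency-domain processing is useful for this kind of detection, the following Python sketch extracts the dominant frequency of a vibration signal. It is illustrative only and does not represent the AxlCORE-ODL implementation: it uses a naive discrete Fourier transform in place of a hardware FFT, omits the neural network stage entirely, and the sample rate and test tones are hypothetical.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Naive O(N^2) DFT magnitude spectrum for bins 0..N/2 (a stand-in for a hardware FFT)."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples))
        mags.append(abs(acc) / n)
    return mags


def dominant_frequency(samples, sample_rate_hz):
    """Frequency in Hz of the strongest non-DC bin."""
    mags = dft_magnitudes(samples)
    peak_bin = max(range(1, len(mags)), key=mags.__getitem__)  # skip bin 0 (DC)
    return peak_bin * sample_rate_hz / len(samples)


SAMPLE_RATE = 1000  # Hz, hypothetical sensor sampling rate
N = 200             # samples per window, giving 5 Hz per bin


def tone(freq_hz):
    """Synthetic single-frequency vibration signal used as test input."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(N)]


healthy_peak = dominant_frequency(tone(50), SAMPLE_RATE)
worn_peak = dominant_frequency(tone(120), SAMPLE_RATE)
print(healthy_peak, worn_peak)  # 50.0 120.0
```

A shift in the dominant frequency, as between the two synthetic signals here, is the sort of spectral feature that a downstream model could learn to treat as a sign of change in the equipment.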